A timeline of MIT computing milestones

The dawn of the digital age. The moon landing. The advent of PCs. Secure online commerce on an internet that doesn’t crash. So many critical advances in computing, AI, and robotics have MIT fingerprints all over them. As the MIT Schwarzman College of Computing opens its doors, we look at just a few of the Institute’s countless contributions to the field.

1937 Digital circuits

Graduate student Claude Shannon, SM ’40, PhD ’40, showed that the principles of true/false logic could be equated to the on/off states of electric switches—a concept that came to underlie the field of digital circuits and, therefore, the entire industry of digital computing itself.
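
To make the switch analogy concrete, here is a minimal sketch in Python. It assumes the usual textbook reading of Shannon's idea: two switches wired in series behave like AND, and two wired in parallel behave like OR. The function names `series`, `parallel`, and `half_adder` are illustrative and do not come from Shannon's thesis.

```python
def series(a: bool, b: bool) -> bool:
    """Two switches in series: current flows only if both are closed (AND)."""
    return a and b


def parallel(a: bool, b: bool) -> bool:
    """Two switches in parallel: current flows if either is closed (OR)."""
    return a or b


def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    """Add two one-bit values using only the switch combinations above.

    Returns (sum_bit, carry_bit). The `not` models a normally-closed contact,
    which opens when its control signal is on.
    """
    carry = series(a, b)
    total = series(parallel(a, b), not carry)  # exclusive OR
    return total, carry


# Print the full truth table for the one-bit adder.
for a in (False, True):
    for b in (False, True):
        print(a, b, half_adder(a, b))
```

Chaining adders like this one, bit by bit, is how arithmetic can be built up from nothing but on/off switches.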

1945 Memex

Former MIT professor Vannevar Bush proposed a data system called a “Memex” that would allow a user to “store all his books, records, and communications” and retrieve them at will. The concept inspired the early hypertext systems that led, decades later, to the World Wide Web.

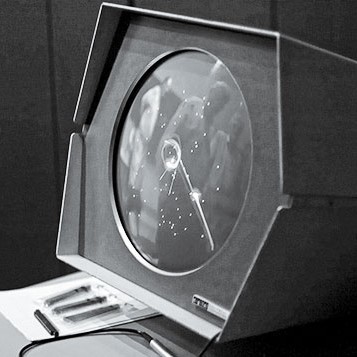

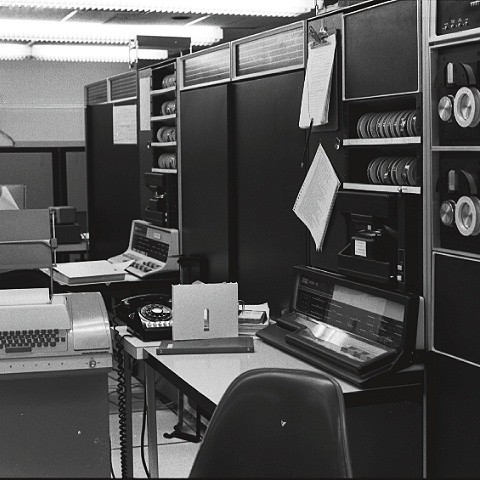

1951 The digital computer

The first digital computer that could operate in real time came out of Project Whirlwind, an MIT initiative led by Jay Forrester, SM ’45, to develop a universal flight simulator for the US Navy. The device’s success led to the creation of MIT Lincoln Laboratory in 1951.

1958 Functional programming

LISP, the first functional programming language, was invented at MIT by Professor John McCarthy. Before LISP, it was difficult for programs to juggle multiple processes at once, because languages only let programmers specify step-by-step instructions. Functional languages let them describe required behaviors more simply, allowing work on much bigger problems than ever before.

1959 The portable fax

In trying to understand the words of a strongly accented colleague over the phone, MIT student Sam Asano, SM ’61, became frustrated. He wished that they could just draw pictures and instantly send them to each other—so he created a technology to transmit scanned material through phone lines. His fax machine was licensed to a Japanese telecom company before becoming a worldwide phenomenon.

1962 The multiplayer video game

When a PDP-1 computer arrived at MIT’s Electrical Engineering Department, a group of crafty students—including Steven “Slug” Russell ’60, EE ’66, from Marvin Minsky’s AI group—went to work creating SpaceWar! The space-combat video game became very popular among early programmers and is considered the world’s first multiplayer game.

1963 The password

The average person has 13 passwords—and for that you can thank MIT’s Compatible Time-Sharing System, which by most accounts established the first computer password. “We were setting up multiple terminals which were to be used by multiple persons but with each person having his own private set of files,” MIT Professor Fernando “Corby” Corbató, PhD ’56, told Wired. “Putting a password on for each individual user as a lock seemed like a very straightforward solution.”

1963 Graphical user interfaces

Nearly 50 years before the iPad, an MIT PhD student had come up with the idea of directly interfacing with a computer screen. The “Sketchpad” developed by Ivan Sutherland, PhD ’63, allowed users to draw geometric shapes with a light pen, pioneering the practice of “computer-assisted drafting,” which has proved vital for architects, planners, and even toddlers.

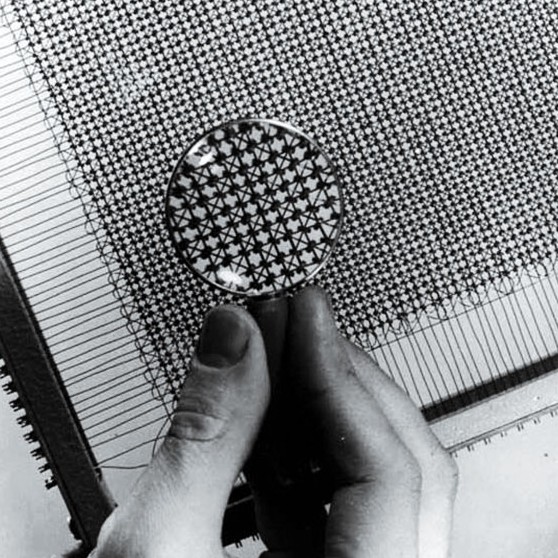

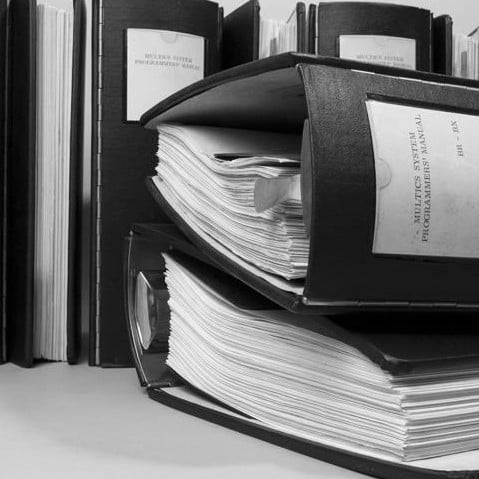

1964 Multics

MIT spearheaded the time-sharing system that inspired UNIX and laid the groundwork for many aspects of modern computing, from hierarchical file systems to buffer-overflow security. Multics, led by Professor Corbató, furthered the idea of the computer as a “utility” to be used anytime, like electricity.

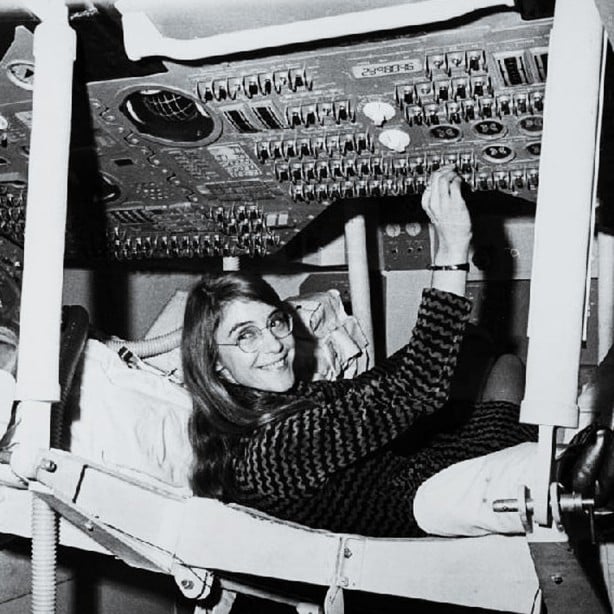

1969 Moon code

Margaret Hamilton led the MIT team that coded the Apollo 11 guidance and control system, which landed astronauts Neil Armstrong and Buzz Aldrin, ScD ’63, on the moon. The robust software overrode a command to switch the flight computer’s priority system to a radar system, and no software bugs were found on any crewed Apollo missions.

1971 Email

The first email ever to travel across a computer network was sent between two computers right next to each other—and it came from Ray Tomlinson ’65 when he was working at spinoff BBN Technologies. (He’s the one you can credit, or blame, for the @ symbol.)

1973 The PC

MIT professor Butler Lampson’s work at Xerox’s Palo Alto Research Center (PARC) earned him the title of “father of the modern PC.” The Xerox Alto platform was used to create the first desktop computer with a graphical user interface (GUI), the first bitmapped display, and the first “what you see is what you get” (WYSIWYG) editor.

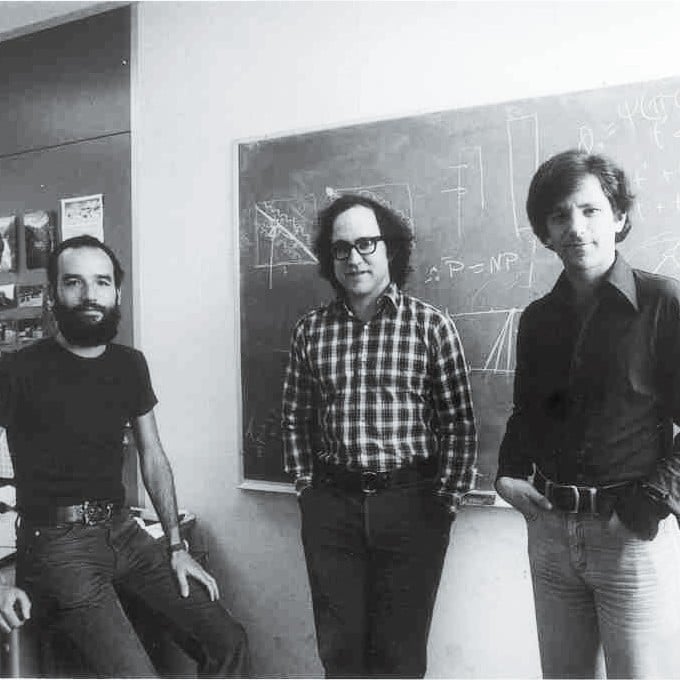

1977 Data encryption

E-commerce was first made possible by MIT professors Adi Shamir, Ron Rivest, and Len Adleman, whose RSA algorithm encrypts data by making use of how difficult it is to factor the product of two huge prime numbers. Who knew math would be why you can get your last-minute holiday shopping done?
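
For a feel of the math, here is a toy sketch of textbook RSA in Python. The primes, exponent, and message are illustrative assumptions chosen to keep the numbers readable. Real keys use primes hundreds of digits long, plus padding schemes this sketch leaves out, so it should not be mistaken for a secure implementation.

```python
# Toy RSA with tiny primes. Anyone who can factor n back into p and q
# can rebuild the private key, which is why real primes must be huge.
p, q = 61, 53                # secret primes (illustrative values)
n = p * q                    # public modulus
phi = (p - 1) * (q - 1)      # computable only if you know p and q
e = 17                       # public exponent, chosen coprime with phi
d = pow(e, -1, phi)          # private exponent: inverse of e mod phi (Python 3.8+)


def encrypt(m: int) -> int:
    """Encrypt an integer message m (0 <= m < n) with the public key (e, n)."""
    return pow(m, e, n)


def decrypt(c: int) -> int:
    """Decrypt a ciphertext c with the private key (d, n)."""
    return pow(c, d, n)


message = 65
ciphertext = encrypt(message)
recovered = decrypt(ciphertext)
print(message, ciphertext, recovered)
```

The public key `(e, n)` can be shared with anyone. Recovering `d` from it requires knowing `phi`, and therefore the two primes hidden inside `n`.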

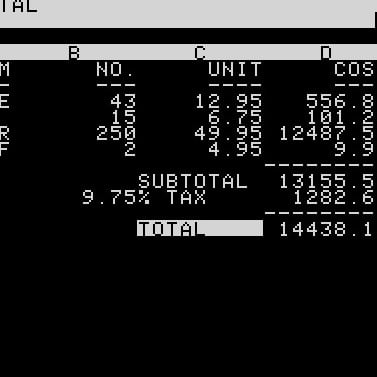

1979 The spreadsheet

In 1979, Bob Frankston ’70 and Dan Bricklin ’73 worked late into the night on an MIT mainframe to create VisiCalc, the first electronic spreadsheet, which sold more than 100,000 copies in its first year. Three years later, Microsoft got into the game with “Multiplan,” a program that later became Excel.

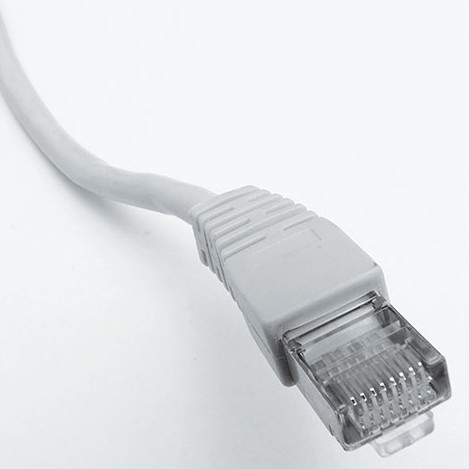

1980 Ethernet

Before there was Wi-Fi, there was Ethernet—the networking technology that lets you get online with a simple cable plug-in. Co-invented by Bob Metcalfe ’68, who was part of MIT’s Project MAC team and later went on to found 3Com, Ethernet helped make the internet the fast, convenient platform that it is today.

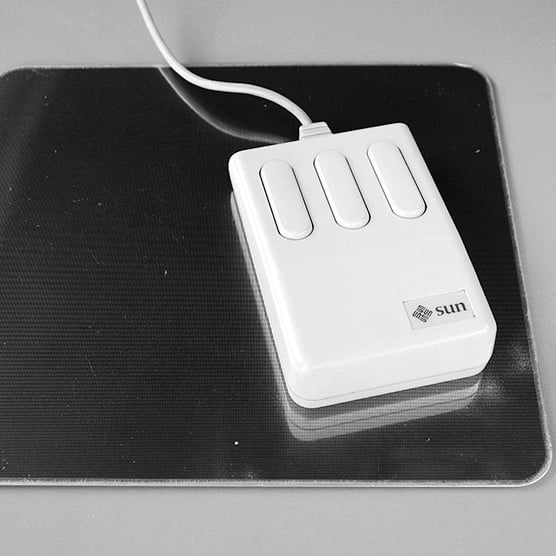

1980 The optical mouse

Undergrad Steve Kirsch ’80 was the first to patent an optical computer mouse—he had wanted to make a “pointing device” with a minimum of precision moving parts—and went on to found Mouse Systems Corp. (He also patented the method of tracking online ad impressions through click-counting.)

1983 The growth of freeware

Early AI Lab programmer Richard Stallman was a major pioneer in hacker culture and the free-software movement through his GNU Project, which set out to develop a free alternative to the Unix OS, and laid the groundwork for Linux and other important computing innovations.

1984 Spanning tree algorithm

Radia Perlman ’73, SM ’76, PhD ’88, hates when people call her “the mother of the internet,” but her work developing the spanning tree protocol was vital for being able to route data across global computer networks. (She also created a toddler version of the educational programming language Logo.)

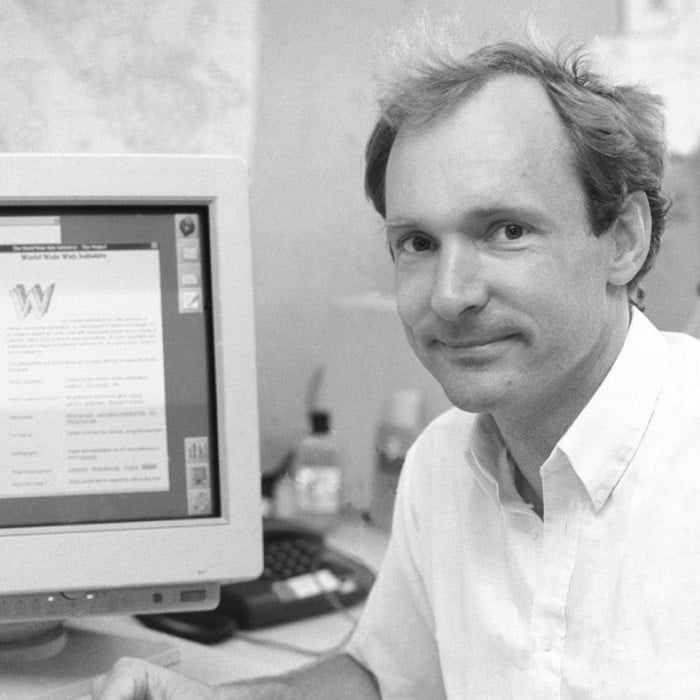

1994 The World Wide Web Consortium (W3C)

After inventing the web, Tim Berners-Lee joined the MIT faculty and launched a consortium devoted to setting global standards for building websites, browsers, and devices. Among other things, W3C standards ensure that sites are accessible, secure, and easily “crawled.”

1999 The birth of blockchain

MIT Institute Professor Barbara Liskov’s paper on practical Byzantine fault tolerance helped kick-start the field of blockchain, a widely used cryptography system. Her team’s protocol could handle high transaction throughput and used concepts that are vital for many of today’s blockchain platforms.

2002 Roomba

We don’t yet have robots running errands for us, but we do have robo-vacuums—and for that, we can thank MIT spinoff iRobot, founded by Rodney Brooks, Helen Greiner ’89, SM ’90, and Colin Angle ’89, SM ’91. iRobot has sold more than 20 million home robots and spawned an industry of automated cleaning products.

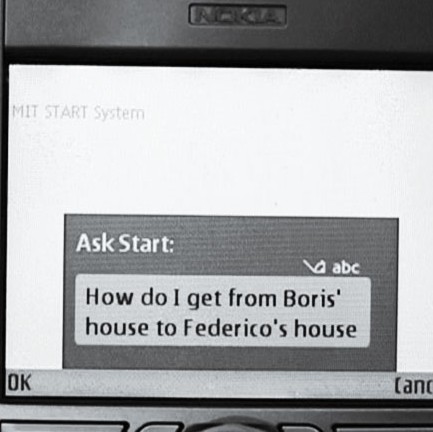

2007 The mobile personal assistant

Before Siri and Alexa, there was MIT professor Boris Katz’s StartMobile, an app that allowed users to schedule appointments, get information, and do other tasks using natural language.

2012 EdX

Led by former CSAIL director Anant Agarwal, the open-source, nonprofit online learning platform that MIT launched with Harvard University offers free courses that have drawn more than 20 million learners around the globe.

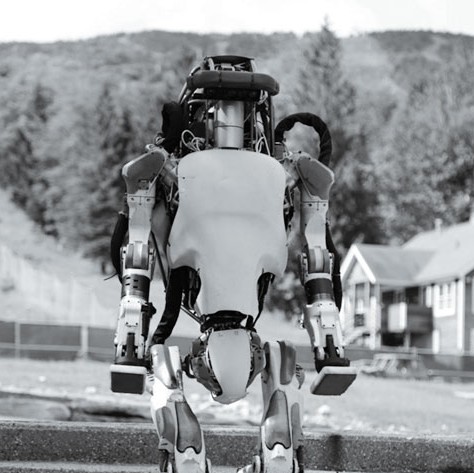

2013 Atlas, the humanoid robot

Boston Dynamics, founded by Marc Raibert, PhD ’77, when he was an MIT professor, unveiled the humanoid robot Atlas, later used in the DARPA Robotics Challenge to develop robots for disaster relief sites. The company’s Big Dog and Spot Mini bots climb, run, jump, and do back flips.

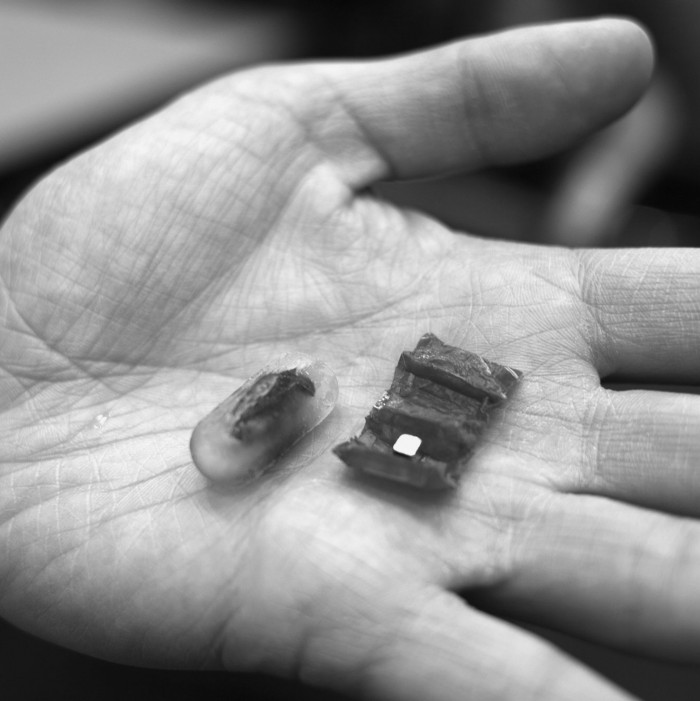

2016 Robots you can swallow

CSAIL director Daniela Rus’s ingestible origami robot can unfold itself from a swallowed capsule. Using an external magnetic field, it could one day crawl across your stomach wall to remove swallowed batteries or patch wounds.
