Margaret Hamilton

When Margaret Hamilton was a child, her father used to take her on long drives through Michigan’s Upper Peninsula. They would talk the whole time about “philosophy-related things,” the software engineer, now 83, recalls. “Like ‘what ifs’ and ‘whys’ and ‘why nots.’”

About two decades later, Hamilton held her breath in the MIT Instrumentation Lab’s mission monitoring room as one of the greatest “what ifs” in history came to fruition. On July 20, 1969, at 4:17 p.m. Boston time, the lunar module Eagle touched down on the moon. By then, Hamilton was the head of software engineering for the Apollo program. As she likes to say, the Eagle’s crew were the first humans to walk on the lunar surface, and her team’s software was the first to run on it.

In more than a decade working with the Apollo missions, Hamilton helped safely shepherd spaceships into and out of orbit. She led a group of nearly 100 developers, bringing rigor, and respect, to what was then the brand-new field of software engineering. (In fact, she came up with the term “software engineer” and pushed for its use.) And she did all of it with creativity and aplomb, led by a conviction repeatedly borne out: that “the never-going-to-happen can happen.”

Hamilton left college thinking she would be a mathematician, planning to get a job while her husband went to Harvard Law School and then go to grad school herself. In 1959, she started working for the renowned MIT meteorologist Edward Lorenz, who charged her with programming an 800-pound computer called the LGP-30.

Hamilton had never seen a computer. But she took to it right away, finding unorthodox ways to make the lumbering thing work more quickly. “I would take steps that would be called ‘tricky’ programming today,” she says. For instance, by-the-book debugging involved slowly feeding the long paper roll that held an incorrect program back through the machine. Hamilton, who had no patience for this, learned binary code and started editing the sheets herself—poking a hole in the paper with a sharp pencil to turn a 0 to a 1, and using a small piece of tape for the reverse. (The work done by Hamilton and others eventually helped lead to Lorenz’s groundbreaking work on chaos theory.)

In 1961, Hamilton brought this outside-the-box approach to her next position, inventing a new error protocol for MIT’s massive, unruly SAGE air defense system. This computer took up a whole warehouse, and made “foghorn and fire engine sounds” when it crashed, she says. Each time that happened, she’d have the programmer at fault pose for a Polaroid, along with “documentation of where [the mistake] came from,” she says. If the same error occurred again, they could bring in that person to fix it.

Thoughts of that math PhD faded. After years of “discovering things and inventing things,” she realized she was hooked on this strange new field she was helping to create in an atmosphere she has compared to “the Wild West.” Errors, especially, continued to fascinate her: not just finding and correcting them, but tracing the patterns they follow in order to predict and prevent them. “I thought of them as the enemy,” she says.

So in 1964, when she saw an ad from the MIT Instrumentation Lab recruiting programmers to work on software for what would become the Apollo program, Hamilton jumped at the chance. Initially, she was assigned what was presumed to be a low-impact project, writing the code that would kick in if an unmanned mission aborted. The higher-ups were “so sure this wasn’t going to happen,” she says, that she actually named her program “Forget It.” (When one of these missions did abort, she found herself in high demand.)

She was soon promoted to “man-rated” software, where an error could jeopardize an astronaut’s life. Here, good code had to run correctly—but it also had to detect and make up for hardware malfunctions or mistakes made by the astronauts themselves. Hamilton tapped back into that spirit of what-if: What if someone flips this switch at the wrong time? What if an emergency occurs when everyone is busy? Accounting for these possibilities kept her up at night. “That’s all I thought about,” she says.

Sometimes inspiration came from unexpected places. Hamilton would often bring her daughter, Lauren, to the lab with her on nights and weekends. Lauren, then four years old, liked to play astronaut in the team’s simulators, and one day, pressing buttons at random, she caused a spectacular software crash. Hamilton investigated and found that Lauren had confused the program by keying in a pre-launch sequence, called P01, “in the middle of the mission,” she says.

She asked the powers at NASA to let her add a safeguard to prevent that error in a real flight, and they laughed it off as too unlikely. But they let her make a note of it. When an astronaut did make that blooper during the Apollo 8 mission—the first manned flight orbiting the moon—it caused necessary navigational data to vanish, and they called Hamilton in to get it back. “I remember saying to a couple of guys there, ‘It’s the Lauren bug!’” she says, laughing.

It was Apollo 11 that gave the team the greatest scare. Just as the Eagle was about to touch down on the moon, the astronauts were interrupted by five error messages warning that computing power was low. But because the software was so well designed, Houston trusted it to prioritize the most important aspects of the landing, and allowed the mission to proceed. The team had programmed the software to detect errors and recover from them, but watching it do that during Eagle’s descent inspired “sheer terror,” she says.

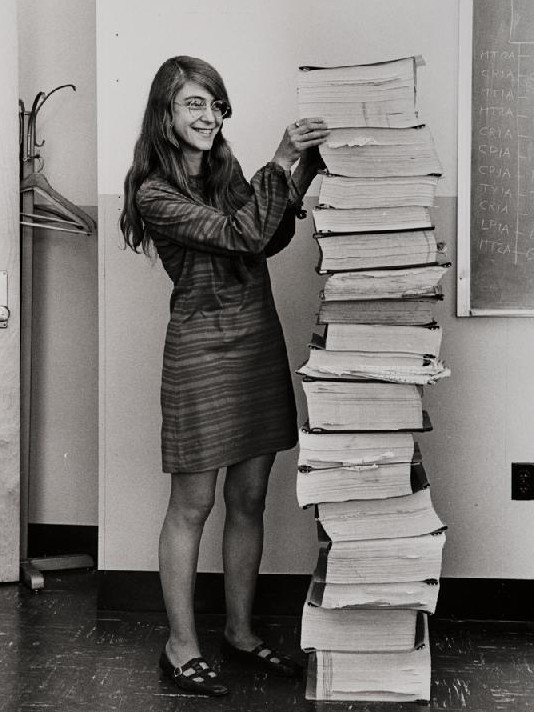

Hamilton’s role in computing history is gaining greater public recognition. In 2016, she received the Presidential Medal of Freedom from Barack Obama, who praised her “American spirit of discovery.” In 2017, an iconic photo of her—bespectacled, grinning, and dwarfed by a stack of her team’s code—was turned into a Lego figurine.

In between her Apollo work and her trip to the White House, Hamilton focused on applying what she learned from the Apollo program as she invented a new paradigm for systems design and software development. Her current company, Hamilton Technologies, has developed a language based on this paradigm called the Universal Systems Language. It is meant to prevent the most common types of errors, instead of accounting for them after the fact. She hopes it will eventually be widely adopted.

It’s a tall order for what is now a multitrillion-dollar industry—and no longer quite so wild. But as her childhood self might have said: “Why not?”
