A bot disguised as a human software developer fixes bugs

“In this world nothing can be said to be certain, except death and taxes,” wrote Benjamin Franklin in 1789. Had he lived in the modern era, Franklin might well have added “software bugs” to his list.

Modern computer programs are so complex that bugs inevitably crop up during the development process. That’s why finding them and writing patches to fix them is an ordinary part of any software development schedule. Indeed, there are companies such as Travis that offer this service to developers.

But finding and fixing bugs is a time-consuming business that uses up significant resources. Various researchers have developed bots that automate this process, but they tend to be slow or to produce poorly written code that does not pass muster. So developers would dearly love to be able to rely on a fast, high-quality bot that scours code for errors and then writes patches to fix them.

Today, their dreams come true thanks to the work of Martin Monperrus and pals at the KTH Royal Institute of Technology in Stockholm, Sweden. These guys have finally built a bot that can compete with human developers in finding bugs and writing high-quality patches.

The team calls its bot Repairnator and has successfully tested it by allowing it to compete against human developers to find fixes. “This is a milestone for human-competitiveness in software engineering research on automatic program repair,” they say.

Computer scientists have long known that it is possible to automate the process of writing patches. But it has not been clear whether bots can do this work as quickly as humans and to the same quality.

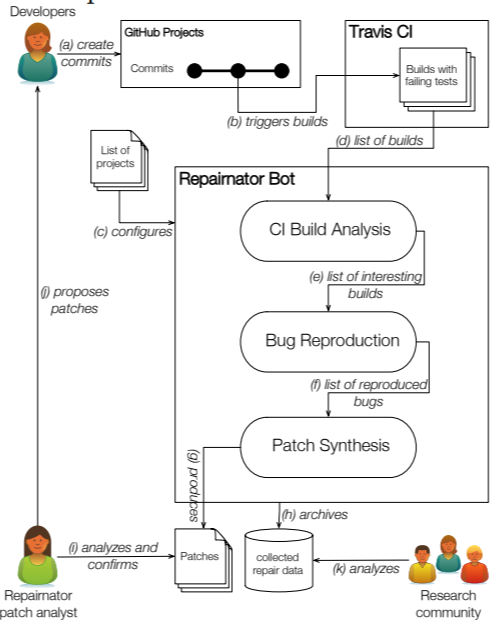

So Monperrus and co tested this by disguising Repairnator as a human developer and allowing it to compete with humans to develop patches on GitHub, a version control website for software developers. “The key idea of Repairnator is to automatically generate patches that repair build failures, then to show them to human developers, to finally see whether those human developers would accept them as valid contributions to the code base,” say Monperrus and co.

The team created a GitHub user called Luc Esape, who appeared to be a software engineer at their research lab. “Luc has a profile picture and looks like a junior developer, eager to make open-source contributions on GitHub,” they say.

But Luc is actually Repairnator in disguise. This deception was necessary because human moderators tend to assess the work of bots and humans differently. “This camouflage is required to test our scientific hypothesis of human competitiveness,” say Monperrus and co, who have now informed the humans involved of the ruse.

The team carried out two runs to test Repairnator. The first ran from February to December 2017, when the team ran Repairnator on a fixed list of 14,188 GitHub projects looking for errors. “We found that our prototype is capable of performing approximately 30 repair attempts per day,” they say.

During this time, Repairnator analyzed over 11,500 builds with failures. Of these, it was able to reproduce the failure in over 3,000 cases. It then went on to develop a patch in 15 cases.

However, none of these patches were accepted into the build because Repairnator took too long to develop them or wrote low-quality patches that could not be accepted.
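The first run's funnel can be put into rough percentages. The sketch below is only illustrative: it uses the rounded figures quoted above (the article says "over" 11,500 and "over" 3,000, so the true rates differ slightly), and the variable and function names are made up for this example rather than taken from Repairnator.

```python
# Rounded figures quoted in the article for the 2017 run. The exact counts
# are "over" these values, so the rates printed below are approximate.
builds_analyzed = 11_500
failures_reproduced = 3_000
patches_generated = 15
patches_accepted = 0


def rate(part: int, whole: int) -> float:
    """Return part/whole as a percentage, or 0.0 when whole is zero."""
    return 100.0 * part / whole if whole else 0.0


# Each stage of the funnel is compared with the stage before it.
funnel = [
    ("failures reproduced / builds analyzed", failures_reproduced, builds_analyzed),
    ("patches generated / failures reproduced", patches_generated, failures_reproduced),
    ("patches accepted / patches generated", patches_accepted, patches_generated),
]

for label, part, whole in funnel:
    print(f"{label}: {rate(part, whole):.2f}%")
```

On these rounded numbers, roughly a quarter of the failing builds could be reproduced, about half a percent of those led to a patch, and none of the patches were accepted.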

The second experimental run was more successful. This time, the team set Luc to work on the Travis continuous integration service from January to June 2018. Although the team did not specify what improvements they made to Repairnator, on January 12 it wrote a patch that a human moderator accepted into a build. “In other words, Repairnator was human-competitive for the first time,” they say.

Over the next six months, Repairnator went on to produce five patches that human moderators accepted.

That’s impressive work that sets the scene for a new generation of software development. It also raises some interesting questions. Monperrus and co point to a patch Repairnator developed for a GitHub project called “eclipse/ditto” on May 12.

The team then received the following message from one of the developers: “We can only accept pull-requests which come from users who signed the Eclipse Foundation Contributor License Agreement.”

That raises a thorny issue, since a bot cannot physically sign a license agreement. “Who owns the intellectual property and responsibility of a bot contribution: the robot operator, the bot implementer or the repair algorithm designer?” ask Monperrus and co.

This kind of issue will have to be resolved before humans and bots can collaborate more closely. But Monperrus and co are optimistic. “We believe that Repairnator prefigures a certain future of software development, where bots and humans will smoothly collaborate and even cooperate on software artifacts,” they say.

Franklin, a famously creative inventor himself, would surely have been impressed.

Ref: arxiv.org/abs/1810.05806 : Human-competitive Patches in Automatic Program Repair with Repairnator
