The “neuropolitics” consultants who hack voters’ brains

Maria Pocovi slides her laptop over to me with the webcam switched on. My face stares back at me, overlaid with a grid of white lines that map the contours of my expression. Next to it is a shaded window that tracks six “core emotions”: happiness, surprise, disgust, fear, anger, and sadness. Each time my expression shifts, a measurement bar next to each emotion fluctuates, as if my feelings were an audio signal. After a few seconds, a bold green word flashes in the window: ANXIETY. When I look back at Pocovi, I get the sense she knows exactly what I’m thinking with one glance.

Petite with a welcoming smile, Pocovi, the founder of Emotion Research Lab in Valencia, Spain, is a global entrepreneur par excellence. When she comes to Silicon Valley, she doesn’t even rent an office—she just grabs a table here at the Plug and Play coworking space in Sunnyvale, California. But the technology she’s showing me is at the forefront of a quiet political revolution. Campaigns around the world are employing Emotion Research Lab and other marketers versed in neuroscience to penetrate voters’ unspoken feelings.

This spring there was a widespread outcry when American Facebook users found out that information they had posted on the social network, including their likes, interests, and political preferences, had been mined by the voter-targeting firm Cambridge Analytica. While it’s not clear how effective the company’s algorithms were, they may have helped fuel Donald Trump’s come-from-behind victory in 2016.

But to ambitious data scientists like Pocovi, who has worked with major political parties in Latin America in recent elections, Cambridge Analytica, which shut down in May, was behind the curve. Where it gauged people’s receptiveness to campaign messages by analyzing data they typed into Facebook, today’s “neuropolitical” consultants say they can peg voters’ feelings by observing their spontaneous responses: an electrical impulse from a key brain region, a split-second grimace, or a moment’s hesitation as they ponder a question. The experts aim to divine voters’ intent from signals they’re not aware they’re producing. A candidate’s advisors can then attempt to use that biological data to influence voting decisions.

Political insiders say campaigns are buying into this prospect in increasing numbers, even if they’re reluctant to acknowledge it. “It’s rare that a campaign would admit to using neuromarketing techniques—though it’s quite likely the well-funded campaigns are,” says Roger Dooley, a consultant and author of Brainfluence: 100 Ways to Persuade and Convince Consumers with Neuromarketing. While it’s not certain the Trump or Clinton campaigns used neuromarketing in 2016, SCL—the parent firm of Cambridge Analytica, which worked for Trump—has reportedly used facial analysis to assess whether what voters said they felt about candidates was genuine.

But even if US campaigns won’t admit to using neuromarketing, “they should be interested in it, because politics is a blood sport,” says Dan Hill, an American expert in facial-expression coding who advised Mexican president Enrique Peña Nieto’s 2012 election campaign. Fred Davis, a Republican strategist whose clients have included George W. Bush, John McCain, and Elizabeth Dole, says that while uptake of these technologies is somewhat limited in the US, campaigns would use neuromarketing if they thought it would give them an edge. “There’s nothing more important to a politician than winning,” he says.

The trend raises a torrent of questions in the run-up to the 2018 midterms. How well can consultants like these use neurological data to target or sway voters? And if they are as good at it as they claim, can we trust that our political decisions are truly our own? Will democracy itself start to feel the squeeze?

Unspoken truths

Brain, eye, and face scans that tease out people’s true desires might seem dystopian. But they’re offshoots of a long-standing political tradition: hitting voters right in the feels. For more than a decade, campaigns have been scanning databases of consumer preferences—what music people listen to, what magazines they read—and, with the help of computer algorithms, using that information to target appeals to them. If an algorithm shows that middle-aged female SUV drivers are likely to vote Republican and care about education, chances are they’ll receive campaign messages crafted explicitly to push those buttons.

Biometric technologies raise the stakes further. Practitioners say they can tap into truths that voters are often unwilling or unable to express. Neuroconsultants love to cite psychologist Daniel Kahneman, winner of the Nobel Prize in economics, who distinguishes between “System 1” and “System 2” thinking. System 1 “operates automatically and quickly, with little or no effort and no sense of voluntary control,” he writes; System 2 involves conscious deliberation and takes longer.

“Before, everyone was focused on System 2,” explains Rafal Ohme, a Polish psychologist who says his firm, Neurohm, has advised political campaigns in Europe and the United States. For the past decade, Ohme has devoted most of his efforts to probing consumers’ and voters’ System 1 leanings, a task he considers as important as listening to what they say. It’s been great for his business, he says, because his clients are impressed enough with the results to keep coming back for more.

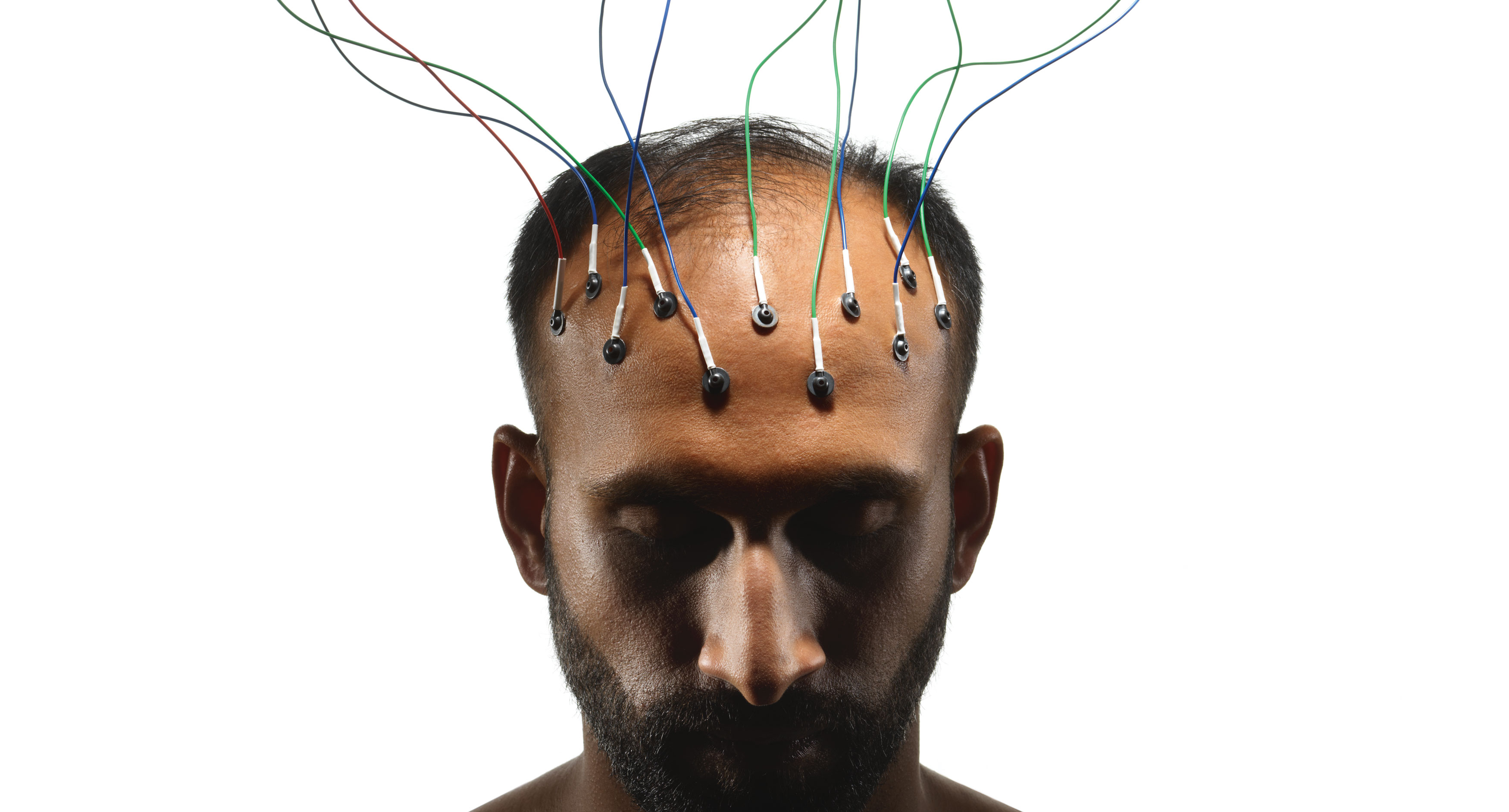

Many neuroconsulting pioneers built their strategy around so-called “neuro-focus groups.” In these studies, involving anywhere from a dozen to a hundred people, technicians fit people’s scalps with EEG electrodes and then show them video footage of a candidate or campaign ad. As subjects watch, scalp sensors pick up electrical impulses that reveal, second by second, which areas of the brain are activated.

“One of the things we can analyze is the attentional process,” says Mexico City neurophysiologist Jaime Romano Micha, whose former firm, Neuropolitka, was one of the top providers of brain-based services to political campaigns. Romano Micha would place electrodes on a subject’s scalp to detect activity in the reticular formation, a part of the brain stem that tracks how engaged someone is. So if subjects are watching a political ad and activity in their reticular formation spikes, say, 15 seconds in, it means the message has truly caught their attention at that point.

Other brain areas provide important clues too, Romano Micha says. Electrical activity on the left side of the cerebral cortex suggests people are working hard to understand a political message; similar activity on the right side may reveal the precise moment the message’s meaning clicks into place. With these kinds of insights, campaigns can refine a message to maximize its oomph: placing the most gripping moment at the beginning, for instance, or cutting the parts that cause people’s attention to wander.

But while brain imaging remains part of the neuropolitical universe, most neuroconsultants say it’s hardly sufficient by itself. “EEG gives us very general information about the decision process,” Romano Micha says. “Some people are saying that through EEG we can go into the mind of people, and I think that’s not possible yet.” There are cheaper and more reliable tools, several consultants claim, for getting at a voter’s true feelings and desires.

Electrodes everywhere

EEG scans, in fact, are now just one in a smorgasbord of biometric techniques. Romano Micha also uses near-infrared eye trackers and electrodes around the orbital bone to track “saccades,” minuscule movements of the eye that indicate viewers’ attentional focus as they watch a campaign spot. Other electrodes supply a rough gauge of arousal by measuring electrical activity on the surface of a person’s skin.

Of course, you can’t stick electrodes on every person watching TV and browsing Facebook. But you don’t need to. The results from experiments on small neuro-focus groups can be used to influence voters who aren’t being sampled themselves. If, for example, biodata reveals that liberal women over 50 are fearful when they see an ad about illegal immigration, campaigns that want to stoke such fear can broadcast that same message to millions of people with similar demographic and social profiles.

Pocovi’s approach at Emotion Research Lab requires only a video player and a front-facing webcam. When volunteers enroll in her political focus groups online, she sends them videos of an ad spot or a candidate that they can watch on their laptop or phone. As they digest the content, she tracks their eye movements and subtle shifts in their facial expressions.

“We have developed algorithms to read the microexpressions in the face and translate in real time the emotions people are feeling,” Pocovi says. “Many times, people tell you, ‘I’m worried about the economy.’ But what are really the things that move you? In my experience, it’s not the biggest things. It’s the small things that are close to you.” Something as small as a candidate’s inappropriately furrowed brow, she says, can color our perception without our realizing it.

Pocovi says her facial analysis software can detect and measure “six universal emotions, 101 secondary emotions, and eight moods,” all of which interest campaigns anxious to learn how people are responding to a message or a candidate. She also offers a crowd-analytics service to track the emotional reactions of individual faces in a human sea, meaning that campaigns can take the temperature of a room as their candidate is speaking.

ERL’s software is built around the facial action coding system (FACS) developed by Paul Ekman, a famed American psychologist. Pocovi’s algorithm deconstructs each facial image from the webcam into more than 50 “action units,” movements of specific muscle groups. Distinct clusters of action units correspond to particular emotions: cheek and outer-lip muscles contracting at the same time reveal happiness, while lowered brows and raised upper eyelids betray anger. Pocovi trains her system to recognize each one by showing it many reference images from a large database of faces expressing that emotion.
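As a rough illustration of how clusters of action units can map to emotions, here is a minimal Python sketch. It is not ERL’s algorithm: the article says Pocovi’s system learns from reference images, whereas this sketch uses a hand-written lookup table. The action-unit numbers (6 for cheek raiser, 12 for lip corner puller, 4 for brow lowerer, 5 for upper lid raiser) come from the published FACS scheme rather than from Pocovi, and the names `EMOTION_RULES` and `detect_emotions` are hypothetical.

```python
# Hypothetical rule table: each emotion is flagged when ALL of its
# listed FACS action units (AUs) are active in the same video frame.
# AU numbers follow the published FACS scheme; the pairings mirror the
# two examples given in the text and are a deliberate simplification.
EMOTION_RULES = {
    "happiness": {6, 12},  # cheek raiser + lip corner puller
    "anger": {4, 5},       # brow lowerer + upper lid raiser
}


def detect_emotions(active_units):
    """Return the emotions whose required AUs are all present."""
    active = set(active_units)
    return sorted(
        name
        for name, required in EMOTION_RULES.items()
        if required <= active  # subset test: every required AU is active
    )


# A frame with AUs 6 and 12 active (plus an unrelated AU) matches happiness.
print(detect_emotions([6, 12, 25]))
# A lip-corner pull without the cheek raiser matches nothing in this table.
print(detect_emotions([12]))
```

A trained classifier would weigh the intensity of many action units rather than apply all-or-nothing rules, so this table only conveys the basic idea of a cluster.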

Some critics of Ekman’s system, such as neuroscientist Lisa Feldman Barrett, have argued that facial expressions don’t necessarily correlate with emotional states. Still, a variety of studies have shown at least some correspondence. In a 2014 study at Ohio State University, cognitive scientists defined 21 “distinct emotions,” based on the consistent ways most of us move our facial muscles.

Pocovi says her surveys also operate as an image-refining tool for candidates themselves. She analyzes video of candidates to pinpoint precise moments when their expressions make voters feel confused, disgusted, or angry. Politicians can then use this information to rehearse a different emotional approach, which can itself be vetted using Pocovi’s survey platform until it produces the desired response in viewers. In one campaign Pocovi advised, a candidate was recording a TV ad spot with an uplifting, positive message, but it kept getting terrible reviews in test screenings. The spot’s poor performance was a mystery—until Pocovi’s analysis of the candidate’s face showed he was unwittingly conveying anger and disgust. Once he realized what was going on, he was able to tweak his presentation and get a better response from the public.

Several onetime devotees of brain-scan analysis are also pursuing simpler and cheaper techniques these days. Before the 2008 financial crisis, Ohme says, international clients were more willing to fly five guys from Poland out to perform on-site brain studies. After the recession, though, that business mostly dried up.

That prompted Ohme to develop a different strategy, one untethered to time, space, or EEG electrodes. His updated approach stems from that used in unconscious-bias studies by social psychologist Anthony Greenwald, who became a mentor when Ohme visited the US on a Fulbright scholarship. Ohme says his smartphone-based test—which he calls iCode—reveals covert political leanings that would never surface in traditional questionnaires or focus groups.

Ohme’s survey takers begin by answering calibration questions to assess their baseline reaction time. A habitually slower person, for instance, might have a “unit time” lasting 585 milliseconds, while someone quicker might take 387 milliseconds. Then images of politicians are shown on the screen, each paired with a single attribute, such as “trustworthy,” “well-known,” or “shares my values.” Users tap “yes” or “no” to indicate whether they agree with each pairing. As the test proceeds, the app tracks not just how they answer but how quickly they touch the screen and what tapping rhythm they establish.

What’s interesting, Ohme says, isn’t how people respond to the questions per se, but how much they dither first. “When we measure the hesitation level, we can see that some answers are positive but with hesitation, and some are positive and instantaneous,” he says. “We measure how much you deviated [from baseline]. This deviation is key.”
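The baseline-and-deviation idea can be sketched in a few lines of Python. This is an assumption-laden illustration, not iCode itself: the article does not say how Ohme computes a “unit time” or what counts as hesitation, so the use of a median, the ratio measure, and the 1.5 threshold are all hypothetical choices, as are the function names.

```python
from statistics import median


def unit_time(calibration_ms):
    """Estimate a person's baseline from calibration taps.

    Assumption: the median of the calibration response times.
    """
    return median(calibration_ms)


def hesitation_ratio(response_ms, baseline_ms):
    """How many 'unit times' a given answer took."""
    return response_ms / baseline_ms


def classify(answer, response_ms, baseline_ms, threshold=1.5):
    """Label an answer as hesitant or instantaneous.

    Assumption: a response slower than `threshold` unit times
    counts as hesitation.
    """
    ratio = hesitation_ratio(response_ms, baseline_ms)
    label = "hesitant" if ratio > threshold else "instantaneous"
    return f"{answer}, {label}"


# Two respondents with the baselines mentioned in the text.
slow = unit_time([560, 585, 610])  # 585 ms
fast = unit_time([380, 387, 400])  # 387 ms

# The same 700 ms "yes" reads differently against each baseline.
print(classify("yes", 700, slow))
print(classify("yes", 700, fast))
```

Normalizing by each person’s own baseline is what lets an identical raw delay count as a quick answer for a habitually slow tapper and as a pause for a fast one.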

Ohme declines to discuss his current political clients in much detail, citing confidentiality agreements. But he volunteers that in an iCode survey of nearly 900 people, he predicted Hillary Clinton’s 2016 defeat before the election. Throughout the year, Clinton ran comfortably ahead of Trump in traditional polls. But when Ohme asked test subjects whether Clinton shared their values, they often hesitated for an unusually long time before responding that she did. Ohme knew a sense of shared values was a big factor motivating people to vote in 2016 (in previous elections “powerful” and “leader” were key), so the results of the test gave him serious doubts about a Clinton victory. He argues that if Clinton’s campaign had run one of his studies before the election, she would have understood the depth of her vulnerability and could have made course corrections.

Ohme claims to have helped other candidates in similar straits. One of his tests revealed that while a certain European client had a good-sized base of supporters, many weren’t motivated to get out and vote because they assumed their candidate would win. Armed with this knowledge, the campaign made a renewed push to get its loyal base to the polls. The client ended up winning in a squeaker.

The biggest lies in life

Does measuring people’s spontaneous reactions to a TV ad or a stump speech tell you how they will ultimately vote, however? “On the applied side, it’s pretty unclear, the hype from the reality,” says Darren Schreiber, a professor in political science at the University of Exeter and author of Your Brain Is Built for Politics. “It’s easy to over-believe the ability of these tools.” So far cognitive tests have had mixed results. Contrasting studies have shown that implicit attitudes both do and don’t predict how people vote.

Still, Schreiber, who has conducted brain-scan tests of political attitudes, admits the technologies are worrisome. Democracy assumes the presence of rational actors, capable of digesting information from all quarters and coming to reasoned conclusions. If neuroconsultants are even half as good as they claim at probing people’s innermost thoughts and shifting their voting intentions, it calls that assumption into question.

“We are susceptible in multiple ways, and not aware of our susceptibility,” Schreiber says. “The fact that attitudes can be manipulated in ways we’re not aware of has a lot of implications for political discourse.” If campaigns are nudging voters toward their candidate without voters’ knowledge, political discussions that were once exchanges of reasoned views will become knee-jerk skirmishes veering ever further from the democratic ideal. “I don’t think it’s time to run in panic,” Schreiber says, “but I don’t think we can be sanguine about it.”

Ohme insists that voters can inoculate themselves against neuroconsultants’ tactics if they’re savvy enough. “I measure hesitation. I can change your mind only if you hesitate. If you are a firm believer, I cannot change anything,” he says. “If you’re scared to be manipulated, learn. The more you learn, the more firm and stable your attitudes are, and the more difficult it is for someone to convince you otherwise.”

That’s perfectly reasonable advice. But I wonder. After meeting Pocovi, I logged into Emotion Research Lab to let its software track my face while I watched a demo video. The video was of a laughing baby, and I felt the corners of my mouth quirking up. Afterward, the computer asked me how I’d felt while watching. “Happy,” I clicked. I’m a mom, right? I love babies. Yet when my emotion analysis arrived, it showed almost no trace of happiness on my face.

Thinking about the results, I realized the emotion software was right. I hadn’t really been happy at all. I had taken the test late at night, and I had been exhausted. The computer had seen me in a way I wasn’t used to seeing myself. I thought of something Dan Hill, the former advisor to the Mexican president’s campaign, had told me. “The biggest lies in life,” he’d said, “are the ones we tell ourselves.”

Elizabeth Svoboda is a science writer in San Jose, California, and the author of What Makes a Hero?: The Surprising Science of Selflessness.
