Quantum Inside: Intel Manufactures an Exotic New Chip

Intel has begun manufacturing chips for quantum computers.

The new hardware is too feeble to do much real work, but it offers a strong signal that the technology is inching closer to real-world applications. “We’re [moving] quantum computing from the academic space to the semiconductor space,” says Jim Clarke, director of quantum hardware at Intel.

While regular computers store and manipulate data as binary 1s and 0s, a quantum computer uses quantum bits, or “qubits,” exploiting quantum phenomena to represent data in more than one state at once. This makes it possible to process information in a fundamentally different way, and to perform certain calculations in parallel in the time a conventional machine would need for a single one.
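To make “more than one state at once” a little more concrete, here is a minimal classical sketch in Python. It is not how Intel's chip works, and the function names are made up for illustration. It assumes the standard textbook picture in which n qubits are described by 2^n complex amplitudes, and the squared magnitude of each amplitude gives the probability of reading out that bit pattern.

```python
import math

def uniform_superposition(n_qubits):
    """Classical list of amplitudes for n qubits, each in an equal mix of 0 and 1.

    Hypothetical helper for illustration only: a real quantum chip does not
    store this list anywhere; the list is what a classical simulator must track.
    """
    dim = 2 ** n_qubits                 # one amplitude per possible bit pattern
    amp = 1 / math.sqrt(dim)            # equal weight on every pattern
    return [complex(amp, 0.0)] * dim

def measurement_probabilities(state):
    """Probability of observing each bit pattern: squared magnitude of its amplitude."""
    return [abs(a) ** 2 for a in state]

state = uniform_superposition(3)
probs = measurement_probabilities(state)

print(len(state))   # number of bit patterns held at once by 3 qubits
print(sum(probs))   # probabilities add up to (approximately) 1
```

Three classical bits hold exactly one of eight patterns at a time. In this sketch, three qubits carry a weight on all eight patterns simultaneously, which is the property quantum algorithms try to exploit.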

Quantum computing has long been an academic curiosity, and there are enormous challenges to handling quantum information reliably. The sense is now growing, however, that the technology could emerge from research labs within a matter of years (see “10 Breakthrough Technologies 2017: Practical Quantum Computers”).

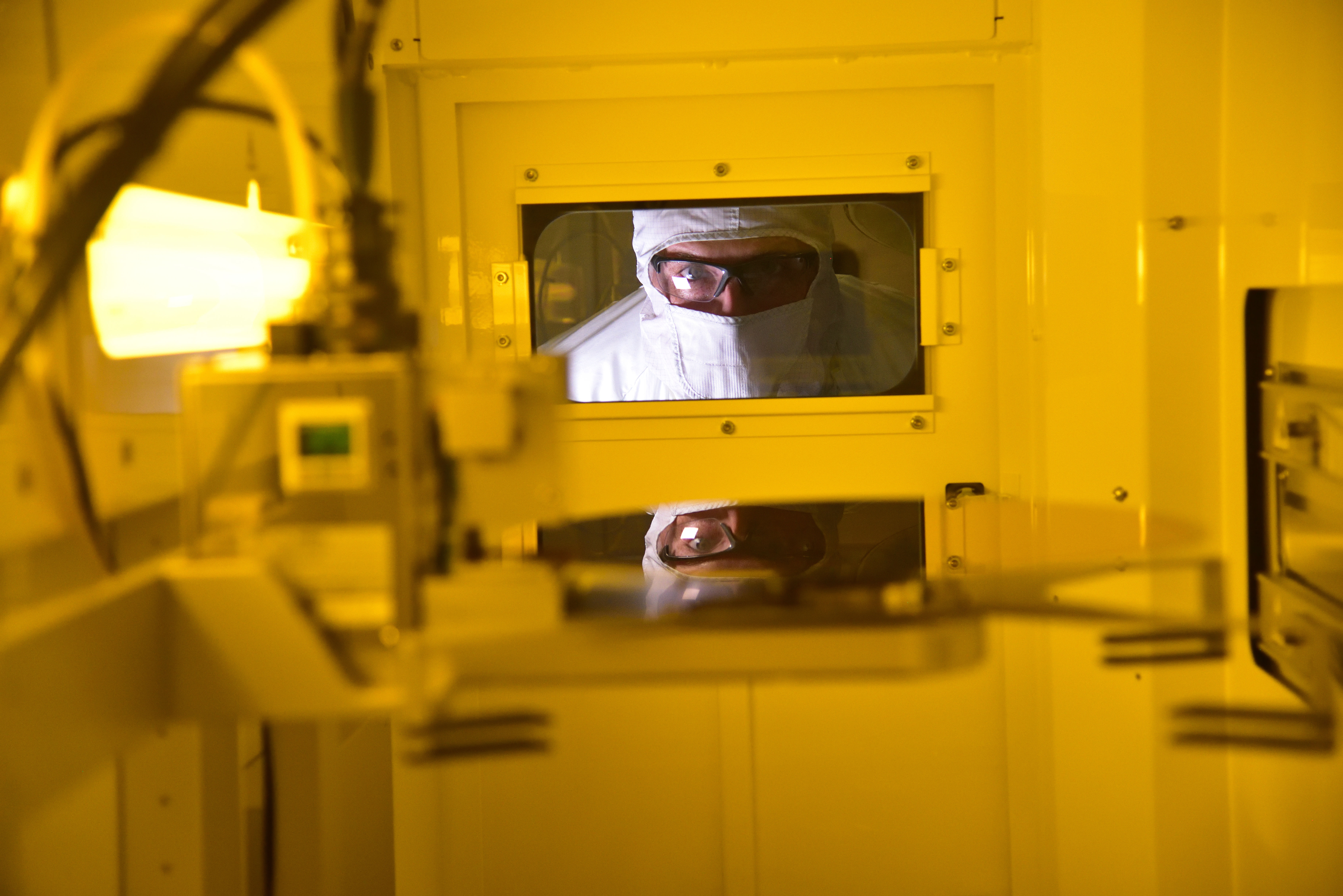

Intel’s quantum chip uses superconducting qubits. The approach builds on an existing electrical circuit design but uses a fundamentally different electronic phenomenon that only works at very low temperatures. The chip, which can handle 17 qubits, was developed over the past 18 months by researchers at a lab in Oregon and is being manufactured at an Intel facility in Arizona.

The work was done in collaboration with QuTech, a Dutch company spun out of the University of Delft that specializes in quantum computing. QuTech has made significant progress in recent years toward developing more stable qubits. Intel invested $50 million in QuTech in 2015.

The Intel researchers adapted the company’s existing 300-nanometer “flip chip” processor design to support the delicacy of quantum processing. Because the qubits are stable only in extreme cold, the processors have to work at super-low temperatures and must be impervious to radio frequency interference. To that end, the researchers modified the materials, the circuit design, and the connections between different components.

Intel isn’t the only company working to make quantum computing practical. Google, IBM, Microsoft, and others are also pushing to develop the first quantum machine capable of performing real work.

Intel is relatively late to the game, but the company is betting that its fabrication expertise can help it catch up with or surpass its rivals. Clarke says the company chose to focus on quantum computing in 2014, figuring that it could accelerate progress using existing manufacturing methods. “Intel is the only player that has advanced manufacturing and packaging technologies,” he says (see “Intel Bets It Can Turn Everyday Silicon into Quantum Computing’s Wonder Material”).

As the capabilities of quantum chips scale up, these devices should reach a tipping point where certain kinds of calculations can get much faster. This should most immediately affect fields such as chemistry and materials science by enabling immensely complex molecular modeling. But the new capabilities could also spawn a range of new ideas.

Of late, there has been some hope that quantum computing could be used to accelerate machine learning. Several new algorithms have been proposed for “quantum machine learning,” but with each, significant challenges persist.

Jim Held, director of emerging technologies at Intel Labs, says the company is exploring algorithms alongside its research on hardware. “We think there are major developments in having hybrid algorithms that can use the best of classical capabilities with quantum computers’ strengths,” he says.

Hartmut Neven, who leads Google’s quantum computing project, has said that the company will build a 49-qubit system by next year. At that point the machine would be able to perform calculations that could not be simulated on a conventional supercomputer, a benchmark referred to as “quantum supremacy.”
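A rough back-of-the-envelope calculation suggests why a machine of around 49 qubits is treated as a threshold. The Python sketch below assumes a simulator that stores the full quantum state, one complex amplitude per bit pattern, at 16 bytes per amplitude (double-precision real and imaginary parts). Both the storage format and the helper names are assumptions for illustration. Other simulation techniques can trade memory for time, so this is an estimate for one approach, not a hard limit.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: two 64-bit floats per complex amplitude

def state_vector_bytes(n_qubits):
    """Memory needed to hold every amplitude of an n-qubit state at once."""
    return (2 ** n_qubits) * BYTES_PER_AMPLITUDE

def human_readable(num_bytes):
    """Format a byte count using binary units (KiB, MiB, ...)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(num_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.0f} {unit}"
        value /= 1024

for n in (17, 49):
    print(n, "qubits:", human_readable(state_vector_bytes(n)))
# 17 qubits: 2 MiB
# 49 qubits: 8 PiB
```

Under these assumptions, a 17-qubit state fits comfortably in a laptop's memory, while a 49-qubit state needs several pebibytes. Each added qubit doubles the requirement.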
