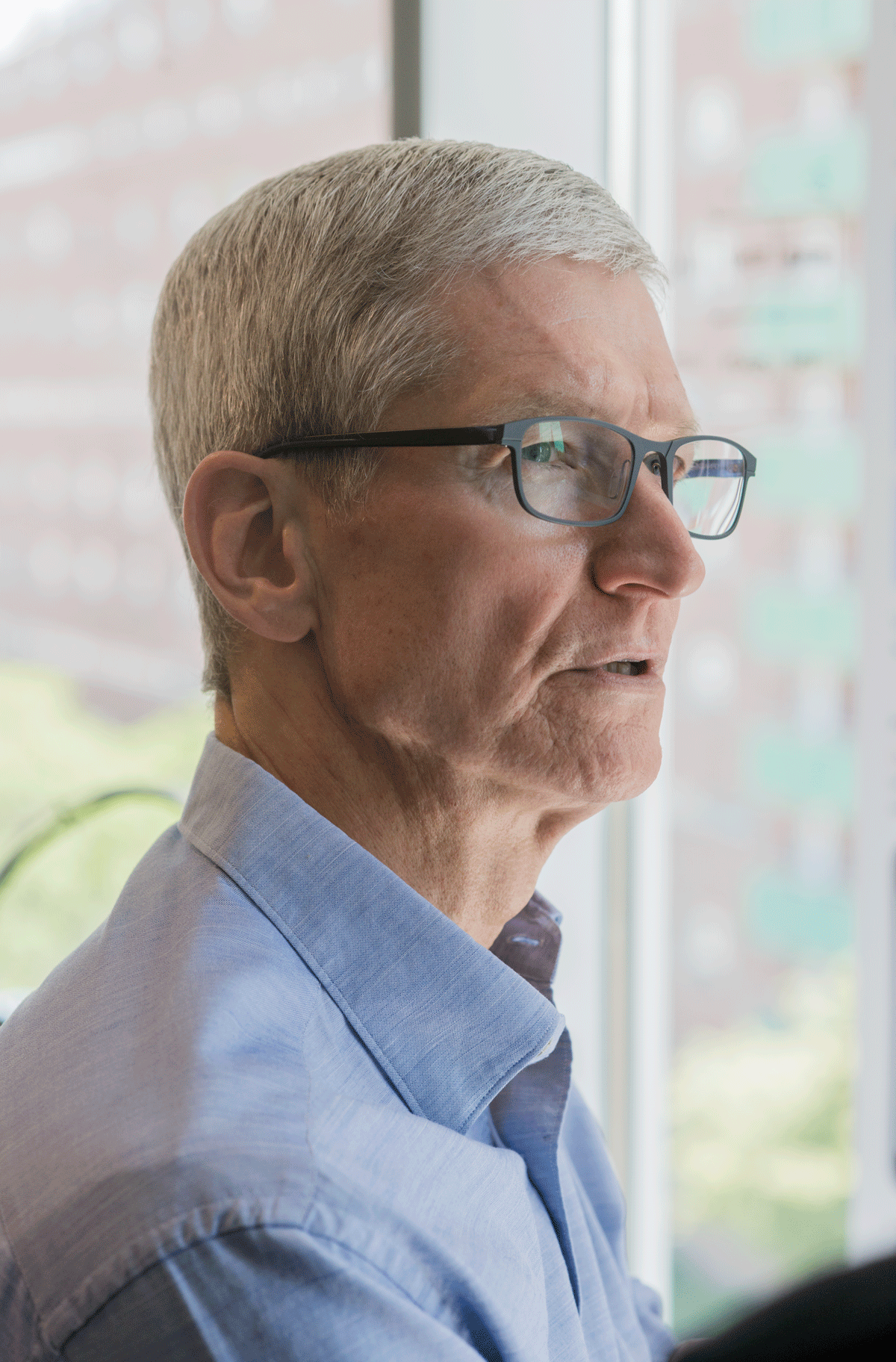

Tim Cook: Technology Should Serve Humanity, Not the Other Way Around

Earlier this week, striding across the stage at Apple’s annual developer conference in front of a crowd of thousands at the San Jose Convention Center, Tim Cook was animated and gushing, an evangelist for a series of new products and features.

Among them: the HomePod smart speaker (see "Apple Is Countering Amazon and Google with a Siri-Enabled Speaker") and new ways developers can build artificial intelligence into apps.

By Thursday morning, the Apple CEO was on the other coast, sitting on a gray couch next to a yellow emoji pillow (a happy face) at the MIT Media Lab’s Affective Computing Group listening to Rosalind Picard talk about depression. Cook, who will give this year’s MIT commencement address, is spending the day before learning more about research on campus, much of it involving sensors and AI.

Picard, an expert in using wearable devices and phone data to measure human emotions, is researching how data pulled from cell phones might help identify and perhaps even predict depression, a problem expected to be the second leading cause of disability in the world by 2020.

In time, Picard hopes to be able to predict when a person will become vulnerable to depression even before they get there. “We want to not just recognize, but try to forecast it,” she tells Cook.

As our phones become smarter about our behavior, they could play an important role in helping us track and understand ourselves, and our future behavior.

One way to do that is by leveraging artificial intelligence. Though Apple is often termed a laggard on AI when compared with companies like Google, Microsoft, and Amazon, Cook argues that machine learning is already well integrated into iPhones.

In an interview with MIT Technology Review conducted a few hours after the meeting with Picard, Cook ticks off a list: image recognition in our photos, for example, or the way Apple Music learns from what we have been listening to and adjusts its recommendations accordingly. Even the iPhone battery lasts longer now because the phone’s power management system uses machine learning to study our usage and adjust accordingly, he says.

Cook says the press doesn’t always give Apple credit for its AI, perhaps because Apple only likes to talk about the features of products it is ready to ship, while many others “sell futures.” Says Cook: “We are not going to go through things we’re going to do in 2019, '20, '21. It’s not because we don’t know that. It’s because we don’t want to talk about that.”

While he calls AI “profound” and increasingly capable of doing unbelievable things, on matters that require judgment he’s not comfortable with automating the human entirely out of the equation. “When technological advancement can go up so exponentially I do think there’s a risk of losing sight of the fact that tech should serve humanity, not the other way around.”

Part of that perspective, for Cook, continues to be keeping an eye on what iPhone data Apple should be able to access, and what is too personal, something that became an issue in its high profile refusal last year to unlock iPhones at the behest of law enforcement (see "What If Apple is Wrong?").

Picard’s lab doesn’t use Apple phones for its research today, though she tells Cook she would like to. For its current study of student emotional health, her team can’t get certain data it needs from an iPhone, such as who the user is calling and texting, which the researchers use (with study participants' permission) to build an understanding of the students' social activities.

Apple does have a platform for mobile health research and development called ResearchKit, but even after exploring it, her team hasn’t been able to make it work, Picard says. Before the discussion is done, Cook has taken his red chrome iPhone from his pocket to make a note to himself to inquire about that internally.
