Machine-Learning Algorithm Watches Dance Dance Revolution, Then Creates Dances of Its Own

Dance Dance Revolution is one of the classic video games of the late 20th century. A testament to its success, novelty, and longevity is that it is still popular today, almost 20 years after its launch.

This is a dancing game consisting of a screen and a dance platform that players control with their feet. The platform has four pads, which players must step on in time with the music, in the order specified by a chart on the screen. In other words, players must dance to the music in the way the game demands.

The game also allows players to design and distribute their own dances. Over the years, people have created enormous databases of dances for a huge range of popular songs.

That gave Chris Donahue and pals, at the University of California, San Diego, an idea. Why not use this huge database to train a deep-learning machine to create dances of its own?

Today, they show how they have done just that. Their system—called Dance Dance Convolution—takes as an input the raw audio files of pop songs and produces dance routines as an output. The result is a machine that can choreograph music.

The game itself is straightforward in principle. As the music plays, the player touches the pads on the dance platform in the order shown on the screen. Each pad can be in one of four states: on, off, hold (or freeze), and release. Because the four pads can be activated or released independently, there are 256 possible step combinations at any instant.
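The figure of 256 is simply four states per pad raised to the power of four pads. The short sketch below enumerates them; the pad order (left, down, up, right) and the state names are assumptions made for illustration. Note that the count includes the combination in which every pad is off.

```python
from itertools import product

# Assumed pad order and state names, chosen for illustration only.
PADS = ("left", "down", "up", "right")
STATES = ("off", "on", "hold", "release")

# Every way of assigning one state to each pad: 4 ** 4 combinations.
combinations = list(product(STATES, repeat=len(PADS)))


def step_index(step):
    """Encode a tuple of per-pad states as an integer in [0, 256), base 4."""
    index = 0
    for state in step:
        index = index * len(STATES) + STATES.index(state)
    return index


print(len(combinations))  # 256
```

Treating each combination as a single symbol from a 256-word vocabulary is what lets step selection be framed like a language-modeling problem.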

Of course, the dances become progressively harder: most songs have charts at five levels of difficulty. The difficulty is determined by the speed of the rhythmic subdivisions. Beginner-level charts have steps on quarter and eighth notes, while harder charts have steps on 16th notes and some patterns involving 12th and 24th notes.
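To see why finer subdivisions are harder, it helps to convert them into time between steps. The sketch below assumes 4/4 time, where a quarter note is one beat, so a 1/n note lasts 4/n beats. The 12th and 24th notes are triplet subdivisions (a third and a sixth of a beat). The 120 BPM tempo is an arbitrary example, not a figure from the paper.

```python
def step_interval_seconds(bpm: float, note_value: int) -> float:
    """Seconds between consecutive steps placed on 1/note_value notes.

    Assumes 4/4 time, where a quarter note is one beat.
    """
    beats = 4 / note_value      # e.g. a 16th note is a quarter of a beat
    return beats * 60 / bpm     # one beat lasts 60 / bpm seconds


# Example tempo of 120 BPM (an assumption for illustration).
for name, value in [("quarter", 4), ("eighth", 8), ("12th", 12), ("16th", 16), ("24th", 24)]:
    print(f"{name:>8}: {step_interval_seconds(120, value):.3f} s")
```

At that tempo a beginner chart asks for a step at most every 0.25 seconds, while a chart with 24th notes can demand steps roughly every 0.083 seconds.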

There are also other informal rules for the creation of dance charts. “Chart authors strive to avoid patterns that would compel a player to face away from the screen,” say Donahue and co. The result is dances with a wide variety of rich structures.

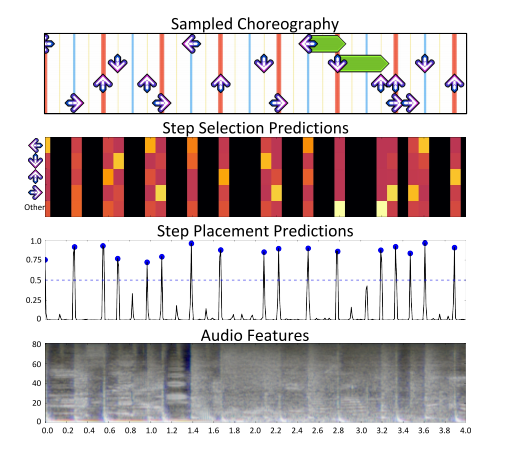

The task of automating the creation of dance charts is by no means simple. Donahue and co divide it into two parts. The first is deciding when to place steps, and the second is deciding which steps to select. They then train a machine-learning algorithm to learn each task.
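The two-part split can be sketched as a small pipeline: one stage turns a per-frame score for "place a step here" into timestamps, and a second stage chooses which step goes at each timestamp, given the steps chosen so far. Everything below is hypothetical: the probabilities stand in for a trained placement model's output, `alternate_feet` stands in for a trained selection model, and simple threshold-plus-local-maximum peak picking is one common way to turn scores into events, not necessarily the authors' exact procedure. Steps are written as four-character strings, one character per pad, an encoding assumed here for illustration.

```python
from typing import Callable, List, Sequence, Tuple


def pick_peaks(probs: Sequence[float], threshold: float) -> List[int]:
    """Indices of frames that are local maxima and reach the threshold."""
    peaks = []
    for i, p in enumerate(probs):
        left = probs[i - 1] if i > 0 else float("-inf")
        right = probs[i + 1] if i + 1 < len(probs) else float("-inf")
        if p >= threshold and p > left and p >= right:
            peaks.append(i)
    return peaks


def choreograph(
    probs: Sequence[float],
    frame_seconds: float,
    threshold: float,
    select_step: Callable[[List[str]], str],
) -> List[Tuple[float, str]]:
    """Stage 1 decides *when* to step; stage 2 decides *which* step."""
    chart: List[Tuple[float, str]] = []
    history: List[str] = []
    for frame in pick_peaks(probs, threshold):  # step placement
        step = select_step(history)             # step selection
        history.append(step)
        chart.append((frame * frame_seconds, step))
    return chart


def alternate_feet(history: List[str]) -> str:
    """Hypothetical stand-in selector: alternate between two pads."""
    return "1000" if len(history) % 2 == 0 else "0001"


# Made-up per-frame scores; each frame is assumed to last 0.25 s.
probs = [0.1, 0.8, 0.3, 0.2, 0.9, 0.4, 0.6, 0.1]
chart = choreograph(probs, frame_seconds=0.25, threshold=0.5, select_step=alternate_feet)
print(chart)
```

Keeping the stages separate means each can be trained and evaluated on its own, which matches the way Donahue and co frame the problem.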

The first task boils down to identifying a set of timestamps in a song at which to place steps. This resembles a well-studied problem in music research called onset detection, which involves identifying important moments in a song, such as melody notes or drum strikes.

“While not every onset in our data corresponds to a Dance Dance Revolution step, every Dance Dance Revolution step corresponds to an onset,” say Donahue and co.
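For readers unfamiliar with onset detection, here is a minimal classical sketch: split the audio into frames, measure the energy of each frame, and flag frames where the energy rises sharply. This is a simple energy-difference detector, not the deep-learning model Donahue and co describe. The frame size, threshold, and synthetic test signal (two 440 Hz tone bursts in silence) are assumptions chosen for illustration.

```python
import math


def frame_energies(samples, frame_size):
    """Sum of squared samples in consecutive, non-overlapping frames."""
    return [
        sum(x * x for x in samples[i:i + frame_size])
        for i in range(0, len(samples) - frame_size + 1, frame_size)
    ]


def detect_onsets(samples, sample_rate, frame_size=512, threshold=1.0):
    """Return onset times (seconds) where frame energy jumps by more than threshold."""
    energies = frame_energies(samples, frame_size)
    onsets = []
    for i in range(1, len(energies)):
        # Half-wave rectified difference: only increases in energy count.
        rise = max(0.0, energies[i] - energies[i - 1])
        if rise > threshold:
            onsets.append(i * frame_size / sample_rate)
    return onsets


# Synthetic test signal: 2 s of silence with two short 440 Hz tone bursts.
sample_rate = 8192
signal = [0.0] * (2 * sample_rate)
for start in (4096, 10240):  # bursts begin at 0.5 s and 1.25 s
    for n in range(2048):
        signal[start + n] = 0.5 * math.sin(2 * math.pi * 440 * n / sample_rate)

onsets = detect_onsets(signal, sample_rate, threshold=10.0)
print(onsets)  # [0.5, 1.25]
```

The quote above explains why this is only a starting point: a detector like this finds candidate moments, but a chart uses only some of them, which is why the placement stage has to be learned from real charts.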

Once the timestamps for each step have been identified, the second task is to select a step to take at each instant.
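Choosing the next step from the previous ones is similar to predicting the next word in a sentence. The sketch below uses a bigram model, which simply counts which step most often follows which in existing charts. It is a much simpler stand-in for the deep-learning approach in the paper, and the four-character step encoding (one character per pad, "1" for a tap) is an assumption for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(charts):
    """Count how often each step is followed by each other step."""
    counts = defaultdict(Counter)
    for chart in charts:
        for prev, nxt in zip(chart, chart[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts, start, length):
    """Greedily extend a chart with the most frequent follower of the last step."""
    steps = [start]
    for _ in range(length - 1):
        followers = counts.get(steps[-1])  # .get avoids inserting new keys
        if not followers:
            break  # no data on what follows this step
        steps.append(followers.most_common(1)[0][0])
    return steps


# Two tiny made-up charts; each string is one step across four pads.
charts = [
    ["1000", "0001", "1000", "0001"],
    ["1000", "0001", "1000", "0100"],
]
model = train_bigrams(charts)
print(generate(model, "1000", 4))  # ['1000', '0001', '1000', '0001']
```

Greedy decoding like this quickly falls into repetitive loops; a practical generator would sample from the learned distribution and condition on the audio as well as on previous steps.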

In all machine-learning tasks, the training data set is crucial. Music research has been hampered in the past because copyright issues can prevent songs from being used in research (or at least from being passed on along with the results).

DDR gets around this because so many dance charts have been created by ordinary users. Donahue and co say that one online repository, called Stepmania Online, stores over 350 gigabytes of dance charts on more than 100,000 songs.

For this research, the team focuses on two smaller data sets consisting of recordings plus dance charts. The first contains 90 songs choreographed by a single author, who has produced charts at five levels of difficulty for each song. The second data set contains 133 songs, each with a single dance chart.

The team then enlarges the data set by creating mirror images of each chart, for example by swapping left for right, up for down, or both. The result is a data set of 35 hours of music in the form of raw audio files with more than 350,000 steps.
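Mirroring a chart amounts to permuting the pad columns of every step. The sketch below assumes each step is a four-character string in left, down, up, right order; that layout and the flip names are assumptions for illustration, not details taken from the paper.

```python
# Assumed column order within each step string: left, down, up, right.
# Each tuple says which old column feeds each new column.
FLIPS = {
    "lr": (3, 1, 2, 0),    # swap left and right
    "ud": (0, 2, 1, 3),    # swap up and down
    "both": (3, 2, 1, 0),  # swap both pairs
}


def mirror(chart, flip):
    """Return a mirrored copy of a chart (a list of 4-character step strings)."""
    perm = FLIPS[flip]
    return ["".join(step[i] for i in perm) for step in chart]


def augment(chart):
    """The original chart plus its three mirror images."""
    return [chart] + [mirror(chart, f) for f in ("lr", "ud", "both")]


chart = ["1000", "0010"]  # a left tap, then an up tap
print(mirror(chart, "lr"))    # ['0001', '0010']
print(mirror(chart, "both"))  # ['0001', '0100']
```

Because mirrored patterns stay playable and stylistically plausible, each flip yields a new training chart for the same audio at no annotation cost.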

Donahue and co then use 80 percent of the music to train the machine-learning algorithm to recognize times for step placement. They validate and test the resulting model with the remaining 20 percent of the data, and they use similar proportions to train another algorithm to determine step selection. Similar techniques are widely used in machine learning for tasks such as natural-language processing.
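A split like this is usually done per song, so that no song contributes both training and test data. The sketch below shuffles song IDs with a fixed seed and keeps 80 percent for training; dividing the remaining 20 percent evenly between validation and test is an assumption, since the article does not say how it is divided.

```python
import random


def split_songs(songs, train_frac=0.8, seed=0):
    """Shuffle songs and split them into train, validation, and test lists.

    The non-training remainder is split evenly between validation and
    test (an assumption made for this sketch).
    """
    shuffled = list(songs)
    random.Random(seed).shuffle(shuffled)  # fixed seed for reproducibility
    n_train = int(len(shuffled) * train_frac)
    n_valid = (len(shuffled) - n_train) // 2
    train = shuffled[:n_train]
    valid = shuffled[n_train:n_train + n_valid]
    test = shuffled[n_train + n_valid:]
    return train, valid, test


train, valid, test = split_songs(range(90))
print(len(train), len(valid), len(test))  # 72 9 9
```

Splitting by song rather than by individual step avoids an overly optimistic evaluation, because steps from the same song share tempo, instrumentation, and choreographic style.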

The results are impressive. “Our experiments establish the feasibility of using machine learning to automatically generate high-quality DDR charts from raw audio,” say Donahue and co.

“By combining insights from musical onset detection and statistical language modeling, we have designed and evaluated a number of deep-learning methods for learning to choreograph,” they say.

That’s entertaining work that shows the utility of machine learning for tasks where there are large annotated data sets. It also shows that yet another bastion of human creativity has fallen to the machines.

Ref: arxiv.org/abs/1703.06891: Dance Dance Convolution
