Robot Abuses Google’s Smart Textiles to See How Much They Can Take

Google is relying on a beefy robotic arm to help it test out the reliability of the interactive fabrics it’s making as part of a skunkworks project that can bring gesture and touch recognition to everything from jackets to teddy bears.

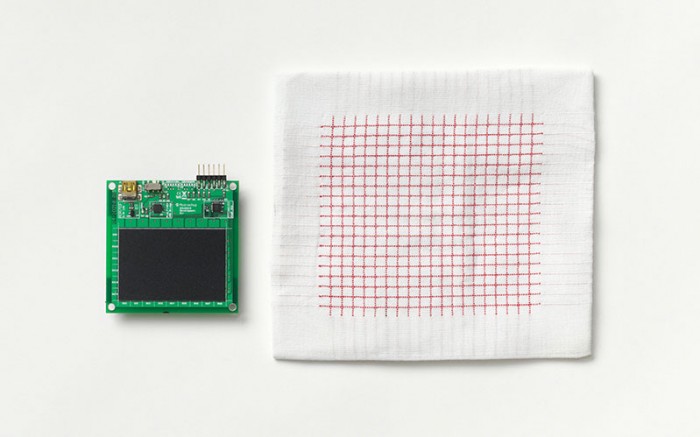

Unveiled last spring, Project Jacquard is creating textiles woven with grids of conductive yarns that are made with typical manufacturing methods. The hope is to make “smart” clothing, furniture, toys, and other fabric-covered items that are connected to electronics but are also affordable and durable (see “Google Wants You to Control Your Gadgets with Finger Gestures, Conductive Clothing”).

There have been plenty of connected textiles in the past, but most of them have been limited to things like fitness apparel and fashion-forward art projects. So before bringing its conductive fabric to the average person’s shirt or jeans, the people behind Project Jacquard want to figure out how well they can expect it to hold up over time, and how well it recognizes gestures.

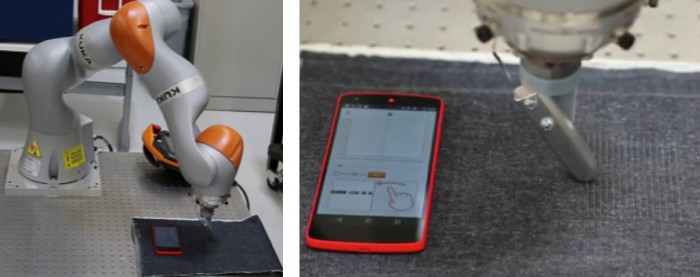

As they describe in a research paper that will be presented at a computer-human interaction conference in May, they used a robotic arm from industrial robot maker KUKA Robotics to repeatedly swipe a piece of their fabric laid atop what they describe as a “flexible sponge foam to best simulate body flexibility.”

After 10 hours of swiping, Project Jacquard researchers determined a life span of three years and 200 days of usage for a swatch of the interactive fabric, assuming you swiped it about 10 times per day, for a total of 12,000 swipes. After that many swipes, gesture recognition was slightly over 95 percent and the fabric wasn’t visibly damaged, the researchers report.
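The life-span figure comes down to simple arithmetic: a swipe count divided by a daily swipe rate. As a rough check, here is a minimal Python sketch of that conversion. It assumes a 365-day year, and the function names are illustrative, not taken from the researchers' paper.

```python
DAYS_PER_YEAR = 365  # assumption: leap days are ignored


def usage_days(total_swipes: int, swipes_per_day: float) -> float:
    """Days of use covered by total_swipes at a steady daily rate."""
    return total_swipes / swipes_per_day


def implied_daily_rate(total_swipes: int, years: int, days: int) -> float:
    """Swipes per day implied by a swipe count and a quoted life span."""
    return total_swipes / (years * DAYS_PER_YEAR + days)


# 12,000 swipes spread over three years and 200 days (1,295 days)
rate = implied_daily_rate(12_000, years=3, days=200)
print(f"{rate:.1f} swipes per day")
```

Under these assumptions, 12,000 swipes spread over three years and 200 days works out to a little over nine swipes a day.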

Researchers say they later had the robot arm perform another 30,000 swipes on the same fabric and still reported a gesture-recognition rate of over 95 percent.

The results aren’t as impressive with real people. Researchers say that in another experiment they attached patches of interactive fabric to a jacket sleeve and had a dozen people perform three gestures (swiping left, swiping right, and using a “hold” gesture) while standing, walking, and sitting. Under these conditions, which are a little closer to how you’d probably use the fabric in regular life, the gestures weren’t recognized as reliably: researchers report an overall recognition rate of nearly 77 percent. Ivan Poupyrev, who leads Project Jacquard and coauthored the paper, says that this has improved significantly in more recent work.

Ryan Robucci, an assistant professor at the University of Maryland, Baltimore County, who is working on a fabric-based sensor to help disabled people interact with gadgets via gestures, says gesture recognition is difficult because the person and the fabric can be moving, and, unlike a robotic arm, a person doesn’t always perform the correct gesture.

There are not yet any products publicly available that include Project Jacquard fabric, though clothing maker Levi Strauss, which is working with Google on the project, has said it plans to release a limited number of pairs of jeans with the technology this spring and more in the fall.

This story was updated on April 21 to include a response from Ivan Poupyrev.
