Robot See, Robot Do: How Robots Can Learn New Tasks by Observing

It can take weeks to reprogram an industrial robot to perform a complicated new task, which makes retooling a modern manufacturing line painfully expensive and slow.

The process could be sped up significantly if robots were able to learn how to do a new job by watching others do it first. That’s the idea behind a project underway at the University of Maryland, where researchers are teaching robots to be attentive students.

“We call it a ‘robot training academy,’” says Yezhou Yang, a graduate student in the Autonomy, Robotics and Cognition Lab at the University of Maryland. “We ask an expert to show the robot a task, and let the robot figure out most parts of sequences of things it needs to do, and then fine-tune things to make it work.”

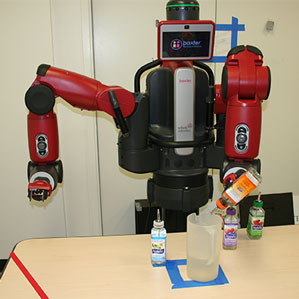

At a recent conference in St. Louis, the researchers demonstrated a cocktail-making robot that uses the approaches they're working on. The robot, a two-armed industrial machine made by the Boston-based company Rethink Robotics, watched a person mix a drink by pouring liquid from several bottles into a jug. It then copied those actions, grasping the bottles in the correct order before pouring the right quantities into the jug. Yang carried out the work with Yiannis Aloimonos and Cornelia Fermuller, two professors of computer science at the University of Maryland.

The approach involves training a computer system to associate specific robot actions with video footage showing people performing various tasks. A recent paper from the group, for example, shows that a robot can learn how to pick up different objects by watching thousands of instructional YouTube videos. The robot relies on two separate systems: one learns to recognize different objects, and the other identifies different types of grasp.

Watching thousands of YouTube videos may sound time-consuming, but the learning approach is more efficient than programming a robot to handle countless different items, and it can enable a robot to deal with an object it has not seen before. The learning systems used for the grasping work relied on artificial neural networks, which have seen rapid progress in recent years and are now being used in many areas of robotics.

The researchers are talking to several manufacturing companies, including an electronics business and a carmaker, about adapting the technology for use in factories. These companies want to find ways to speed up the process by which engineers reprogram their machines. “At many companies it normally takes a month and a half or more to reprogram a robot,” Yang says. “So what are the current AI capabilities we can use to shorten this span, maybe even in half?”

The project reflects two trends in robotics: one is finding new approaches to learning, and the other is robots working in close proximity with people. Like other groups, the Maryland researchers want to connect actions to language to improve robots' ability to parse spoken or written instructions (see "Robots Learn to Make Pancakes from WikiHow Articles").

Other academics are also investigating ways of making robots that can learn. A group led by Pieter Abbeel at the University of California, Berkeley, is exploring ways for robots to learn through experimentation. Julie Shah, a professor at MIT, is developing ways for robots to learn not only how to perform a task, but also how to collaborate more effectively with human coworkers (see “Innovators Under 35: Julie Shah”).