Oculus Rift Hack Transfers Your Facial Expressions onto Your Avatar

Virtual reality is set to get a vital dash of social reality.

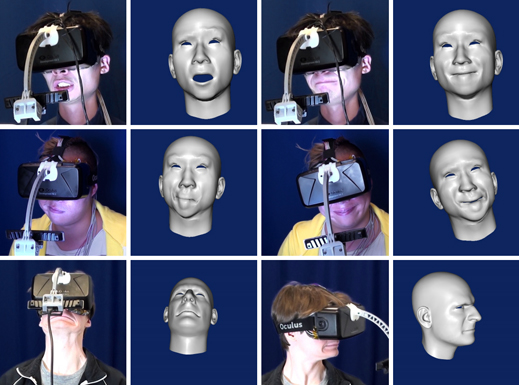

Researchers at the University of Southern California and Facebook’s Oculus division have demonstrated a way to track the facial expressions of someone wearing a virtual-reality headset and transfer them to a virtual character. That could make for much more rewarding socializing, work, or play in virtual worlds, because the expression of a virtual body double or otherworldly avatar could perfectly mimic that of a person’s real face.

The system tracks the motion of a person’s mouth using a 3-D camera attached to the headset with a short boom. Movements of the upper part of the face are measured using strain gauges added to the foam padding that fits the headset to the face. After the two data sources are combined, an accurate 3-D representation of the user’s facial movements can be used to animate a virtual character, whether that’s an ersatz person or something other than human.

That could make inhabiting and interacting in virtual worlds more compelling, says Hao Li, an assistant professor at the University of Southern California who led the project. “To get a virtual social environment, you want to convey this behavior to other people,” he says. “This is the first facial tracking that has been demonstrated through a head-mounted display.” Li was named one of MIT Technology Review’s Young Innovators in 2013.

Facebook CEO Mark Zuckerberg has said he’s interested in the Oculus Rift headset providing new ways to socialize, although he hasn’t divulged any details. The social network acquired Oculus last year (see “What Zuckerberg Sees in Oculus Rift”).

Li says that the Oculus researchers worked with him purely as a research exercise, but that it wouldn’t be too difficult to polish the system they came up with and make it a commercial product. “If people think this is really central to important killer applications, you could get it into production relatively quickly,” he says.

“This is really cool,” says Philip Rosedale, who previously founded Second Life and is now CEO of a virtual worlds startup called High Fidelity. The company is working on enabling realistic virtual social interaction by using webcams and other sensor technology to track facial expressions and arm and hand gestures (see “The Quest to Put More Reality in Virtual Reality”).

There’s good evidence that making people’s avatars display their real-world body language helps them connect to other people in a virtual world, says Rosedale. He agrees with Li that it should be possible to streamline the first proof of concept. The camera could be integrated into the underside of the headset, for example. Efforts by researchers and startups to put eye-tracking cameras inside virtual reality headsets might offer an alternative way to gather data on the upper part of the face.

The linchpin of Li’s system is software that can combine data from the sensors tracking the upper and lower parts of the face and match the result onto a 3-D model of a face.

For now, that software requires a brief calibration process the first time you use the system. First you give your face muscles a 10-second workout, contorting them into a few different expressions in front of a 3-D camera while wearing a headset with the display part removed, so that the camera gets a full view of your face. Then you don the complete headset and give your face a workout for another 10 seconds. The data collected helps the software figure out how to correctly match the streams of data from your upper and lower face.
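To make the idea of calibration concrete, here is a minimal sketch in Python of how paired samples could be used to map a strain-gauge reading to an expression weight and then merge it with camera-tracked values for the lower face. It is an illustration only, not Li’s software: the article does not describe the actual algorithm, and the linear fit, the function names (`fit_line`, `fuse`), the expression names (`brow_raise`, `jaw_open`), and all of the numbers are assumptions made for this example.

```python
from statistics import mean


def fit_line(readings, targets):
    """Least-squares fit of: target ~= gain * reading + offset."""
    r_mean, t_mean = mean(readings), mean(targets)
    var = sum((r - r_mean) ** 2 for r in readings)
    if var == 0:
        raise ValueError("calibration readings must vary")
    cov = sum((r - r_mean) * (t - t_mean) for r, t in zip(readings, targets))
    gain = cov / var
    return gain, t_mean - gain * r_mean


def clamp01(x):
    """Keep an expression weight inside the range 0..1."""
    return max(0.0, min(1.0, x))


def fuse(upper_models, gauge_readings, lower_weights):
    """Merge calibrated upper-face estimates with lower-face camera weights."""
    weights = dict(lower_weights)
    for name, (gain, offset) in upper_models.items():
        weights[name] = clamp01(gain * gauge_readings[name] + offset)
    return weights


# Hypothetical calibration samples: gauge readings paired with the
# brow-raise weight observed for the same expressions.
brow_model = fit_line([0.10, 0.30, 0.50], [0.0, 0.5, 1.0])

# One hypothetical live frame: a gauge reading for the upper face and a
# camera-tracked weight for the lower face, combined for the avatar.
frame = fuse({"brow_raise": brow_model}, {"brow_raise": 0.40}, {"jaw_open": 0.2})
```

A real system would presumably handle many sensors and expressions at once and a more capable model; the one-gauge linear fit here is only meant to show why a short session of paired samples is enough to relate the two data streams.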

Li says he’s working to eliminate that step by feeding his software more data on different faces. He is also working on other techniques that could make it easier to copy your real self into a virtual world. Many tools have already been developed to create 3-D replicas of people’s bodies and faces using conventional and 3-D cameras. Li recently made a system that tackles the more challenging task of making a realistic 3-D re-creation of a person’s hairstyle. His software can do it using only a single photo. That project and the face-tracking research will both be presented at the Siggraph computer graphics conference in Los Angeles this August.
