Google Researchers Make Quantum Computing Components More Reliable

Researchers at Google and the University of California, Santa Barbara, have demonstrated a solution to one of the key problems holding back the development of quantum computers. Many more problems remain to be solved, but experts in the field say the result is an important step toward a fully functional quantum computer, a machine that could perform calculations that would take a conventional computer millions of years to complete.

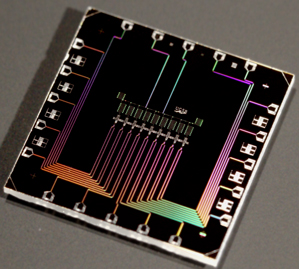

The Google and UCSB researchers showed they could program groups of qubits (devices that represent information using fragile quantum states) to detect certain kinds of errors and prevent those errors from ruining a calculation. The advance comes from researchers led by John Martinis, a professor at the University of California, Santa Barbara, who joined Google last year to set up a quantum computing research lab (see “Google Launches Effort to Build Its Own Quantum Computer”). Martinis now holds a joint position at UCSB and Google, leading work on superconducting aluminum chips that operate at a fraction of a degree above absolute zero. Most of the work behind the new results, reported today in the journal Nature, took place before Martinis joined Google.

Google has been exploring quantum computing since 2009, when it began collaborating with D-Wave Systems, a startup that sells what it calls “the first commercial quantum computer” (see “The CIA and Jeff Bezos Bet on Quantum Computing”). Microsoft also has a sizable quantum computing research program (see “Microsoft’s Quantum Mechanics”).

Building a quantum computer requires wiring together many qubits so they can process information jointly. But qubits are error-prone because they represent bits of data (0s and 1s) using delicate quantum-mechanical effects that are observable only at extremely cold temperatures and tiny scales. These effects let qubits enter “superposition states” that are effectively both 0 and 1 at the same time, allowing quantum computers to take shortcuts through complex calculations. They also make qubits vulnerable to heat and other disturbances that distort or destroy the quantum states used to encode information and perform calculations.

Much quantum computing research focuses on getting systems of qubits to detect and fix errors. Martinis’s group has demonstrated part of one of the most promising schemes for doing this, an approach known as surface codes. The researchers programmed a chip with nine qubits so that the qubits monitored one another for errors called “bit flips,” in which environmental noise causes a 1 to flip to a 0 or vice versa. The qubits could not correct bit flips, but they could take action to ensure that the errors did not contaminate later steps of an operation.
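To make the idea of qubits “monitoring one another” for bit flips more concrete, here is a toy classical sketch in Python. It assumes a one-dimensional repetition code with five data bits and four neighbor-parity checks, a layout assumed here for illustration to account for nine qubits; the article does not describe the chip’s layout. The sketch models only classical bits, so it says nothing about superposition or phase errors, and every function name is hypothetical. The parity checks only detect errors; the majority-vote step stands in for classical processing done afterward on a conventional computer.

```python
import random


def encode(logical_bit, n_data=5):
    """Repetition encoding: copy one logical bit onto n_data physical bits."""
    return [logical_bit] * n_data


def apply_bit_flips(data, p, rng):
    """Toy noise model: flip each bit independently with probability p."""
    return [bit ^ int(rng.random() < p) for bit in data]


def measure_syndrome(data):
    """Return the parity of each neighboring pair of data bits.

    A 1 means the two bits disagree, so an error sits on one of them.
    This plays the role of the checking qubits: it flags a mismatch
    without revealing the encoded value itself.
    """
    return [data[i] ^ data[i + 1] for i in range(len(data) - 1)]


def decode_majority(data):
    """Classical post-processing: recover the logical bit by majority vote."""
    return int(sum(data) > len(data) // 2)


def logical_error_rate(p, trials, n_data=5, rng=None):
    """Estimate how often majority voting returns the wrong logical bit."""
    if rng is None:
        rng = random.Random()
    failures = 0
    for _ in range(trials):
        noisy = apply_bit_flips(encode(0, n_data), p, rng)
        failures += decode_majority(noisy) != 0
    return failures / trials


if __name__ == "__main__":
    data = encode(0)
    data[2] ^= 1                   # inject one bit flip on the middle bit
    print(measure_syndrome(data))  # [0, 1, 1, 0]: checks on both sides fire
    print(decode_majority(data))   # 0: the logical bit survives
    print(logical_error_rate(0.05, 10_000, rng=random.Random(42)))
```

With a 5 percent chance of flipping each bit, the vote fails only when at least three of the five bits flip, so the estimated logical error rate should land around 0.001, well below the physical error rate. A real device must also cope with errors in the checks themselves, which this sketch ignores.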

“More work needs to be done before we can say that all the elements required for fault-tolerant quantum computation are in place, but I do think this work shows that we are close,” says Daniel Gottesman, who works on quantum error correction at the Perimeter Institute in Waterloo, Ontario.

The elements still required are not trivial, though. The bit flips that Martinis and colleagues took on can be addressed using classical algorithms that run on a conventional computer. A trickier kind of error, in which environmental noise alters a quantum property of a qubit known as “phase,” can be tackled only with more complex algorithms that exploit quantum effects. Austin Fowler, a quantum electronics engineer with Google, says the group is now working on phase errors and on demonstrating error checking on more than nine qubits.
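The difference between the two kinds of error can be written in standard textbook notation (not taken from the article), where a qubit state is a superposition with complex amplitudes α and β:

```latex
\begin{aligned}
|\psi\rangle &= \alpha|0\rangle + \beta|1\rangle, \qquad |\alpha|^2 + |\beta|^2 = 1 \\
\text{bit flip } (X):\quad X|\psi\rangle &= \alpha|1\rangle + \beta|0\rangle \\
\text{phase flip } (Z):\quad Z|\psi\rangle &= \alpha|0\rangle - \beta|1\rangle
\end{aligned}
```

A bit flip swaps 0 and 1, the same mistake an ordinary bit can suffer, which is why repetition and parity checks handle it. A phase flip changes only the relative sign between the two components; the probabilities |α|² and |β|² are unchanged, so checks that look only at 0s and 1s cannot see it, and catching it requires measurements that exploit quantum effects.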

Still, recent results from Martinis and others make Gottesman optimistic that the full set of error correction techniques is within reach. “I think there is a good chance we will see such a demonstration by someone, possibly the Martinis group, within the next few years,” he says.
