Mathematical Proof Reveals How To Make The Internet More Earthquake-Proof

One of the common myths about the internet is that it was originally designed during the Cold War to survive nuclear attack. Historians of the internet are quick to point out that this was not at all one of the design goals of the early network, although the decentralised nature of the system turns out to make it much more robust than any kind of centralised network.

Nevertheless, the internet is still vulnerable. For example, the magnitude 9 earthquake and resulting tsunami that struck Japan on 11 March 2011 caused huge damage to the Japanese telecommunications infrastructure.

The Japanese telecom company NTT says it lost 18 exchange buildings and 65,000 telegraph poles in the disaster, which also damaged 1.5 million fixed line circuits and 6300 kilometres of cabling.

That raises an interesting question: could the spatial layout of the internet be made any more robust against this kind of damage?

Today we get an answer of sorts thanks to the work of Hiroshi Saito at NTT’s Network Technology Laboratories in Tokyo. Saito has calculated how the likelihood of a network being damaged in a disaster depends on its spatial geometry. In other words, he’s worked out how the shape of the network determines its chances of coming to grief.

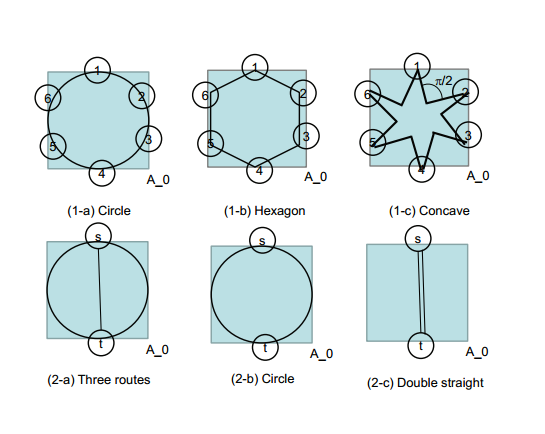

Saito begins by imagining a network that contains a finite area in which a disaster such as an earthquake occurs. The nodes within this area then have a certain probability of failing. So the question is how best to organise the nodes to minimise the probability that they cross a disaster area should one occur.

The secret mathematical sauce that Saito uses to prove his results is a discipline known as integral geometry. He uses this deftly to prove a number of handy rules of thumb that network scientists can easily exploit. “This theoretical method explicitly reveals physical network design rules robust against earthquakes,” he says.

For example, a route passing through several nodes inevitably traces out a zigzag shape. One rule is that shorter zigzags reduce the probability that a network intersects a disaster area. Another is that if the network forms a ring, then additional routes within the ring cannot reduce the probability that all the routes between a pair of nodes intersect the disaster area.
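The zigzag rule can be illustrated with a rough simulation. The sketch below is not Saito's integral-geometry proof. It assumes a circular disaster area dropped uniformly at random inside a square region, and it uses two made-up routes between the same pair of end points: one straight, one zigzag. The function names, coordinates and radius are all hypothetical choices for illustration.

```python
import math
import random


def point_segment_distance(p, a, b):
    """Shortest distance from point p to the line segment a-b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0.0:
        return math.hypot(px - ax, py - ay)
    # Project p onto the segment and clamp to its end points.
    t = ((px - ax) * dx + (py - ay) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def route_length(route):
    """Total length of a route given as a list of (x, y) nodes."""
    return sum(math.dist(a, b) for a, b in zip(route, route[1:]))


def hit_probability(route, radius, side, trials, seed=0):
    """Monte Carlo estimate of the chance that a disc-shaped disaster
    area (assumed shape) touches the route. Disc centres are sampled
    uniformly so that the disc lies entirely inside a side x side square."""
    rng = random.Random(seed)
    segments = list(zip(route, route[1:]))
    hits = 0
    for _ in range(trials):
        centre = (rng.uniform(radius, side - radius),
                  rng.uniform(radius, side - radius))
        if any(point_segment_distance(centre, a, b) <= radius
               for a, b in segments):
            hits += 1
    return hits / trials


# Two hypothetical routes joining the same end points.
straight = [(1.0, 5.0), (9.0, 5.0)]
zigzag = [(1.0, 5.0), (3.0, 8.0), (5.0, 2.0), (7.0, 8.0), (9.0, 5.0)]

p_straight = hit_probability(straight, radius=1.0, side=10.0, trials=20000)
p_zigzag = hit_probability(zigzag, radius=1.0, side=10.0, trials=20000)

print(f"straight: length {route_length(straight):.2f}, hit estimate {p_straight:.3f}")
print(f"zigzag:   length {route_length(zigzag):.2f}, hit estimate {p_zigzag:.3f}")
```

Under these assumptions the longer zigzag route should be hit noticeably more often than the straight one, which is the direction the rule predicts. A real analysis would use measured intensity maps rather than discs.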

He goes on to test these ideas using the intensity data from several real earthquakes in Japan, all greater than magnitude 5 (on the Japanese scale of 7). These included the 1995 Kobe earthquake, which is the second most destructive in Japanese history.

(But it doesn’t include the 2011 quake data since it occurred under the sea and the disaster area cannot be completely enclosed within the telecoms network. This earthquake violates one of the basic mathematical assumptions in his proofs.)

He then superimposed various networks on the earthquake data and tested to see how they would fare. Sure enough, the results showed that his theoretical ‘rules of thumb’ ought to work just as well in the real world as they do in theory. “The analysis results are validated through empirical earthquake data,” he says.

That’s an entirely new way to think about network reliability. Today, engineers design networks so that they are, first, protected from damage and, second, relatively easy to restore should they become damaged.

But Saito’s approach is more ambitious. “The proposed design method is the first step in disaster management aiming at disaster avoidance,” he says. In other words, better to miss the disaster altogether, if possible.

Interesting stuff. Earthquakes have a long history of encouraging innovation in telecoms networks. For example, Japan dramatically increased the number of microwave links in its network after a large earthquake in 1968 and started using mobile stations to communicate with telecoms satellites after another in 1993. Could Saito’s ideas also become mainstream?

Ref: arxiv.org/abs/1402.6835 : Spatial Design of Physical Network Robust Against Earthquakes
