Syrian Web Censorship Techniques Revealed

Back in October 2011, a group of hackers and net activists called Telecomix leaked logs showing exactly how Syrian authorities were monitoring and filtering internet traffic within the country. The logs comprised 600 GB of data representing 750 million requests on the web and showing exactly which requests were allowed and which were denied.

Today, Abdelberi Chaabane at Inria in France and a few pals publish the first detailed analysis of this data, revealing exactly how the traffic was filtered, which IP addresses and websites were blocked and which keywords were targeted for filtering. What their work reveals is unique. These logs provide a snapshot of a real-world censorship ecosystem, the first time this kind of detail has become available from an authoritarian regime.

Internet researchers have a long history of studying censorship. In general, they’ve done this by generating traffic and observing what kind of content is blocked in countries such as China and Iran. That allows them to infer what information is being censored.

But these techniques have important limitations. For example, researchers can only ever generate a limited amount of test traffic, so the results give a skewed picture of censorship policies. It’s also hard to measure what proportion of the overall traffic is being censored. By contrast, the censorship logs from Syria provide precise insight into both these issues.

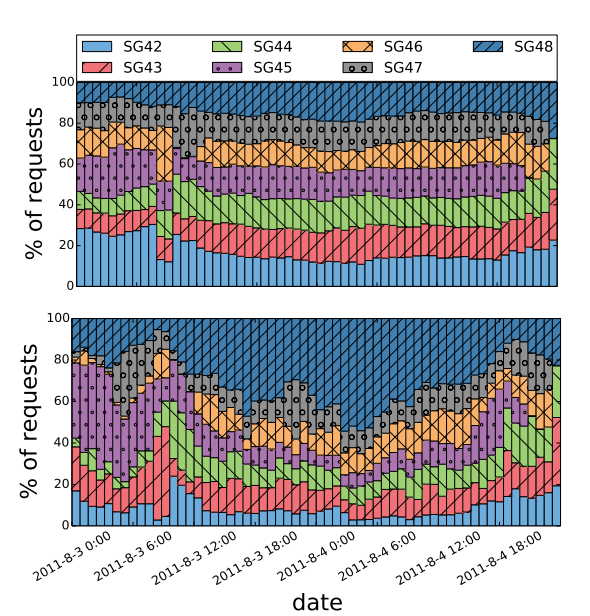

The data comes from seven Blue Coat SG-9000 proxies that Syrian authorities were using to monitor, filter and block traffic within the country. These devices are usually placed between a monitored network and the internet backbone, where they are capable of intercepting traffic transparently without anybody noticing.

Blue Coat SG-9000 proxies perform essentially three functions. When they receive a request from a client, they can serve the original content, they can serve up a result from a stored cache or they can deny the request.
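
The three outcomes described above can be pictured as a simple decision function. This is only a rough sketch of the idea, not Blue Coat's actual logic: the `cache` dictionary, the `is_blocked` callback and the order of the checks are all assumptions made for illustration.

```python
from enum import Enum


class Action(Enum):
    SERVE_ORIGIN = "serve original content"
    SERVE_CACHE = "serve cached copy"
    DENY = "deny request"


def handle_request(url, cache, is_blocked):
    """Pick one of the three actions the article describes.

    `cache` is a hypothetical dict mapping URL -> stored response and
    `is_blocked` is a hypothetical policy callback; neither reflects
    the real appliance's interfaces.
    """
    if is_blocked(url):      # policy says no: refuse the request
        return Action.DENY
    if url in cache:         # a stored copy exists: answer from the cache
        return Action.SERVE_CACHE
    return Action.SERVE_ORIGIN  # otherwise fetch from the origin server
```

For instance, `handle_request("http://example.org/", {}, lambda u: False)` returns `Action.SERVE_ORIGIN`, because the URL is neither blocked nor cached.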

The Syrian logs show exactly what the proxies did over a period of nine days in July and August 2011. Because of the huge size of the dataset (600 GB), Chaabane and co conduct much of their analysis on a randomly chosen sample consisting of about 32 million requests, roughly 4% of the total.
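
As a quick check on those figures, and to show what drawing such a sample might look like, here is a minimal sketch. The two constants are the numbers quoted in this article; the `sample_lines` helper is hypothetical and uses simple per-line random selection, which may well differ from how the researchers actually drew their sample.

```python
import random

TOTAL_REQUESTS = 750_000_000   # total requests quoted in the article
SAMPLE_REQUESTS = 32_000_000   # approximate sample size quoted in the article

# Share of the full dataset covered by the sample (about 4.3%).
sample_fraction = SAMPLE_REQUESTS / TOTAL_REQUESTS
print(f"sample fraction: {sample_fraction:.1%}")


def sample_lines(lines, fraction, seed=0):
    """Keep each log line independently with probability `fraction`.

    Hypothetical helper for illustration; the sample size it returns
    is random and only approximately `fraction` of the input.
    """
    rng = random.Random(seed)
    return [line for line in lines if rng.random() < fraction]
```

Selecting line by line like this means the 600 GB of logs would never need to be loaded into memory at once if `lines` were read lazily from disk.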

Their analysis of this data reveals some surprising facts. It turns out the Syrians were censoring only a small fraction of the traffic, less than 1 per cent. “The vast majority of requests is either allowed (93.28%) or denied due to network errors (5.37%),” say Chaabane and co.

But this 1 per cent shows exactly how Syrian authorities conducted censorship at that time. “We found that censorship is based on four main criteria: URL-based filtering, keyword-based filtering, destination IP address, and a custom category-based censorship,” say Chaabane and co.
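
To make the four criteria concrete, here is a minimal sketch of how a filter might combine them. Everything in it is an assumption for illustration: the rule sets are placeholders rather than the real Syrian blacklists (apart from the keyword "proxy", which the logs show was blocked), URL-based filtering is approximated by a hostname match, and the order of the checks is arbitrary.

```python
import ipaddress
from urllib.parse import urlsplit

# Hypothetical rule sets for illustration only.
BLOCKED_HOSTS = {"blocked.example"}
BLOCKED_KEYWORDS = {"proxy"}
BLOCKED_SUBNETS = [ipaddress.ip_network("203.0.113.0/24")]
BLOCKED_CATEGORIES = {"blocked-category"}


def censor_reason(url, dest_ip, category=None):
    """Return which of the four criteria matches, or None if allowed."""
    host = (urlsplit(url).hostname or "").lower()

    # 1. URL-based filtering (approximated here by host or subdomain match).
    if host in BLOCKED_HOSTS or any(host.endswith("." + h) for h in BLOCKED_HOSTS):
        return "url"

    # 2. Keyword-based filtering: a blacklisted string anywhere in the URL.
    lowered = url.lower()
    if any(keyword in lowered for keyword in BLOCKED_KEYWORDS):
        return "keyword"

    # 3. Destination IP address inside a blocked subnet.
    addr = ipaddress.ip_address(dest_ip)
    if any(addr in net for net in BLOCKED_SUBNETS):
        return "ip"

    # 4. Custom category assigned to the request.
    if category in BLOCKED_CATEGORIES:
        return "category"

    return None
```

With these placeholder rules, `censor_reason("http://ok.example/?q=proxy", "198.51.100.1")` returns `"keyword"`, while the same request without the query string is allowed and returns `None`.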

The Syrians concentrated their URL-based filtering on instant messaging software such as Skype, which is heavily censored. And many of the blocked keywords and domains relate to political news content as well as video sharing and censorship-circumvention technologies.

Israel features strongly in the logs. “Israeli-related content is heavily censored as the keyword Israel, the .il domain, and some Israeli subnets are blocked,” say Chaabane and co.

Social media is also an interesting example. Following the Arab Spring in 2010, the Syrian authorities decided to allow access to Facebook, Twitter and YouTube in February 2011, fearing the possibility of unrest if they censored them wholesale.

The logs show that censorship of social networking sites is relatively light-touch: most traffic on networks like Facebook and Twitter is allowed unless it contains blacklisted words, such as ‘proxy’. Also, a few specific pages are blocked, such as the “Syrian Revolution” Facebook page.

Overall, this is a fascinating study that provides the first detailed insight into state-sponsored censorship on the web. At least as it was operated in 2011.

An interesting question is how censorship operates today in Syria after two years of civil war. Chaabane and co say that the Syrian authorities invested $500k in additional surveillance equipment in 2011, suggesting that a more powerful filtering architecture may have been put in place since this log was leaked.

But unless there are other leaks of the same kind of data, we will probably never know how Syrian censorship has changed over this time.

An interesting corollary to this work is that Chaabane and co acknowledge that their analysis could be beneficial to both sides of the censorship battle. And they have this to say on the matter: “We believe that our analysis is crucial to better understand the technical aspects of a real-world censorship ecosystem, and that our methodology exposes its underlying technologies, policies, as well as its strengths and weaknesses (and thus can facilitate the design of censorship-evading tools).”

The alternative would be not to publish their results. And that, of course, would be a form of censorship in itself.

Ref: arxiv.org/abs/1402.3401: Censorship in the Wild: Analyzing Web Filtering in Syria
