Intel Robot Puts Touch Screens through Their Paces

In a compact lab at Intel’s Silicon Valley headquarters, Oculus the robot is playing the hit game Cut the Rope on a smartphone. Using two fingers with rubbery pads on the ends, the robot crisply taps and swipes with micrometer precision through a level of the physics-based puzzler. It racks up a perfect score.

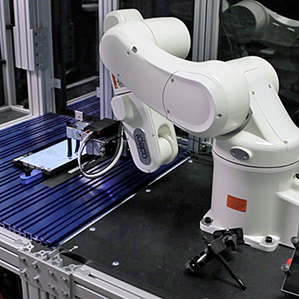

It’s a far cry from the menial work that Oculus’s robot arm was designed for: moving silicon wafers around in a chip fab. But it’s not just a party trick. Intel built Oculus to try to empirically test the responsiveness and “feel” of a touch screen to determine if humans will like it.

Oculus does that by analyzing how objects on a device’s screen respond to its touch. It “watches” the devices that it holds via a Hollywood production camera made by Red that captures video at 300 frames per second in higher than HD resolution. Software uses the footage to measure how a device reacts to Oculus—for example, how quickly and accurately the line in a drawing program follows the robot’s finger, how an onscreen keyboard responds to typing, or how well the screen scrolls and bounces when Oculus navigates a long list.
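To give a rough sense of the arithmetic behind such a measurement, here is a minimal sketch that converts a gap between two video frames into a delay in milliseconds. It assumes only the 300-frames-per-second figure quoted above. The function names and the frame indices are hypothetical, invented for illustration, and this is not Intel's software, whose details the company has not published.

```python
FPS = 300  # frame rate quoted in the article; everything else is assumed


def frames_to_ms(frame_count, fps=FPS):
    """Convert a number of video frames to elapsed milliseconds."""
    return frame_count * 1000.0 / fps


def touch_latency_ms(finger_frame, response_frame, fps=FPS):
    """Delay between the frame where the finger moves and the frame
    where the screen visibly reacts (both indices are hypothetical inputs)."""
    if response_frame < finger_frame:
        raise ValueError("response cannot precede the touch")
    return frames_to_ms(response_frame - finger_frame, fps)


# Hypothetical frame indices, made up for this example only.
latency = touch_latency_ms(finger_frame=120, response_frame=135)
print(f"one frame = {frames_to_ms(1):.2f} ms, latency = {latency:.1f} ms")
# prints: one frame = 3.33 ms, latency = 50.0 ms
```

At 300 frames per second, each frame spans about 3.3 milliseconds, so that is the smallest step a frame-counting approach like this one can distinguish.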

Numerical scores are converted into a rating between one and five using data from cognitive psychology experiments conducted by Intel to discover what people like in a touch interface. For those experiments, hundreds of people used touch screens set up to have different levels of responsiveness. These tests were devised by psychologists in Intel’s interaction and experience group, which studies the relationships people have with computers (see “Intel Anthropologist Questions the Smart Watch”).

The scores produced by Oculus and the psychological research have proved valuable to engineers at companies working on touch screen devices based around Intel chips. They’ve also been useful to Intel’s chip designers, says Matt Dunford, the company’s user experience manager. “We can predict precisely whether a machine will give people a good experience,” he says, “and give them numbers to say what areas need improving.”

The conventional approach would be to have a user experience expert test a touch screen and give an expert but personal assessment, says Dunford. That doesn’t always offer a specific indication of what needs to be tweaked to improve the feel of a device.

Intel won’t share specific details of how it defines the difference between a touch screen that is sluggish and one that is snappy. But robotics engineer Eddie Raleigh, who helped build Oculus, says a good touch screen follows a swiping finger with only tens of milliseconds of delay.

Intel’s tests on human subjects have also shown that perceptions of quality can vary significantly depending on how people are using a device. People unconsciously raise their standards when using a stylus, for example, says Raleigh. “People are used to pens and pencils, and so it has to be very fast, about one millisecond of delay,” he says. Meanwhile, children generally expect a quicker response from a touch screen than adults, whatever the context.

Raleigh says his team can take such differences into consideration when setting up Oculus for a test. “We can mimic a first-time user who is being slower or someone hopped up on caffeine and really going fast,” he says.

Intel currently has three Oculus robots at work and is completing a fourth. The device can be used on any touch screen device, from a smartphone up to an all-in-one PC. It uses a secondary camera to automatically adjust to new screen sizes.

Intel has also built semi-automated rigs to test the performance of audio systems on phones and tablets. A soundproofed chamber with a dummy head containing speakers and microphones and a camera is used to test the accuracy and responsiveness of voice-recognition and personal assistant apps. A range of sophisticated cameras and imagers is used to check the color a display shows.

Jason Huggins, co-founder and chief technology officer of Sauce Labs, a company that offers phone and Web app testing, says Oculus has secret cousins inside most major phone and tablet makers. “The Samsungs, LGs, and Apples all have these kinds of things, but they don’t talk about it because they don’t want their competitors knowing,” he says. Intel’s Dunford says Oculus represents an improvement on previous devices in the industry because it compares devices using data on how people actually perceive touch screens. Other robots, he says, tend to see how devices perform against certain fixed technical specifications.

Huggins is trying to widen access to such robots because he believes they could help app developers polish their software. He has created an open source design for a robot called Tapster that can operate touch devices using a conventional stylus, with much less finesse than Oculus but at a fraction of the price. Many of the parts can be made on a 3-D printer. Huggins has sold about 40 of the machines and is working on integrating a camera into the design.

“If I can make a robot that can actually test apps, I suspect there’s going to be a serious market,” he says. Software developers currently pay companies like Sauce Labs to test apps using either human workers or software that emulates a phone or Web browser. Huggins says having a robotic third option could be useful, and predicts that robotic testing of all kinds of computing devices will become more common.

“We have to think about this because software is not trapped inside a computer behind a keyboard and mouse anymore,” he says. “You’ve got phones, tablets, Tesla’s 17-inch touch screen, Google Glass, and Leap Motion, where there’s no touching at all. These things depend on people having eyeballs and fingers, so we have to create a robotic version of that.”
