Can Automated Editorial Tools Help Wikipedia’s Declining Volunteer Workforce?

The result is many high quality articles on a huge range of topics in over 200 languages. But there are also articles of poor quality and dubious veracity.

This raises an important question for visitors to the site: how reliable is a given article on Wikipedia?

Today, we get an answer thanks to the work of Xiangju Qin and Pádraig Cunningham at University College Dublin in Ireland. The pair have developed an algorithm that assesses the quality of Wikipedia pages based on the authoritativeness of the editors involved and the longevity of the edits they have made.

“The hypothesis is that pages with significant contributions from authoritative contributors are likely to be high-quality pages,” they say. Given this information, visitors to Wikipedia should be able to judge the quality of any article much more accurately.

Various groups have previously studied the quality of Wikipedia articles and how to measure it. The novelty of this work is in combining existing measures in a new way.

Qin and Cunningham begin with a standard way of measuring the longevity of an edit. The idea here is that a high-quality edit is more likely to survive future revisions. They compute this by combining the size of an edit made by a given author with how long that edit survives subsequent revisions.

Vandalism is a common problem on Wikipedia. To get around this, Qin and Cunningham ignore all anonymous contributions and also take an average measure of quality, which tends to reduce the impact of malicious edits.
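The longevity idea described above can be illustrated with a minimal sketch. It is not the formula from the paper: the record format, the function names `edit_longevity` and `contributor_scores`, and the choice to multiply edit size by the average surviving fraction are all assumptions made for illustration.

```python
from collections import defaultdict
from statistics import mean

def edit_longevity(words_added, survival):
    """Edit size weighted by the mean fraction of the edit that
    survives each later revision (an assumed, simplified measure)."""
    if not survival:
        return 0.0
    return words_added * mean(survival)

def contributor_scores(edits):
    """Average longevity per registered author.

    `edits` is a list of hypothetical records:
    (author, words_added, [surviving fraction after each later revision]).
    An author of None marks an anonymous edit, which is ignored.
    """
    per_author = defaultdict(list)
    for author, words_added, survival in edits:
        if author is None:
            continue  # skip anonymous contributions
        per_author[author].append(edit_longevity(words_added, survival))
    # Averaging damps the effect of a single bad (or malicious) edit.
    return {author: mean(values) for author, values in per_author.items()}

# Toy data, invented for this example.
edits = [
    ("alice", 100, [1.0, 0.9, 0.8]),  # large edit that mostly survives
    ("bob", 50, [0.5, 0.0, 0.0]),     # edit that is quickly removed
    (None, 200, [0.0]),               # anonymous edit, ignored
    ("alice", 20, [1.0, 1.0]),        # small edit that survives intact
]
scores = contributor_scores(edits)
```

In this toy data, alice's edits persist and bob's edit is quickly removed, so alice ends up with the higher average score.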

Next, they measure the authority of each editor. Wikipedia is well known for having a relatively small number of dedicated editors who play a fundamental role in the community. These people help to maintain various editorial standards and spread this knowledge throughout the community.

In this community, Qin and Cunningham assume a link exists between two editors if they have co-authored the same article. So, inevitably, more experienced editors tend to be better connected in the network.
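A co-authorship network of this kind can be sketched in a few lines. The input format (a mapping from article title to the editors who worked on it) and the function name `coauthorship_graph` are assumptions for illustration, not details taken from the paper.

```python
from itertools import combinations

def coauthorship_graph(article_editors):
    """Build an undirected graph as {editor: set of linked editors}.

    Two editors are linked if they appear together on at least
    one article.
    """
    graph = {}
    for editors in article_editors.values():
        unique = sorted(set(editors))
        for editor in unique:
            graph.setdefault(editor, set())  # keep editors with no links
        for a, b in combinations(unique, 2):
            graph[a].add(b)
            graph[b].add(a)
    return graph

# Toy data, invented for this example.
article_editors = {
    "Dublin": ["alice", "bob"],
    "Ireland": ["alice", "carol", "dave"],
    "Galway": ["erin"],
}
graph = coauthorship_graph(article_editors)
```

Here alice has worked on two articles and is linked to three other editors, while erin, who has only edited alone, has no links at all.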

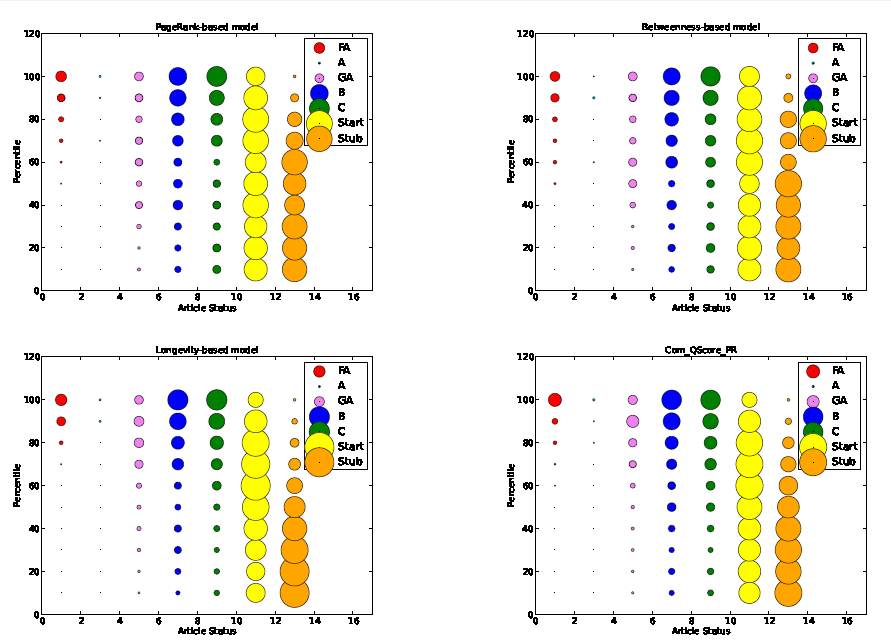

There are various ways of measuring authority. Qin and Cunningham look at the number of other editors a given editor is linked to. They assess the proportion of shortest paths across the network that pass through a given editor. And they use an iterative PageRank-type algorithm to measure authority. (PageRank is the Google algorithm in which a webpage is considered important if other important webpages point to it.)
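The three measures described here are commonly known as degree centrality, betweenness centrality and PageRank. The sketch below computes simple, unnormalized versions of each on a toy network. The graph format, the function names, the damping factor of 0.85 and the fixed iteration count are assumptions for illustration; the paper's exact variants and normalizations may differ.

```python
from collections import deque

def degree_centrality(graph):
    """Number of other editors each editor is linked to."""
    return {v: len(nbrs) for v, nbrs in graph.items()}

def betweenness_centrality(graph):
    """Brandes' algorithm for an unweighted, undirected graph.
    Returns unnormalized counts of shortest paths through each node."""
    score = {v: 0.0 for v in graph}
    for s in graph:
        stack, queue = [], deque([s])
        preds = {v: [] for v in graph}
        sigma = {v: 0 for v in graph}  # number of shortest paths from s
        sigma[s] = 1
        dist = {s: 0}
        while queue:  # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in graph[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in graph}
        while stack:  # accumulate dependencies, farthest nodes first
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Each undirected pair was counted once from each endpoint.
    return {v: b / 2 for v, b in score.items()}

def pagerank(graph, damping=0.85, iterations=100):
    """Power iteration: an editor is important if linked to important editors."""
    n = len(graph)
    rank = {v: 1.0 / n for v in graph}
    for _ in range(iterations):
        new = {v: (1 - damping) / n for v in graph}
        for v, nbrs in graph.items():
            # An isolated node spreads its rank evenly over all nodes.
            targets = nbrs if nbrs else graph
            share = damping * rank[v] / len(targets)
            for w in targets:
                new[w] += share
        rank = new
    return rank

# Toy network, invented for this example: bob is linked to everyone else.
graph = {
    "alice": {"bob"},
    "bob": {"alice", "carol", "dave"},
    "carol": {"bob"},
    "dave": {"bob"},
}
degree = degree_centrality(graph)
between = betweenness_centrality(graph)
rank = pagerank(graph)
```

In this star-shaped network all three measures agree that bob is the most central editor, since every shortest path between the other editors runs through him. On real networks the three rankings can differ.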

Finally, Qin and Cunningham combine these metrics of longevity and authority to produce a measure of the article quality.
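The article does not spell out how the two metrics are combined. One plausible way, shown here purely as an assumption, is to weight each editor's longevity-based contribution to a page by that editor's authority and sum the results. The function name `article_quality` and all the numbers are hypothetical.

```python
def article_quality(contributions, authority):
    """Authority-weighted sum of contributions to one article.

    contributions: {editor: longevity-based contribution to this page}
    authority:     {editor: authority score from the editor network}
    Editors with no known authority score contribute nothing.
    """
    return sum(
        amount * authority.get(editor, 0.0)
        for editor, amount in contributions.items()
    )

# Toy values, invented for this example.
authority = {"alice": 0.5, "bob": 0.1}
page_a = {"alice": 55.0, "bob": 10.0}  # mostly written by alice
page_b = {"alice": 10.0, "bob": 55.0}  # mostly written by bob

quality_a = article_quality(page_a, authority)
quality_b = article_quality(page_b, authority)
```

Under this scheme, the page with more surviving text from the higher-authority editor receives the higher score, which matches the hypothesis quoted earlier.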

To test the effectiveness of their assessment, they used their algorithm to assess the quality of over 9,000 articles that have already been assessed by Wikipedia editors. They say that the longevity of an edit by itself is already a good indicator of the quality of an article. However, taking into account the authority of the editors generally improves the assessment.

“Articles with significant contributions from authoritative contributors are likely to be of high quality, and that high-quality articles generally involve more communication and interaction between contributors,” they conclude.

There are some limitations, of course. A common type of edit, known as a revert, changes an article to its previous version, thereby entirely removing an edit. This is often used to get rid of vandalism. “At present, we do not have any special treatment to deal with reverted edits that do not introduce new content to a page,” admit Qin and Cunningham. So there’s work to be done in future.

However, the new approach could be a useful tool in a Wikipedia editor’s armory. Qin and Cunningham suggest that it could help to identify new articles that are of relatively good quality and also identify existing articles that are of particularly low quality and so require further attention.

With the well-documented decline in Wikipedia’s volunteer workforce, automated editorial tools are clearly of value in reducing the workload for those who remain. A broader question is how good these tools can become and how they should be used in these kinds of crowd-sourced endeavors.

Ref: arxiv.org/abs/1206.2517: Assessing The Quality Of Wikipedia Pages Using Edit Longevity And Contributor Centrality
