Cyborg Astrobiologist Put Through its Paces in West Virginian Coalfields

The search for life on other planets is hotting up. The seemingly endless train of Mars rovers has found convincing evidence of a warmer and wetter climate on Mars. The Huygens and Cassini spacecraft have found lakes, beaches, rivers and rain on Titan (albeit of the oily variety). And Europa’s dark, warm ocean looks increasingly inviting for astrobiologists.

Then there are the ever-increasing hordes of exoplanets in the habitable zones around other stars. There has never been a better time to be an astrobiologist.

One problem that this new breed of scientist faces is data overload. Each image from Mars has to be pored over by a human expert before the rover’s next move can be planned and executed.

And since these images are increasingly numerous, this is a time-consuming task. So a way to automate the classification of these images, at least partially, would be hugely useful.

Step forward Patrick McGuire at the Freie Universität in Berlin, Germany and a few pals who have built and tested an automated system that does just this. They call their new system the cyborg astrobiologist.

The new system is relatively simple. It consists of a Samsung Propel smartphone, which has a camera capable of taking 1280 x 960 pixel images, connected by Bluetooth to a Dell Inspiron 9300 laptop. For the moment, it requires a human helper to carry and point the camera, but it’s not hard to imagine how the system could be fitted to an autonomous rover.

The phone takes photos of the terrain as it moves around, sending them to the laptop for analysis. This is where the clever part takes place.

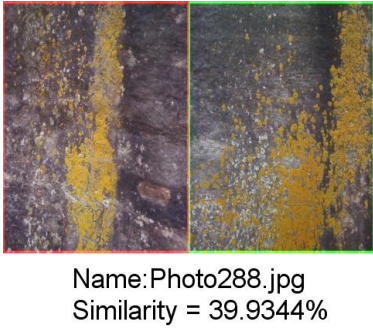

The laptop analyses each photo by comparing it with earlier images it has received and looking for similarities between them. It analyses the colour of the scene and the texture to calculate a similarity score.

In that way, it classifies images of similar rocks and groups them together. This same process also reveals when the images differ significantly, indicating that the terrain has changed or that an object of interest has appeared in the scene. At this point, the system alerts a human astrobiologist who can take over and analyse the novel features in more detail.
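
To make the idea concrete, here is a minimal sketch of how a compression-based similarity score and novelty alert might work. It is not the team's code. It assumes each image patch is available as raw pixel bytes, uses the normalised compression distance with Python's standard `zlib` as a stand-in for whatever compressor and colour features the team actually used, and the names `ncd` and `classify` and the threshold of 0.5 are hypothetical choices for illustration.

```python
import zlib


def compressed_size(data: bytes) -> int:
    """Length in bytes of the zlib-compressed data (maximum compression)."""
    return len(zlib.compress(data, 9))


def ncd(a: bytes, b: bytes) -> float:
    """Normalised compression distance between two byte strings.

    Values near 0 suggest the inputs share most of their structure;
    values near 1 suggest they have little in common.
    """
    ca, cb = compressed_size(a), compressed_size(b)
    cab = compressed_size(a + b)
    return (cab - min(ca, cb)) / max(ca, cb)


def classify(patch: bytes, library: list, threshold: float = 0.5):
    """Match a patch against previously seen patches.

    Returns (index_of_best_match, distance) when something similar has
    been seen before, or (None, distance) when the patch looks novel.
    The threshold is an illustrative assumption, not a published value.
    """
    if not library:
        return None, 1.0  # nothing seen yet, so everything is novel
    distances = [ncd(patch, prior) for prior in library]
    best = min(range(len(distances)), key=distances.__getitem__)
    if distances[best] > threshold:
        return None, distances[best]
    return best, distances[best]
```

In use, each new patch would be passed to `classify` along with the list of patches seen so far. A result of `None` is the cue to alert the human astrobiologist and add the patch to the library; otherwise the patch joins the group of its best match.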

That’s handy because the system does not need to know what type of rock it is looking at but is still able to spot when things get interesting.

These guys tested the system on rocky outcrops in the coalfields of West Virginia and say it is impressive. “The image-matching procedure of this system performed very well…giving a 91% accuracy for similarity detection,” say McGuire and co.

That’s a potentially useful device that could make life much easier for astrobiologists both on Earth and further afield. For example, it could significantly reduce the amount of data a Mars rover would have to send to Earth for analysis and therefore dramatically speed up a rover’s work.

“This image-compression technique could be useful in giving more scientific autonomy to robotic planetary rovers, and in assisting human astronauts in their geological exploration and assessment,” they say.

Astrobiologists have never had it so good. But with systems like this in the works, they could soon have it even better.

Ref: arxiv.org/abs/1309.4024: The Cyborg Astrobiologist: Matching of Prior Textures by Image Compression for Geological Mapping and Novelty Detection
