We Need a Moore’s Law for Medicine

Moore’s Law predicts that every two years the cost of computing will fall by half. That is why we can be sure that tomorrow’s gadgets will be better, and cheaper, too. But in American hospitals and doctors’ offices, a very different law seems to hold sway: every 13 years, spending on U.S. health care doubles.

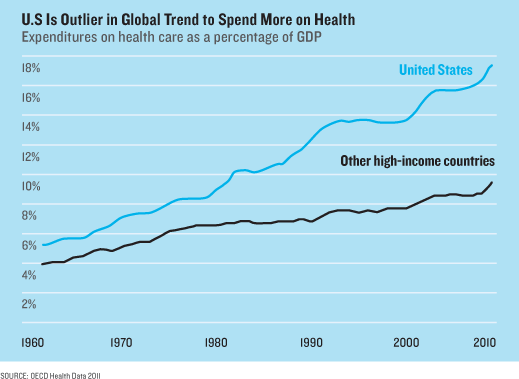

Health care accounts for nearly one in five dollars spent in the United States: 17.9 percent of gross domestic product, up from 4 percent in 1950. And technology has been the main driver of this spending: new drugs that cost more, new tests that find more diseases to treat, new surgical implants and techniques. “Computers make things better and cheaper. In health care, new technology makes things better, but more expensive,” says Jonathan Gruber, an economist at MIT who leads a health-care group at the National Bureau of Economic Research.

Much of the spending has been worth it. While the U.S. spends the most of any country by far, health care is becoming a larger part of nearly every economy. That makes sense. Better medicine is buying longer lives. Yet medical spending is so high in the U.S. that the White House now projects that if it keeps growing, it could, in 25 years, reach a third of the economy and devour 30 percent of the federal budget. That will mean higher taxes. If we can’t accept that, says Gruber, we’re going to need different technology. “Essentially, it’s how do we move from cost-increasing to cost-reducing technology? That is the challenge of the 21st century,” he says.

That is the big question in this month’s MIT Technology Review Business Report. What technologies can save money in health care? As we headed off to find them, Jonathan Skinner, a health economist at Dartmouth College, warned us that they are “as rare as hen’s teeth.”

In an essay we’ll publish this week, Skinner explains why: our system of public and private insurance provides almost no incentive to use cost-effective medicine (see “The Costly Paradox of Health-Care Technology”). In fact, unfettered access to high-cost technology is politically sacrosanct. As part of Obamacare, the government’s restructuring of insurance benefits, the White House established a new federal research institute that will spend $650 million a year studying which medicine works and which doesn’t. But just try finding out whether any of it is cheaper.

According to the law that created the institute, its employees can’t tell you. The institute, a spokesperson told me, is forbidden from considering “costs or cost savings.” It’s not cynical to speculate why. Five of the seven largest lobbying organizations in Washington, D.C., are run by doctors, insurance companies, and drug firms. Slashing spending isn’t high on the agenda.

For cost-saving ideas, you have to look outside the mainstream of the health-care industry, or at least to its edges. In this report we profile Eric Topol, a cardiologist and researcher who is director of the Scripps Translational Science Institute in San Diego and who once blew the whistle on the dangers of the $2.5 billion pain drug Vioxx. These days, Topol is agitating again, this time to topple medicine’s entire economic model using low-cost electronic gadgets, like an electrocardiogram reader that attaches to a smartphone.

By brandishing his iPhone around the hospital, Topol is making a statement: one way to fix the health-cost curve is to harness it to Moore’s Law itself. The more medicine becomes digital, the idea goes, the more productive it will become (see “This Doctor Will Save You Money”).

That’s also the thinking behind the U.S. government’s largest strategic intervention in health-care technology to date. In 2009, it set aside $27 billion to pay doctors and hospitals to switch from paper archives to electronic health records. The aim of the switchover—now about half finished—is to create a kind of Internet for medical information.

That may bring transformation. Hospitals are delving into “big data,” patients are using social networks to wrest control over their health, and entrepreneurs are trying to invent killer apps. Vinod Khosla, a prominent Silicon Valley investor who has called what doctors do “witchcraft,” predicts that machines might replace 80 percent of their work. And he’s putting money behind the talk. One company he’s backing, EyeNetra, uses a phone to measure what eyeglass prescription you need, no doctor required (see “When Smartphones do a Doctor’s Job”).

What’s still missing are strong financial incentives for cost-saving technology. John Backus, a partner at New Atlantic Ventures, believes the trigger will be the growing cash market for medical services. Deductibles are rising and, under Obamacare, some people will get fixed sums from their employers or the government to shop for insurance online. Backus gives the example of a parent who e-mails a picture of a child’s rash and wants a diagnosis. Few doctors even respond to e-mail, since they can’t bill insurance for it. “But in a cash market, people will demand it, and doctors will do it.”

Medicine is so far behind other industries that some of the ideas entrepreneurs are pitching feel transported from the late 1990s. One app, PokitDok, funded with about $5 million, some of it from Backus’s firm, is an online bidding site that lets consumers learn how much doctors intend to charge. Such pricing engines are how we buy airline tickets. Yet in U.S. health care, it’s still almost impossible to know what anything will cost.

The wider problem facing these kinds of innovations—including records systems, mobile gadgets, and Internet-style business models—is that claims they will cut costs, while plausible and appealing, haven’t been proved. And it could take many years to find out if they actually bend medicine’s cost curve. Micky Tripathi, CEO of the Massachusetts eHealth Collaborative, notes that it took a decade before productivity gains from personal computers were first detected in the wider economy in the late 1990s. “It’s too early to know,” says Tripathi. “We are at Version 1.0 of health information technology.”
