Trained on Jeopardy, Watson Is Headed for Your Pocket

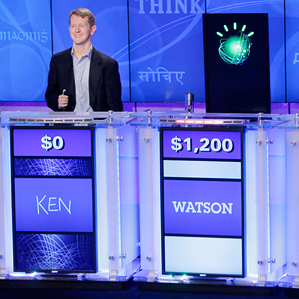

Watson, the IBM computer system that attracted millions of viewers when it defeated two Jeopardy champions handily in 2011, is finally going to meet its public.

Last week, IBM announced that a version of the artificially intelligent software that gave Watson its smarts is to be rented out to companies as a customer service agent. It will be able to respond to questions posed by people, and sustain a basic conversation by keeping track of context and history if a person asks further questions. An “Ask Watson” button on websites or mobile apps will open a text-based dialogue with the retired Jeopardy champion on topics such as product buying decisions and troubleshooting guidance.

This new version of Watson, somewhat opaquely called “Watson Engagement Advisor,” will be the Jeopardy champ’s first truly public test. Over the past two years, IBM has engaged in several trials of Watson intended to test its worth in the workplace—for example, as an aide to medical staff or financial workers (see “Watson Goes to Work in the Hospital”)—but it has not released a general product based on the technology. Even so, several companies have committed to rolling out Watson-based conversation assistants, including the Australian bank ANZ, Royal Bank of Canada, Nielsen, and the publishing and research company IHS.

Although Watson is designed to work on text, it can also use the output of speech recognition software, meaning that some implementations of Ask Watson may end up like a subject-specific, more knowledgeable version of Apple’s Siri. Some IBM partners are already experimenting with connecting voice recognition to Watson, says Rob High, an IBM fellow and chief technology officer of the group commercializing Watson. “It’s going to be common.”

The Ask Watson software is significantly different from the Watson that triumphed on TV two years ago, says High, with upgrades to its intelligence and design. “The hardware that was built for Jeopardy was a single-user system,” he says, and it was designed to be run on a single, very powerful computer system, which made computational efficiency a minor concern.

The new software has been adapted to run inside an IBM data center, and to spawn new, independent instances of Watson for each IBM client. “We’ve now massively parallelized Watson and reduced the footprint,” says High, making the software 75 percent smaller and 25 percent faster. Companies can use tools provided by IBM to connect a cloud-based Watson to a chat interface on their website or mobile app, or allow it to answer customer e-mails.

Watson prepped for Jeopardy by ingesting four terabytes of data, including the entirety of Wikipedia, but the Engagement Advisor version is provided to companies as a blank slate, albeit one with an understanding of human language. Watson must first feed on documents about the products and topics it is to discuss; then it’s fed tens of sample questions and answers to learn the type of language used by customers to refer to the knowledge it just ingested.

The result of that preparation is a customized version of Watson, says High. “Just like on Jeopardy, Watson can deeply understand the questions asked and knowledge relevant to the topic,” he says, enabling a person to have a relatively natural, detailed conversation with the artificial helper. Watson responds uniquely to each question it gets asked rather than repeating past responses to similar questions. “That the context frequently changes is a hallmark of human conversations,” says High.

Watson’s intelligence has also received an upgrade. It can process information laid out in tables, and also understand when a document describes a procedure with different steps and branches. The latter ability is crucial in many customer support scenarios, says High. “We can look at that procedure and recognize that there are different paths you would take depending on the context, and use that to start a conversation and guide you appropriately.”
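To make the idea of a branching procedure concrete, here is a minimal sketch in Python. It is not IBM's implementation, and nothing in it comes from Watson itself: the `Step` class, the `walk` function, and the router-troubleshooting data are all hypothetical names invented for illustration. It only shows how a document describing "different paths depending on the context" might be represented as a tree and followed one answer at a time.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Step:
    """One node in a support procedure: a prompt plus branches keyed by answer."""
    prompt: str
    branches: Dict[str, "Step"] = field(default_factory=dict)


def walk(procedure: Step, answers: List[str]) -> List[str]:
    """Follow the procedure using the given answers; return the prompts shown."""
    shown = [procedure.prompt]
    current = procedure
    for answer in answers:
        nxt = current.branches.get(answer.strip().lower())
        if nxt is None:  # unrecognized answer or end of the procedure: stop
            break
        current = nxt
        shown.append(current.prompt)
    return shown


# Hypothetical troubleshooting procedure (illustrative data only).
ROUTER = Step(
    "Is the power light on?",
    {
        "no": Step("Plug the router in and hold the power button for five seconds."),
        "yes": Step(
            "Is the internet light blinking?",
            {
                "yes": Step("Restart the modem, then wait two minutes."),
                "no": Step("Check that the cable is seated in the WAN port."),
            },
        ),
    },
)

if __name__ == "__main__":
    for line in walk(ROUTER, ["yes", "no"]):
        print(line)
```

A real system would have to extract such a tree from free-form documents and interpret natural-language answers, which is the hard part High describes; the sketch assumes both are already done.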

Deborah Dahl, who consults with startup companies making use of speech recognition and intelligent agent technology, says that IBM is well-placed to prove that artificial assistants can display extensive knowledge. “It’s great to have something like Siri with wide, shallow knowledge, but there are a lot of areas where a system with deep knowledge of a particular topic could help people, make a lot of money, or save a lot of money,” says Dahl. “I think Watson is probably at the forefront of having [both] deep knowledge and natural language recognition.”

Dahl says IBM’s new offering will likely make more sense to people when they experience it on a mobile phone, where it is difficult to survey, explore, and digest large volumes of information at one time. Medical and financial services are likely to be the first areas where the approach could be used profitably, says Dahl, because those services are both valuable and involve a lot of information.

However, Dahl notes, it is unclear how well companies and their customers will adjust to this new technology. “It’s going to take some engineering ability to take the data and put it into a form that Watson can use,” she says. It will also be challenging to deploy the end result in a way that customers like. Although IBM’s assistant is better at conversation than its predecessors, its shortcomings in comparison to a human could prove irksome for customers.

High acknowledges those challenges, and says that, so far, not even IBM has a firm idea of how customers will react to Watson as a service agent. “We’re spending a lot of time on use-case analysis and user experience,” he says.
