How to Avoid Another Flash Crash

Today marks the three-year anniversary of the 2010 Flash Crash, when the Dow Jones Industrial Average plunged nearly 1,000 points in a matter of minutes before recovering most of those losses a few minutes later.

The crash occurred when high-frequency trading algorithms got into a vicious, high-speed selling spiral, wiping out billions of dollars of value before anyone knew what was happening. Some observers argue that a trading system dominated by machines rather than human beings could be increasingly prone to such calamitous crashes in future.

Perhaps algorithms can also help make the financial system more secure, though.

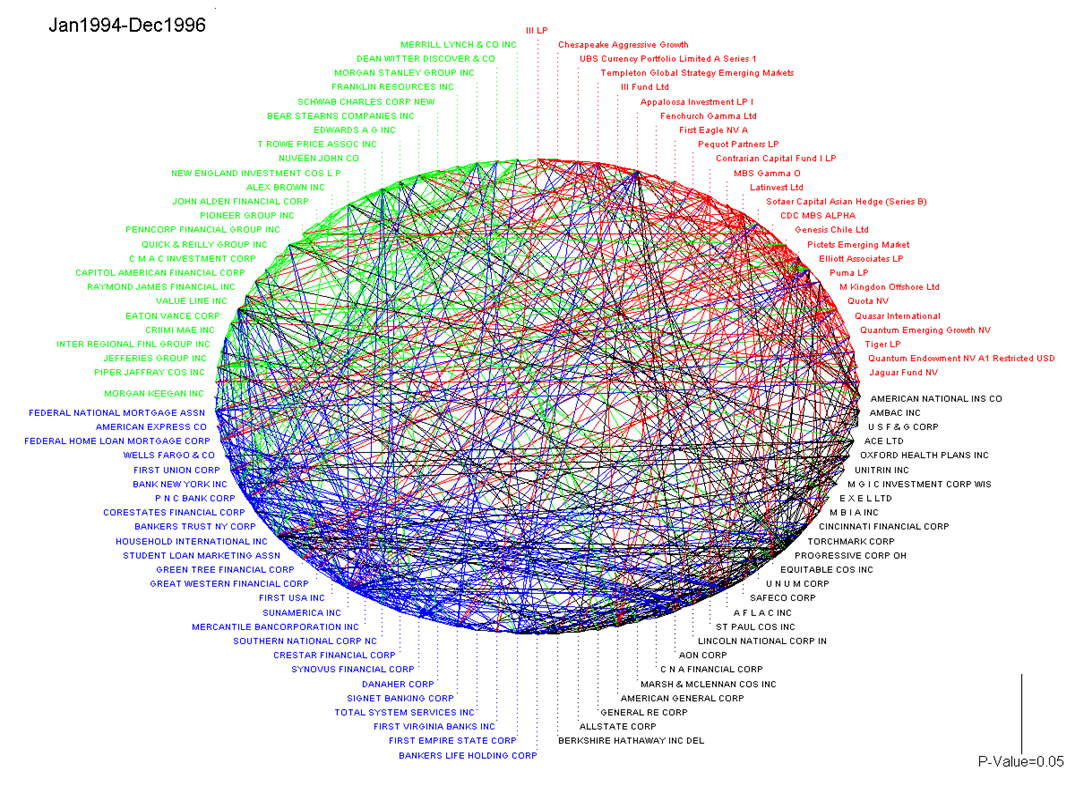

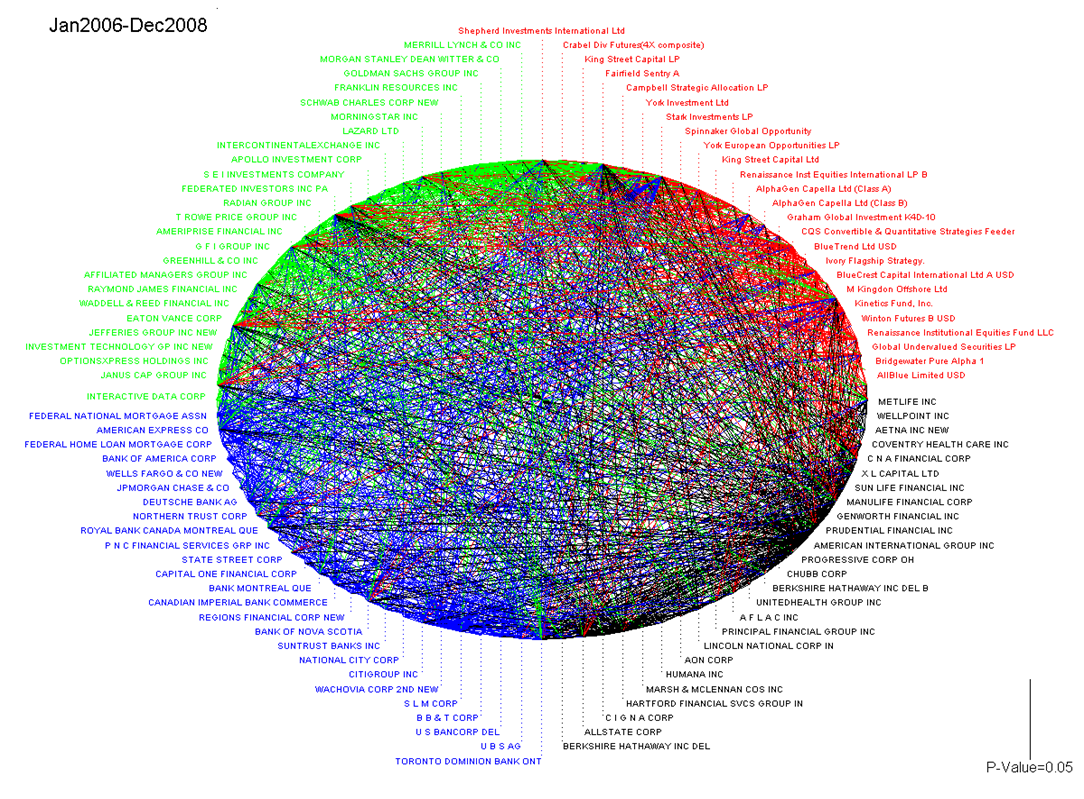

I recently attended a fascinating talk describing a mathematical approach that just might help financial regulators spot early signs of distress in a financial system that’s become increasingly complex and impenetrable. Andrew Lo, a professor at Sloan Business School and director of MIT’s Laboratory for Financial Engineering, kicked off his talk, called “Measuring and Managing the Complexity of the Financial System,” by showing two charts that neatly illustrate the complexity and interdependency of the current financial system.

The first shows relationships between various major financial institutions roughly 20 years ago:

The second shows the same relationships just 10 years later:

These giant rubber-band balls of complexity illustrate just how precarious the financial system is. Lo went on to note that these financial institutions are under no obligation to disclose their activities, and would object to doing so for fear of handing their rivals a competitive advantage.

Lo then talked about an idea that could help give regulators a way to monitor activity without requiring financial firms to lay their cards on the table. His solution is an algorithm that lets participants hand-encrypt the details of their financial activities in such a way that the details remain secret but computational functions can be performed on the collective data to reveal potentially troublesome activity in the overall system.

The approach is quite similar to homomorphic encryption, a mathematical technique that is being explored as a way to provide access to very sensitive data stored in cloud computing databases (see “A Cloud That Can’t Leak”).
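To make the general idea concrete, here is a minimal sketch of one well-known way to compute a total over private numbers without revealing any of them: additive secret sharing. This is an illustration only and is not taken from Lo's paper or talk. The function names, the modulus, and the exposure figures are all made up for the example. Each firm splits its private figure into random-looking shares, hands one share to every participant, and only the sums of received shares are ever published.

```python
import secrets

# Hypothetical modulus for the example; it only needs to exceed the true total.
MODULUS = 2**61 - 1


def split_into_shares(value, n_parties, modulus=MODULUS):
    """Split `value` into n_parties shares that add up to it modulo `modulus`."""
    # The first n-1 shares are uniformly random, so on their own they reveal nothing.
    shares = [secrets.randbelow(modulus) for _ in range(n_parties - 1)]
    # The last share is chosen so that all shares sum back to the original value.
    shares.append((value - sum(shares)) % modulus)
    return shares


def secure_total(private_values, modulus=MODULUS):
    """Return the sum of all private values using only shares and partial sums."""
    n = len(private_values)
    # Row i holds the shares firm i hands out; column j is what firm j receives.
    share_matrix = [split_into_shares(v, n, modulus) for v in private_values]
    # Each firm publishes only the sum of the shares it received.
    partial_sums = [
        sum(row[j] for row in share_matrix) % modulus for j in range(n)
    ]
    # Adding the published partial sums recovers the aggregate and nothing else.
    return sum(partial_sums) % modulus


if __name__ == "__main__":
    exposures = [120, 75, 310]  # made-up private figures for three firms
    print(secure_total(exposures))  # prints 505
```

In this toy version a regulator would learn that the three firms hold a combined 505 units of exposure, but not how that figure is divided among them. A real deployment would also have to deal with participants who lie about their inputs or collude, which this sketch ignores.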

You can read about the details in this paper, coauthored with colleagues from the EPFL School of Computer and Communication Sciences in Switzerland and AlphaSimplex Group, a trading company founded by Lo.

After the talk, I asked Lo whether this approach might really have helped prevent the financial meltdown of 2007. Here’s what he said:

“There’s a big difference between having a lot of warning signs and having an official government source akin to the National Weather Service informing you that a hurricane is building. Yes, there were plenty of warning signs, but it’s virtually impossible for regulators to take action based on warning signs. Imagine asking the folks in New Jersey to evacuate because you’ve got a bad feeling about the weather.”

I also asked whether this approach would help with high-frequency trading and related emergent behavior (see “Watch High-Frequency Trading Bots Go Berserk”).

“Our approach could actually be quite useful for high-frequency trading in allowing investors to measure how ‘crowded’ a market is at a given point in time without asking for individual traders to disclose their positions.”

It seems like a pretty ingenious solution to an enormously important problem.
