Service Blackouts Threaten Cloud Users

For all the unprecedented scalability and convenience of cloud computing, there’s one way it falls short: reliability.

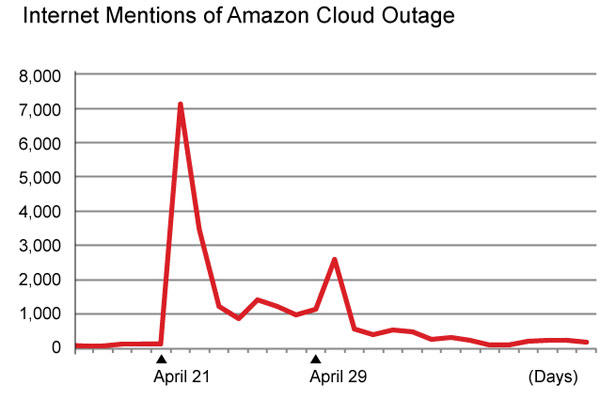

Just ask Jeff Malek, cofounder of BigDoor, a Seattle company whose game software is hosted on the public servers of Amazon. Last April, problems in a Northern Virginia data center crippled Amazon’s northeast operations, affecting many cloud-based businesses. Spotty service over four days left BigDoor scrambling to find technical solutions and issuing a steady stream of apologies to its 250 clients.

Since then, BigDoor has joined a growing number of companies that are seeking new ways of building outage-resistant systems in the cloud, often at additional expense and inconvenience.

Major cloud providers such as Salesforce.com, Microsoft, and Google have all experienced outages. During a 30-day period in August and September, for instance, Google’s cloud-based apps experienced six service disruptions, according to the company’s Apps Status Dashboard, including one hour-long outage on September 7 that shut out several million users of Google Docs. Some outages have been caused by unpredictable events like lightning strikes, but software updates are frequently the culprit. Google said changes to its software caused Google Docs to go down, and an attempt to run updated code was also blamed for a massive e-mail outage affecting users of BlackBerry phones in Europe this month. (RIM, like Google, operates its own servers instead of relying on public cloud providers.)

Even though outages put businesses at immense risk, public cloud providers still don’t offer ironclad guarantees. In its so-called “service-level agreement,” Amazon says that if its services are unavailable for more than 0.05 percent of a year (around four hours) it will give clients a credit “equal to 10% of their bill.” Some in the industry believe public clouds like Amazon’s should aim for 99.999 percent availability, or downtime of only around five minutes a year.
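The downtime figures quoted above follow from simple arithmetic on the availability percentage. A minimal sketch in Python, assuming a 365-day year; the function name is hypothetical and not part of any provider's tooling:

```python
HOURS_PER_YEAR = 365 * 24  # assumption: a non-leap year, 8,760 hours


def allowed_downtime_hours(availability_percent: float) -> float:
    """Return the hours of downtime per year permitted at a given availability."""
    return (1 - availability_percent / 100) * HOURS_PER_YEAR


# 99.95 percent corresponds to the 0.05 percent threshold in the agreement;
# 99.999 percent is the "five nines" target some in the industry want.
for pct in (99.95, 99.999):
    hours = allowed_downtime_hours(pct)
    print(f"{pct}% availability: about {hours:.2f} hours "
          f"({hours * 60:.1f} minutes) of downtime a year")
```

At 99.95 percent the allowance works out to roughly 4.4 hours a year, and at 99.999 percent to a little over five minutes, matching the rounded figures in the text.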

Until then, businesses must cope with the reality of imperfect service. “For me, [service agreements] are created by bureaucrats and lawyers,” says Malek. “What I care about is how dependable the cloud service is, and what a provider has done to prepare for outages.”

Amazon has computing data centers in five regions around the world. Companies can opt to run applications in multiple regions as a precautionary measure—the same way you’d back up your computer to an external hard drive. Immediately after the big outage in April, BigDoor upgraded to host its services in multiple Amazon regions. However, adding that layer of protection led to a 5 percent increase in the company’s monthly cloud bills. And getting applications to communicate seamlessly across isolated regions requires “extra resources, money, and time,” says Malek.

Netflix, which uses Amazon’s cloud to stream videos, is another company that is putting engineering effort into protecting against cloud failures. Following the problems at Amazon, the company developed Chaos Gorilla, internal software that allows its engineers to simulate how effectively Netflix’s systems can reroute data when a network zone goes down.

One company that says it wasn’t affected by recent cloud problems is SimpleGeo, a San Francisco startup that uses Amazon to serve location-awareness tools to its clients. SimpleGeo emerged unscathed from the Amazon outage thanks to its use of a technique called back-pressure routing, which avoids network areas suffering slow response times. Cofounder and chief technology officer Joe Stump compares the technology to a driver’s adjusting speed or switching lanes on a clogged highway. Although SimpleGeo may have dodged network outages, doing so came at a substantial cost. “We have three engineers dedicated full time to building internal tools to manage our infrastructure,” he says.
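The article does not describe how SimpleGeo implemented its routing. As a rough illustration of the general idea of steering traffic away from slow parts of a network, here is a simplified latency-aware selector in Python. It is a stand-in for illustration, not the back-pressure routing algorithm itself, and the class name, zone labels, window size, and threshold are all hypothetical.

```python
from collections import deque


class LatencyAwareRouter:
    """Pick the backend with the lowest recent average response time."""

    def __init__(self, backends, window=5, slow_threshold_ms=500.0):
        self.backends = list(backends)
        self.slow_threshold_ms = slow_threshold_ms  # assumed cutoff for "slow"
        # Keep only the most recent `window` latency samples per backend.
        self.samples = {b: deque(maxlen=window) for b in self.backends}

    def record(self, backend, latency_ms):
        """Store one observed response time for a backend."""
        self.samples[backend].append(latency_ms)

    def average(self, backend):
        """Recent average latency; 0.0 if the backend has no samples yet."""
        s = self.samples[backend]
        return sum(s) / len(s) if s else 0.0

    def choose(self):
        """Prefer backends under the threshold; fall back to all if none are."""
        healthy = [b for b in self.backends
                   if self.average(b) <= self.slow_threshold_ms]
        candidates = healthy or self.backends
        return min(candidates, key=self.average)


router = LatencyAwareRouter(["zone-a", "zone-b"])
router.record("zone-a", 900.0)  # zone-a is responding slowly
router.record("zone-b", 120.0)
print(router.choose())
```

In this sketch, traffic shifts to `zone-b` once `zone-a` starts reporting slow responses, much like the driver in Stump's analogy changing lanes. A production system would also need health checks, retries, and a way to probe a slow zone again once it recovers.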

Indeed, Stump says only one thing is 100 percent certain when it comes to the cloud: “You always have to architect your systems under an assumption of failure.”
