A Robot that Navigates Like a Person

European researchers have developed a robot capable of moving autonomously using humanlike visual processing. The robot is helping the researchers explore how the brain responds to its environment while the body is in motion. What they discover could lead to machines that are better able to navigate through cluttered environments.

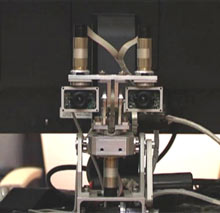

The robot consists of a wheeled platform with a robotic “head” that uses two cameras to capture stereoscopic vision. The robot can turn its head and shift its gaze up and down or sideways to gauge its surroundings, and can quickly measure its own speed relative to its environment.

The machine is controlled by algorithms designed to mimic different parts of the human visual system. Rather than capturing and mapping its surroundings over and over in order to plan its route, as most robots do, the European machine uses a simulated neural network to update its position relative to the environment, continually adjusting to each new input. This mimics human visual processing and movement planning.

Mark Greenlee, the chair for experimental psychology at Germany’s University of Regensburg and the coordinator of the project, says that computer models of the human brain need to be validated by experiment. The robot mimics several functions of the human brain (object recognition, motion estimation, and decision making) to navigate around a room, heading for specific targets while avoiding obstacles and walls.

Ten different European research groups, each with expertise in fields including neuroscience, computer science, and robotics, designed and built the robot through a project called Decisions in Motion. The group’s challenge was to pull together traditionally disparate fields of neuroscience and integrate them into a “coherent model architecture,” says Heiko Neumann, a professor at the Vision and Perception Lab at the University of Ulm, in Germany, who helped develop the algorithms that control the robot’s motion.

Normally, Neumann says, neuroscientists focus on a particular aspect of vision and motion. For example, some study the “ventral stream” of the visual cortex, which is related to object recognition, while others study the “dorsal stream,” related to motion estimation from the environment, or “optic flow.” Still others study how the brain decides to move based on input from both the dorsal and ventral streams. But to develop a real, humanlike computer model for navigation, the researchers needed to incorporate all these aspects into one system.
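The “optic flow” handled by the dorsal stream carries heading information: when an observer moves forward without rotating, image motion radiates outward from a single point, the focus of expansion, which lies in the direction of travel. The article does not describe the project’s own neural algorithms, so the sketch below swaps in a standard least-squares estimate of the focus of expansion from a flow field. It assumes pure translation (no head or body rotation); the function names and the synthetic test data are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]
Sample = Tuple[Point, Point]  # ((x, y) image position, (u, v) flow vector)


def synthetic_flow(foe: Point, points: List[Point], scale: float = 0.1) -> List[Sample]:
    """Hypothetical test data: pure forward translation makes every flow vector point away from the FOE."""
    fx, fy = foe
    return [((x, y), (scale * (x - fx), scale * (y - fy))) for x, y in points]


def estimate_foe(samples: List[Sample]) -> Point:
    """Least-squares focus of expansion, assuming no rotation.

    Each flow vector (u, v) at (x, y) should be parallel to (x - x0, y - y0), which gives one
    linear equation per sample:  v * x0 - u * y0 = v * x - u * y.
    """
    a11 = a12 = a22 = b1 = b2 = 0.0
    for (x, y), (u, v) in samples:
        r0, r1 = v, -u            # coefficients of x0 and y0
        rhs = v * x - u * y
        a11 += r0 * r0
        a12 += r0 * r1
        a22 += r1 * r1
        b1 += r0 * rhs
        b2 += r1 * rhs
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("flow field does not constrain the focus of expansion")
    # Solve the 2x2 normal equations with Cramer's rule.
    return (a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det


grid = [(float(x), float(y)) for x in range(-3, 4) for y in range(-3, 4)]
flow = synthetic_flow((0.5, -1.0), grid)
print(estimate_foe(flow))  # approximately (0.5, -1.0)
```

With real camera images, rotation of the robot’s head adds a flow component that does not radiate from the focus of expansion, which is one reason biological and neural models treat heading estimation as a harder problem than this sketch suggests.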

To do this, Greenlee’s team started by studying fMRI brain images captured while people were moving around obstacles. The researchers passed on the findings to Neumann’s group, which developed algorithms designed to mimic the brain’s ability to detect the body’s own motion, as well as that of objects moving through the surrounding environment. The researchers also incorporated algorithms developed by project participant Simon Thorpe of the French National Center for Scientific Research (CNRS). Thorpe’s SpikeNet software simulates how the brain quickly recognizes objects by using the order in which neurons in a network fire, rather than the more traditional approach based on neurons’ firing rates.
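The idea of coding by firing order can be illustrated in a few lines. This is not SpikeNet’s code or API; it is a minimal sketch in the spirit of rank-order coding, assuming that the most strongly driven inputs fire first, that each successive spike is attenuated by a hypothetical modulation factor, and that a detector’s weights are set from the firing order of a single prototype pattern. The pattern values are made up for illustration.

```python
from typing import List, Sequence


def firing_order(intensities: Sequence[float]) -> List[int]:
    """Indices of inputs in spike order: the most strongly driven input fires first."""
    return sorted(range(len(intensities)), key=lambda i: -intensities[i])


def learn_weights(prototype: Sequence[float], modulation: float = 0.8) -> List[float]:
    """Hypothetical one-shot learning: weight each input by how early it fires for the prototype."""
    weights = [0.0] * len(prototype)
    for rank, i in enumerate(firing_order(prototype)):
        weights[i] = modulation ** rank
    return weights


def rank_order_response(stimulus: Sequence[float], weights: Sequence[float],
                        modulation: float = 0.8) -> float:
    """Each successive spike counts less (modulation ** rank), so early spikes dominate.
    Only the order of firing is used, never the firing rate."""
    return sum(weights[i] * modulation ** rank
               for rank, i in enumerate(firing_order(stimulus)))


pattern_a = [0.9, 0.1, 0.5, 0.3]
pattern_b = [0.1, 0.9, 0.3, 0.5]
detector_a = learn_weights(pattern_a)
print(rank_order_response(pattern_a, detector_a))                     # highest: orders match
print(rank_order_response(pattern_b, detector_a))                     # lower: different order
print(rank_order_response([0.5 * v for v in pattern_a], detector_a))  # same as pattern_a
```

Because only the order matters, halving every input (a uniform drop in contrast) leaves the response unchanged, and a decision can in principle be made from the first few spikes without waiting to measure firing rates.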

Once the robot had been given the software, the researchers found that it did indeed move like a human. When moving slowly, it passed close to obstacles, because it had time to recalculate its path without changing course much. When moving more quickly toward a target, it gave obstacles a wider berth, because it had less time to calculate a new trajectory.
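The article does not say how the robot sets its margin around obstacles. One hypothetical way to reproduce the behavior is to let the required clearance grow with the distance covered during one re-planning interval, then steer just far enough from the obstacle’s bearing that a straight path keeps that clearance. A path heading at angle θ away from a point obstacle at distance d passes it at d·sin θ, so θ must be at least arcsin(c/d) for a clearance c. The base margin, re-planning time, and units (meters, seconds, radians) below are assumptions.

```python
import math


def required_clearance(speed: float, base_margin: float = 0.2, replan_time: float = 0.5) -> float:
    """Hypothetical rule: a fixed margin plus the distance covered during one re-planning interval."""
    return base_margin + speed * replan_time


def min_heading_offset(obstacle_distance: float, clearance: float) -> float:
    """Smallest angle between heading and obstacle bearing that keeps a straight path at least
    `clearance` from a point obstacle (closest approach = distance * sin(angle))."""
    if clearance >= obstacle_distance:
        return math.pi / 2  # already inside the margin: steer perpendicular to the obstacle
    return math.asin(clearance / obstacle_distance)


def choose_heading(goal_bearing: float, obstacle_bearing: float,
                   obstacle_distance: float, speed: float) -> float:
    """Keep the goal bearing if it already clears the obstacle; otherwise deflect to the
    nearer side by the minimum offset. Bearings are radians relative to straight ahead and
    are assumed to stay well inside (-pi/2, pi/2), so no angle wrapping is done."""
    offset = min_heading_offset(obstacle_distance, required_clearance(speed))
    diff = goal_bearing - obstacle_bearing
    if abs(diff) >= offset:
        return goal_bearing
    side = 1.0 if diff >= 0 else -1.0
    return obstacle_bearing + side * offset


# Goal straight ahead; obstacle 3 m away, just off the line to the goal.
slow = choose_heading(0.0, 0.05, 3.0, speed=0.2)
fast = choose_heading(0.0, 0.05, 3.0, speed=1.5)
print(f"slow: {slow:+.3f} rad, fast: {fast:+.3f} rad")
```

Under these assumed parameters the faster run deflects roughly five times as far from the goal line as the slower one, qualitatively matching the wider berth the researchers observed at higher speed.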

Robots that navigate using more conventional methods may be more efficient and reliable, says Antonio Frisoli of the PERCRO Laboratory at Scuola Superiore Sant’Anna, in Pisa, Italy, who led the team that built the robot’s head. For example, a robot guided by laser range-finding and conventional route-planning algorithms would take the most direct path from point A to point B. But the team’s goal was not to compete with the fastest, most efficient robot. Rather, the researchers wanted to understand how humans navigate. We, too, says Frisoli, “adapt our trajectory according to our speed of walking.”

Applications of the technology could include “smart” wheelchairs that can navigate easily indoors, says Greenlee. A few members of the consortium have applied for a grant to follow up on this application, while one of the original partners, Cambridge Research Systems, in the U.K., is developing a head-mounted device based on the technology that could aid the visually impaired by detecting obstacles and dangers and communicating them to the wearer.

Tomaso Poggio, who heads MIT’s Center for Biological and Computational Learning and studies visual learning and scene recognition, says of the EU project, “It seems to be a trend, from neuroscience to computer science, to look at the brain for designing new systems.” He adds that we are “on the cusp of a new stage where artificial intelligence is getting information from neuroscience,” and says that there are “definitely areas of intelligence like vision, or speech understanding, or sensory-motor control, where our algorithms are vastly inferior to what the brain can do.”
