How to Build a 100-Million-Image Database

We take some 80 billion photographs each year, which would require around 400 petabytes to store if they were all saved. Finding your cherished shot of Aunt Marjory’s 80th birthday party among that lot is going to take a special kind of search algorithm. And of course, various groups are working on just how to solve this problem.

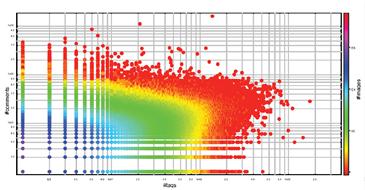

But if you want to build the next generation of image search algorithms, you need a database on which to test them, say Andrea Esuli and pals at the Institute of Information Science and Technologies in Pisa, Italy. And they have one: a database of 100 million high-quality digital images taken from Flickr. For each image they have extracted five descriptive features, such as colours, shape, and texture, as defined by the MPEG-7 image standard.

That’s no mean feat. Esuli and co point out that such an image database would normally require the download and processing of up to 50 TB of data, something that would take about 12 years on a standard PC and about 2 years using a high-end multi-core PC. Instead, they simply decided to crawl the Flickr site, where the pictures are already stored, taking what data they need as descriptors. Their paper describes the trials and tribulations of building such a database.
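To get a feel for those numbers, here is a rough back-of-envelope sketch in Python. It uses only the figures quoted above (50 TB, 100 million images, 12 years and 2 years) and assumes decimal units and a machine running non-stop; the function names are made up for illustration and are not taken from the paper.

```python
# Back-of-envelope check of the figures quoted in the article.
# Assumptions (not from the paper): decimal units (1 TB = 1e12 bytes)
# and a machine that works around the clock for the whole period.

TOTAL_BYTES = 50e12                      # "up to 50 TB" of image data
NUM_IMAGES = 100_000_000                 # 100 million images
SECONDS_PER_YEAR = 365.25 * 24 * 3600    # average year length in seconds


def bytes_per_image(total_bytes: float, num_images: int) -> float:
    """Average size of one image implied by the total data volume."""
    return total_bytes / num_images


def seconds_per_image(years: float, num_images: int) -> float:
    """Average time budget per image if the whole job takes `years`."""
    return years * SECONDS_PER_YEAR / num_images


avg_kb = bytes_per_image(TOTAL_BYTES, NUM_IMAGES) / 1e3
standard_pc = seconds_per_image(12, NUM_IMAGES)
multicore_pc = seconds_per_image(2, NUM_IMAGES)

print(f"Average image size: {avg_kb:.0f} KB")
print(f"Standard PC:   {standard_pc:.2f} s per image")
print(f"Multi-core PC: {multicore_pc:.2f} s per image")
```

Under those assumptions, the quoted figures work out to roughly 500 KB per image, a little under four seconds per image on a standard PC, and well under a second per image on the high-end machine. A few seconds per picture sounds trivial until it is multiplied by 100 million.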

Esuli and co also announce that the resulting collection is now open to the research community for experiments and comparisons. So if you’re testing the next generation of image search algorithms, this is the database you need to set them loose on.

Finding Aunt Marjory may not be the lost cause we had thought.

Ref: http://arxiv.org/abs/0905.4627: CoPhIR: a Test Collection for Content-Based Image Retrieval
