Pranav Mistry

A simple, wearable device enhances the real world with digital information.

Retrieving information from the Web when you’re on the go can be a challenge. To make it easier, graduate student Pranav Mistry has developed SixthSense, a device that is worn like a pendant and superimposes digital information on the physical world. Unlike previous “augmented reality” systems, Mistry’s consists of inexpensive, off-the-shelf hardware. Two cables connect an LED projector and webcam to a Web-enabled mobile phone, but the system can easily be made wireless, says Mistry.

Users control SixthSense with simple hand gestures: putting your fingers and thumbs together to create a picture frame tells the camera to snap a photo, while drawing an @ symbol in the air allows you to check your e-mail. It is also designed to automatically recognize objects and retrieve relevant information: hold up a book, for instance, and the device projects reader ratings from sites like Amazon.com onto its cover. With text-to-speech software and a Bluetooth headset, it can “whisper” the information to you instead.

Remarkably, Mistry developed SixthSense in less than five months, and it costs under $350 to build (not including the phone). Users must currently wear colored “markers” on their fingers so that the system can track their hand gestures, but Mistry is designing algorithms that will enable the phone to recognize the gestures directly.

Andrea Armani

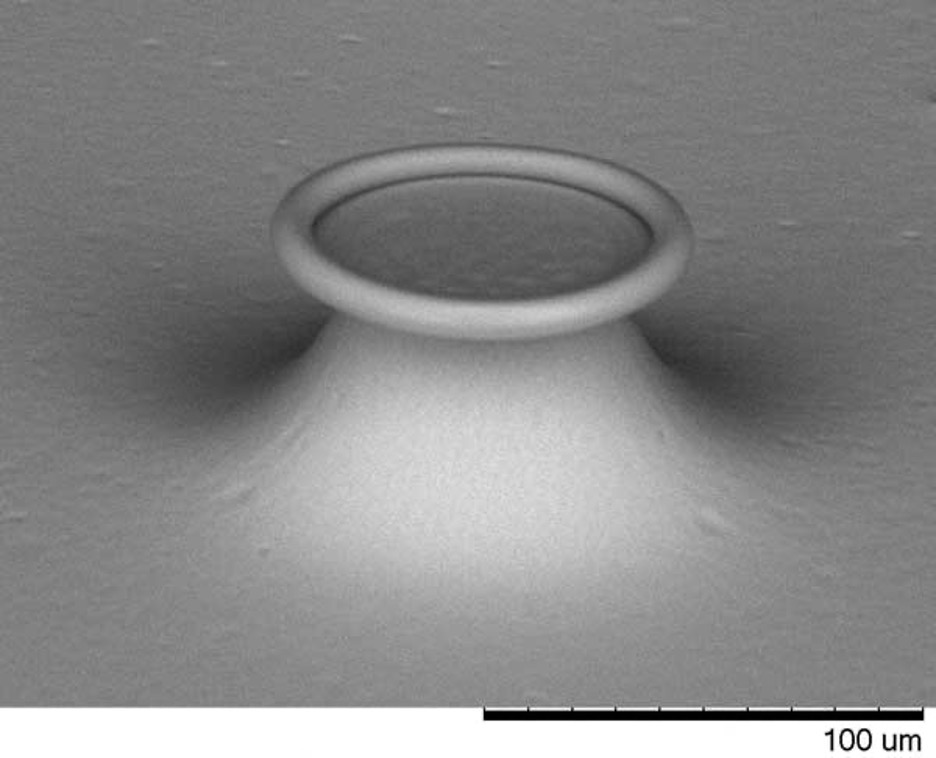

Sensitive optical sensors detect single molecules.

Andrea Armani, an assistant professor of chemical engineering and materials science, has developed the first optical sensor that can detect single molecules without the use of labels such as fluorescent tags. No label-free detector previously developed has been sensitive enough to distinguish a single molecule.

Armani’s sensor consists of a microscopic silica ring that sits on a pedestal atop a silicon wafer. “It’s this little, tiny doughnut-shaped device,” she says. The ring captures photons from a laser and holds them in orbit. Its surface is chemically treated to snag molecules of the target substance from the surrounding environment. As soon as even one molecule of the compound is ensnared, it creates a detectable change in the ring’s optical properties.

Because it works in liquids, including blood, the sensor could be an ideal diagnostic device. Armani envisions, for instance, incorporating one into intravenous catheters that would monitor a patient for infection, picking up telltale molecules in minuscule quantities long before symptoms appeared.

James Carey

Using “black silicon” to build inexpensive, super-sensitive light detectors.

Problem: Silicon has limitations as an optical material. While devices from digital cameras to x-ray detectors take advantage of its ability to absorb electromagnetic radiation, longer wavelengths of light fly right through it. If engineers could make silicon light detectors that “see” more thoroughly into the visible and infrared spectra, relatively inexpensive silicon could replace the costlier, more exotic materials often used in optoelectronics.

Solution: As a graduate student at Harvard, James Carey made thin, super-sensitive light detectors out of “black silicon”–a material discovered accidentally when his colleagues fired a laser at a silicon wafer in the presence of a sulfur-containing gas. Carey demonstrated that the process did more than turn silicon black: it also gave the material the ability to absorb the longer wavelengths of visible and infrared light that thin layers of traditional silicon can’t. What’s more, it absorbed every wavelength more efficiently than conventional silicon does.

Carey cofounded SiOnyx in Beverly, MA, to manufacture black-silicon chips for devices such as inexpensive night-vision equipment and infrared surveillance systems. Other potential applications include better cell-phone cameras and cheaper, more sensitive detectors that could lower the x-ray dose needed for advanced medical imaging.

Adam Dunkels

Minimal wireless-networking protocols allow almost any device to communicate over the Internet.

Adam Dunkels, a senior scientist at the Swedish Institute of Computer Science, has developed software that’s used to network devices as diverse as satellites, pipelines, electric meters, and race-car engines. Such devices often incorporate tiny computers that need to relay data to a central server. Using the Internet Protocol (IP) would allow them to communicate with any other device or computer by means of existing infrastructure. But until Dunkels proved otherwise, many computer scientists believed that these “embedded systems” had too little memory and power to use IP.

In 2000, Dunkels shrank the protocol so that wireless sensors could use it to report hockey players’ vital signs to fans. He continued condensing it so that ever more limited sensors could use it, eventually writing a version that uses only 100 bytes of RAM. This miniature version of IP is now used by hundreds of companies.

He went on to incorporate it into a complete operating system for embedded systems; called Contiki, the freely downloadable open-source system was first released in 2003. Dunkels is still improving Contiki and finding new ways of using it to build and enhance wireless sensor networks.

Kevin Fu

Defeating would-be hackers of radio frequency chips in objects from credit cards to pacemakers.

Could implanted medical devices that use wireless communication, such as pacemakers, be maliciously hacked to threaten patients’ lives? Kevin Fu is no stranger to such overblown scenarios based on his research, though he prefers to stick to talking about technical details. But Fu, a software engineer and assistant professor of computer science, is a security guy. And security people think differently.

“Anyone who works in the world of security–they always have an adversary in mind,” Fu explains, sitting behind his desk on the second floor of the UMass Amherst computer science building. “That’s how you can best design your systems to defend against it.”

The threats Fu researches are chiefly those connected to the security of radio frequency identification, or RFID. RFID is an increasingly common technology, used in everything from tags for shipping containers to electronic key cards, from ExxonMobil’s Speedpass key-chain wands to Chase’s no-swipe “Blink” credit cards. It allows billing and personal information to be shared quickly and wirelessly. But not, Fu realized back in 2006, very securely.

After testing more than 20 such “smart” or no-swipe credit cards from MasterCard, Visa, and American Express, Fu and his colleagues found that they could lift account numbers and expiration dates from several of the cards–even cards inside a wallet–just by walking past them with a homemade scanner.

Criminals troll mailboxes, shopping malls, and airports, harvesting nearby RFID information for use in identity-theft scams. Basically, they pick your pocket without ever touching your pocket. Making these cards truly secure would require good encryption software–Fu’s specialty. But encryption requires a steady supply of energy, something that the passive, externally powered RFID chips used in these applications don’t have. “The inspiration was about the programming,” Fu explains. “But the programming won’t work without an RFID computer to program. And the RFID computer won’t work without solving the energy issues.” He breaks a weary smile. “So, thus far, it’s been something like a two-year sideline.”

The only way for Fu to resolve this catch-22 is to invent new technology–a project he’s working on with a team led by Wayne Burleson, a professor of electrical and computer engineering. But even as he wrestled with this problem, Fu found himself wondering, as only a security guy can: if financial information is vulnerable, what about seemingly more obscure targets with far bigger consequences?

This is what first brought him to the heart-attack machine.

At his desk, Fu clicks through a PowerPoint slide show of bad-guy examples, from the madman who put cyanide-laced Tylenol on Chicago drugstore shelves in 1982 to the hacker who posted seizure-inducing animations on an Internet message board for epileptics.

“It might seem paranoid,” Fu admits, “but from a security standpoint, you need to start with the fact that bad people do exist.” And there seemed no better place to hunt such misanthropes than the world of medicine.

Fu began wondering about the security of medical devices that use RF communication, such as pacemakers and defibrillators. He discussed the problem with his longtime colleague Tadayoshi Kohno, assistant professor of computer science and engineering at the University of Washington and a veteran investigator into the vulnerabilities of computer networks and voting machines (see TR35, September/October 2007).


“Kevin is a fantastic researcher,” Kohno says. “His research is now covered in almost every undergraduate computer-security course that I know of. And his insights are exceptionally deep.” Together, Fu and Kohno took their questions about defibrillators far from the computer science lab–into the world of cardiologist William H. Maisel, director of the Medical Device Safety Institute at Boston’s Beth Israel Deaconess Medical Center.

The two explained to Maisel’s wide-eyed staff how security people think. In turn, the medical professionals introduced the security researchers to Cardiology 101–starting with pacemakers and defibrillators, devices that are implanted in some half-million people around the world every year. Basically, a pacemaker regulates aberrant heartbeats with gentle metronomic pulses of electricity, while a defibrillator provides a big shock to “reboot” a failing heart. Merged, they form an implantable cardioverter defibrillator, or ICD. The ICD is designed to stop a heart attack in a cardiac patient. But, Fu and Kohno wondered, could it create one instead?

In his UMass office, Fu pulls out a shoebox containing the works of an ICD. It looks the way the Tin Man’s heart might: padlock-sized and encased in hard, silvery surgical steel, now peeled away can-opener style. I instinctively reach in, drawn like a magpie to the shiny objects. Fu quickly jerks the box away. “Um, you don’t want to touch that,” he says. “The coil in these things delivers 700 volts”–enough juice to stop your heart.

He points out the matchbook-sized microchip and antenna coil–technology that connects the latest-generation ICDs with the Internet, allowing doctors to reprogram a device without surgery. From the perspective of cardiologists and patients, this wireless programming is a godsend. But from Fu’s viewpoint, it represents a new security risk. And so he wondered: Could black-hat hackers listen in on the wireless communication between an ICD and its programming computer? Could they make sense of what they heard and use it to inflict harm?

“Most people who make these devices don’t think like this,” Fu says. “But this is how the adversary thinks. He doesn’t play your game; he makes his own game.” To assess the security threat, the researchers needed to play the hacker’s game.

Fu’s team set out to create a technique to eavesdrop on defibrillator chatter. The hardware was just off-the-shelf stuff–a platform designed to allow researchers and serious hobbyists to build their own software radios. It has been made into FM radios, GPS receivers, digital television decoders–and RFID readers. All that was left was to write the software, rip the antenna coil out of an old pacemaker, solder it into the radio–and voilà, they had a transmitter.

“It worked pretty well–amazingly well,” Fu says. After “nine months of blood and sweat,” they could intercept digital bits from an ICD–but they had no idea what those bits meant. His students trudged back to the lab to figure out how to interpret them. Using differential analysis–basically, changing one letter of a patient’s name and then listening to how the corresponding radio transmission changed–they were able to painstakingly build up a code book.
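The differential analysis described above can be illustrated with a toy example. The sketch below assumes an entirely made-up frame layout (a two-byte header, an ASCII name field, and an XOR checksum, produced by a hypothetical `encode_frame` helper); the article does not describe the real ICD protocol, and nothing here reflects it. It shows only the general idea: change one input character, compare the two captured bit strings, and note which positions moved.

```python
def encode_frame(name: str) -> str:
    """Hypothetical stand-in for a captured transmission.

    Layout (an assumption for illustration only):
    2-byte header + ASCII name + 1-byte XOR checksum, returned as a bit string.
    """
    header = bytes([0xA5, 0x01])
    body = name.encode("ascii")
    checksum = 0
    for b in header + body:
        checksum ^= b
    frame = header + body + bytes([checksum])
    return "".join(f"{b:08b}" for b in frame)


def differing_bits(a: str, b: str) -> list[int]:
    """Return the bit positions at which two equal-length captures differ."""
    if len(a) != len(b):
        raise ValueError("captures must have the same length")
    return [i for i, (x, y) in enumerate(zip(a, b)) if x != y]


def differing_bytes(a: str, b: str) -> list[int]:
    """Collapse differing bit positions to the byte offsets that contain them."""
    return sorted({i // 8 for i in differing_bits(a, b)})


# Two "captures" that differ by a single letter of the patient's name.
baseline = encode_frame("SMITH")
variant = encode_frame("SMYTH")

changed_bits = differing_bits(baseline, variant)
changed_bytes = differing_bytes(baseline, variant)
print(changed_bits, changed_bytes)
```

In this toy format, the changed letter shows up at its own byte offset and again in the trailing checksum byte, which is the kind of clue that lets an analyst map fields one at a time and gradually assemble a code book.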

Now their homemade software radio could listen in on and record ICD programming commands. The device could also rebroadcast those recordings, as fresh commands, to any nearby ICD. It had become dangerously capable of playing doctor.

Fu discovered one set of commands that would keep an ICD in a constant “awake” state, surreptitiously draining the battery to devastating effect. “We did a back-of-the-envelope calculation on this,” he explains. “A battery designed to last a couple years could be drained in a couple weeks. That alone was a notable risk.”
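Fu's back-of-the-envelope figure implies a rough ratio that is easy to check. The sketch below assumes that "a couple" means two in both cases and that the battery drains at a constant average rate; both are simplifying assumptions, not numbers from the researchers, and `drain_speedup` is a hypothetical helper name.

```python
WEEKS_PER_YEAR = 52


def drain_speedup(design_life_weeks: float, drained_life_weeks: float) -> float:
    """Factor by which average power draw must rise to shorten battery life this much.

    Assumes a fixed battery capacity and a constant average draw in each case.
    """
    return design_life_weeks / drained_life_weeks


# Assumed reading of the quote: two years of design life, drained in two weeks.
speedup = drain_speedup(2 * WEEKS_PER_YEAR, 2)
print(speedup)
```

Under those assumptions, keeping the device "awake" would have to raise its average power draw by a factor of about fifty.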


Even more notable, Fu’s software radio was capable of completely reprogramming a patient’s ICD while it was in his or her body. The researchers were able to instruct the device not to respond to a cardiac event, such as an abnormal heart rhythm or a heart attack. They also found a way to instruct the defibrillator to initiate its test sequence–effectively delivering 700 volts to the heart–whenever they wanted.

Fu doesn’t like to think of himself as having built a heart-attack machine, or even as having discovered that such a thing could be built. Though he is an academic who doesn’t shy away from pursuing real-world applications for his theoretical technologies, that “real world” is usually at least 10 years in the future. But the ramifications of the ICD-programming radio were both immediate and chilling: the device could be easily miniaturized to the size of an iPhone and carried through a crowded mall or subway, sending its heart-attack command to random victims.

A heart-attack machine? Really? It would be foolish, Fu says, not to recognize that there are depraved people out there, more than capable of building and using such a machine to inflict harm on random innocents “just for kicks.” To this extent, the issue of protecting remote programming access to ICDs is directly related to the issue of protecting RFIDs. Encrypting the communication is the only way to shield millions of people from random risks. It doesn’t take a Fu to come up with practical solutions, but by exposing the security dangers he has provided a valuable, perhaps even life-saving, alert to manufacturers.

Fu is too smart to engage in speculation about how the technology could be abused, except to say that he’d be very surprised if there weren’t “people already working on this.” In the best case, we’ll never know how foresighted he was; medical-device makers will eliminate the threat before hackers ever exploit it. “Kevin is a computer scientist who also has the ability to look at problems like a medical doctor and like a patient,” says Maisel. “The work Kevin is doing now–relating to medical-device security and privacy–has the potential to impact millions of people.”

How about the more dramatic scenarios? Imagine a spy agency using printed circuitry to put a heart-attack machine into a newspaper, delivered with morning coffee to a foreign leader with a pacemaker. Or a Lex Luthor-like supervillain who retrofits a radio tower to broadcast his death ray to entire populations.

Kevin Fu–professor, researcher, scientist–rolls his eyes. “All I can say about that one,” he says with a laugh, “is it might make a pretty good movie.”

Andrew Houck

Preserving information for practical quantum computing.

Among the most promising approaches to building a quantum computer is using superconducting circuits as quantum bits, or qubits. But controlling the qubit without destroying the information tucked inside it is a major challenge.

Andrew Houck, an assistant professor of electrical engineering, developed a superconducting qubit called a transmon that helps keep quantum information intact.

The data in a qubit–0, 1, or a quantum superposition of the two–is represented using different energy and phase states in the circuit, but stray electrical fields can easily destroy these states during readout. Instead of targeting the source of interference, as other researchers have, Houck armored the qubit, adding a capacitor that makes it difficult for stray electrons to interfere.

Getting data from the transmon is the next hurdle. Usually the qubit is read directly, by measuring changes in charge, but that’s not possible with the transmon. So Houck coupled it to a microwave photon, which interacts differently with the qubit depending on its state. By measuring the photon, it’s possible to infer the qubit’s state and thus extract its information.

While the quantum data in transmons lasts a few microseconds–an order of magnitude longer than in previous qubits–there’s still a way to go before millions of qubits can be used to make a large-scale quantum computer.

Shahram Izadi

An intuitive 3-D interface helps people manage layers of data.

Shahram Izadi wants to make interacting with computers more natural. For one of his touch-based interfaces, the research scientist has improved on Microsoft’s already impressive touch table, Surface, to present information in a completely new way.

Surface projects infrared light and detects its reflection from fingers or other objects that are on or above a screen, enabling users to work with data displayed on the screen. Izadi’s variation, called SecondLight, uses a second projector and a switchable diffuser to add another physical layer of data.

The system projects one image on the table’s surface and a second, hidden image above it; passing a semiopaque object over the table reveals the second image. For instance, a user who holds a sheet of paper over an image of a human body might see the bones of the skeleton. Ultimately, Izadi envisions specialized tablets that could interact with SecondLight to facilitate collaboration; doctors working on the same patient, for example, could each add or view new data.

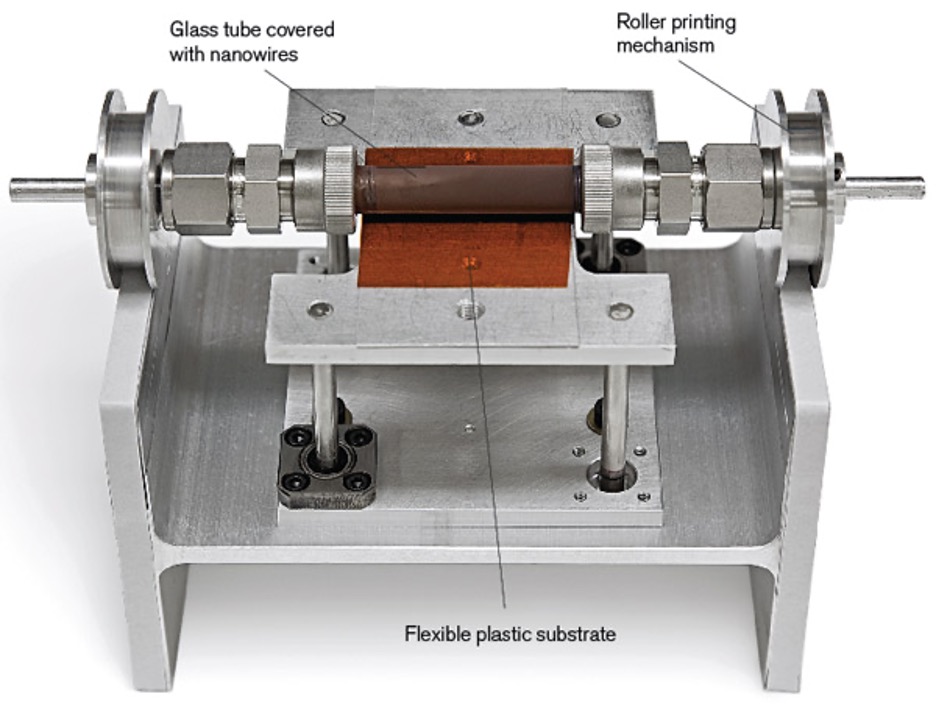

Ali Javey

“Painting” nanowires into electronic circuits.

Nanowires could be the basis of tomorrow’s advanced electronics, from cheap solar cells to high-resolution displays. But it’s been difficult to arrange the tiny strands precisely. Ali Javey, an assistant professor of electrical engineering and computer science, has become a master at doing so. His latest tool for making high-quality circuits: a roller printer. He coats a glass cylinder with a catalyst and puts it in a chemical-vapor deposition chamber, where its surface sprouts nanowires. When the cylinder is pressed against a flexible piece of plastic or a silicon wafer, the tips of the nanowires cling to the flat surface; as the tube rolls, the wires are dragged and combed into straight rows before detaching from the roller. So far, Javey has used the technique to print transistors based on germanium, silicon, and indium arsenide nanowires. He has also printed arrays of light-sensing cadmium selenide nanowires, which can be used as photosensors for imaging applications.

Anat Levin

New cameras and algorithms capture the potential of digital images.

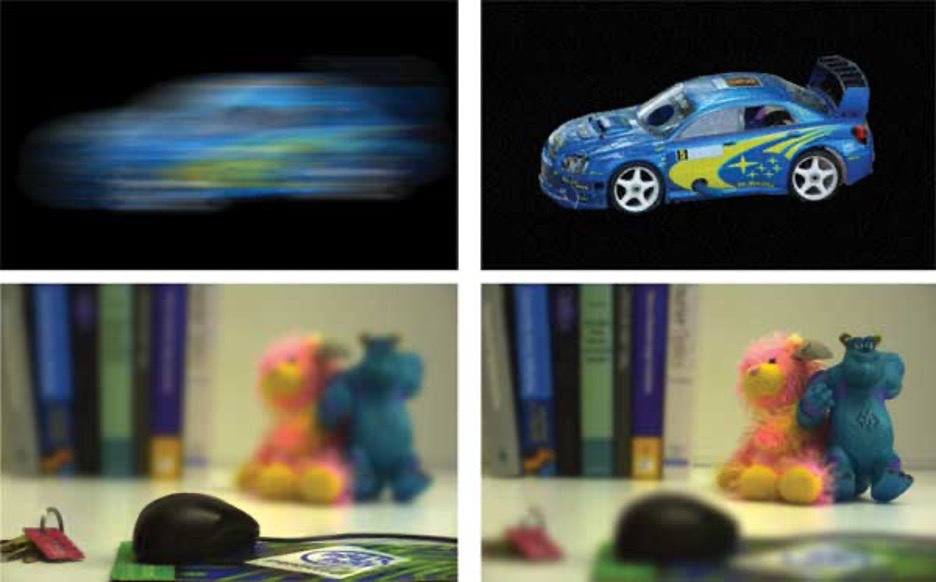

Although a digital camera is an impressive piece of equipment, it’s the same in its basic design as the old-fashioned film camera: a lens focuses an image on a plane. The digital camera simply captures that image with a light-sensing chip instead of film. Anat Levin thinks we can do more.

Levin, a senior scientist at the Weizmann Institute in Rehovot, Israel, is at the forefront of computational photography. She develops ways to manipulate digital images, both inside the camera and on computers. And increasingly, she is exploring new camera designs. “Before digital photography, we would capture images onto a film, and the film was more or less the end of the story,” she says. “Now, with digital photography, what we have on the camera is not the end of the process.”

Last year, Levin invented a camera and algorithm that, together, remove motion blur from an image. Paradoxically, the camera moves its sensor horizontally at a varying speed while the image is being exposed, which of course makes the whole image blurry. However, the camera’s movement is specially designed to blur the moving and static parts of a scene equally, and by a known amount. Thus, she can use a relatively simple algorithm to remove the blur from all objects. A separate computer processes the image today, but a production model of the camera could eventually do the processing onboard.
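Why a known, uniform blur is easy to undo can be shown with a one-dimensional toy. The sketch below is not Levin's algorithm: it assumes a noise-free signal, a simple three-sample box blur, and wrap-around (circular) edges, and it uses a plain inverse filter in the frequency domain. The function names and the sample `scene` are hypothetical.

```python
import cmath


def dft(x, inverse=False):
    """Naive discrete Fourier transform (or its inverse) of a short sequence."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        total = 0j
        for t in range(n):
            total += x[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n)
        out.append(total / n if inverse else total)
    return out


def circular_blur(signal, kernel):
    """Blur every sample with the same known kernel (circular convolution)."""
    n = len(signal)
    return [
        sum(kernel[j] * signal[(i - j) % n] for j in range(len(kernel)))
        for i in range(n)
    ]


def deblur(blurred, kernel):
    """Undo a known blur by dividing spectra (inverse filter, no noise handling)."""
    n = len(blurred)
    padded = list(kernel) + [0.0] * (n - len(kernel))
    blurred_spectrum = dft(blurred)
    kernel_spectrum = dft(padded)
    restored_spectrum = [b / h for b, h in zip(blurred_spectrum, kernel_spectrum)]
    return [v.real for v in dft(restored_spectrum, inverse=True)]


# A toy 1-D "scene" with two bright objects, blurred by the same known amount.
scene = [0, 0, 1, 1, 1, 0, 0, 0, 2, 2, 0, 0, 0, 0, 0, 0]
kernel = [1 / 3, 1 / 3, 1 / 3]

blurred = circular_blur(scene, kernel)
restored = deblur(blurred, kernel)
max_error = max(abs(r - s) for r, s in zip(restored, scene))
print(max_error)
```

Because every part of the toy scene is blurred by the same kernel, one division in the frequency domain recovers all of it at once. A real camera would also have to cope with sensor noise, which a bare inverse filter amplifies.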

Working with colleagues at MIT, Levin has also proposed a lens design that would give a camera greater depth of field, increasing the amount of a scene–near and far–that can be brought into focus at the same time. Square pieces cut from lenses with different focal lengths are superimposed over the regular lens. Each square focuses on an area a different distance from the camera. Using the information from all the lenses, Levin can recalculate the entire image to increase the depth of field, or even refocus on objects that are closer or farther away after the picture has been taken.

Source: "Motion-Invariant Photography" by Levin, Sand, Cho, Durand, Freeman.

A new focus: Levin and colleagues designed a lattice of different lenses that can be placed over a camera's regular lens. Each lens focuses on an area a different distance from the camera. Using data from all the lenses, Levin can choose which part of the photo is in focus. In the image at left, the mouse is in the plane of focus and looks sharp. On the right, she has moved the plane of focus to the figurines in back.

Source: "4D Frequency Analysis of Computational Cameras for Depth of Field Extension" by Levin, Hasinoff, Green, Durand, Freeman.

Aydogan Ozcan

Inexpensive chips and sophisticated software could make microscope lenses obsolete.

Expensive, bulky lenses have been the basis of imaging technology for centuries. Now, says Aydogan Ozcan, an assistant professor of electrical engineering, “it’s time to change our thinking.” By writing sophisticated image-processing software and taking advantage of the inexpensive light sensors now ubiquitous in cell phones, he may have made lenses obsolete. The lensless imaging devices that Ozcan has built achieve roughly the same resolution as standard bench-top microscopes (about a micrometer), so they can be used to count, identify, and even image living cells.

He’s made prototypes mounted in cell phones to demonstrate the technology and has started a company called Microskia to develop it. The first products are likely to be simple microscopes that plug into a cell phone or laptop through a USB cord and display the magnified images on their screens; the first uses will probably be in remote medical centers, to diagnose anemia, cancer, and infectious diseases such as malaria. According to Ozcan, though, his prototypes are actually good enough to replace the large, expensive cell counters used in U.S. hospitals.

Vera Sazonova

World’s smallest resonator could lead to tiny mechanical devices.

Microelectromechanical systems, or MEMS, play a key role in gyroscopes, tiny chemical sensors, optical switches used in the telecom industry, and more. An even smaller version of the technology–nanoelectromechanical systems, or NEMS–could likewise have broad technological importance. Vera Sazonova has made the world’s smallest NEMS device: a tiny resonator that consists of a single carbon nanotube suspended over a silicon gate. A voltage at the gate makes the nanotube vibrate, creating a high-frequency current. Since the current is hard to detect, Sazonova applied another voltage at a slightly different frequency; the two signals mix to create a third, low-frequency current that is easier to pick up. Potential applications include ultrasensitive motion detectors, sensors that can detect the mass of molecules, and even devices for detecting gravitational waves.
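The mixing step rests on a standard trigonometric identity: multiplying two sinusoids yields components at the sum and the difference of their frequencies. The sketch below treats mixing as ideal multiplication and uses illustrative frequencies (50 MHz and an offset of 10 kHz) that are assumptions, not values from Sazonova's experiment; the function names are hypothetical.

```python
import math


def mixed_signal(f1: float, f2: float, t: float) -> float:
    """Product of two unit-amplitude cosines at frequencies f1 and f2 (in Hz)."""
    return math.cos(2 * math.pi * f1 * t) * math.cos(2 * math.pi * f2 * t)


def sum_and_difference(f1: float, f2: float, t: float) -> float:
    """The same signal written as difference- and sum-frequency components."""
    low = math.cos(2 * math.pi * (f1 - f2) * t)
    high = math.cos(2 * math.pi * (f1 + f2) * t)
    return 0.5 * (low + high)


# Assumed, illustrative values: a high-frequency drive and a slightly offset probe.
f_drive = 50_000_000.0
f_probe = 50_010_000.0
beat_frequency = abs(f_probe - f_drive)

sample_times = [i * 1e-9 for i in range(0, 2000, 37)]
worst_mismatch = max(
    abs(mixed_signal(f_drive, f_probe, t) - sum_and_difference(f_drive, f_probe, t))
    for t in sample_times
)
print(beat_frequency, worst_mismatch)
```

With these assumed numbers the difference component sits at 10 kHz, thousands of times lower than either input, which is why the mixed-down current is easier to pick up.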

Elena Shevchenko

Assembling nanocrystals to create made-to-order materials.

Elena Shevchenko is a master at making nanoparticles and assembling them into precise structures with useful properties. Materials made from the nanocrystals created with her methods could lead to ultra-efficient solar cells, tiny but powerful magnets, super-dense hard disks, and faster computers.

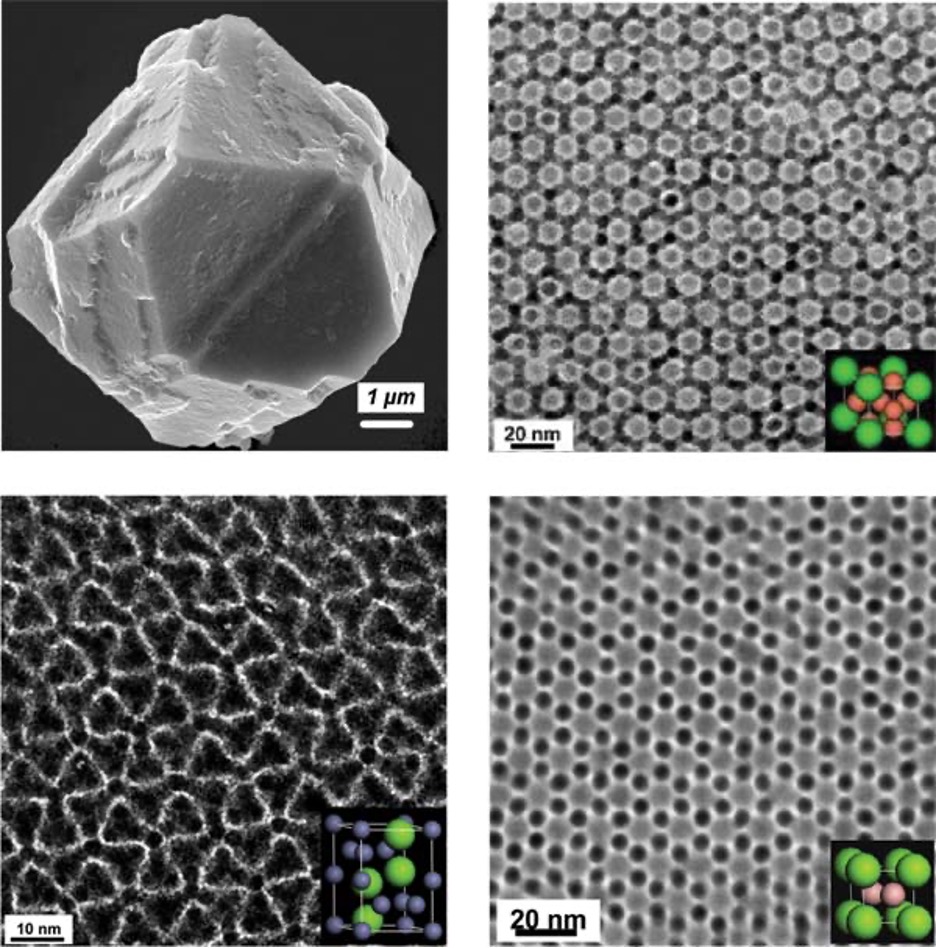

Trained as a chemist in Belarus, at the University of Hamburg in Germany, and at Columbia University in New York, Shevchenko has found better ways to make nanoparticles out of metallic compounds; she’s produced lead telluride, cadmium selenide, and cobalt-platinum particles, among others. She has also developed a technique for assembling these nanoparticles into “superlattices,” orderly crystal structures. Paul Alivisatos, a nanotech pioneer and interim director of the Lawrence Berkeley National Laboratory, calls Shevchenko “the best grower of nanocrystals in the world today.”

Mixing and matching these nanoscale building blocks offers endless possibilities for engineering structures with desired optical, electrical, and magnetic properties. A nanoparticle array of lead telluride and silver telluride, for example, is 100 times as conductive as arrays made of either particle alone. So far, Shevchenko has created dozens of new materials.

Dawn Song

Defeating malware through automated software analysis.

For years, says Dawn Song, computer defenders have been reacting to each new virus, worm, or other piece of malware after it appears, developing and deploying filters that detect known patterns in malicious code in order to stop its spread. Instead of stopping malicious programs one by one, Song, an associate professor of computer science, aims to protect computers at a deeper level.

Source code for both malware and commercial software is often not available, which slows the hunt for vulnerabilities. Song figured out how to find security flaws by examining only the 1s and 0s that the computer runs. Her platform, BitBlaze, analyzes malware and automatically generates a filter to protect against it until a security patch is released. It can also analyze those patches and produce new malware that exploits any vulnerabilities; this allows programmers to make security patches as sound as possible.

Such tasks “were previously relegated to highly specialized manual labor,” says Avi Rubin, technical director of the Johns Hopkins University Information Security Institute; he calls BitBlaze “a giant step forward in the battle against those who wish harm against computer systems.” For example, if a worm tried to infiltrate a computer, BitBlaze’s response could fend off a variety of future attacks targeting the same vulnerability. Technology spun out of Song’s research has already been incorporated into Google’s Chrome browser, and she has collaborated with security software companies such as Symantec.

Andrea Thomaz

Robots that learn new skills the way people do.

Before robots can be truly useful in homes, schools, and hospitals, they must become capable of learning new skills. Andrea Thomaz, an assistant professor of interactive computing, wants them to learn from their users, so that experts don’t have to program every task. She aims to make robots that not only understand a human teacher’s verbal instructions and social signals but give social feedback of their own, using gestures, expressions, and other cues to let the person know whether they have correctly understood the directions.

Thomaz has designed machine learning algorithms based on human learning mechanisms and built them into her robots Junior and Simon, which have faces that make basic expressions and hands that can grasp simple objects. In experiments with people untrained in formal teaching, Junior has quickly learned enough about things in its environment to catch on to tasks such as opening and closing a box.

Adrien Treuille

Complex physics simulations that can run on everyday PCs.

Adrien Treuille creates simulations of physical processes ranging from the flow of people in a crowd to the motion of proteins in a cell. And while his models are stunningly realistic, what’s truly amazing is that they run not on supercomputers but on ordinary PCs. “I want to place curling smoke in the palm of your hand,” he says.

To make this possible, Treuille, an assistant professor of computer science, streamlines the mathematical representation of a scenario, removing unlikely outcomes. For example, he says, a full simulation of how a shirt might be folded would include fantastic origami-style shapes. In most cases, a simulation would need to cover only ordinary creases.

Treuille’s simulations have attracted commercial interest. For example, ESPN used his techniques to simulate the airflow around NASCAR vehicles on live TV. And Electronic Arts has licensed his crowd-simulation techniques for its games, where they’re replacing more processing-intensive artificial-intelligence methods.

But Treuille’s work has applications beyond entertainment. He and colleague Seth Cooper designed a downloadable game called Foldit that allows players to fold and tug on simulations of known proteins to design new molecules. More than 90,000 users have registered and played since the game’s launch in May 2008. Treuille wonders if someone–perhaps even an amateur–might someday use Foldit to discover a protein that cures cancer.