These impossible instruments could change the future of music

A research project to make digital instruments sound more realistic takes a hard left turn.

When Gadi Sassoon met Michele Ducceschi backstage at a rock concert in Milan in 2016, the idea of making music with mile-long trumpets blown by dragon fire, or guitars strummed by needle-thin alien fingers, wasn’t yet on his mind. At the time, Sassoon was simply blown away by the everyday sounds of the classical instruments that Ducceschi and his colleagues were re-creating.

“When I first heard it, I couldn’t believe the realism. I could not believe that these sounds were made by a computer,” says Sassoon, a musician and composer based in Italy. “This was completely groundbreaking, next-level stuff.”

What Sassoon had heard were the early results of a curious project at the University of Edinburgh in Scotland, where Ducceschi was a researcher at the time. The Next Generation Sound Synthesis, or NESS, team had pulled together mathematicians, physicists, and computer scientists to produce the most lifelike digital music ever created, by running hyper-realistic simulations of trumpets, guitars, violins, and more on a supercomputer.

Sassoon, who works with both orchestral and digital music, “trying to smash the two together,” was hooked. He became a resident composer with NESS, traveling back and forth between Milan and Edinburgh for the next few years.

It was a steep learning curve. “I would say the first year was spent just learning. They were very patient with me,” says Sassoon. But it paid off. At the end of 2020, Sassoon released Multiverse, an album created using sounds he came up with during many long nights hacking away in the university lab.

Computers have been making music for as long as there have been computers. “It predates graphics,” says Stefan Bilbao, lead researcher on the NESS project. “So it was really the first type of artistic activity to happen with a computer.”

But to well-tuned ears like Sassoon’s, there has always been a gulf between sounds generated by a computer and those made by acoustic instruments in physical space. One way to bridge that gap is to re-create the physics, simulating the vibrations produced by real materials.

The NESS team didn’t sample any actual instruments. Instead they developed software that simulated the precise physical properties of virtual instruments, tracking things like the changing air pressure in a trumpet as the air moves through tubes of different diameters and lengths, the precise movement of plucked guitar strings, or the friction of a bow on a violin. They even simulated the air pressure inside the virtual room in which the virtual instruments were played, down to the square centimeter.

Tackling the problem this way let them capture nuances that other approaches miss. For example, they could re-create the sound of brass instruments played with their valves held down only part of the way, which is a technique jazz musicians use to get a particular sound. “You get a huge variety of weird stuff coming out that would be pretty much impossible to nail otherwise,” says Bilbao.

Sassoon was one of 10 musicians who were invited to try out what the NESS team was building. It didn’t take long for them to start tinkering with the code to stretch the boundaries of what was possible: trumpets that required multiple hands to play, drum kits with 300 interconnected parts.

At first the NESS team was taken aback, says Sassoon. They had spent years making the most realistic virtual instruments ever, and these musicians weren’t even using them properly. The results often sounded terrible, says Bilbao.

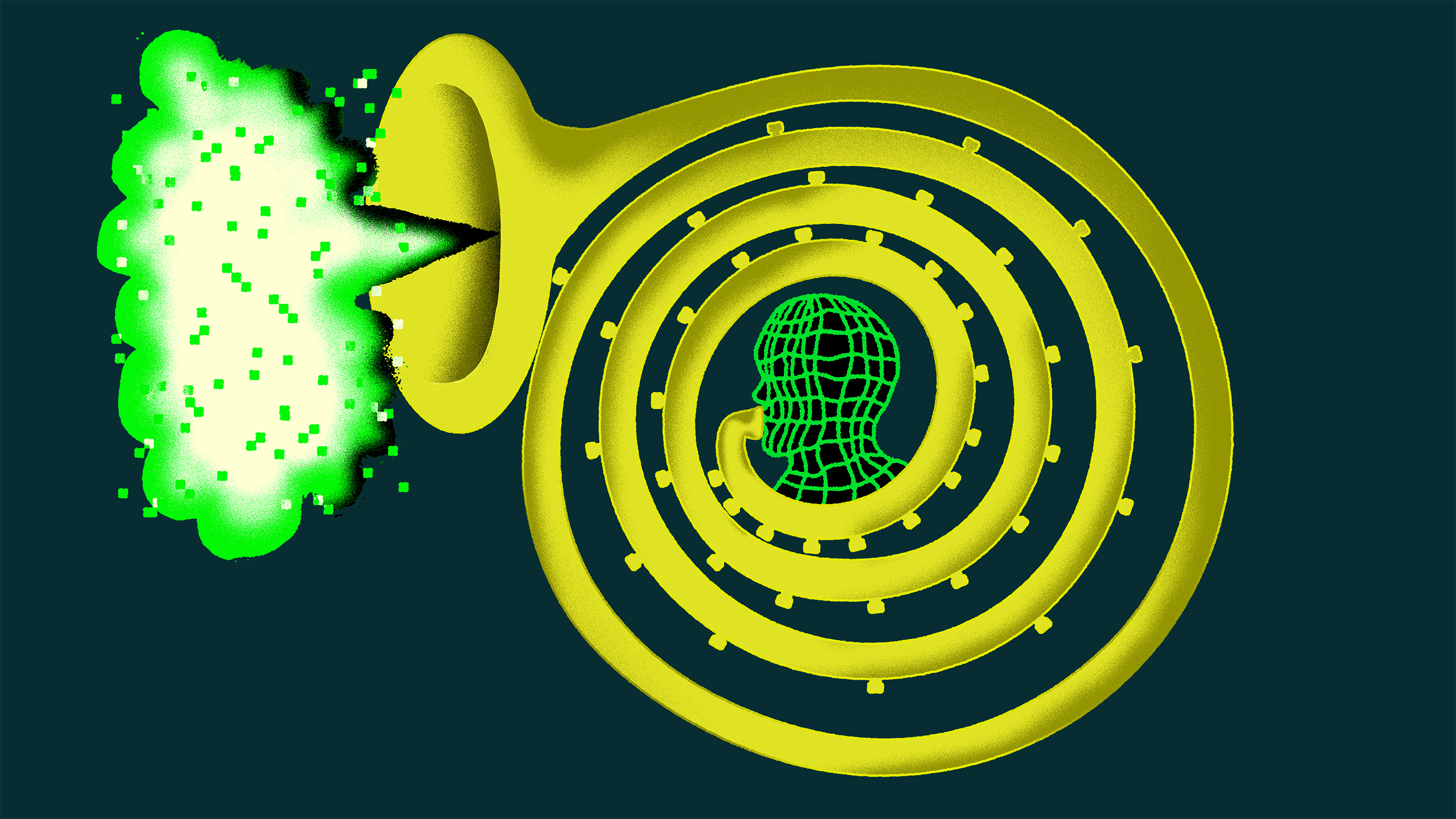

Sassoon had as much fun as anyone, coding up a mile-long trumpet into which he forced massive volumes of air heated to 1,000 K, a.k.a. “dragon fire.” He used this instrument on Multiverse, but he soon became more interested in subtler impossibilities.

By tweaking variables in the simulation, he was able to change the physical rules governing energy loss, creating conditions that don’t exist in our universe. Playing a guitar in this alien world, barely touching the fretboard with needle-tip fingers, he could make the strings vibrate without losing energy. “You get these harmonics that just fizzle forever,” he says.
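To make the idea of "changing the rules governing energy loss" concrete, here is a minimal sketch of a plucked string simulated with a textbook finite-difference scheme. It is not the NESS code, which the article does not show. The function name `simulate_string`, the `loss` coefficient, and all default values are assumptions chosen for illustration. Setting `loss` to zero removes damping, so the string's energy never decays.

```python
def simulate_string(loss, steps=2000, n=50, courant=0.9):
    """Finite-difference plucked string, fixed at both ends.

    `loss` is a hypothetical damping coefficient (1/s); 0.0 means no energy loss.
    Returns the discrete energy of the string after each time step.
    """
    c = 1.0                 # wave speed (arbitrary units)
    h = 1.0 / n             # grid spacing for a string of length 1
    k = courant * h / c     # time step; courant <= 1 keeps the scheme stable
    lam2 = courant ** 2
    sk = loss * k

    # Triangular "pluck" at 30% of the string's length, released from rest.
    peak = int(0.3 * n)
    cur = [i / peak if i <= peak else (n - i) / (n - peak) for i in range(n + 1)]
    prev = cur[:]

    energies = []
    for _ in range(steps):
        nxt = [0.0] * (n + 1)   # end points stay at zero (fixed ends)
        for i in range(1, n):
            lap = cur[i + 1] - 2.0 * cur[i] + cur[i - 1]
            # Damped wave equation u_tt = c^2 u_xx - 2*loss*u_t, centered differences.
            nxt[i] = (2.0 * cur[i] - (1.0 - sk) * prev[i] + lam2 * lap) / (1.0 + sk)
        prev, cur = cur, nxt

        # Discrete energy: kinetic part plus potential part across two time levels.
        kinetic = 0.5 * h * sum(((a - b) / k) ** 2 for a, b in zip(cur, prev))
        potential = 0.5 * c * c / h * sum(
            (cur[i + 1] - cur[i]) * (prev[i + 1] - prev[i]) for i in range(n)
        )
        energies.append(kinetic + potential)
    return energies


if __name__ == "__main__":
    for loss in (0.5, 0.0):
        e = simulate_string(loss)
        print(f"loss={loss}: final/initial energy = {e[-1] / e[0]:.3g}")
```

With a positive `loss` the energy ratio falls toward zero, as it would for a real string. With `loss=0.0` it stays at 1 apart from rounding error, a toy version of harmonics that "fizzle forever."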

The software developed by NESS continues to improve. The team’s algorithms have sped up with the help of the university’s parallel computing center, which operates the UK’s ARCHER supercomputer. And Ducceschi, Bilbao, and others have spun off a startup called Physical Audio, which sells plug-ins that can run on laptops.

Sassoon thinks this new generation of digital sound will change the future of music. One downside is that fewer people will learn to play physical instruments, he says. On the other hand, computers could start to sound more like real musicians—or something different altogether. “And that’s empowering,” he says. “It opens up new kinds of creativity.”
