Automatic for the robots

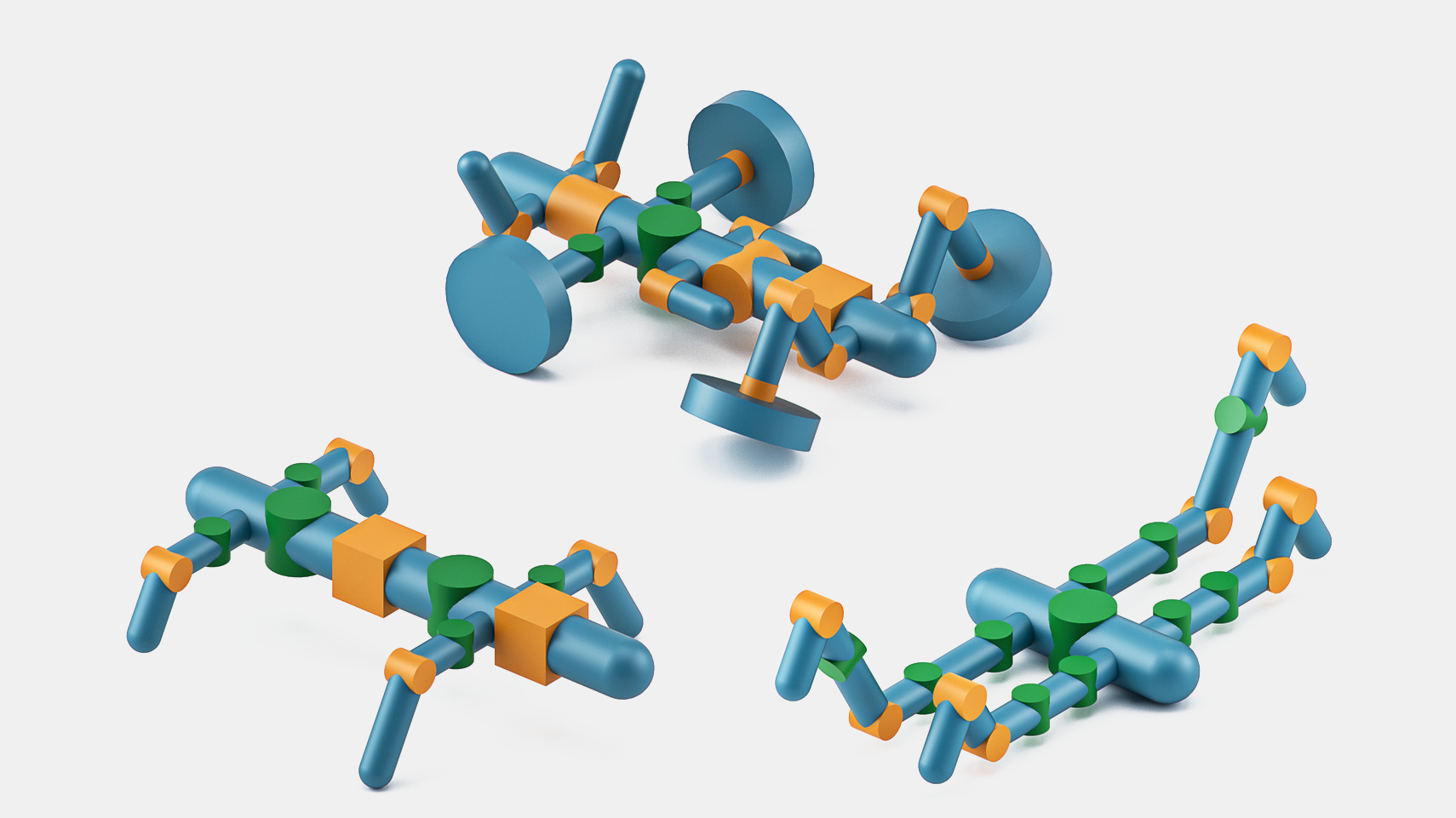

A computer model inspired by animal anatomy generates and tests hundreds of robot designs.

Robot design is usually a painstaking process, but MIT researchers have developed a system that helps automate the task. Once it’s told which parts you have—such as wheels, joints, and body segments—and what terrain the robot will need to navigate, RoboGrammar is on the case, generating optimized structures and control programs.

To rule out “nonsensical” designs, the researchers developed an animal-inspired “graph grammar”—a set of rules for how parts can be connected, says Allan Zhao, a PhD student in the Computer Science and Artificial Intelligence Laboratory. The rules were particularly informed by the anatomy of arthropods such as insects and lobsters, which all have a central body with a variable number of segments that may have legs attached. (The grammar also allows wheels.)
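To make the idea of a grammar concrete, here is a minimal sketch in Python. It is not RoboGrammar's actual rule set: the rule names, the three attachment types, and the `expand` helper are all assumptions made for illustration, and the toy version produces a flat list of segments rather than a true graph. It does show how a handful of rewriting rules can yield many body plans while never producing, say, a leg that isn't attached to a body segment.

```python
import random

# Hypothetical toy rules (not RoboGrammar's real grammar):
# a BODY is one SEGMENT, or a SEGMENT followed by more BODY;
# a SEGMENT is bare, or carries a pair of legs or a pair of wheels.
RULES = {
    "BODY": [["SEGMENT"], ["SEGMENT", "BODY"]],
    "SEGMENT": [["segment"], ["segment+legs"], ["segment+wheels"]],
}

def expand(symbol, rng, depth=0, max_depth=4):
    """Recursively rewrite a symbol until only terminal parts remain."""
    if symbol not in RULES:
        return [symbol]  # terminal: an actual part
    options = RULES[symbol]
    if symbol == "BODY" and depth >= max_depth:
        options = [["SEGMENT"]]  # stop the body from growing forever
    parts = []
    for child in rng.choice(options):
        parts.extend(expand(child, rng, depth + 1, max_depth))
    return parts

rng = random.Random(0)  # fixed seed so runs are repeatable
designs = [expand("BODY", rng) for _ in range(5)]
for design in designs:
    print(design)
```

Every design printed by this sketch is a chain of one to five segments, each optionally fitted with legs or wheels; anything outside those rules simply cannot be generated.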

RoboGrammar can generate thousands of potential structures based on these rules. Choosing among them requires simulating each robot’s performance with a controller—the instructions governing the movement sequence of a robot’s motors. Using an algorithm that prioritizes rapid forward movement, the researchers developed an individual controller for each robot. Then they turned the simulated robots loose and let a neural network figure out which designs moved most efficiently.

Zhao, whose team plans to test some of the winning designs in the real world, describes RoboGrammar as a “tool for robot designers to expand the space of robot structures they draw upon.” To his surprise, though, most of the structures it came up with were four-legged, just like the majority designed by humans.
