What the complex math of fire modeling tells us about the future of California’s forests

California’s fires are getting bigger and harder to predict. The only way to tame them may be to remake the landscape itself.

At the height of California’s worst wildfire season on record, Geoff Marshall looked down at his computer and realized that an enormous blaze was about to take firefighters by surprise.

Marshall runs the fire prediction team at the California Department of Forestry and Fire Protection (known as Cal Fire), headquartered in Sacramento, which gives him an increasingly difficult job: anticipating the behavior of wildfires that become less predictable every year.

The problem was obvious from where Marshall sat: California’s forests were caught between a management regime devoted to growing thick stands of trees—and eradicating the low-intensity fire that had once cleared them—and a rapidly warming, increasingly unstable climate.

As a result, more and more fires were crossing a poorly understood threshold from typical wildfires—part of a normal burn cycle for a landscape like California’s—to monstrous, highly destructive blazes. Sometimes called “megafires” (a scientifically meaningless term that loosely refers to fires that burn more than 100,000 acres), these massive blazes are occurring more often around the world, blasting across huge swaths of California, Chile, Australia, the Amazon, and the Mediterranean region.

At that particular moment in California last September, several unprecedented fires were burning simultaneously. Together, they would double the record-setting acreage of the 2018 wildfire season in less than a month. But just as concerning to Marshall as their size was that the biggest fires often behaved in unexpected ways, making it harder to forecast their movements.

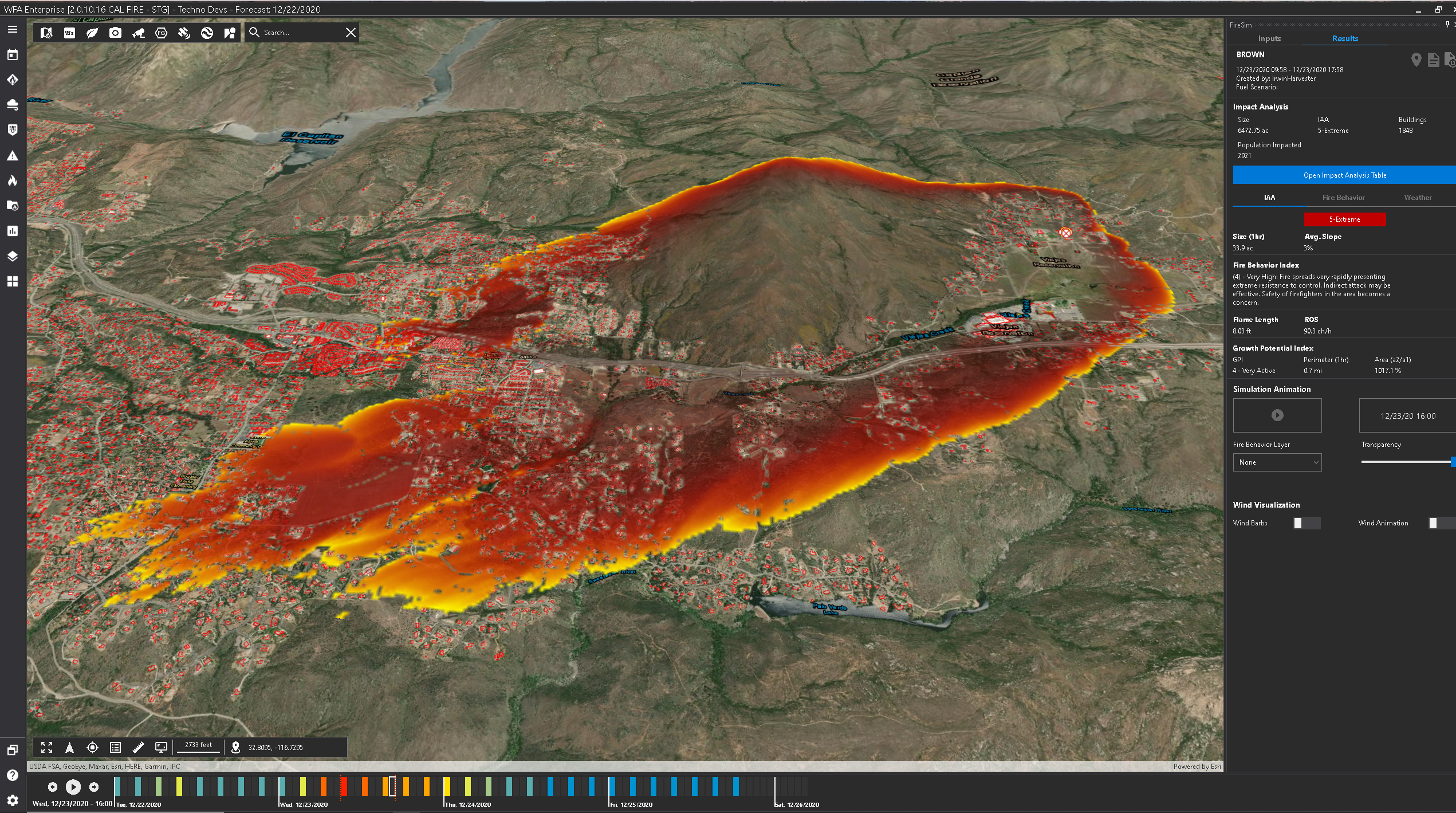

To face this new era, Marshall had a new tool at his disposal: Wildfire Analyst, a real-time fire prediction and modeling program that Cal Fire first licensed from a California-based firm called TechnoSylva in 2019.

The work of predicting how fires spread had long been a matter of hand-drawn ellipses and models so slow analysts set them before bed and hoped they were done in the morning. Wildfire Analyst, on the other hand, funnels data from dozens of distinct feeds: weather forecasts, satellite images, and measures of moisture in a given area. Then it projects all that on an elegant graphic overlay of fires burning across California.

Every night, while fire crews sleep, Wildfire Analyst seeds those digital forests with millions of test burns, pre-calculating their spread so that human analysts like Marshall can do simulations in a matter of seconds, creating “runs” they can port to Google Maps to show their superiors where the biggest risks are. But this particular risk, Marshall suddenly realized, had slipped past the program.

The display now showed a cluster of bright pink and green polygons creeping over the east flank of the Sierras, near the town of Big Creek. The polygons, one of the many feeds ported directly into Wildfire Analyst, were from FireGuard, a real-time feed from the US Department of Defense that estimates all wildfires’ current locations. They were spreading, far faster than they should have been, up the Big Creek drainage.

In its calculations, Wildfire Analyst had made a number of assumptions. It “saw,” on the other side of Big Creek, a dense stand of heavy timber. Such stands were traditionally thought to impede the rapid spread of fire, which models attribute largely to fine fuels like pine straw.

But Marshall suddenly realized, as the algorithms driving Wildfire Analyst had not, that the drainage held all the ingredients for a perfect firestorm. That “heavy timber,” he knew, was in fact a huge patch of dead trees weakened by beetles, killed by drought, and baked by two weeks of 100 °F heat into picture-perfect firewood. And the Big Creek valley would focus the wind onto the fire like a bellows. With no weather station at the mouth of the creek, the program couldn’t see all that.

Marshall went back to his computer and re-ran some numbers with the new variables factored in. He watched on his screen as the fire spread at frightening speed across the Sierra. “I went to the operation trailer and told my uppers: I think it’s going to jump the San Joaquin River,” he recalls. “And if it does, it’s going to run big.”

This was, at that moment, a far-fetched claim—no California fire had ever made a nine-mile run in heavy timber, no matter how dry. But in this case, the trees’ combustion created powerful plumes of superheated air that drove the fire on. It jumped the river and raced through the timber to a reservoir known as Mammoth Pool, where a last-minute airlift saved 200 campers from fiery death.

The Creek Fire was a case study in the challenge facing today’s fire analysts, who are trying to predict the movements of fires that are far more severe than those seen just a decade ago. Since we understand so little about how fire works, they’re using mathematical tools built on outdated assumptions, as well as technological platforms that fail to capture the uncertainty in their work. Programs like Wildfire Analyst, while useful, give an impression of precision and accuracy that can be misleading.

Getting ahead of the most destructive fires will require not simply new computational tools but a sweeping change in how forests are managed. Along with climate change, generations of land and environmental management decisions—intended to preserve the forests that many Californians feel a duty to protect—have inadvertently created this new age of hyper-destructive fire.

But if these massive fires continue, California could see the forests of the Sierra erased as thoroughly as those of Australia’s Blue Mountains. Avoiding this nightmare scenario will require a paradigm shift. Residents, fire commanders, and political leaders must switch from a mindset of preventing or controlling wildfire to learning to live with it. That will mean embracing fire management techniques that encourage more frequent burns—and ultimately allowing fires to forever transform the landscapes that they love.

Shaky assumptions

In late October, Marshall shared his screen and took me on a tour of Wildfire Analyst. We watched the fluorescent FireGuard polygons of a new flame “finger” break out from the smoldering August Complex. With a few clicks, he laid four tiny virtual fires along the real fire’s edge, on the far side of the fire line that had blocked its progress. A few seconds later, fire blossomed across the simulated landscape. Under current conditions, the model estimated, a fire that broke out at those points could “blow out” to 8,000 acres (a nearly three-mile run) within 24 hours.

For Marshall and the rest of Cal Fire’s analysts, Wildfire Analyst provides a standardized platform on which to share data from fires they’re watching, projections about the runs they might make, and hacks to make a simulated fire approximate the behavior of a real one. With that information, they try to anticipate where a fire is going to go next, which in theory can drive decisions about where to send crews or which regions to evacuate.

Like any model, Wildfire Analyst is only as good as the data that feeds it—and that data is only as good as our scientific understanding of the phenomenon in question. When it comes to the mechanics of wildland fire, that understanding is “medieval,” says Mark Finney, director of the US Forest Service’s Missoula Fire Lab.

Our current approach to fire modeling, which powers every real-time analytic platform including TechnoSylva’s Wildfire Analyst, is built on a particular set of equations that a researcher named Richard Rothermel derived at the Fire Lab nearly half a century ago to calculate how fast fire would move, with given wind conditions, through given fuels.

Rothermel’s key assumption—perhaps a necessary one, given the computational tools available at the time, but one we now know to be false—was that fires spread only through radiation as the front of the flame catches fine fuels (pine straw, leaf litter, twigs) on the ground.

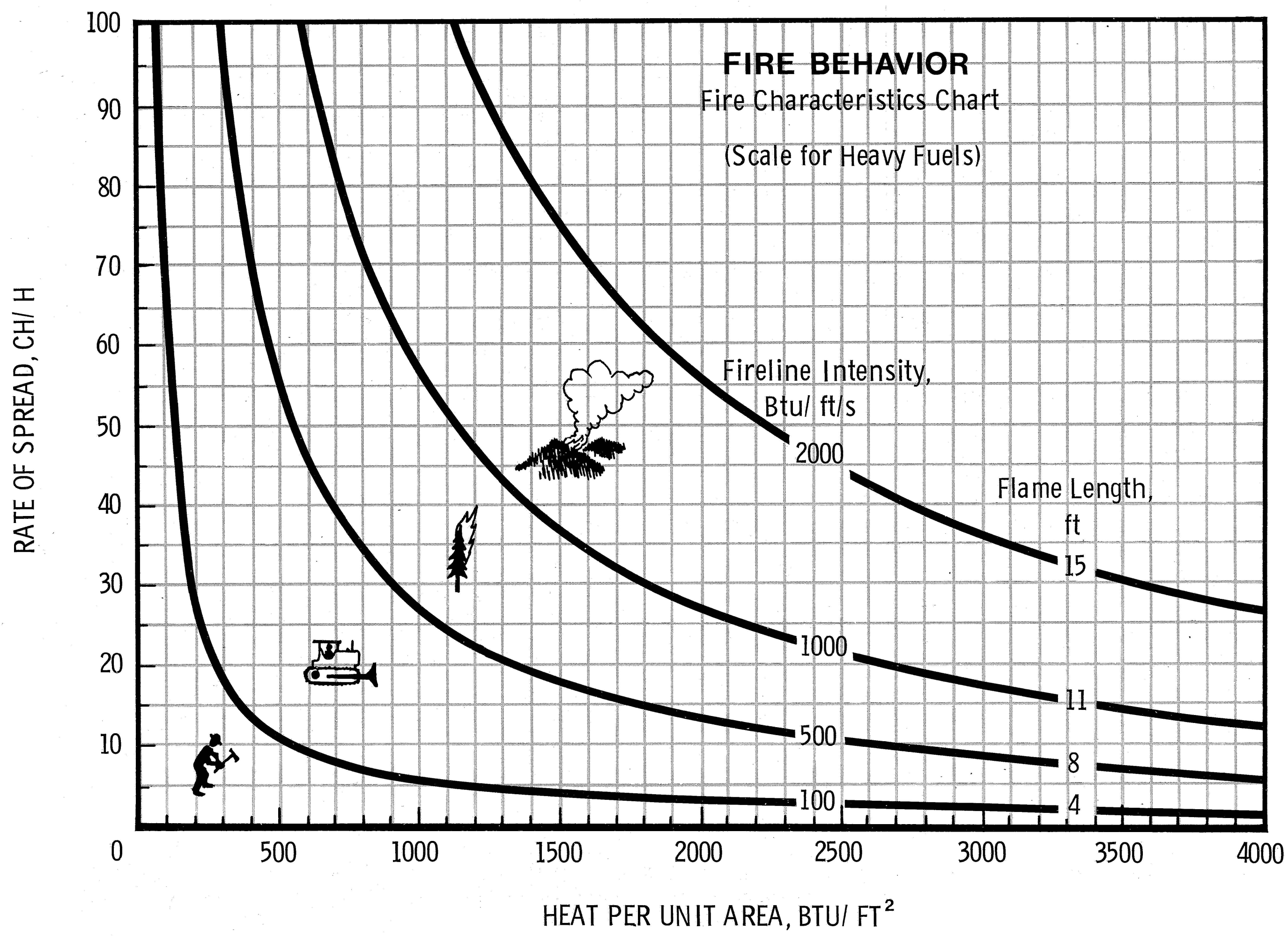

That spread, Rothermel found, drove outward in a thin, expanding edge along an ellipse. To figure out how a fire would grow, firefighters in the field used “nomograms”: premade graphs that assigned specific values for wind speed, slope, and fuel conditions to reveal an average speed of spread.
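The geometry behind that kind of projection can be sketched in a few lines. What follows is a simplified illustration, not Rothermel's actual equations: it assumes the fire starts at one focus of the ellipse, that conditions stay constant, and that the forward spread rate and the ellipse's length-to-breadth ratio are already known (the kind of numbers a nomogram lookup might supply). The function name and parameters are hypothetical.

```python
import math


def fire_ellipse(head_rate, length_to_breadth, minutes):
    """Return (length, breadth, area) of an idealized elliptical fire.

    head_rate: forward spread rate in metres per minute (assumed input).
    length_to_breadth: ratio of the ellipse's length to its width (>= 1).
    minutes: time since ignition.
    Assumes ignition at one focus of the ellipse and constant conditions.
    """
    if length_to_breadth < 1:
        raise ValueError("length_to_breadth must be >= 1")
    # Eccentricity follows from the shape ratio alone.
    ecc = math.sqrt(1.0 - 1.0 / length_to_breadth ** 2)
    # With ignition at a focus, the back of the fire creeps against the
    # wind at a fraction of the head rate fixed by the eccentricity.
    back_rate = head_rate * (1.0 - ecc) / (1.0 + ecc)
    length = (head_rate + back_rate) * minutes
    breadth = length / length_to_breadth
    area = math.pi * length * breadth / 4.0  # square metres
    return length, breadth, area


# No wind or slope (ratio 1): the fire grows as a circle of radius rate * time.
length, breadth, area = fire_ellipse(head_rate=10.0, length_to_breadth=1.0, minutes=60)
print(length, breadth)  # 1200.0 1200.0
```

A ratio above 1 stretches the same fire downwind and slows its back edge, which is the trade-off analysts once read off paper charts.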

In his early days in the field, Finney says, “you would spread your folder of nomograms on the hood of your pickup and make your projections in thick pencil,” charting on a topo map where the fire would be in an hour, or two, or three. Rothermel’s equations allowed analysts to model fire like a game of Go, across homogeneous cells of a two-dimensional landscape.
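To make the Go-board picture concrete, here is a minimal sketch of a cell-based spread calculation. It is an assumption-laden toy rather than the method any real product uses: each cell gets a single hypothetical spread rate, fire moves only between the four adjacent cells, and arrival times are found with a standard shortest-path search.

```python
import heapq


def arrival_times(rate, ignition):
    """Earliest fire arrival time (minutes) at each cell of a grid.

    rate: 2-D list of spread rates in cells per minute; 0 means unburnable.
    ignition: (row, col) of the cell where the fire starts.
    Fire moves between 4-connected neighbours; crossing into a cell costs
    1 / rate of that cell. Cells the fire never reaches stay at infinity.
    """
    rows, cols = len(rate), len(rate[0])
    times = [[float("inf")] * cols for _ in range(rows)]
    r0, c0 = ignition
    times[r0][c0] = 0.0
    queue = [(0.0, r0, c0)]
    while queue:
        t, r, c = heapq.heappop(queue)
        if t > times[r][c]:
            continue  # stale queue entry; a faster route was already found
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols and rate[nr][nc] > 0:
                nt = t + 1.0 / rate[nr][nc]
                if nt < times[nr][nc]:
                    times[nr][nc] = nt
                    heapq.heappush(queue, (nt, nr, nc))
    return times


# A 3x3 landscape: an unburnable cell in the middle, slow fuel in one corner.
grid = [
    [1.0, 1.0, 1.0],
    [1.0, 0.0, 1.0],
    [1.0, 1.0, 0.5],
]
times = arrival_times(grid, ignition=(0, 0))
print(times[2][2])  # 5.0
```

Everything the model "knows" sits in the per-cell rates, so a cell mislabeled as slow-burning timber produces a confident but wrong arrival time.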

This is where things have stood for decades. Wildfire Analyst and similar tools represent a repackaging of this approach more than a fundamental improvement on it. (TechnoSylva did not respond to multiple interview requests.) What’s needed now is less a technique for real-time prediction than a fundamental reappraisal of how fire works—and a concerted effort to restore California’s landscapes to something approaching a natural equilibrium.

Complications

The problem for products like Wildfire Analyst, and for analysts like Marshall, is easy to state and hard to solve. A fire is not a linear system, proceeding from cause to effect. It is a “coupled” system in which cause and effect are tangled up. Even on the scale of a candle, ignition kicks off a self-sustaining reaction that deforms the environment around it, changing the entire system further—fuel decaying into flame, sucking in more wind, which stokes the fire further and breaks down more fuel.

Such systems are notoriously sensitive to even small changes, which makes them fiendishly difficult to model. A small variance in the starting data can lead, as with the Creek Fire calculations, to an answer that is exponentially wrong. In terms of this kind of nonlinear complexity, fire is a lot like weather—but the computational fluid dynamic models that are used to build forecasts for, say, the National Weather Service require supercomputers. The models that try to capture the complexity of a wildland blaze are typically hundreds of times simpler.
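That sensitivity is easy to demonstrate with a toy system. The sketch below uses the logistic map, a textbook example of a simple nonlinear rule, and is in no sense a fire model; the starting value and the size of the nudge are arbitrary assumptions. It runs the same rule twice from starting points that differ by one part in a billion and tracks how far apart the two runs drift.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate the logistic map x -> r * x * (1 - x), starting from x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def gaps(x0, nudge, steps):
    """Absolute difference, step by step, between two nearby trajectories."""
    a = logistic_trajectory(x0, steps)
    b = logistic_trajectory(x0 + nudge, steps)
    return [abs(p - q) for p, q in zip(a, b)]


# Two runs that begin one billionth apart.
g = gaps(x0=0.2, nudge=1e-9, steps=60)
for step in (0, 10, 20, 30, 40, 50, 60):
    print(step, g[step])
```

The gap starts out negligible, grows on average by a roughly constant factor per step, and within a few dozen steps is as large as the values themselves.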

Pioneering scientists like Rothermel dealt with this intractable problem by ignoring it. Instead, they searched for factors, such as wind speed and slope, that could help them predict a fire’s next move in real time.

Looking back, Finney says, it’s a miracle that Rothermel’s equations work for wildfires at all. There’s the sheer difference in scale—Rothermel derived his equations from tiny, controlled fires set in 18-inch fuel beds. But there are also more fundamental errors. Most glaring was Rothermel’s assumption that fire spreads only by radiation, instead of through the convection currents that you see when a campfire flickers.

This assumption isn’t true, and yet for some fires, even huge ones like 2017’s Northwest Oklahoma Complex, which burned more than 780,000 acres, Rothermel’s spread equations still seem to work. But at certain scales, and under certain conditions, fire creates a new kind of system that defies any such attempt to describe it.

The Creek Fire in California, for example, didn’t just go big. It created a plume of hot air that pooled under the stratosphere, like steam against the lid of a pressure cooker. Then it popped through to 50,000 feet, sucking in air from below that drove the flames on, creating a storm system—complete with lightning and fire tornadoes—where no storm should have been.

Other huge, destructive fires appear to ricochet off the weather, or each other, in chaotic ways. Fires usually quiet down at night, but in 2020, two of the biggest runs in California broke out at night. Since heat rises, fires usually burn uphill, but in the Bear Fire, two enormous flame heads raced 22 miles downhill, a line of tornadic plumes spinning between them.

Finney says we don’t know if the intensity caused the strange behaviors or vice versa, or if both rose from some deeper dynamic. One measure of our ignorance, in his view, is that we can’t even rely on it: “It would be really nice to know when our current models will work and when they won’t,” he says.

Illusions

To Finney and other fire scientists, the danger with products like Wildfire Analyst is not necessarily that they’re inaccurate. All models are. It’s that they hide solutions inside a black box, and—far more important—focus on the wrong problem.

Unlike Wildfire Analyst, the older generation of tools required analysts to know precisely what hedges and assumptions they were making. The new tools leave all that to the computer. Such products play into the field’s obsession with modeling, scientist after scientist told me, despite the fact that no model can predict what fire will do.

“You can always calibrate the system afterward to match your observations,” says Brandon Collins, a wildfire research scientist at UC Berkeley. “But can you predict it beforehand?”

Doing so is a question of science rather than technology: it would require primary research to develop and test a new theory of flame. But such work is expensive, and most wildfire research money is awarded to solve specific technical problems. The Missoula Fire Lab survives on the remnants of a Great Society–era budget; its sister facility, the Macon Fire Lab in Georgia, was shut down in the 1990s.

Collins and Finney are doing what they can with the funds available to them. They’re both part of a public-private fire science working group called Pyregence that’s converting a grain silo into a furnace to see how large logs, like the fallen timber on Big Creek, spread fire.

Meanwhile, Finney’s team at the Missoula Fire Lab is working to develop a data set that answers fundamental questions about fire—a potential basis for new models. They aim to describe how wind on smoldering logs drives new flame fronts; quantify the likelihood that embers cast by a flame will “spot,” or ignite, new fires; and study the role that pine forests seem to play in encouraging their own burning.

The point of those models is less to see where a particular fire will go once it’s broken out, and more to serve as a planning tool to help Californians better manage the fire-prone, fire-suppressed landscape they live in.

Like ecosystems in Chile, Portugal, Greece, and Australia—all regions that have recently seen more megafires—California’s conifer forests evolved over thousands of years in which natural and human-caused fires periodically cleared out excess fuel and created the space and nutrients for new growth.

Before the 19th century, Native Americans are thought to have deliberately burned about as much of California every year as burned there in 2020. Similar practices survived until as recently as the 1970s—ranchers in the Sierra foothills would burn brush to encourage new growth for their animals to eat. Loggers pulled tons of timber from forests groomed to produce huge volumes of it, burning the debris in place.

Then, as ranchers went bust and sold their land to developers, pastureland became residential communities. Clean-air regulations discouraged the remaining ranchers from burning. And decades of conflict between environmental organizations and logging companies ended, in the 1990s, with loggers deserting the forests they had once clear-cut.

In the Sierra—as in these other regions now prone to huge, destructive fires—a heavily altered landscape that was long ago torn from any natural equilibrium was largely abandoned. Millions of acres of pine grew in, packed and thirsty. Eventually many were killed by drought and bark beetles, accumulating into a preponderance of fuel. Fires that could have cleared the land and reset the forest were extinguished by the US Forest Service and Cal Fire, whose primary objective had become wholesale fire suppression.

Breaking free of this legacy won’t be easy. The future Finney is working toward is one where people can compare various models and decide which will work best for a given situation. He and his team hope better data will lead to better planning models that, he says, “could give us the confidence to let some fires burn and do our work for us.”

Still, he says, focusing too much on models risks missing a more important question: “What if we are ignoring the basic aspect of wildfire—that we need more fire, proper fire, so that we don’t let wildfire surprise and destroy us?”

Living with wildfires

In 2014, the King Fire raged across the California Sierra, leaving a burn scar where trees have still not regrown. Instead, says Forest Service silviculturist Dana Walsh, they’ve been replaced by thick mats of chaparral, a fire-prone shrub that has squeezed out the forest’s return.

“People ask what happens if we just let nature take its course after a big fire,” Walsh says. “You get 30,000 acres of chaparral.”

This is the danger that landscapes from the Pyrenees to the California Sierra to Australia’s Blue Mountains now face, says Marc Castellnou, a Catalan fire scientist who is a consultant to TechnoSylva. Over the last two decades, he’s studied the rise of megafires around the world, watching as they smashed records for length or speed of runs.

For too long, he says, California’s fire and forest policy has resisted an inevitable change in the landscape. The state doesn’t need flawless predictive tools to see where its forests are headed, he says: “The fuel is building up, the energy is building up, the atmosphere is getting hotter.” The landscape will rebalance itself.

California’s choice—as in Catalonia, where Castellnou is chief scientist for the autonomous province’s 4,000-person fire corps—is to either move with that change and have some chance of influencing it, or be bowled over by megafires.

The goal is less to regenerate native forests in these areas—which Castellnou believes have been made obsolete by climate change—than to work with the landscape to develop a new type of forest where wildfires are less likely to blow out into massive blazes.

In large measure, his approach lies in returning to old land management techniques. Rural people in his region once controlled destructive fires by starting or allowing frequent, low-intensity fires, and using livestock to eat down brush in the interim. They planted stands of fire-resistant hardwood species that stood like sentinels, blocking waves of flame.

For Castellnou, though, this also means making politically difficult choices. In July 2019, just outside of Tivissa, Spain, I watched him explain to a group of rural Catalan mayors and olive farmers why he had let the area around their towns burn.

He’d worried that if crews slowed the Catalan fire, they might cause it to form a pyrocumulonimbus, a violent cloud of fire, thunder, and wind like the one that formed over the Creek Fire. Such a phenomenon could have spurred the fire on until it took the towns anyway. Now, he said, gesturing to the burn scar, the towns had a fire defense in place of a liability. It was another tile in a mosaic landscape of pasture, forest, and old fire scars that could interrupt wildfire.

As tough as planned burns are for many to swallow, letting wildfires burn through towns—even evacuated ones—is an even tougher sell. And replacing pristine Sierra Nevada forests with a landscape able to survive both drought and the most destructive fires—say, open stands of ponderosa pine punctuated by fields of grass, picked over by goats or cattle—might feel like a loss.

Doing any of this well means adopting a change in philosophy as big as any change in predictive tech or science—one that would welcome fire back as a natural part of the environment. “We are not trying to save the landscape,” Castellnou says. “We are trying to help create the next landscape. We are not here to fight flames. We are here to make sure we have a forest tomorrow.”
