The true dangers of AI are closer than we think

Forget superintelligent AI: algorithms are already creating real harm. The good news: the fight back has begun.

As long as humans have built machines, we’ve feared the day they could destroy us. Stephen Hawking famously warned that AI could spell an end to civilization. But to many AI researchers, these conversations feel unmoored. It’s not that they don’t fear AI running amok—it’s that they see it already happening, just not in the ways most people would expect.

AI is now screening job candidates, diagnosing disease, and identifying criminal suspects. But instead of making these decisions more efficient or fair, it’s often perpetuating the biases of the humans on whose decisions it was trained.

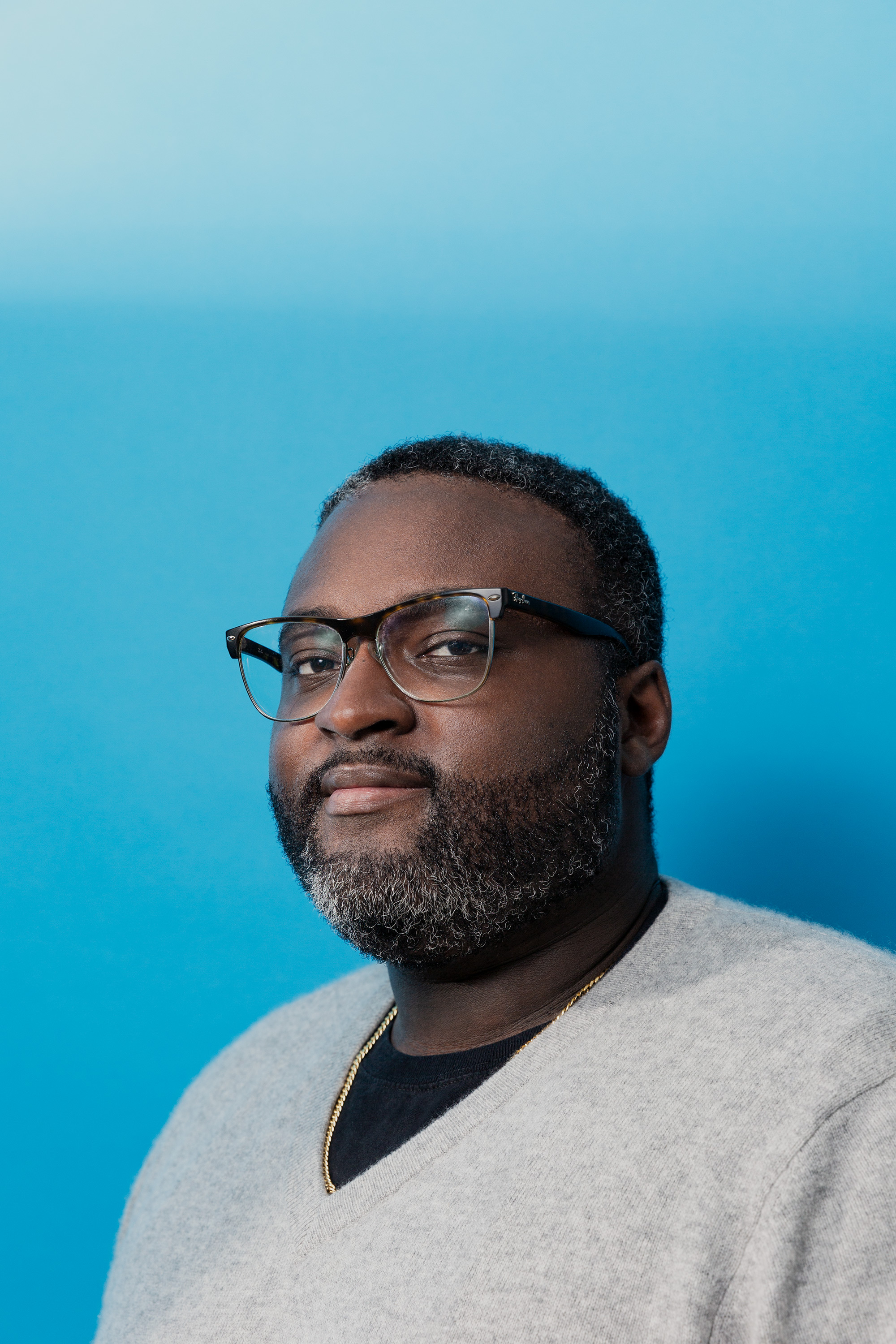

William Isaac is a senior research scientist on the ethics and society team at DeepMind, an AI startup that Google acquired in 2014. He also co-chairs the Fairness, Accountability, and Transparency conference—the premier annual gathering of AI experts, social scientists, and lawyers working in this area. I asked him about the current and potential challenges facing AI development—as well as the solutions.

Q: Should we be worried about superintelligent AI?

A: I want to shift the question. The threats overlap, whether it’s predictive policing and risk assessment in the near term, or more scaled and advanced systems in the longer term. Many of these issues also have a basis in history. So potential risks and ways to approach them are not as abstract as we think.

There are three areas that I want to flag. Probably the most pressing one is this question about value alignment: how do you actually design a system that can understand and implement the various forms of preferences and values of a population? In the past few years we’ve seen attempts by policymakers, industry, and others to try to embed values into technical systems at scale, in areas like predictive policing, risk assessments, and hiring. It’s clear that these systems exhibit some form of bias that reflects society. The ideal system would balance out the needs of many stakeholders and many people in the population. But how does society reconcile its own history with its aspirations? We’re still struggling with the answers, and that question is going to get exponentially more complicated. Getting that problem right is not just something for the future, but for the here and now.

The second one would be achieving demonstrable social benefit. Up to this point there is still little empirical evidence that AI technologies will achieve the broad-based social benefit that we aspire to.

Lastly, I think the biggest one that anyone who works in the space is concerned about is: what are the robust mechanisms of oversight and accountability?

Q: How do we overcome these risks and challenges?

A: Three areas would go a long way. The first is to build a collective muscle for responsible innovation and oversight. Make sure you’re thinking about where the forms of misalignment or bias or harm exist. Make sure you develop good processes for how you ensure that all groups are engaged in the process of technological design. Groups that have been historically marginalized are often not the ones that get their needs met. So how we design processes to actually do that is important.

The second one is accelerating the development of the sociotechnical tools to actually do this work. We don’t have a whole lot of tools.

The last one is providing more funding and training for researchers and practitioners—particularly researchers and practitioners of color—to conduct this work. Not just in machine learning, but also in STS [science, technology, and society] and the social sciences. We want to not just have a few individuals but a community of researchers to really understand the range of potential harms that AI systems pose, and how to successfully mitigate them.

Q: How far have AI researchers come in thinking about these challenges, and how far do they still have to go?

A: In 2016, I remember, the White House had just come out with a big data report, and there was a strong sense of optimism that we could use data and machine learning to solve some intractable social problems. Simultaneously, there were researchers in the academic community who had been flagging in a very abstract sense: “Hey, there are some potential harms that could be done through these systems.” But the two groups largely had not interacted at all. They existed in separate silos.

Since then, we’ve just had a lot more research targeting this intersection between known flaws within machine-learning systems and their application to society. And once people began to see that interplay, they realized: “Okay, this is not just a hypothetical risk. It is a real threat.” So if you view the field in phases, phase one was very much highlighting and surfacing that these concerns are real. The second phase now is beginning to grapple with broader systemic questions.

Q: So are you optimistic about achieving broad-based beneficial AI?

A: I am. The past few years have given me a lot of hope. Look at facial recognition as an example. There was the great work by Joy Buolamwini, Timnit Gebru, and Deb Raji in surfacing intersectional disparities in accuracies across facial recognition systems [i.e., showing these systems were far less accurate on Black female faces than white male ones]. There’s the advocacy that happened in civil society to mount a rigorous defense of human rights against misapplication of facial recognition. And also the great work that policymakers, regulators, and community groups from the grassroots up were doing to communicate exactly what facial recognition systems were and what potential risks they posed, and to demand clarity on what the benefits to society would be. That’s a model of how we could imagine engaging with other advances in AI.

But the challenge with facial recognition is we had to adjudicate these ethical and values questions while we were publicly deploying the technology. In the future, I hope that some of these conversations happen before the potential harms emerge.

Q: What do you dream about when you dream about the future of AI?

A: It could be a great equalizer. Like if you had AI teachers or tutors that could be available to students and communities where access to education and resources is very limited, that’d be very empowering. And that’s a nontrivial thing to want from this technology. How do you know it’s empowering? How do you know it’s socially beneficial?

I went to graduate school in Michigan during the Flint water crisis. When the initial incidents involving lead pipes emerged, the records they had for where the piping systems were located were on index cards at the bottom of an administrative building. The lack of access to technologies had put them at a significant disadvantage. It means the people who grew up in those communities, over 50% of whom are African-American, grew up in an environment where they didn’t get basic services and resources.

So the question is: If done appropriately, could these technologies improve their standard of living? Machine learning was able to identify and predict where the lead pipes were, so it reduced the actual repair costs for the city. But that was a huge undertaking, and it was rare. And as we know, Flint still hasn’t gotten all the pipes removed, so there are political and social challenges as well—machine learning will not solve all of them. But the hope is we develop tools that empower these communities and provide meaningful change in their lives. That’s what I think about when we talk about what we’re building. That’s what I want to see.
