Create your own moody quarantine music with Google’s AI

The Google Magenta team, which builds machine-learning tools for the creative process, has made models that help you compose melodies and tools that help you sketch cats. It does this mostly because it’s fun, but also to explore how AI can make creation more accessible. Its latest project gives anyone a chance to make quarantine tunes to vibe to, with no music training necessary.

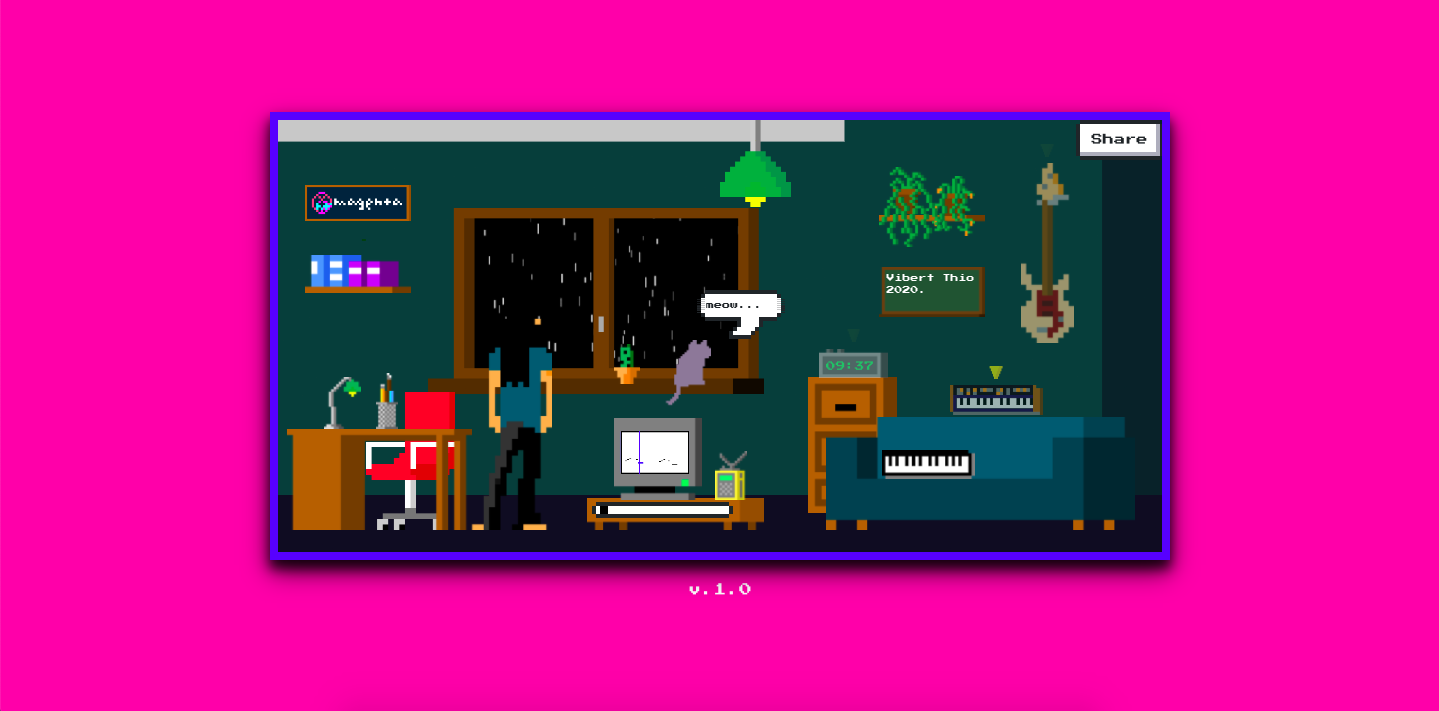

Lo-Fi Player, designed by Vibert Thio, a technologist and artist who interned with the team this summer, lets users interact with objects in a virtual room to mix their own lo-fi hip-hop soundtracks. The goal is to make the music-mixing experience as simple and friendly as possible. The room is a two-dimensional, pixelated drawing displayed in a web browser. Clicking on different objects, like the clock and the piano, prompts the user to adjust different tracks, like the drum line and melody.

There are two machine-learning models at work in the background. One, tucked away in the radio, generates new melodies when clicked on; the other, hidden in the TV, interpolates between two melodies to create something that sounds a little bit like both.
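The article does not describe how the TV's model blends two melodies. As a rough illustration only, one common way to interpolate is to represent each melody as a vector of numbers and take a weighted average of the two. The sketch below assumes that such vectors already exist (the numbers are made up) and that some model could turn the blended vector back into notes; the function name `interpolate` and the variable names are hypothetical, not part of Lo-Fi Player.

```python
from typing import List


def interpolate(a: List[float], b: List[float], t: float) -> List[float]:
    """Blend two equal-length vectors: t=0 returns a, t=1 returns b."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be between 0 and 1")
    # Weighted average, element by element.
    return [(1.0 - t) * x + t * y for x, y in zip(a, b)]


# Hypothetical encodings of two melodies (illustrative values only).
melody_a = [0.0, 1.0, -2.0]
melody_b = [1.0, 3.0, 2.0]

# A point halfway between the two, which would sound "a little bit like both"
# once decoded back into notes by a model (not shown here).
halfway = interpolate(melody_a, melody_b, 0.5)
```

Moving `t` from 0 toward 1 slides the result from the first melody toward the second, which is the sense in which the output resembles both inputs.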

Most of the sounds in the room, however, are not generated by machine learning—and that’s kind of the point. Throughout the process, Thio worked with lo-fi producers to curate bass lines, drum lines, and background ambience that exemplify the genre and sound good. He also wrote four melody options that users can choose from. The machine learning adds just enough of a wild card on top of the scripted tracks to give each user a unique mix.

The initial launch of Lo-Fi Player also includes an interactive YouTube livestream, where users can type commands into the chat window to change the music. The idea is to make music creation a more collective experience, with quarantine in mind. “What a tiny, small thing to bring us together during covid,” says Doug Eck, a research scientist who supervised the project.

Right now the project is in its first version, but Thio already sees more possibilities. His dream project is to make a kind of TikTok for music creation—an interface that makes it really easy for non-musicians to play with music editing, share their creations, and express themselves.
