The long, complicated history of “people analytics”

If you work for Bank of America or the US Army, you might have used technology developed by Humanyze. The company grew out of research at MIT’s cross-disciplinary Media Lab and describes its products as “science-backed analytics to drive adaptability.”

If that sounds vague, it might be deliberate. Among the things Humanyze sells to businesses are devices for snooping on employees, such as ID badges with embedded RFID tags, near-field-communication sensors, and built-in microphones that track in granular detail the tone and volume (though not the actual words) of people’s conversations throughout the day. Humanyze has trademarked its “Organizational Health Score,” which it calculates on the basis of the employee data its badges collect and which it promises is “a proven formula to accelerate change and drive improvement.”

Or perhaps you work for one of the health-care, retail, or financial-services companies that use software developed by Receptiviti. The Toronto-based company’s mission is to “help machines understand people” by scanning emails and Slack messages for linguistic hints of unhappiness. “We worry about the perception of Big Brother,” Receptiviti’s CEO recently told the Wall Street Journal. He prefers calling employee surveillance “corporate mindfulness.” (Orwell would have had something to say about that euphemism, too.)

Such efforts at what their creators call “people analytics” are usually justified on the grounds of improving efficiency or the customer experience. In recent months, some governments and public health experts have advocated tracking and tracing applications as a means of stopping the spread of covid-19.

But in embracing these technologies, businesses and governments often avoid answering crucial questions: Who should know what about you? Is what they know accurate? What should they be able to do with that information? And is it ever possible to devise a “proven formula” for assessing human behavior?

If Then, by Jill Lepore. W.W. Norton, 2020, $28.95

Such questions have a history, but today’s technologists don’t seem to know it. They prefer to focus on the novel and ingenious ways their inventions can improve the human experience (or the corporate bottom line) rather than the ways people in previous eras have tried and failed to do the same. Each new algorithm or app is, in their view, an implicit rebuke of the past.

But that past can offer some much-needed guidance and humility. Despite faster computers and more sophisticated algorithms, today’s “people analytics” is fueled by an age-old reductive conceit: the notion that human nature in all its complexities can be reduced to a formula. We know enough about human behavior to exploit each other’s weaknesses, but not enough to significantly change it, except perhaps on the margins.

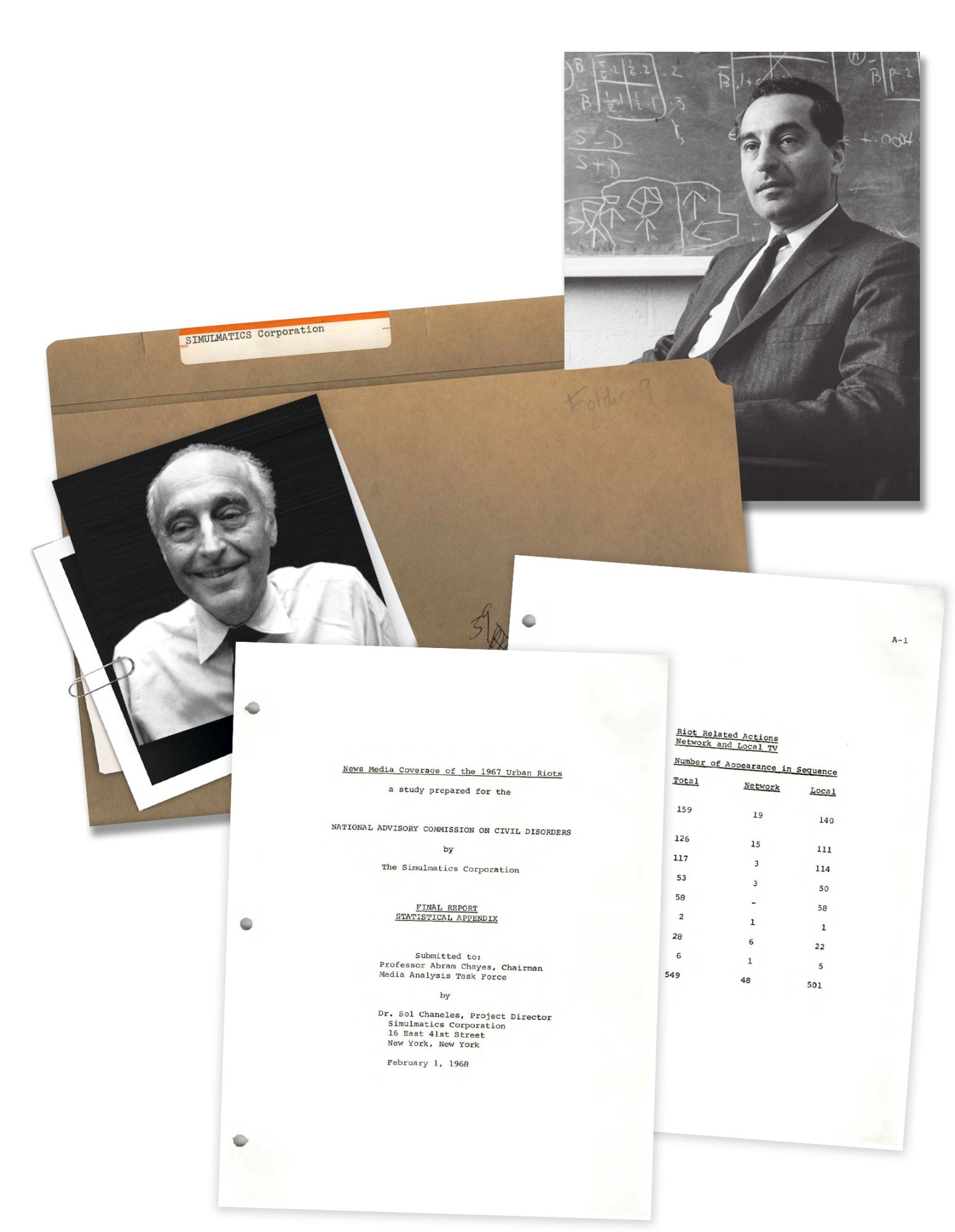

If Then, a new book by Jill Lepore, a historian at Harvard University and staff writer at the New Yorker, tells the story of a forgotten mid-20th-century technology company called the Simulmatics Corporation. Founded by a motley group of scientists and advertising men in 1959, it was, Lepore claims, “Cold War America’s Cambridge Analytica.”

A more accurate description might be that it was an effort by Democrats to compete with the Republican Party’s embrace of the techniques of advertising. By midcentury, Republicans were selling politicians to the public as though they were toilet paper or coffee. Simulmatics, which set up shop in New York City (but had to rent time on IBM’s computers to run its calculations), promised to predict the outcome of elections nearly in real time—a practice now so common it is mundane, but one then seen as groundbreaking, if not impossible.

The company’s name, a portmanteau of “simulation” and “automatic,” was a measure of its creators’ ambition: “to automate the simulation of human behavior.” Its main tool was the People Machine, which Lepore describes as “a computer program designed to predict and manipulate human behavior, all sorts of human behavior, from buying a dishwasher to countering an insurgency to casting a vote.” It worked by developing categories of people (such as white working-class Catholic or suburban Republican mother) and simulating their likely decision-making. (Targeted advertising and political campaigning today use broadly similar techniques.)

The company’s key players were drawn from a range of backgrounds. Advertising man Ed Greenfield was one of the first to glimpse how the new technology of television would revolutionize politics and became convinced that the earliest computers would exercise a similarly disruptive force on democracy. Ithiel de Sola Pool, an ambitious social scientist eager to work with the government to uncover the secrets of human behavior, eventually became one of the first, prescient theorists of social networks.

More than any other Simulmatics man, Pool embodied both the idealistic fervor and the heedlessness about norm-breaking that characterize technological innovators today. The son of radical parents who himself dabbled in socialism as a young man, he spent the rest of his life proving himself a committed Cold War patriot, and he once described his Simulmatics work as “a kind of Manhattan Project gamble in politics.”

One of the company’s first big clients was John F. Kennedy’s presidential campaign in 1960. When Kennedy won, the company claimed credit. But it also faced fears that the machine it had built could be turned to nefarious purposes. As one scientist said in a Harper’s magazine exposé of the company published shortly after the election, “You can’t simulate the consequences of simulation.” The public feared that companies like Simulmatics could have a corrupting influence on the democratic process. This, remember, was nearly half a century before Facebook was even founded.

One branch of government, though, was enthusiastic about the company’s predictive capabilities: the Department of Defense. As Lepore reminds readers, close partnerships between technologists and the Pentagon were viewed as necessary, patriotic efforts to stem the tide of Communism during the Cold War.

By 1966, Pool had accepted a contract to oversee a large-scale behavioral-science project for the Department of Defense in Saigon. “Vietnam is the greatest social-science laboratory we have ever had!” he enthused. Like Secretary of Defense Robert McNamara (who commissioned the research, and whom Barry Goldwater once referred to as “an IBM machine with legs”), Pool believed the war would be won in the “hearts and minds” of the Vietnamese, and that winning it required behavioral-science modeling and simulation. As Lepore writes, “Pool argued that while statesmen in times past had consulted philosophy, literature, and history, statesmen of the Cold War were obligated to consult the behavioral sciences.”

Their efforts at computer-enabled counterinsurgency were a disastrous failure, in large part because Simulmatics’ data about the Vietnamese was partial and its simulations were based more on wishful thinking than on realities on the ground. But this didn’t prevent the federal government from coming back to Pool and Simulmatics for help understanding, and predicting, civil unrest back home.

The Kerner Commission, convened by President Lyndon Johnson in 1967 to study the race riots that had broken out across the country, paid Simulmatics’ Urban Studies Division to devise a predictive formula for riots to alert authorities to brewing unrest before it devolved into disorder. Like the predictions for Vietnam, these too proved dubious. By the 1970s, Simulmatics had declared bankruptcy, and “the automated computer simulation of human behavior had fallen into disrepute,” according to Lepore.

Simulmatics “lurks behind the screen of every device” we use, Lepore argues, and she claims its creators, the “long-dead, white-whiskered grandfathers of Mark Zuckerberg and Sergey Brin and Jeff Bezos and Peter Thiel and Marc Andreessen and Elon Musk,” are a “missing link” in the history of technology. But this is an overreach. The dream of sorting and categorizing and analyzing people has been a constant throughout history. Simulmatics’ effort was merely one of many, and hardly revolutionary.

Far more historically significant (and harmful) were 19th-century projects to categorize criminals, or early 20th-century campaigns to predict behavior based on pseudoscientific categories of race and ethnicity during the height of the eugenics movement. All these projects, too, relied on data collection and systematization and on partnerships with local and state governments for their success, but they also garnered significant enthusiasm from large swaths of the public, something Simulmatics never did.

What is true is that Simulmatics’ combination of idealism and hubris resembles that of many contemporary Silicon Valley companies. Like them, it viewed itself as the leading edge of a new Enlightenment, led by the people best suited to solve society’s problems, even as they failed to grasp the complexity and diversity of that society. “It would be easier, more comforting, less unsettling, if the scientists of Simulmatics were villains,” Lepore writes. “But they weren’t. They were midcentury white liberals in an era when white liberals were not expected to understand people who weren’t white or liberal.” Where the Simulmatics Corporation believed that the same formula could understand populations as distinct as American voters and Vietnamese villagers, today’s predictive technologies often make similarly grandiose promises. Fueled by far more sophisticated data gathering and analysis, they still fail to account for the full range and richness of human complexity and variation.

So although Simulmatics did not, as Lepore’s subtitle claims, invent the future, its attempts to categorize and forecast human behavior raised questions about the ethics of data that are still with us today. Lepore describes congressional hearings about data privacy in 1966, when a scientist from RAND outlined for Congress the questions it should be asking: “What is data? To whom does data belong? What obligation does the collector, or holder, or analyst of data hold over the subject of the data? Can data be shared? Can it be sold?”

Lepore laments a previous era’s failure to tackle such questions head-on. “If, then, in the 1960s, things had gone differently, this future might have been saved,” she writes, adding that “plenty of people believed at the time that a people machine was entirely and utterly amoral.” But it is also oddly reassuring to learn that even when our technologies were in their rudimentary stages, people were thinking through the likely consequences of our use of them.

As Lepore writes, Simulmatics was hobbled by the technological limitations of the 1960s: “Data was scarce. Models were weak. Computers were slow. The machine faltered, and the men who built it could not repair it.” But though today’s people machines are “sleeker, faster, and seemingly unstoppable,” they are not fundamentally different from the one Simulmatics built. Both rest on a belief that mathematical laws of human nature are real in the way that the laws of physics are. That belief is false, Lepore notes:

“The study of human behavior is not the same as the study of the spread of viruses and the density of clouds and the movement of the stars. Human behavior does not follow laws like the law of gravity, and to believe that it does is to take an oath to a new religion. Predestination can be a dangerous gospel. The profit-motivated collection and use of data about human behavior, unregulated by any governmental body, has wreaked havoc on human societies, especially on the spheres in which Simulmatics engaged: politics, advertising, journalism, counterinsurgency, and race relations.”

While Simulmatics failed because it was ahead of its time, its modern counterparts are more powerful and more profitable. But remembering its story can help clarify the deficiencies of a society built on reductive beliefs about the power of data, and illuminate a path toward a dignified, vibrant, human future.
