Our weird behavior during the pandemic is messing with AI models

In the week of April 12-18, the top 10 search terms on Amazon.com were: toilet paper, face mask, hand sanitizer, paper towels, Lysol spray, Clorox wipes, mask, Lysol, masks for germ protection, and N95 mask. People weren’t just searching, they were buying too—and in bulk. The majority of people looking for masks ended up buying the new Amazon #1 Best Seller, “Face Mask, Pack of 50”.

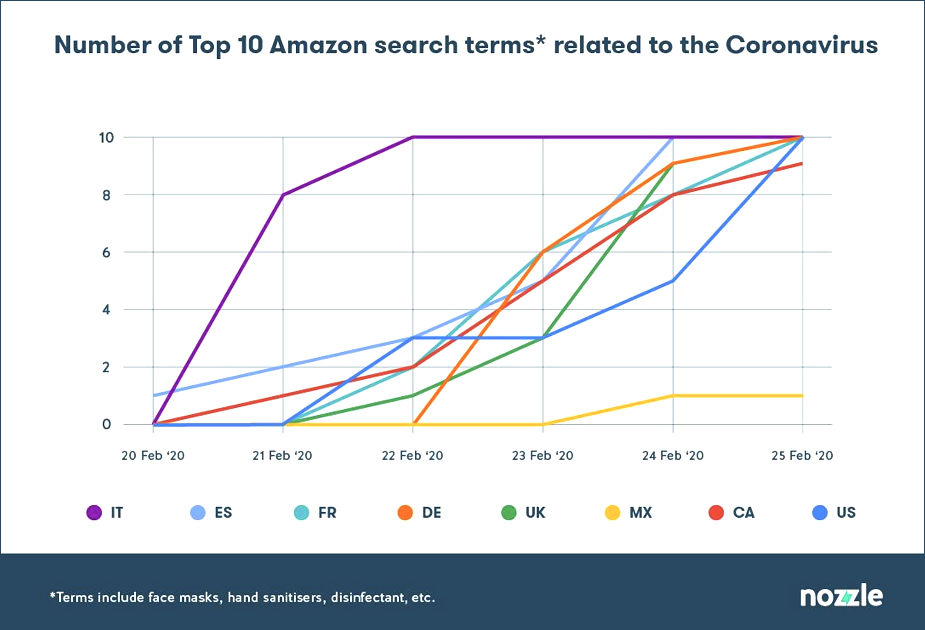

When covid-19 hit, we started buying things we’d never bought before. The shift was sudden: the mainstays of Amazon’s top ten—phone cases, phone chargers, Lego—were knocked off the charts in just a few days. Nozzle, a London-based consultancy specializing in algorithmic advertising for Amazon sellers, captured the rapid change in this simple graph.

It took less than a week at the end of February for the top 10 Amazon search terms in multiple countries to fill up with products related to covid-19. You can track the spread of the pandemic by what we shopped for: the items peaked first in Italy, followed by Spain, France, Canada, and the US. The UK and Germany lag slightly behind. “It’s an incredible transition in the space of five days,” says Rael Cline, Nozzle’s CEO. The ripple effects have been seen across retail supply chains.

But they have also affected artificial intelligence, causing hiccups for the algorithms that run behind the scenes in inventory management, fraud detection, marketing, and more. Machine-learning models trained on normal human behavior are now finding that normal has changed, and some are no longer working as they should.

How bad the situation is depends on whom you talk to. According to Pactera Edge, a global AI consultancy, “automation is in tailspin.” Others say they are keeping a cautious eye on automated systems that are just about holding up, stepping in with a manual correction when needed.

What’s clear is that the pandemic has revealed how intertwined our lives are with AI, exposing a delicate codependence in which changes to our behavior change how AI works, and changes to how AI works change our behavior. This is also a reminder that human involvement in automated systems remains key. “You can never sit and forget when you’re in such extraordinary circumstances,” says Cline.

Machine-learning models are designed to respond to changes. But most are also fragile; they perform badly when input data differs too much from the data they were trained on. It is a mistake to assume you can set up an AI system and walk away, says Rajeev Sharma, global vice president at Pactera Edge: “AI is a living, breathing engine.”
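One common way to notice that input data has drifted away from the training data is to compare the two distributions directly. The sketch below uses the population stability index, a technique chosen here for illustration and not one the article attributes to any company mentioned. The function name, the bin count, and the 0.25 alert threshold (a widely quoted rule of thumb) are all assumptions.

```python
import math
from typing import Sequence


def population_stability_index(
    train: Sequence[float], live: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of one feature; larger values mean more drift."""
    lo, hi = min(train), max(train)
    # Fall back to a width of 1.0 if every training value is identical.
    width = (hi - lo) / bins or 1.0

    def shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Values outside the training range are clamped to the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = shares(train), shares(live)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical data: weekly order sizes before and after a demand spike.
normal_weeks = [float(v) for v in range(100)]
spike_weeks = [v + 80.0 for v in normal_weeks]

DRIFT_THRESHOLD = 0.25  # assumed rule-of-thumb cutoff, not from the article
needs_review = population_stability_index(normal_weeks, spike_weeks) > DRIFT_THRESHOLD
```

A check like this only flags that something has changed. Deciding whether to retrain the model or correct it by hand still falls to a person.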

Sharma has been talking to several companies struggling with wayward AI. One company that supplies sauces and condiments to retailers in India needed help fixing its automated inventory management system when bulk orders broke its predictive algorithms. The sales forecasts that the company relied on to reorder stock no longer matched what was actually selling. “It was never trained on a spike like this, so the system was out of whack,” says Sharma.

Another firm uses an AI to assess the sentiment of news articles and provides daily investment recommendations based on the results. But with the news at the moment being gloomier than usual, the advice is going to be very skewed, says Sharma. And a large streaming firm that has had a sudden influx of content-hungry subscribers is also having problems with its recommendation algorithms, he says. The company uses machine learning to suggest relevant and personalized content to viewers so that they keep coming back. But the sudden change in subscriber data was making its system's recommendations less accurate.

Many of these problems with models arise because more businesses are buying machine-learning systems but lack the in-house know-how needed to maintain them. Retraining a model can require expert human intervention.

The current crisis has also shown that things can get worse than the fairly vanilla worst-case scenarios included in training sets. Sharma thinks more AIs should be trained not just on the ups and downs of the last few years, but also on freak events like the Great Depression of the 1930s, the Black Monday stock market crash in 1987, and the 2007-2008 financial crisis. “A pandemic like this is a perfect trigger to build better machine-learning models,” he says.

Even so, you can’t prepare for everything. In general, if a machine-learning system doesn’t see what it’s expecting to see, then you will have problems, says David Excell, founder of Featurespace, a behavior analytics company that uses AI to detect credit card fraud. Perhaps surprisingly, Featurespace has not seen its AI hit too badly. People are still buying things on Amazon and subscribing to Netflix the way they were before, but they are not buying big-ticket items or spending in new places, which are the behaviors that can raise suspicions. “People’s spending behavior is a contraction of their old habits,” says Excell.

The firm’s engineers only had to step in to adjust for a surge in people buying garden equipment and power tools, says Excell. These are the kinds of mid-price anomalous purchases that fraud-detection algorithms might pick up on. “I think there is certainly more oversight,” says Excell. “The world has changed, and the data has changed.”

Getting the tone right

London-based Phrasee is another AI company that is being hands-on. It uses natural-language processing and machine learning to generate email marketing copy or Facebook ads on behalf of its clients. Making sure that it gets the tone right is part of its job. Its AI works by generating lots of possible phrases and then running them through a neural network that picks the best ones. But because natural-language generation can go very wrong, Phrasee always has humans check what goes into and comes out of its AI.

When covid-19 hit, Phrasee realized that more sensitivity than usual might be required and started filtering out additional language. The company has banned specific phrases, such as “going viral,” and doesn’t allow language that refers to discouraged activities, such as “party wear.” It has even culled emojis that may be read as too happy or too alarming. And it has also dropped terms that may stoke anxiety, such as “OMG,” “be prepared,” “stock up,” and “brace yourself.” “People don’t want marketing to make them feel anxious and fearful—you know, like, this deal is about to run out, pressure pressure pressure,” says Parry Malm, the firm’s CEO.
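The generate, filter, and rank flow described above can be sketched in a few lines. This is an illustration only, not Phrasee's system: the banned phrases are the ones quoted in the article, while the function names and the placeholder scoring function (standing in for the neural network) are assumptions.

```python
import re
from typing import Callable, Iterable, List

# Phrases the article says were filtered out during the pandemic.
BANNED_PHRASES = [
    "going viral", "party wear", "OMG",
    "be prepared", "stock up", "brace yourself",
]

# Whole-phrase, case-insensitive match, so "restock updates" is not caught.
_BANNED_RE = re.compile(
    "|".join(r"\b" + re.escape(p) + r"\b" for p in BANNED_PHRASES),
    re.IGNORECASE,
)


def is_allowed(candidate: str) -> bool:
    """Return True if the candidate contains none of the banned phrases."""
    return _BANNED_RE.search(candidate) is None


def pick_best(
    candidates: Iterable[str], score: Callable[[str], float], top_k: int = 1
) -> List[str]:
    """Drop banned candidates, then keep the top_k highest-scoring ones."""
    allowed = [c for c in candidates if is_allowed(c)]
    return sorted(allowed, key=score, reverse=True)[:top_k]


# Hypothetical candidates; `len` is a stand-in for a learned scoring model.
candidates = [
    "Stock up now before it's gone!",
    "Fresh picks for a cozy night in",
    "OMG this deal is going viral",
]
best = pick_best(candidates, score=len)
```

A phrase list catches only exact wording, which is one reason a human review step on top of it still makes sense.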

As a microcosm for the retail industry as a whole, however, you can’t beat Amazon. It’s also where some of the most subtle behind-the-scenes adjustments are being made. As Amazon and the 2.5 million third-party sellers it supports struggle to meet demand, it is making tiny tweaks to its algorithms to help spread the load.

Most Amazon sellers rely on Amazon to fulfill their orders. Sellers store their items in an Amazon warehouse and Amazon takes care of all the logistics, delivering to people’s homes and handling returns. It then promotes sellers whose orders it fulfills itself. For example, if you search for a specific item, such as a Nintendo Switch, the result that appears at the top, next to the prominent “Add to Basket” button, is more likely to be from a vendor that uses Amazon’s logistics than one that doesn’t.

But in the last few weeks Amazon has flipped that around, says Cline. To ease demand on its own warehouses, its algorithms now appear more likely to promote sellers that handle their own deliveries.

Volatile markets

This kind of adjustment would be hard to do without manual intervention. “The situation is so volatile,” says Cline. “You're trying to optimize for toilet paper last week, and this week everyone wants to buy puzzles or gym equipment.”

The tweaks Amazon makes to its algorithms then have knock-on effects on the algorithms that sellers use to decide what to spend on online advertising. Every time a web page with ads loads, a super-fast auction takes place where automated bidders decide between themselves who gets to fill each ad box. The amount these algorithms decide to spend for an ad depends on a myriad of variables, but ultimately the decision is based on an estimate of how much you, the eyeballs on the page, are worth to them. There are lots of ways to predict customer behavior, including not only data about your past purchases but also the pigeonhole that ad companies have placed you in on the basis of your online activity.

But now one of the best predictors of whether someone who clicks an ad will buy your product is how long you say it will take to deliver it, says Cline. So Nozzle is talking to customers about adjusting their algorithms to take this into account. For example, if you think you can’t deliver faster than a competitor, it might not be worth trying to outbid them in an ad auction. On the other hand, if you know your competitor has run out of stock, then you can go in cheap, gambling that they won’t bid.
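The two examples Cline gives amount to simple rules layered on top of an existing bid. The sketch below is a hypothetical illustration, not Nozzle's algorithm: the `Competitor` record, the function name, and both scaling factors are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Competitor:
    delivery_days: float  # delivery time the competitor advertises
    in_stock: bool        # whether the competitor can currently sell


def adjust_bid(
    base_bid: float,
    our_delivery_days: float,
    competitor: Competitor,
    low_bid_factor: float = 0.5,  # assumed discount when the rival is out of stock
    slower_factor: float = 0.6,   # assumed discount when we cannot deliver faster
) -> float:
    """Scale a bid using the two delivery-related rules described in the text."""
    if not competitor.in_stock:
        # The rival is unlikely to bid, so gamble on a cheap bid.
        return base_bid * low_bid_factor
    if our_delivery_days >= competitor.delivery_days:
        # We cannot deliver faster, so outbidding may not be worth it.
        return base_bid * slower_factor
    return base_bid


slower_bid = adjust_bid(1.0, our_delivery_days=5, competitor=Competitor(2, True))
cheap_bid = adjust_bid(1.0, our_delivery_days=2, competitor=Competitor(5, False))
full_bid = adjust_bid(1.0, our_delivery_days=1, competitor=Competitor(3, True))
```

In practice the factors would need tuning against real conversion data, and someone would have to revisit them as stock levels and delivery times shift.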

All of this is possible only with a dedicated team keeping tabs on things, says Cline. He thinks the current situation is an eye-opener for a lot of people who assumed all automated systems could run themselves. “You need a data science team who can connect what’s going on in the world to what’s going on in the algorithms,” he says. “An algorithm would never pick some of this stuff up.”

With everything connected, the impact of a pandemic has been felt far and wide, touching mechanisms that in more typical times remain hidden. If we are looking for a silver lining, then now is a time to take stock of those newly exposed systems and ask how they might be designed better, made more resilient. If machines are to be trusted, we need to watch over them.
