Kids are surrounded by AI. They should know how it works.

A student recaps how he would describe artificial intelligence to a friend: “It’s kind of like a baby or a human brain because it has to learn,” he says in a video, “and it stores [...] and uses that information to figure things out.”

Most adults would struggle to put together such a cogent definition of a complex subject. This student was just 10 years old.

The student was one of 28 middle schoolers, ages 9 to 14, who participated in a pilot program this summer designed to teach them about AI. The curriculum, developed by Blakeley Payne, a graduate research assistant at the MIT Media Lab, is part of a broader initiative to make these concepts an integral part of middle school classrooms. She has since open-sourced the curriculum, which includes several interactive activities that help students discover how algorithms are developed and how those processes go on to affect people’s lives.

Children today are growing up in a world surrounded by AI: algorithms determine what information they see, help select the videos they watch, and shape how they learn to talk. The hope is that by better understanding how algorithms are created and how they influence society, children could become more critical consumers of such technology. It could even motivate them to help shape its future.

“It’s essential for them to understand how these technologies work so they can best navigate and consume them,” Payne says. “We want them to feel empowered.”

Why kids?

There are several reasons to teach children about AI. First, there’s the economic argument: studies have shown that exposing children to technical concepts stimulates their problem-solving and critical-thinking skills. It can prime them to learn computational skills more quickly later on in life.

Second, there’s the societal argument. The middle school years, in particular, are critical to a child’s identity formation and development. Teaching girls about technology at this age may make them more likely to study it later or have a career in technology, says Jennifer Jipson, a professor of psychology and child development at California Polytechnic State University. This could help diversify the AI—and broader tech—industry. Learning to grapple with the ethics and societal impacts of technology early on can also help children grow into more conscious creators and developers, as well as more informed citizens.

Finally, there’s the vulnerability argument. Young people are more malleable and impressionable, so the ethical risks that come with tracking people’s behavior and using it to design more addictive experiences are heightened for them, says Rose Luckin, a professor of learner-centered design at University College London. Making children passive consumers could harm their agency, privacy, and long-term development.

“Ten to 12 years old is the average age when a child receives his or her first cell phone, or his or her first social-media account,” Payne says. “We want to have them really understand that technology has opinions and has goals that might not necessarily align with their own before they become even bigger consumers of technology.”

Algorithms as opinion

Payne’s curriculum includes a series of activities that prompt students to think about the subjectivity of algorithms. They begin by learning about algorithms as recipes, with inputs, a set of instructions, and outputs. The kids are then asked to “build” an algorithm that outputs the best peanut butter and jelly sandwich, which means writing down its instructions.

Very quickly, the kids in the summer pilot started to grasp the underlying lesson. “A student pulled me aside and asked, ‘Is this supposed to be opinion or fact?’” Payne says. Through their own discovery process, the students realized that they had unintentionally built their own preferences into their algorithms.
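
The recipe framing is easy to make concrete. Below is a minimal Python sketch, not taken from Payne's curriculum (where students write their instructions by hand), of a hypothetical sandwich algorithm: the input is a list of candidate sandwiches, the instructions add up how much a builder likes each ingredient, and the output is the top-scoring sandwich. The builders Alex and Sam and their ingredient weights are invented for illustration; the weights are exactly where opinion enters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sandwich:
    bread: str
    peanut_butter: str
    jelly: str


# Hypothetical preference tables. Each builder's weights encode personal
# taste: an opinion, not a fact.
ALEX_PREFS = {"bread": {"white": 2, "wheat": 1},
              "peanut_butter": {"crunchy": 2, "smooth": 1},
              "jelly": {"grape": 2, "strawberry": 1}}
SAM_PREFS = {"bread": {"white": 1, "wheat": 2},
             "peanut_butter": {"crunchy": 1, "smooth": 2},
             "jelly": {"grape": 1, "strawberry": 2}}


def score(sandwich, prefs):
    """Instructions: add up how much this builder likes each ingredient."""
    return (prefs["bread"].get(sandwich.bread, 0)
            + prefs["peanut_butter"].get(sandwich.peanut_butter, 0)
            + prefs["jelly"].get(sandwich.jelly, 0))


def best_sandwich(options, prefs):
    """Input: candidate sandwiches. Output: the highest-scoring one."""
    return max(options, key=lambda s: score(s, prefs))


options = [Sandwich("white", "crunchy", "grape"),
           Sandwich("wheat", "smooth", "strawberry")]

print(best_sandwich(options, ALEX_PREFS))
# Sandwich(bread='white', peanut_butter='crunchy', jelly='grape')
print(best_sandwich(options, SAM_PREFS))
# Sandwich(bread='wheat', peanut_butter='smooth', jelly='strawberry')
```

Both runs use identical instructions; only the preference table changes, and so does the "best" sandwich. That is the student's "opinion or fact?" question in miniature: the steps are mechanical, but the values fed into them are someone's taste.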

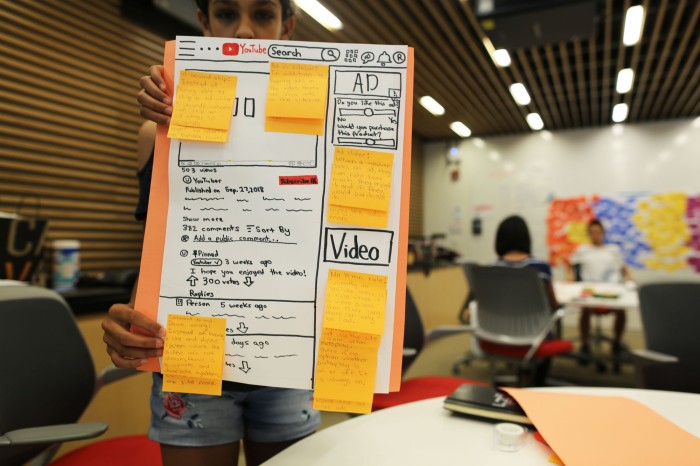

The next activity builds on this concept: students draw what Payne calls an “ethical matrix” to think through how different stakeholders and their values might also affect the design of a sandwich algorithm. During the pilot, Payne also tied the lessons to current events. Together, the students read an abridged Wall Street Journal article on how YouTube executives were considering whether to create a separate kids-only version of the app with a modified recommendation algorithm. The students were able to see how investor demands, parental pressures, or children’s preferences could send the company down completely different algorithm redesign paths.

Another set of activities introduces students to the concept of AI bias. They use Google’s Teachable Machine tool, an interactive code-free platform for training basic machine-learning models, to build a cat-dog classifier—but, without their knowledge, are given a biased data set. Through a process of experimentation and discussion, they learn how the data set leads the classifier to be more accurate for cats. They then have an opportunity to correct the problem.
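
Teachable Machine trains on photos without any code, but the effect the students discover can be reproduced with a toy model. The Python sketch below swaps in a deliberately simple technique, a k-nearest-neighbors classifier (k = 3) over one made-up number per photo, standing in for something like an "ear pointiness" score. This is an assumption for illustration, not how Teachable Machine works internally, and all data values are invented. The biased training set has seven cats but only two dogs, so where the two animals overlap, cat examples outvote dog examples.

```python
from collections import Counter

# Hypothetical data: each photo is reduced to one made-up feature value
# (think "ear pointiness"), with cats mostly low and dogs mostly high.
# Cat values are odd and dog values even, so an integer test point is never
# exactly as close to a cat as to a dog (no cross-class distance ties).
CATS = [1, 3, 5, 7, 9, 11, 13]
BIASED_DOGS = [16, 20]                 # too few dog examples
FIXED_DOGS = [12, 16, 18, 20, 22, 24]  # after adding more dog photos

TEST = [(2, "cat"), (4, "cat"), (6, "cat"), (10, "cat"),
        (14, "dog"), (15, "dog"), (17, "dog"), (19, "dog")]


def make_training_set(cats, dogs):
    """Attach a label to every feature value."""
    return [(x, "cat") for x in cats] + [(x, "dog") for x in dogs]


def knn_predict(train, x, k=3):
    """Label x by majority vote among its k nearest training examples."""
    nearest = sorted(train, key=lambda item: abs(item[0] - x))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def per_class_accuracy(train, test, k=3):
    """Fraction of test examples classified correctly, for each label."""
    correct, total = Counter(), Counter()
    for x, label in test:
        total[label] += 1
        if knn_predict(train, x, k) == label:
            correct[label] += 1
    return {label: correct[label] / total[label] for label in total}


print(per_class_accuracy(make_training_set(CATS, BIASED_DOGS), TEST))
# {'cat': 1.0, 'dog': 0.5}
print(per_class_accuracy(make_training_set(CATS, FIXED_DOGS), TEST))
# {'cat': 1.0, 'dog': 1.0}
```

In the biased run, the classifier gets every test cat right but only half the test dogs; the dogs it misses (values 14 and 15) sit near the overlap, where the lopsided data leaves more cat neighbors nearby. Adding more dog examples is one plausible fix, and in this toy setup it raises dog accuracy without hurting cat accuracy.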

Payne once again connected the exercise to a real-world example during the pilot by showing the students footage of Joy Buolamwini, a fellow Media Lab researcher, testifying to Congress about biases in face recognition. “They were able to see how the kind of thought process they had gone through could change the way these systems are built in the world,” Payne says.

The future of education

Payne plans to keep tweaking the program, taking public feedback into account, and is exploring various avenues for expanding its reach. Her goal is to integrate some version of it into public education.

Beyond that, she hopes it will serve as an example for how to teach children about technology, society, and ethics. Both Luckin and Jipson agree that it offers a promising template for how education could evolve to meet the demands of an increasingly technology-driven world.

“AI as we see it in society right now is not a great equalizer,” says Payne. “Education is, or at least, we hope it to be. So this is a foundational step to move toward a fairer and more just society.”
