The key to more powerful quantum computers could be to build them like Legos

Visit any startup or university lab where quantum computers are being built, and it’s like entering a time warp to the 1960s—the heyday of mainframe computing, when small armies of technicians ministered to machines that could fill entire rooms.

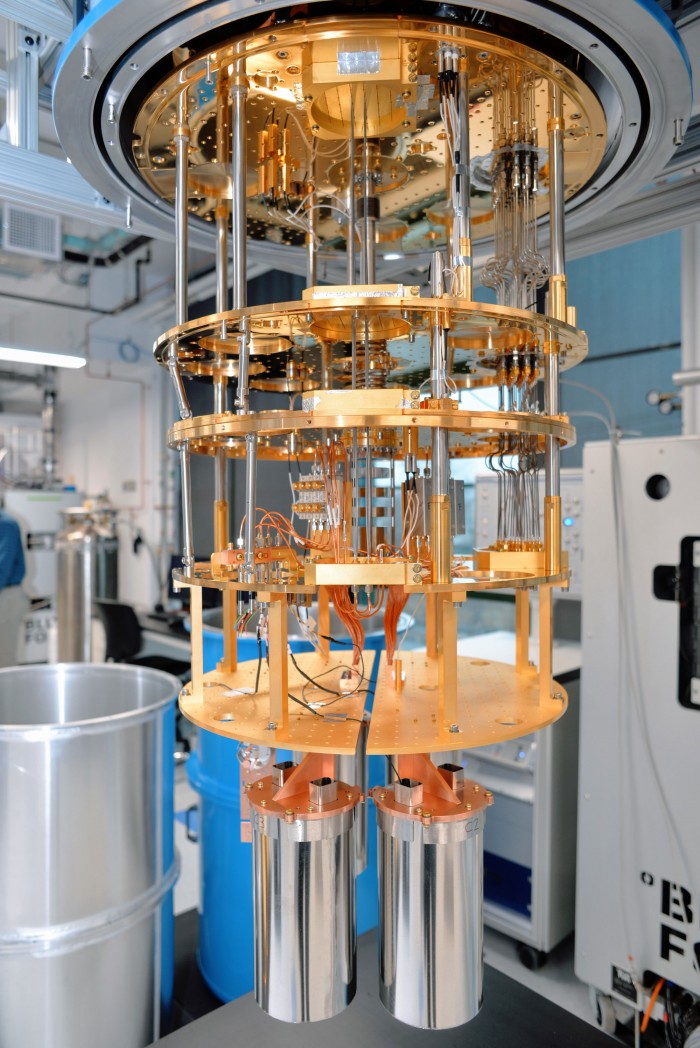

All manner of equipment, from super-accurate lasers to supercooled refrigerators, is needed to harness the exotic forces of quantum mechanics for the task of processing data. Cables connecting various bits of gear form multicolored spaghetti that spills over floors and runs across ceilings. Physicists and engineers swarm around banks of screens, constantly monitoring and tweaking the performance of the computers.

Mainframes ushered in the information revolution, and the hope is that quantum computers will prove game-changers too. Their immense processing power promises to outstrip that of even the most capable conventional supercomputers, potentially delivering advances in everything from drug discovery to materials science and artificial intelligence.

The big challenge facing the nascent industry is to create machines that can be scaled up both reliably and relatively cheaply. Generating and managing the quantum bits, or qubits, that carry information in the computers is hard. Even the tiniest vibrations or changes in temperature—phenomena known as “noise” in quantum jargon—can cause qubits to lose their fragile quantum state. And when that happens, errors creep into calculations.

The most common response has been to create quantum computers with as many qubits as possible on a single chip. If some qubits misfire, others holding copies of the information can be called upon as backups by algorithms developed to detect and minimize errors. The strategy, which has been championed by large companies such as IBM and Google, as well as by high-profile startups like Rigetti Computing, has spawned complex machines evocative of those room-sized mainframes.

The problem is, the error rates are extreme. Today’s largest chips have fewer than a hundred qubits, but thousands or even tens of thousands may be needed to produce the same result as a single error-free qubit. Each qubit needs its own control wiring, so the more that are added, the more complex a system becomes to manage. More gear will also be needed to monitor and manage rapidly expanding qubit counts. That could drive up the complexity and cost of the computers dramatically, limiting their appeal.
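
To see why redundancy helps, and why it gets expensive, here is a rough sketch based on a classical repetition code with majority voting. It is an analogy only: quantum states cannot simply be copied, and real quantum error correction uses more elaborate schemes. The function name `logical_error_rate` and the 1% error figure are illustrative assumptions, not numbers from IBM, Google, or Rigetti.

```python
from math import comb


def logical_error_rate(p: float, n: int) -> float:
    """Chance that a majority of n independent copies are wrong.

    p is the (assumed) error probability of each copy; n must be odd
    so that a majority always exists.
    """
    if n % 2 == 0:
        raise ValueError("use an odd number of copies")
    # Sum the binomial probabilities of more than half the copies failing.
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


if __name__ == "__main__":
    # Hypothetical 1% error per copy: more copies, fewer wrong majorities.
    for copies in (1, 3, 11, 51):
        print(copies, logical_error_rate(0.01, copies))
```

The point of the sketch is the trade-off: every extra copy pushes the error rate down, but each copy is another piece of hardware to wire up and control.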

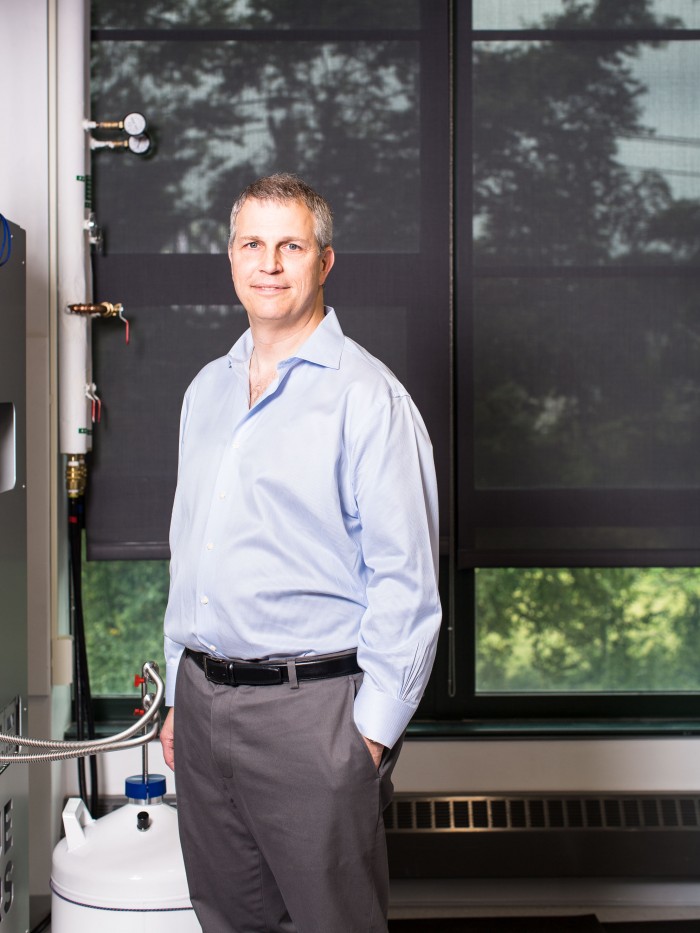

Robert Schoelkopf, a professor at Yale, thinks there’s a better way forward. Instead of trying to cram ever more qubits onto a single chip, Quantum Circuits, a startup he cofounded in 2017, is developing what amount to mini quantum machines. These can be networked together via specialized interfaces, a bit like very high-tech Lego bricks. Schoelkopf says this approach helps produce lower error rates, so fewer qubits—and therefore less supporting hardware—will be needed to create powerful quantum machines.

Skeptics point out that unlike rivals such as IBM, Quantum Circuits has yet to publicly unveil a working computer. But if it can deliver one that lives up to Schoelkopf’s claims, it could help bring quantum computing out of labs and into the commercial world much faster.

The drive to create longer-lasting qubits

The idea of bolting together smaller quantum building blocks to create bigger computers has been around for years, but it’s never quite caught on. “There’s not been a great, fault-tolerant machine that’s been built yet using the modular approach,” explains Jerry Chow, who manages the experimental quantum computing team at IBM Research. Still, adds Chow, if anyone can pull it off it will be Schoelkopf and his colleagues.

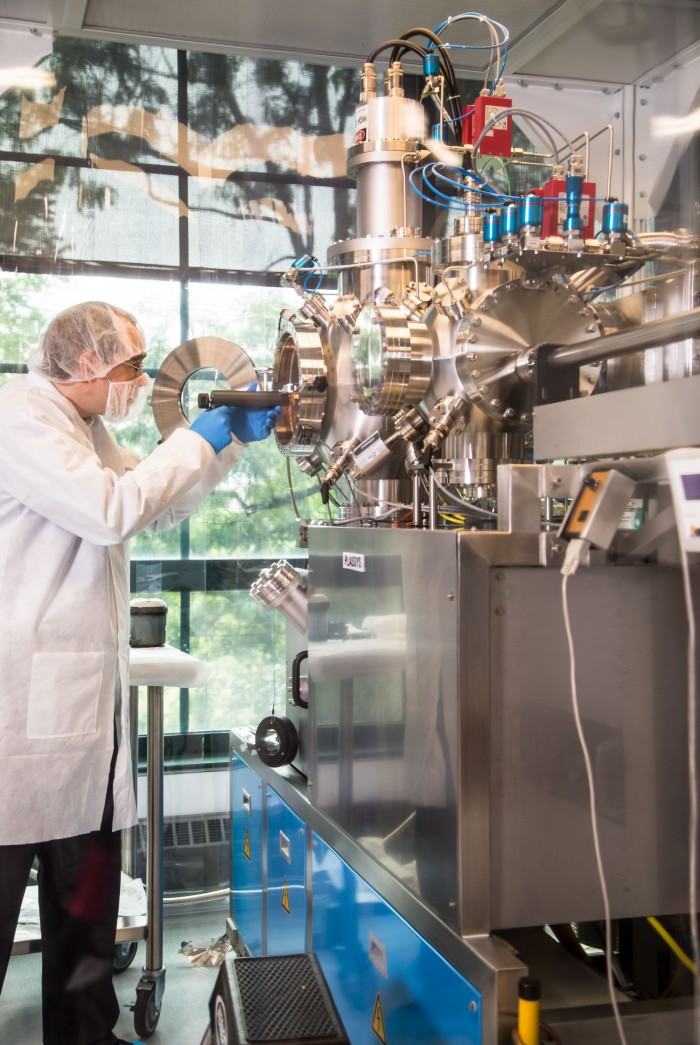

After training as an engineer and a physicist, including stints at NASA and Caltech, Schoelkopf joined Yale’s faculty in 1998 and began to work on quantum computing. He and his colleagues pioneered the use of superconducting circuits on a chip to create qubits. By pumping electrical current through specialized microchips held inside fridges that are colder than deep space, they are able to coax particles into the quantum states that are key to the computers’ immense power.

Unlike bits in ordinary computers, which are streams of electrical or optical pulses representing either a 1 or a 0, qubits are quantum objects, typically subatomic particles such as photons or electrons, or superconducting circuits like the ones Schoelkopf’s team builds, that can be in a kind of combination of both 1 and 0, a phenomenon known as “superposition.” Qubits can also become entangled with one another, which means that their states are linked: measuring one instantly reveals something about the state of the others, even when there’s no physical connection between them.

There’s more background on this in our quantum computing explainer. The main thing to know, though, is that this allows qubits to act as if they are performing many calculations simultaneously that an ordinary computer would have to perform sequentially. As a result, adding qubits to a quantum machine boosts its processing capacity exponentially.
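
One way to make "exponentially" concrete: describing the state of n qubits on an ordinary computer takes 2^n complex numbers, so each added qubit doubles the bookkeeping. The sketch below just does that arithmetic. The function names are made up for illustration, and the 16 bytes per amplitude is an assumption (two 64-bit floats).

```python
def amplitude_count(n_qubits: int) -> int:
    """Number of complex amplitudes needed to describe n_qubits qubits."""
    return 2**n_qubits


def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory to hold that description classically.

    16 bytes per amplitude is an assumption, not a fixed rule.
    """
    return amplitude_count(n_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (10, 30, 50):
        gib = statevector_bytes(n) / 2**30
        print(f"{n} qubits: {amplitude_count(n):,} amplitudes, about {gib:.3g} GiB")
```

This counts what a classical simulation would have to store; it is not a measure of how fast a real quantum machine solves any particular problem.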

Schoelkopf has also won plaudits for his work on the problem of noise. The coherence times of qubits, that is, how long they can run calculations before noise disrupts their delicate quantum state, have been improving by a factor of 10 roughly every three years. (Researchers have dubbed this trend “Schoelkopf’s Law” in a nod to classical computing’s “Moore’s Law,” which holds that the number of transistors on a silicon chip doubles roughly every two years.) Brendan Dickinson of Canaan Partners, one of Quantum Circuits’ investors, says Schoelkopf’s impressive track record in superconducting qubits is one of the main reasons the firm decided to back the business, which has raised $18 million so far.
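
For a sense of how the two "laws" compare, the short sketch below turns each rule of thumb into a growth factor over a chosen number of years. It assumes both trends are smooth exponentials, which is a simplification, and the function names are illustrative.

```python
from math import log


def growth_factor(factor: float, period_years: float, years: float) -> float:
    """Total improvement after `years`, given `factor`-fold growth every `period_years`."""
    return factor ** (years / period_years)


def doubling_time_years(factor: float, period_years: float) -> float:
    """How long the same trend takes to double."""
    return period_years * log(2) / log(factor)


if __name__ == "__main__":
    span = 6  # years, chosen only for illustration
    print("Schoelkopf's Law:", growth_factor(10, 3, span))
    print("Moore's Law:     ", growth_factor(2, 2, span))
    print("Coherence doubling time (years):", doubling_time_years(10, 3))
```

Under these assumptions, a tenfold gain every three years works out to a doubling in a little under a year, compared with two years for transistor counts.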

Ironically, some of the students mentored by Schoelkopf and his cofounders from Yale, Michel Devoret and Luigi Frunzio, are now at companies like IBM and Rigetti that compete with their startup. Schoelkopf is clearly proud of the quantum diaspora that’s come out of the Yale lab. He told me that a few years ago he had looked at all the organizations around the world working on superconducting qubits and found that more than half of them were run by people who had spent time there. But he also believes a kind of groupthink has set in.

The advantages of modular machines

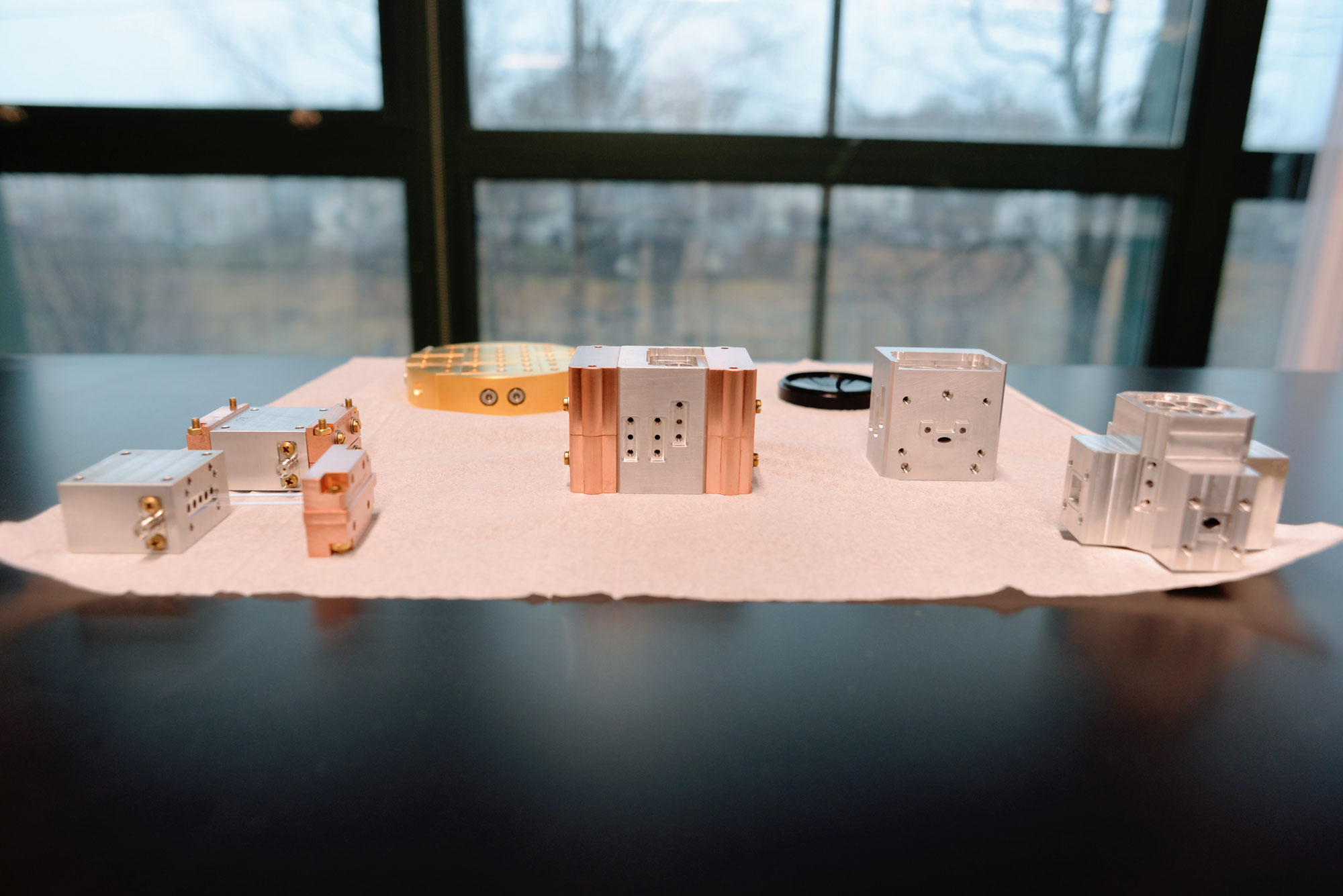

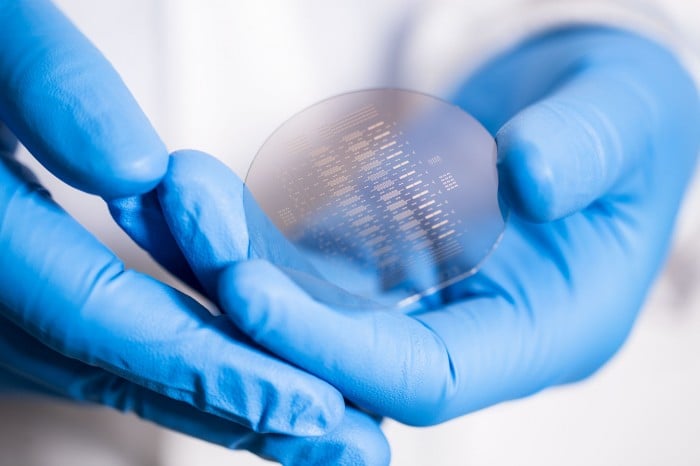

Most researchers working on superconducting machines focus on creating as many qubits as possible on a single chip. Quantum Circuits’ approach is very different from that standard. The core of its system is a small aluminum module containing superconducting circuits that are made on silicon or sapphire chips. Each module contains what amounts to five to 10 qubits.

To network these modules together into larger computers, the company uses what sounds like something out of Star Trek—quantum teleportation. It’s a method that’s been developed for shipping data across things like telecom networks. The basic idea involves entangling a microwave photon in one module with a photon in another one and then using the link between them as a bridge for transferring data. (We’ve got a quantum teleportation explainer too.) Quantum Circuits has used this approach to teleport a quantum version of a logic gate between its modules.
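
For readers who want to see the mechanics, here is a small pure-Python sketch of the textbook teleportation protocol for a single qubit state. It is not Quantum Circuits' implementation, and it teleports a state rather than a logic gate. The qubit labels and the function name `teleport_branches` are illustrative assumptions. Qubit 0 holds the message, qubits 1 and 2 are the entangled pair, with qubit 2 playing the part of the second module.

```python
from math import sqrt


def teleport_branches(a: complex, b: complex) -> dict:
    """Teleport a|0> + b|1> and return the receiver's corrected state
    for each of the four possible measurement outcomes (m0, m1)."""
    s = 1 / sqrt(2)
    # Start: message on qubit 0, Bell pair (|00> + |11>)/sqrt(2) on qubits 1 and 2.
    state = {}
    for q0, amp in ((0, a), (1, b)):
        for q in (0, 1):
            state[(q0, q, q)] = amp * s
    # CNOT with qubit 0 as control and qubit 1 as target.
    state = {(q0, q1 ^ q0, q2): amp for (q0, q1, q2), amp in state.items()}
    # Hadamard on qubit 0.
    after_h = {}
    for (q0, q1, q2), amp in state.items():
        for new_q0 in (0, 1):
            sign = -1 if (q0 == 1 and new_q0 == 1) else 1
            key = (new_q0, q1, q2)
            after_h[key] = after_h.get(key, 0) + sign * s * amp
    # Measure qubits 0 and 1; walk through every outcome deterministically.
    results = {}
    for m0 in (0, 1):
        for m1 in (0, 1):
            c = [after_h.get((m0, m1, q2), 0) for q2 in (0, 1)]
            norm = sqrt(sum(abs(x) ** 2 for x in c))
            c = [x / norm for x in c]
            if m1:  # receiver applies X when the second measured bit is 1
                c = [c[1], c[0]]
            if m0:  # receiver applies Z when the first measured bit is 1
                c = [c[0], -c[1]]
            results[(m0, m1)] = (c[0], c[1])
    return results


if __name__ == "__main__":
    for outcome, received in teleport_branches(0.6, 0.8j).items():
        print(outcome, received)
```

Whatever the two measured bits turn out to be, the receiver ends up with the original state once the matching correction is applied. Those two bits still have to travel over an ordinary connection, which is why teleportation does not send information faster than light.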

Schoelkopf says there are several reasons that networking modules together is better than cramming as many qubits as possible onto a single chip. The smaller scale of each unit makes it easier to control the system and to apply error correction techniques. Moreover, if some qubits go haywire in an individual module, the unit can be removed or isolated without affecting others networked with it; if they’re all on a single chip, the entire thing may have to be scrapped.

Looking ahead, Quantum Circuits’ modular machines will still need some of the same gear as rival ones, including the supercooling refrigerators and monitoring gear. But as they scale, they shouldn’t require anywhere near the same kind of control wiring and other paraphernalia needed to master individual qubits. So while rival devices could look ever more like those massive early mainframes, the startup’s machines should remain akin to the slimmed-down ones that appeared as conventional computing advanced into the 1970s and beyond.

Listening to Schoelkopf talk through the technology, an image crept into my head: my kids playing with plastic Lego bricks when they were young, bolting them together to build castles and forts.

When I suggested the comparison, Schoelkopf was initially a little wary but then became quite enthusiastic. “In general, every complex device I know,” he said, “is based on having the equivalent of Lego blocks, and you define the interfaces and how they fit together … [Lego bricks] are really cheap. They can be mass-produced. And they always plug together the right way.”

Schoelkopf’s quantum modules have another key advantage. Each contains a three-dimensional cavity that traps a number of microwave photons. These form what are known as “qudits,” and they’re like qubits, except they store more information. While a qubit represents a combination of 1 and 0, a qudit can exist in more than two states—say, 0, 1, and 2 at the same time. Quantum computers with qudits can crunch through even more information simultaneously.
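
A quick way to see what "store more information" means: a system of n units with d levels each has d^n basis states, so a qudit with d levels is worth log2(d) qubits by that measure. The sketch below does the arithmetic. The function names and the example level counts are illustrative, not figures from Quantum Circuits.

```python
from math import log2


def basis_states(levels: int, count: int) -> int:
    """Number of basis states for `count` units with `levels` levels each."""
    return levels**count


def equivalent_qubits(levels: int, count: int) -> float:
    """How many qubits span the same number of basis states."""
    return count * log2(levels)


if __name__ == "__main__":
    # Hypothetical comparison: ten qubits versus ten three-level qudits.
    print("10 qubits:", basis_states(2, 10))
    print("10 qutrits:", basis_states(3, 10))
    print("10 qutrits in qubit terms:", equivalent_qubits(3, 10))
```

The gain per unit is modest, but it compounds: the gap between d^n and 2^n widens quickly as more units are added.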

Scientists have been experimenting with qudits for some time, but they are tricky to generate and control. Schoelkopf says Quantum Circuits has found ways to create high-quality ones consistently and to reduce errors significantly. (The company claims it’s achieved coherence times using its cavities that are 10 to 100 times longer than those of superconducting qubits, which makes it easier to correct errors.) Some qubits are still needed to perform operations on the qudits, and to extract information from them, but his approach requires fewer of these qubits. That, in turn, means less hardware is needed overall.

Quantum computing is a wide-open field

Quantum Circuits’ approach sounds compelling, but Schoelkopf refuses to say exactly when the company will unveil a fully functioning computer. Nor will he disclose how many qubits and qudits his team has managed to get working together in total.

The longer it takes, the more his startup risks being overshadowed by its rivals. IBM and Rigetti are already giving companies and researchers access to their quantum computers via the computing cloud, and Google is rumored to be close to being the first to achieve “quantum supremacy”—or the point at which a quantum computer can perform a task beyond the reach of even the most powerful conventional supercomputer.

Schoelkopf says organizations that want to try out algorithms on Quantum Circuits’ system will be able to do so “very soon,” and that at some point it will connect machines to the cloud as IBM and Rigetti have done. The startup isn’t just building computers; it’s also working on software that will help users get the most out of the underlying hardware.

Besides, it’s early days. The quantum algorithms being run on cloud services like IBM’s today are still pretty basic, Schoelkopf notes. The field is wide open for quantum computers and associated software that can really make a difference in a broad range of areas, from turbocharging artificial-intelligence applications to modeling molecules for chemists.

Lots of questions remain. Will Quantum Circuits be able to keep producing robust qubits and qudits as it builds much bigger machines? Can it get its quantum teleportation method to work reliably as it connects more modules together? And will its systems, when they are rolled out for sale, be more cost-effective to operate than those of rivals? Significant physics and engineering challenges still lie ahead. But if Schoelkopf and his colleagues can overcome them, they could prove that the key to getting very big in quantum computing is to think small.
