Yes, FaceApp could use your face—but not for face recognition

There’s a lot that the viral photo-editing app could do with a giant database of faces.

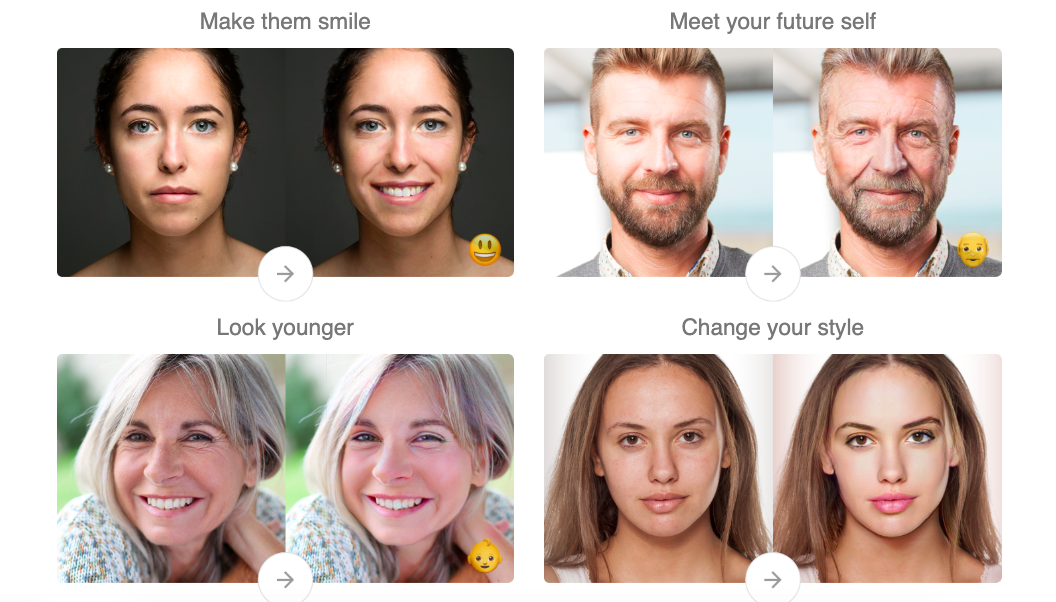

The context: FaceApp, the photo-editing app that uses AI to touch up your face, has come under scrutiny since going viral. It’s been around since 2017, but a newly added feature that allows users to see what they might look like when they age has catapulted it back into popularity. Now the fact that it’s owned by the Russia-based company Wireless Lab has people spooked.

The concern: According to some reports, the app has amassed more than 150 million photos of people’s faces since launch, and its terms of service stipulate that the company can use the photos however it wants, in perpetuity. The company has already said in a statement that it deletes most images from its servers within 48 hours of upload and doesn’t share data with third parties. Despite this, some Democratic members of the US Congress are now calling for an FBI investigation into the company. Users are also worried that their faces could be used to track them in the future through face recognition.

The reality: Okay, so let’s imagine FaceApp did decide to use the photos it has gathered for purposes beyond the users’ reason for uploading them. What could it actually do? It’s highly unlikely the company would use them to train algorithms for identifying your face. First, the majority of users don’t give FaceApp their name or other identifying information, which would be required for recognition. Second, although it is technically possible for a system to learn to recognize someone from a single photo, the accuracy would be poor. There would also be much easier ways to obtain photos of a specific target individual, such as through their social-media profiles and Flickr uploads.

Down the rabbit hole: There are other ways to use a giant database of faces, however. Here are just a few:

- Face modification: Perhaps the most obvious use would be for FaceApp to improve its own algorithms. The app’s ability to tweak and alter an image of a face is based on a neural network already trained on tons of face photos. It would make sense for the company to continue feeding it more images to fine-tune its capabilities. Such a database could also be used to build more face modification features that the app doesn’t already have.

- Face analysis: While face recognition identifies specific individuals, face analysis simply involves predicting features about them, such as their gender or age. Many commercial face analysis systems are trained on open-source databases that look a lot like the one FaceApp could have retained.

- Face detection: Similarly, face detection is about identifying whether there’s a face in a photo, and where it is. Again, these systems could be built or improved with more face photos.

- Deepfake generation: And finally, such a database could be used to create faces of people who don’t exist, which would come with a whole host of issues. Fake face generation has allegedly already been used by spies to spoof identities, for example.

Does it matter? While these use cases raise major privacy concerns, it’s worth noting that there are many other open-source databases of face photos and people’s videos that may or may not already include your likeness.

Such databases, made of public media scraped from the internet, have long been a basis of AI research. Even if FaceApp didn’t have its own stockpile of images, it would be easy to find others from the plethora of options out there. Perhaps that’s the greater point to the story: FaceApp merely highlights how much we’ve already lost control of our digital data.
