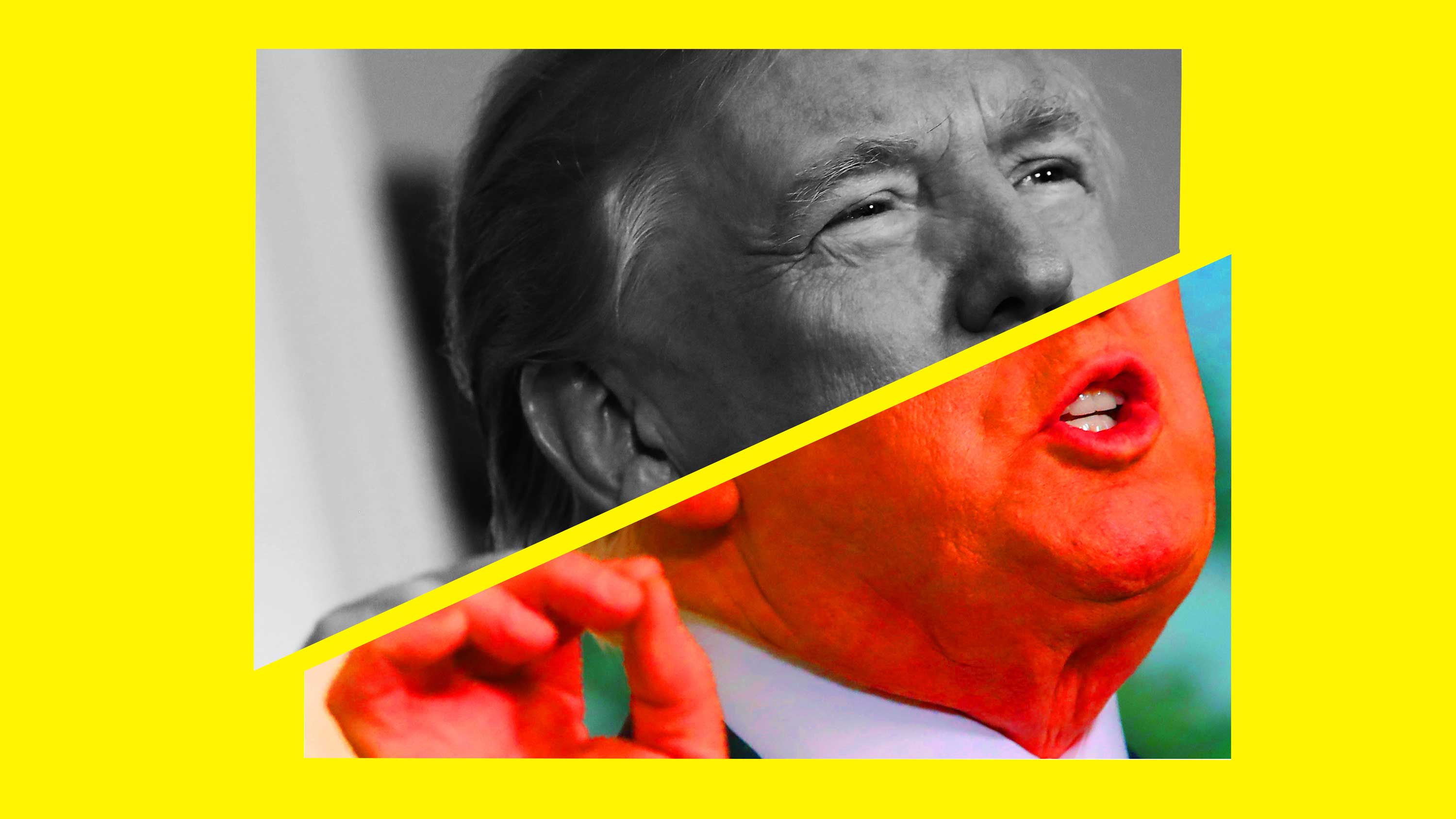

Deepfakes have got Congress panicking. This is what it needs to do.

The recent rapid spread of a doctored video of Nancy Pelosi has frightened lawmakers in Washington. The video—edited to make her appear drunk—is just one of a number of examples in the last year of manipulated media making it into mainstream public discourse. In January, a different doctored video targeting President Donald Trump ended up airing on Seattle television. This week, an AI-generated video of Mark Zuckerberg was uploaded to Instagram. (Facebook has promised not to take it down.)

With the 2020 US election looming, the US Congress has grown increasingly concerned that the quick and easy ability to forge media could make election campaigns vulnerable to targeting by foreign operatives and compromise voter trust.

In response, the House of Representatives will hold its first dedicated hearing tomorrow on deepfakes, the class of synthetic media generated by AI. In parallel, Representative Yvette Clarke will introduce a bill on the same subject. A new research report released by a nonprofit this week also highlights a strategy for coping when deepfakes and other doctored media proliferate.

It’s not the first time US policymakers have sought to take action on this issue. In December of 2018, Senator Ben Sasse introduced a different bill attempting to prohibit malicious deepfakes. Senator Marco Rubio has also repeatedly sounded the alarm on the technology over the years. But it is the first time we have seen such a concerted effort from US lawmakers.

The deepfake bill

The draft bill, a product of several months of discussion with computer scientists, disinformation experts, and human rights advocates, will include three provisions. The first would require companies and researchers who create tools that can be used to make deepfakes to automatically add watermarks to forged creations.

The second would require social-media companies to build better manipulation detection directly into their platforms. Finally, the third provision would create sanctions, like fines or even jail time, to punish offenders for creating malicious deepfakes that harm individuals or threaten national security. In particular, it would attempt to introduce a new mechanism for legal recourse if people’s reputations are damaged by synthetic media.

“This issue doesn’t just affect politicians,” says Mutale Nkonde, a fellow at the Data & Society Research Institute and an advisor on the bill. “Deepfake videos are much more likely to be deployed against women, minorities, people from the LGBT community, poor people. And those people aren’t going to have the resources to fight back against reputational risks.”

The goal of introducing the bill is not to pass it through Congress as is, says Nkonde. Instead it is meant to spark a more nuanced conversation about how to deal with the issue in law by proposing specific recommendations that can be critiqued and refined. “What we’re really looking to do is enter into the congressional record the idea of audiovisual manipulation being unacceptable,” she says.

The current state of deepfakes

By coincidence, the human rights nonprofit Witness released a new research report this week documenting the current state of deepfake technology. Deepfakes are currently not mainstream: they still require specialized skills to produce, and they often leave artifacts within the video, like glitches and pixelation, that make the forgery obvious.

But the technology has advanced at a rapid pace, and the amount of data required to fake a video has dropped dramatically. Two weeks ago, Samsung demonstrated that it was possible to create an entire video out of a single photo; this week university and industry researchers demoed a new tool that allows users to edit someone’s words by typing what they want the subject to say.

It’s thus only a matter of time before deepfakes proliferate, says Sam Gregory, the program director of Witness. “Many of the ways that people would consider using deepfakes—to attack journalists, to imply corruption by politicians, to manipulate evidence—are clearly evolutions of existing problems, so we should expect people to try on the latest ways to do those effectively,” he says.

The report outlines a strategy for how to prepare for such an impending future. Many of the recommendations and much of the supporting evidence also align with the proposals that will appear in the House bill.

The report found that current investments by researchers and tech companies in deepfake generation far outweigh those in deepfake detection. Adobe, for example, has produced many tools to make media alterations easier, including a recent feature for removing objects in videos; it has not, however, provided a foil to them.

The result is a mismatch between the real-world nature of media manipulation and the tools available to fight it. “If you’re creating a tool for synthesis or forgery that is seamless to the human eye or the human ear, you should be creating tools that are specifically designed to detect that forgery,” says Gregory. The question is how to get toolmakers to redress that imbalance.

Like the House bill, the report also recommends that social-media and search companies do a better job of integrating manipulation detection capabilities into their platforms. Facebook could invest in object removal detection, for example, to counter Adobe’s feature as well as other rogue editing techniques.

It should then clearly label videos and images in users’ newsfeeds to call out when they have been edited in ways invisible to the human eye. Google, as another example, should invest in reverse video search to help journalists and viewers quickly pinpoint the original source of a clip.

Beyond Congress

Despite the close alignment of the report with the draft bill, Gregory cautions that the US Congress should think twice about passing laws on deepfakes anytime soon. “It’s early to be regulating deepfakes and synthetic media,” he says, though he makes exceptions for very narrow applications, such as their use for producing nonconsensual sexual imagery. “I don’t think we have a good enough sense of how societies and platforms will handle deepfakes and synthetic media to set regulations in place,” he adds.

Gregory worries that the current discussion in Washington could lead to decisions that have negative repercussions later. US regulations could heavily shape what other countries do, for example. And it’s easy to see how in countries with more authoritarian governments, politician-protecting regulations could be used to justify the takedown of any content that’s controversial or criticizes political leaders.

Nkonde agrees that Congress should take a measured and thoughtful approach to the issue, and consider more than just its impact on politics. "I'm really hoping they will talk [during the hearing] about how many people this technology impacts," she says, "and the psychological impact of not being able to believe what you can see and hear."
