The new benchmark quantum computers must beat to achieve quantum supremacy

Twice a year, the TOP500 project publishes a ranking of the world’s most powerful computers. The list is eagerly awaited and hugely influential. Global superpowers compete to dominate the rankings, and at the time of writing China looms largest, with 229 devices on the list.

The US has just 121, but this includes the world’s most powerful: the Summit supercomputer at Oak Ridge National Laboratory in Tennessee, which was clocked at 143 petaflops (143 thousand million million floating point operations per second).

The ranking is determined by a benchmarking program called Linpack, which is a collection of Fortran subroutines that solve a range of linear equations. The time taken to solve the equations is a measure of the computer’s speed.
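To make the idea concrete, here is a minimal sketch of a Linpack-style measurement in pure Python. It is not the Linpack code itself, which is written in Fortran; the function names `solve_dense` and `benchmark` are hypothetical, and the operation count uses only the leading-order estimate of roughly (2/3)n³ floating point operations for solving a dense n-by-n system.

```python
import random
import time


def solve_dense(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Work on copies so the caller's data is left untouched.
    a = [row[:] for row in a]
    b = b[:]
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution on the resulting upper-triangular system.
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        s = sum(a[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (b[row] - s) / a[row][row]
    return x


def benchmark(n, seed=0):
    """Time one random n-by-n solve and return an estimated flop rate."""
    rng = random.Random(seed)
    a = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(n)]
    b = [rng.uniform(-1, 1) for _ in range(n)]
    start = time.perf_counter()
    solve_dense(a, b)
    elapsed = max(time.perf_counter() - start, 1e-12)  # guard against a zero reading
    flops = (2 / 3) * n ** 3  # leading-order operation count (an approximation)
    return flops / elapsed


if __name__ == "__main__":
    print(f"{benchmark(100):.3e} floating point operations per second")
```

The rate this prints on a laptop will be many orders of magnitude below a petaflop, which is a useful reminder of the scale of the machines on the list.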

There is no shortage of controversy over this choice of benchmark. Computer architectures are usually optimized to solve specific problems, and many of these are very different from the Linpack challenge. Quantum computers, for example, are entirely unsuited to solving these kinds of problems.

And that raises an important question. Quantum computers are on the verge of outperforming the most powerful supercomputers for certain kinds of problems, but exactly how powerful are they? At issue is the question of how to measure their performance and compare it with that of classical computers.

Today we get an answer thanks to the work of Benjamin Villalonga at the Quantum Artificial Intelligence Lab at NASA Ames Research Center in Mountain View, California, and a group of colleagues who have developed a benchmarking test that works on both classical and quantum devices. In this way, it is possible to compare their performance.

What’s more, the team has used the new test to put Summit, the world’s most powerful supercomputer, through its paces, running it at 281 petaflops in single precision. The result is the benchmark that quantum computers must beat to finally establish their supremacy in the rankings.

Finding a good measure of quantum computing power is no easy task. For a start, computer scientists have long known that quantum computers can outperform their classical counterparts in only a limited number of highly specialized tasks. And even then, no quantum computer is currently powerful enough to perform any of them particularly well because, for example, they are incapable of error correction.

So Villalonga and co looked for a much more fundamental test of quantum computing power that would work equally well for today’s primitive devices and tomorrow’s more advanced quantum machines, and could also be simulated on classical machines.

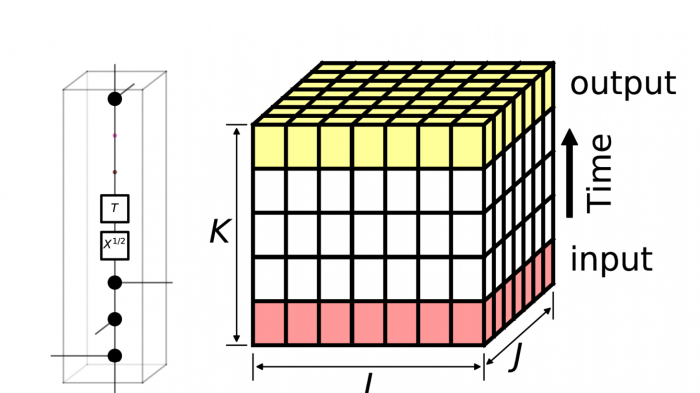

Their chosen problem is to simulate the evolution of quantum chaos using random quantum circuits. Simple quantum computers can do this because the process does not require powerful error correction, and it is relatively straightforward to filter out results that have been overwhelmed by noise.
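For a sense of what simulating a random quantum circuit means on a classical machine, here is a toy state-vector simulator. It is a sketch under stated assumptions, not the team's method: the gate set (square roots of X and Y, the T gate, and controlled-Z between neighboring qubits on a line), the layout, and the function names are all illustrative choices that do not come from the article.

```python
import cmath
import math
import random

# Single-qubit gates as 2x2 matrices. The choice of gates is an assumption.
SQRT_X = [[0.5 + 0.5j, 0.5 - 0.5j], [0.5 - 0.5j, 0.5 + 0.5j]]
SQRT_Y = [[0.5 + 0.5j, -0.5 - 0.5j], [0.5 + 0.5j, 0.5 + 0.5j]]
T_GATE = [[1, 0], [0, cmath.exp(1j * math.pi / 4)]]
GATES = [SQRT_X, SQRT_Y, T_GATE]


def apply_single(state, gate, qubit):
    """Apply a 2x2 gate to one qubit of the state vector, in place."""
    stride = 1 << qubit
    for base in range(len(state)):
        if (base & stride) == 0:
            a0, a1 = state[base], state[base | stride]
            state[base] = gate[0][0] * a0 + gate[0][1] * a1
            state[base | stride] = gate[1][0] * a0 + gate[1][1] * a1


def apply_cz(state, q1, q2):
    """Apply controlled-Z: flip the sign where both qubits are 1."""
    mask = (1 << q1) | (1 << q2)
    for i in range(len(state)):
        if (i & mask) == mask:
            state[i] = -state[i]


def simulate_random_circuit(n_qubits, depth, seed=0):
    """Return the output probabilities of a random circuit on a line of qubits."""
    rng = random.Random(seed)
    state = [0j] * (1 << n_qubits)
    state[0] = 1 + 0j  # start in the all-zeros state
    for layer in range(depth):
        for q in range(n_qubits):
            apply_single(state, rng.choice(GATES), q)
        # Entangle alternating neighbor pairs: even pairs, then odd pairs.
        for q in range(layer % 2, n_qubits - 1, 2):
            apply_cz(state, q, q + 1)
    return [abs(amplitude) ** 2 for amplitude in state]


if __name__ == "__main__":
    probabilities = simulate_random_circuit(n_qubits=4, depth=8)
    print(len(probabilities), "outcomes, total probability", sum(probabilities))
```

Every gate here is unitary, so the probabilities should sum to 1 up to rounding error. The list it returns has one entry per possible measurement outcome, which is where the trouble starts as qubits are added.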

It is also straightforward for classical machines to simulate quantum chaos. But the classical computing power required to do this rises exponentially with the number of qubits involved.
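The exponential growth is easy to see from the memory a naive simulation needs. The sketch below assumes the simulator stores one complex amplitude for every one of the 2^n possible states, at 8 bytes each (single precision). Both the storage strategy and the function name `state_vector_bytes` are illustrative assumptions; other simulation strategies may avoid holding the full vector.

```python
BYTES_PER_AMPLITUDE = 8  # assumption: one single-precision complex number


def state_vector_bytes(n_qubits):
    """Memory needed to hold all 2**n_qubits amplitudes at once."""
    return (2 ** n_qubits) * BYTES_PER_AMPLITUDE


if __name__ == "__main__":
    for n in (30, 40, 49, 50):
        pebibytes = state_vector_bytes(n) / 2 ** 50
        print(f"{n} qubits: {pebibytes:g} PiB")
```

Each extra qubit doubles the requirement. Under these assumptions, 30 qubits fit in 8 GiB, while 49 qubits need 4 PiB.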

Two years ago, physicists determined that a quantum computer with at least 50 qubits should achieve quantum supremacy over the classical supercomputers of the time.

But the goalposts are constantly moving as supercomputers are upgraded. For example, Summit is capable of significantly more petaflops now than in the last ranking in November, when it tipped the scales at 143 petaflops. Indeed, Oak Ridge National Laboratory this week unveiled plans to build a 1.5-exaflop machine by 2021. So being able to continually benchmark these machines against the emerging quantum computers is increasingly important.

Researchers at NASA and Google have created an algorithm called qFlex that simulates random quantum circuits on a classical machine. Last year, they showed that qFlex could simulate and benchmark the performance of a Google quantum computer called Bristlecone, which has 72 qubits. To do this, they used a supercomputer at NASA Ames with 20 petaflops of number-crunching power.

Now they’ve shown that the Summit supercomputer can simulate the performance of a much larger quantum device. “On Summit, we were able to achieve a sustained performance of 281 Pflop/s (single precision) over the entire supercomputer, simulating circuits of 49 and 121 qubits,” they say.

A circuit of 121 qubits is beyond the capability of any existing quantum computer. So classical computers remain a hair’s breadth ahead in the rankings.

But this is a race they are destined to lose. Plans are already afoot to build quantum computers with 100+ qubits within the next few years. And as quantum capabilities accelerate, the challenge of building ever more powerful classical machines is already coming up against the buffers.

The limiting factor for new machines is no longer the hardware but the power available to keep them humming. The Summit machine already requires a 14-megawatt power supply. That’s enough to light up an entire medium-sized town. “To scale such a system by 10x would require 140 MW of power, which would be prohibitively expensive,” say Villalonga and co.

By contrast, quantum computers are frugal. Their main power requirement is the cooling for superconducting components. So a 72-qubit computer like Google’s Bristlecone, for example, requires about 14 kW. “Even as qubit systems scale up, this amount is unlikely to significantly grow,” say Villalonga and co.
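The arithmetic behind the power comparison is simple enough to check directly. This sketch uses only the figures quoted in the article (14 MW for Summit, about 14 kW for Bristlecone, 281 petaflops sustained in single precision); the constant and function names are hypothetical, and the linear scaling rule simply mirrors the quoted 10x estimate.

```python
SUMMIT_POWER_W = 14e6            # 14 megawatts, as quoted
BRISTLECONE_POWER_W = 14e3       # about 14 kilowatts, as quoted
SUMMIT_SUSTAINED_FLOPS = 281e15  # 281 petaflops, single precision, as quoted


def scaled_power(base_watts, factor):
    """Power draw if a system scales linearly with size (an assumption)."""
    return base_watts * factor


def power_ratio(classical_watts, quantum_watts):
    """How many times more power the classical machine draws."""
    return classical_watts / quantum_watts


if __name__ == "__main__":
    print("Summit scaled 10x:", scaled_power(SUMMIT_POWER_W, 10) / 1e6, "MW")
    print("Summit vs Bristlecone:", power_ratio(SUMMIT_POWER_W, BRISTLECONE_POWER_W), "x")
    print("Summit flops per watt:", SUMMIT_SUSTAINED_FLOPS / SUMMIT_POWER_W)
```

On these figures Summit draws 1,000 times the power of Bristlecone, and a tenfold larger Summit would need the 140 MW the researchers cite.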

So in the efficiency rankings, quantum computers are destined to wipe the floor with their classical counterparts sooner rather than later.

One way or another, quantum supremacy is coming. If this work is anything to go by, the benchmark that will prove it is likely to be qFlex.

Ref: arxiv.org/abs/1905.00444 : Establishing the Quantum Supremacy Frontier with a 281 Pflop/s Simulation
