Trained neural nets perform much like humans on classic psychological tests

In the early part of the 20th century, a group of German experimental psychologists began to question how the brain acquires meaningful perceptions of a world that is otherwise chaotic and unpredictable. To answer this question, they developed the notion of the “gestalt effect”—the idea that when it comes to perception, the whole is something other than the parts.

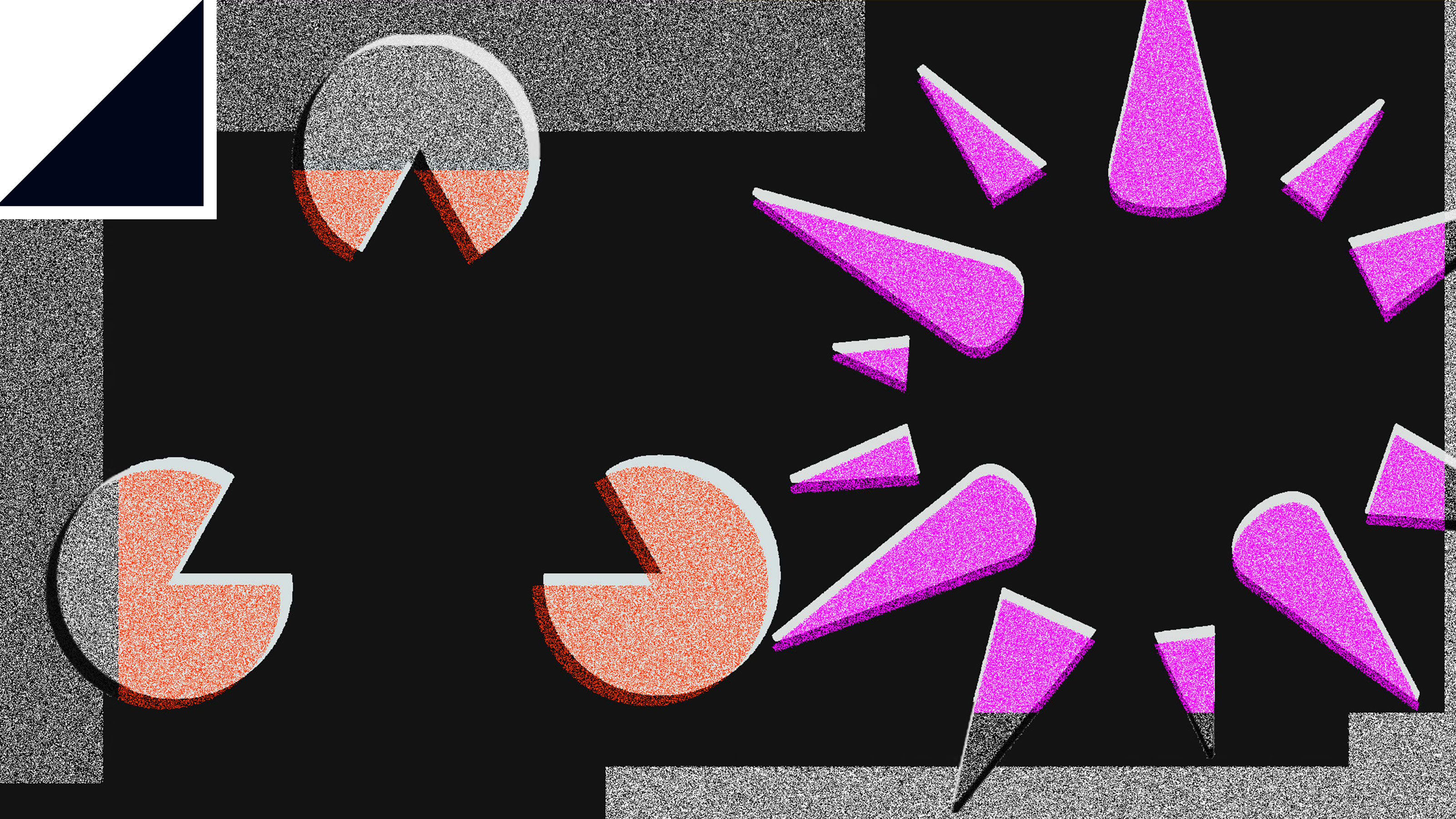

Since then, psychologists have discovered that the human brain is remarkably good at perceiving complete pictures on the basis of fragmentary information. A good example is the figure shown here. The brain perceives two-dimensional shapes such as a triangle and a square, and even a three-dimensional sphere. But none of these shapes is explicitly drawn. Instead, the brain fills in the gaps.

A natural extension of this work is to ask whether gestalt effects occur in neural networks. These networks are inspired by the human brain. Indeed, researchers studying machine vision say the deep neural networks they have developed turn out to be remarkably similar to the visual system in primate brains and to parts of the human cortex.

That leads to an interesting question: can neural networks perceive a whole object by looking merely at its parts, as humans do?

Today we get an answer thanks to the work of Been Kim and colleagues at Google Brain, the company’s AI research division in Mountain View, California. The researchers have tested various neural networks using the same gestalt experiments designed for humans. And they say they have good evidence that machines can indeed perceive whole objects using observations of the parts.

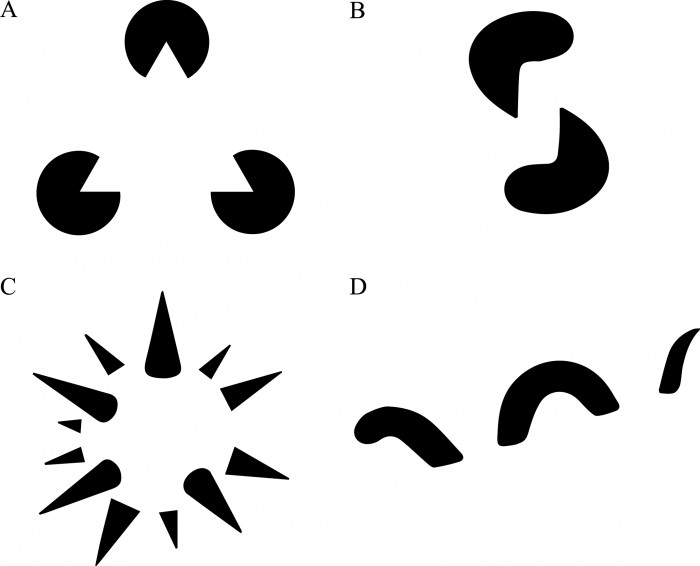

Kim and co’s experiment is based on the triangle illusion shown in the figure. They first create three databases of images for training their neural networks. The first consists of ordinary complete triangles displayed in their entirety.

The next database shows only the corners of the triangles, with lines that must be interpolated to perceive the complete shape. This is the illusory data set. When humans view these types of images, they tend to close the gaps and end up perceiving the triangle as a whole. “We aim to determine whether neural networks exhibit similar closure effects,” say Kim and co.

The final database consists of similar “corners” but randomly oriented so that the lines cannot be interpolated to form triangles. This is the non-illusory data set.
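The three kinds of stimuli can be sketched in a few lines of Python. This is only an illustration, not the team's code: the function names, the equilateral geometry, the stub length `fraction`, and the choice to represent each image as a list of line segments rather than pixels are all assumptions made here.

```python
import math
import random

def triangle_vertices(cx, cy, radius, rotation):
    """Vertices of an equilateral triangle centered at (cx, cy)."""
    return [
        (cx + radius * math.cos(rotation + k * 2 * math.pi / 3),
         cy + radius * math.sin(rotation + k * 2 * math.pi / 3))
        for k in range(3)
    ]

def complete_triangle(verts):
    """Three full edges: the ordinary, fully drawn triangle."""
    return [(verts[i], verts[(i + 1) % 3]) for i in range(3)]

def corner_segments(verts, fraction=0.25, rng=None):
    """Two short stubs at each vertex, six segments in total.

    With rng=None the stubs lie along the true edges, so they could be
    interpolated into a triangle (the illusory condition). With an rng,
    each corner is rotated about its own vertex by a random angle, so
    the stubs no longer line up (the non-illusory condition).
    """
    segments = []
    for i, v in enumerate(verts):
        # Assumed rotation range: keeps scrambled corners away from alignment.
        angle = rng.uniform(0.5, 2 * math.pi - 0.5) if rng else 0.0
        for j in (1, 2):
            other = verts[(i + j) % 3]
            dx = (other[0] - v[0]) * fraction
            dy = (other[1] - v[1]) * fraction
            rx = dx * math.cos(angle) - dy * math.sin(angle)
            ry = dx * math.sin(angle) + dy * math.cos(angle)
            segments.append((v, (v[0] + rx, v[1] + ry)))
    return segments

verts = triangle_vertices(0.0, 0.0, 1.0, rotation=0.3)
complete = complete_triangle(verts)
illusory = corner_segments(verts)
non_illusory = corner_segments(verts, rng=random.Random(0))
```

Varying `radius` and `rotation` in a loop would produce a family of such stimuli, in the spirit of the size and orientation changes the researchers describe.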

By varying the size and orientation of these shapes, the team created almost 1,000 different images to train their machines.

Their approach is to train a neural network to recognize ordinary complete triangles and then to test whether it classifies the images in the illusory data set as complete triangles (while ignoring the images in the non-illusory data set). In other words, they test whether the machine can fill in the gaps in the images to form a complete picture.
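One simple way to put a number on that test is to compare how often a network says "triangle" for each set. The sketch below assumes a hypothetical `predict_triangle` function that returns True when a trained network labels a stimulus as a complete triangle. The score is a stand-in chosen for illustration and is not claimed to be the measure used in the paper.

```python
from statistics import mean
from typing import Any, Callable, Sequence

def closure_score(
    predict_triangle: Callable[[Any], bool],
    illusory: Sequence[Any],
    non_illusory: Sequence[Any],
) -> float:
    """Rate of 'triangle' answers on illusory stimuli minus the rate
    on non-illusory stimuli (hypothetical measure, range -1 to 1)."""
    illusory_rate = mean(float(predict_triangle(x)) for x in illusory)
    control_rate = mean(float(predict_triangle(x)) for x in non_illusory)
    return illusory_rate - control_rate
```

A score near 1 would mean the network treats the illusory corners like complete triangles while rejecting the scrambled ones. A score near 0 would mean it does not tell the two sets apart. The same function could be run on an untrained network for comparison.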

They also compare the behavior of a trained network with the behavior of an untrained network or one trained on random data.

The results make for interesting reading. It turns out that the behavior of trained neural networks shows remarkable similarities to human gestalt effects. “Our findings suggest that neural networks trained with natural images do exhibit closure, in contrast to networks with randomized weights or networks that have been trained on visually random data,” say Kim and co.

That’s a fascinating result. And not just because it shows how neural networks mimic the brain to make sense of the world.

The bigger picture is that the team’s approach opens the door to an entirely new way of studying neural networks using the tools of experimental psychology. “We believe that exploring other Gestalt laws—and more generally, other psychophysical phenomena—in the context of neural networks is a promising area for future research,” say Kim and co.

That looks like a first step into a new field of machine psychology. As the Google team put it: “Understanding where humans and neural networks differ will be helpful for research on interpretability by enlightening the fundamental differences between the two interesting species.” The German experimental psychologists of the early 20th century would surely have been fascinated.

Ref: arxiv.org/abs/1903.01069: Do Neural Networks Show Gestalt Phenomena? An Exploration of the Law of Closure
