Quantum computing should supercharge this machine-learning technique

Quantum computing and artificial intelligence are both ridiculously hyped. But it seems the two may indeed combine to open up new possibilities.

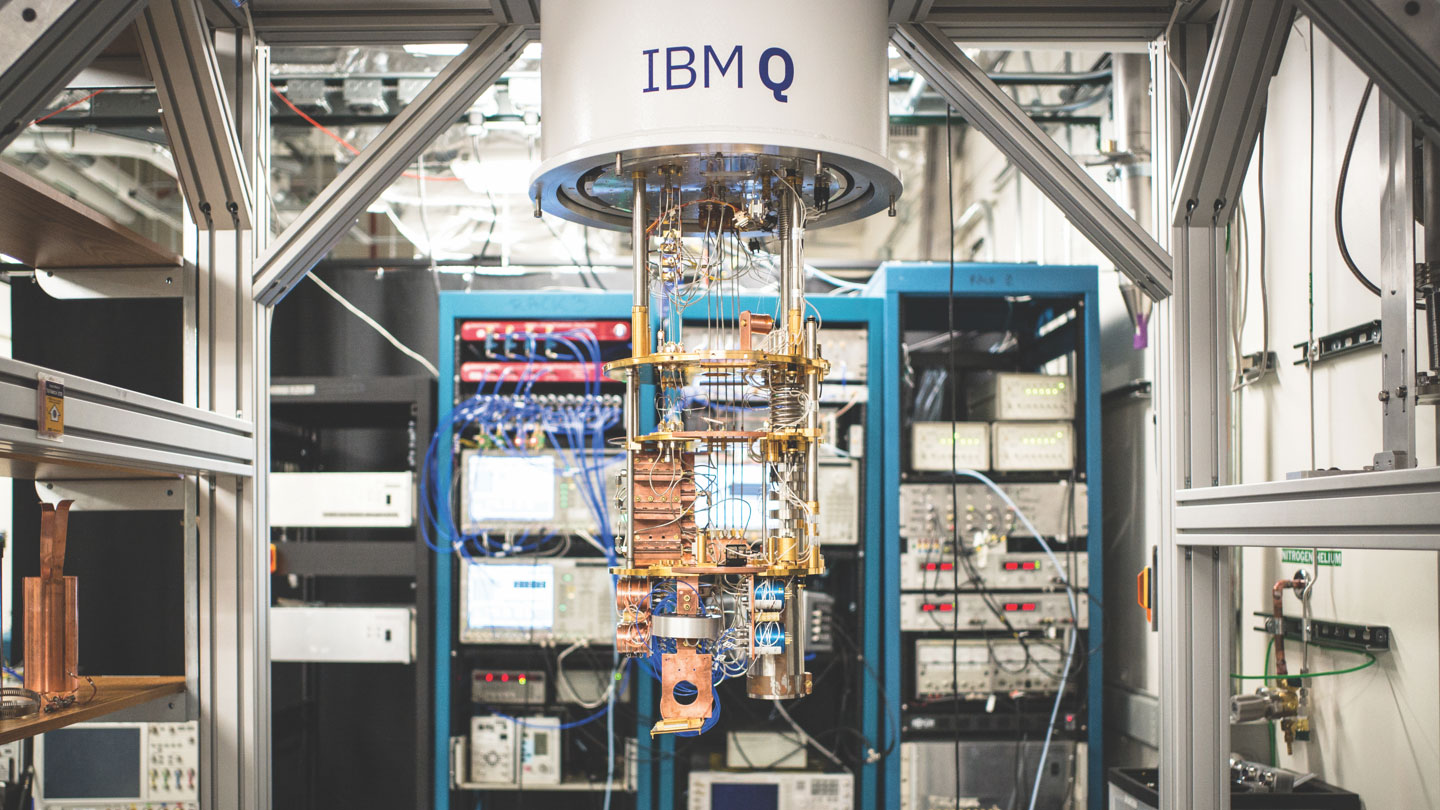

In a research paper published today in the journal Nature, researchers from IBM and MIT show how an IBM quantum computer can accelerate a specific type of machine-learning task called feature mapping. The team says that future quantum computers should allow machine learning to hit new levels of complexity.

As first imagined decades ago, quantum computers were seen as a different way to compute information. In principle, by exploiting the strange, probabilistic nature of physics at the quantum, or atomic, scale, these machines should be able to perform certain kinds of calculations at speeds far beyond those possible with any conventional computer (see “What is a quantum computer?”). There is a huge amount of excitement about their potential at the moment, as they are finally on the cusp of reaching a point where they will be practical.

At the same time, because we don’t yet have large quantum computers, it isn’t entirely clear how they will outperform ordinary supercomputers—or, in other words, what they will actually do (see “Quantum computers are finally here. What will we do with them?”).

Feature mapping is a technique that converts data into a mathematical representation that lends itself to machine-learning analysis. How well the resulting machine-learning model performs depends on the efficiency and quality of this conversion. Using a quantum computer, it should be possible to perform the conversion on a scale that was previously impossible.
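To make the idea concrete, here is a minimal classical sketch of a feature map, written in Python. It is not the quantum method from the paper; the toy data, the `feature_map` and `linear_classify` functions, and the hand-picked weights are all assumptions chosen for illustration. It shows how re-representing data can turn a problem that no straight-line rule can solve into one that a simple linear rule handles.

```python
# Illustrative only: a classical feature map, not the quantum circuit from the paper.
# All names and values below are hypothetical choices for this sketch.

def feature_map(x):
    """Map a single number x to the two-dimensional feature vector (x, x squared)."""
    return (x, x * x)


def linear_classify(features, weights, bias):
    """Return +1 or -1 depending on the sign of a linear score."""
    score = sum(w * f for w, f in zip(weights, features)) + bias
    return 1 if score > 0 else -1


# Toy data: a point is labeled +1 if it lies far from zero, -1 if it lies close.
# No single cutoff on x alone separates the two classes, because the +1 points
# sit on both sides of the -1 points.
points = [-2.0, -1.5, -0.5, 0.0, 0.5, 1.5, 2.0]
labels = [1 if abs(x) > 1 else -1 for x in points]

# After the mapping, the second feature (x squared) separates the classes with
# one straight line: x squared = 1. These weights are picked by hand, not learned.
weights, bias = (0.0, 1.0), -1.0
predictions = [linear_classify(feature_map(x), weights, bias) for x in points]

print(predictions == labels)
```

The quantum version described by the researchers would, in principle, replace `feature_map` with a mapping into a space far too large for a conventional computer to handle, while the classification step stays comparatively simple.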

The MIT-IBM researchers performed their simple calculation using a two-qubit quantum computer. Because the machine is so small, it doesn’t prove that bigger quantum computers will have a fundamental advantage over conventional ones, but it suggests that would be the case. The largest quantum computers available today have around 50 qubits, although not all of those qubits can be used for computation because of the need to correct for errors that creep in as a result of the fragile nature of these quantum bits.

“We are still far off from achieving quantum advantage for machine learning,” the IBM researchers, led by Jay Gambetta, write in a blog post. “Yet the feature-mapping methods we’re advancing could soon be able to classify far more complex data sets than anything a classical computer could handle. What we’ve shown is a promising path forward.”

“We’re at a stage where we don’t have applications next month or next year, but we are in a very good position to explore the possibilities,” says Xiaodi Wu, an assistant professor at the University of Maryland’s Joint Center for Quantum Information and Computer Science. Wu says he expects practical applications to be discovered within a year or two.

Quantum computing and AI are hot right now. Just a few weeks ago, Xanadu, a quantum computing startup based in Toronto, came up with an almost identical approach to that of the MIT-IBM researchers, which the company posted online. Maria Schuld, a machine-learning researcher at Xanadu, says the recent work may be the start of a flurry of research papers that combine the buzzwords “quantum” and “AI.”

“There is a huge potential,” she says.
