Personal AI Privacy Watchdog Could Help You Regain Control of Your Data

Keeping track of the personal data your mobile apps are collecting, using, and sharing requires making sense of long, ambiguous, and often confusing privacy policies and permission settings. Now there’s an app for that.

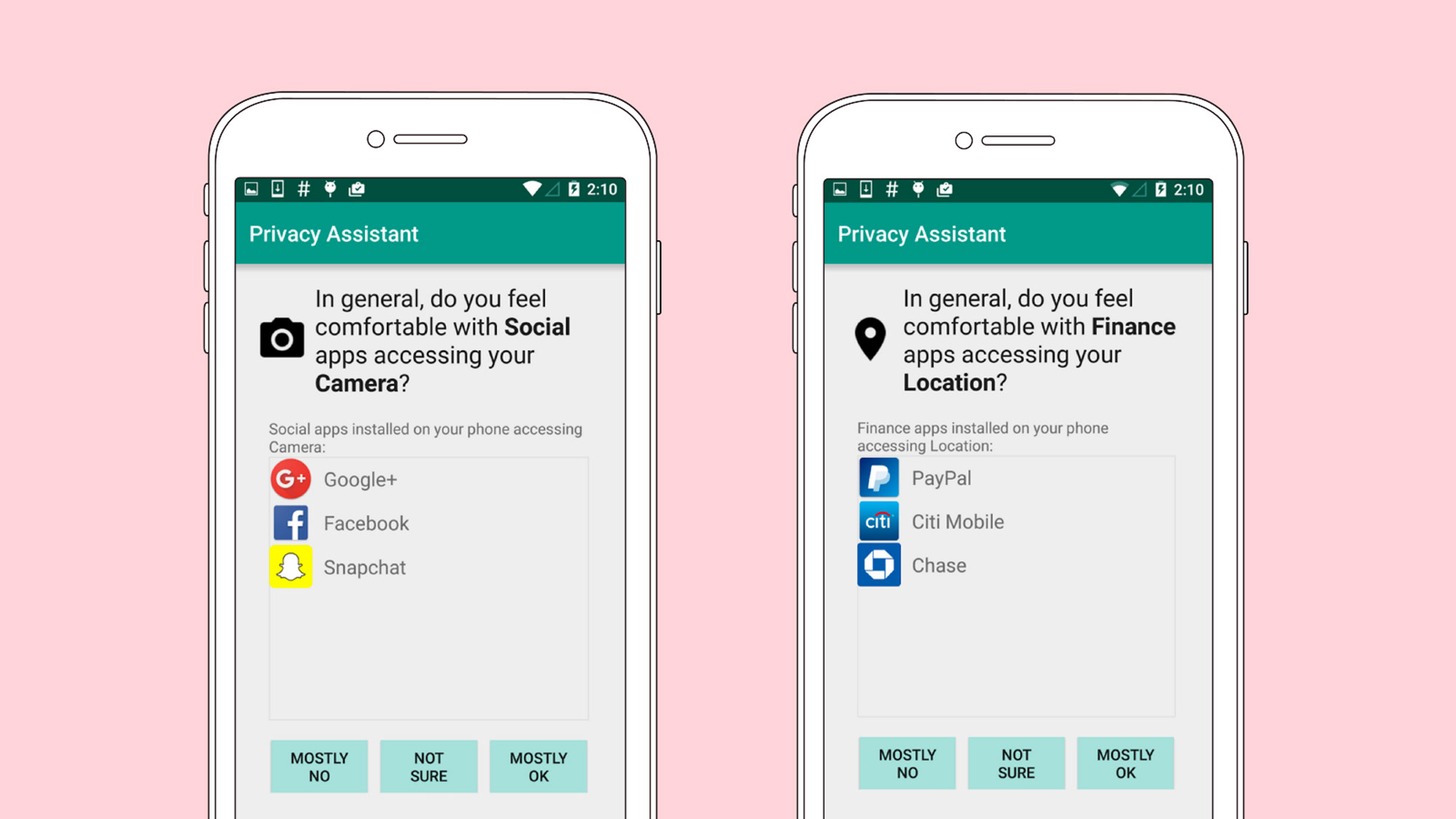

Privacy Assistant, a creation of researchers at Carnegie Mellon University, uses machine learning to give users more control over the information that the apps on their Android phones collect. It combines a user’s answers to several questions (for example, “In general, do you feel comfortable with finance apps accessing your location?”) with information it gleans from analyzing the apps on the user’s phone to make specific recommendations about how that person should manage permissions.

The app is available only for rooted devices, meaning their operating systems have been unlocked to allow for unapproved apps. But Norman Sadeh, a computer science professor who leads CMU’s Personalized Privacy Assistant Project, hopes that a major tech company will eventually see the technology as a way to differentiate itself from its competitors, paving the way for it to become a mainstream tool.

Collection and analysis of user data for targeted advertising is growing more prevalent and sophisticated, and for many consumers it may as well happen in a black box. Most people don’t read privacy policies or pay close attention to app permissions, and even if they do, the language is often unhelpfully vague. According to recent research from Pew, 91 percent of U.S. adults believe that consumers have lost control of how companies collect and use personal information. If federal regulators continue to take a relatively hands-off approach to consumer privacy regulation, more people may turn to technologies like Privacy Assistant to help them maintain some level of control over their data (see “Welcome to Internet Privacy Limbo”).

The CMU group has shown that machine learning can model the privacy preferences of individuals. A user’s answers to a few questions are enough to place them into one of a few like-minded “clusters.” Privacy Assistant recommends data sharing settings—pertaining to location information, contacts, messages, phone call data, and the phone’s camera and microphone—based on a profile of the group that best fits the user.
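As a rough illustration of the idea described above, and not the CMU group's actual model, the matching step can be sketched as a nearest-profile lookup. Everything in this sketch is an assumption made for illustration: the three questions, the profile names, the centroid values, and the recommended settings are all invented, and a real system would learn its clusters from data rather than hard-code them.

```python
from math import dist

# Hypothetical question order: comfort (0.0 = never, 1.0 = always) with
# finance apps using location, social apps using contacts, and games
# using the microphone. All names and numbers below are invented.
PROFILES = {
    "protective": {
        "centroid": (0.1, 0.2, 0.0),
        "settings": {"location": "deny", "contacts": "deny", "microphone": "deny"},
    },
    "selective": {
        "centroid": (0.5, 0.6, 0.1),
        "settings": {"location": "ask", "contacts": "allow", "microphone": "deny"},
    },
    "relaxed": {
        "centroid": (0.9, 0.9, 0.7),
        "settings": {"location": "allow", "contacts": "allow", "microphone": "ask"},
    },
}


def assign_profile(answers):
    """Return the name of the profile whose centroid is closest to the answers."""
    return min(PROFILES, key=lambda name: dist(PROFILES[name]["centroid"], answers))


def recommend_settings(answers):
    """Return the permission settings recommended for the best-fitting profile."""
    return PROFILES[assign_profile(answers)]["settings"]
```

With these made-up profiles, a user who answers `(0.4, 0.7, 0.2)` lands closest to the "selective" group, so `recommend_settings` would suggest allowing contacts, asking before sharing location, and denying microphone access.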

Sadeh’s team is also exploring the idea of nudges, or notifications that inform or remind people which data they are sharing and prompt them to review their settings. The group has shown that nudges that tell people things like how often their location data has been collected, or how many apps are collecting their contact information or trying to access their microphone or camera, inspire people to look again at their settings. “Many people who otherwise would not take a look at their settings realize that there is a lot of stuff that they are not aware of,” says Sadeh.

Users of Apple’s iOS may be familiar with notifications that check whether they are comfortable with a given app sharing their location data. But these notifications can also be frustrating because they don’t say why the app is collecting that data. By scanning the code of hundreds of thousands of free apps, the CMU group has found that when apps collect location information, more often than not it is for things other than the core functioning of the app, often for advertising purposes.

Sadeh says there should be finer controls, which would let someone say, for instance, “I’m willing to let the app access my location for the purpose of giving me navigation or telling me about nearby restaurants or what have you, but I’m not willing to share my location with analytics companies that are building extensive trails of my whereabouts.”
