Treating Addiction with an App

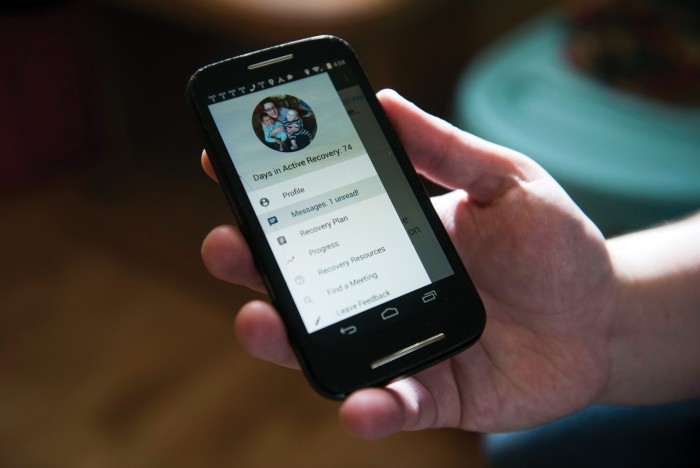

When I spoke to Tasha Hedstrom this winter, she had been sober for more than 61 days. After struggling with opioid addiction for 15 years, Hedstrom is taking Vivitrol, a drug that blocks the pleasurable effects of opioids and reduces cravings. She goes to a court-mandated recovery program three days a week and tracks her progress on a phone app she found on Facebook, called Triggr Health.

Hedstrom says she has never found peer support programs like Narcotics Anonymous helpful. “I don’t like the atmosphere. I feel like people are talking about using and glorifying it,” she says. “I don’t like telling my story a million different times.”

Triggr has been a different way to access support. In addition to tracking the number of days she has been in recovery, the app connects Hedstrom to a team of recovery coaches, who chat with her periodically throughout the day by text and app message. If she has not contacted Triggr for a full day, the team contacts her. Generally, they talk about how her day is going or goals she has set for herself, but recently they helped her through an unexpected challenge. A stranger followed her car into a lot and parked next to her, then offered her drugs. Not sure what to do, Hedstrom texted Triggr. “It’s not just about addiction,” she says. “It’s like we’re on a friend basis. You need to have backup supports.”

In 2015, 33,000 people in the United States died from opioid overdoses—the highest number ever recorded, and more than double the 2005 figure, according to the National Institute on Drug Abuse. More than half a million hospitalizations related to opioid dependence occur each year, at a cost of $15 billion, according to a recent study. Tens of billions more are spent on clinics and other treatment.

In total, 23 million Americans have a substance use disorder involving illicit drugs or alcohol, according to 2013 data collected by the Substance Abuse and Mental Health Services Administration, part of the U.S. Department of Health and Human Services. But fewer than 20 percent of those who need treatment will receive it. And while the most common form of treatment, Alcoholics Anonymous or Narcotics Anonymous, can be quite effective for some, according to one survey 75 percent of people in such programs relapse in their first year. Though a wide range of treatments are available, says James R. McKay, an expert in addiction and a professor of psychology at the University of Pennsylvania, “there are people for whom none of those things really work.”

New technologies are offering yet another option, making use of the computers we carry in our pockets. Of the 165,000 smartphone apps for health care, mental-health apps are the single largest category, including hundreds of addiction-related options offering inspirational quotes, directions to nearby AA meetings, hypnosis guides, and online peer support groups.

Triggr is more ambitious. Using data collected from smartphones, the company aims not only to help people handle cravings and the stresses that trigger drug use but to actually predict when someone is going to relapse and intervene. Triggr collects clues from things like screen engagement, texting patterns, phone logs, sleep history, and location. Those are combined with information gathered from participants’ communications with the startup’s staff on its platform—such as drug preference, drug history, and the presence of dangerous words like “craving” or “stress”—and fed into a series of algorithms. The system has access to general information about other texting and e-mail activity but not to the content of private texts or calls. Using machine learning, it searches for patterns that point to an increased likelihood of relapse. When the likelihood rises to a dangerous level, a member of the recovery team steps in or alerts a customer’s outside care team.

Neither the company nor clients will say how much the platform costs to use, though in some pilot projects Triggr seems to be charging very little or nothing. Hedstrom downloaded the app for free but now pays a monthly fee for using the system, which she says is less than two dollars a day. The most promising way for Triggr to make money could be to share in the savings that use of the app could bring to the insurers and government agencies that pay the medical costs associated with addiction. An initial 30-day inpatient treatment can cost $17,000, and emergency room visits and other associated costs add up quickly.

Chris Olsen, a partner at the venture capital firm Drive Capital, one of Triggr’s investors, says it has been estimated that Ohio Medicaid is spending as much as $5 billion a year on hepatitis C infections, which are strongly correlated with injection drug use. “If we can reduce that,” says Olsen, “I just believe there will be a revenue model down the line.”

Among those using the app today are patients who have been through rehab at Sprout Health Group, a chain of addiction treatment centers headquartered in New Jersey. Sprout CEO Arel Meister-Aldama says that before Triggr, a patient who had been in a full-time program for 45 days on average and then returned to the community would have been tracked with periodic phone calls and invited to alumni events, but it was hard to know how people were really doing. Now Sprout’s counselors get alerts from Triggr when a patient seems at risk. “There are false alarms, but often we’ll catch people on the way to their drug dealers. Or they’ll be sitting outside a bar thinking about going in,” says Meister-Aldama.

Sprout’s readmission rates have actually gone up since the company started using Triggr, but overall cost per patient has declined. That’s because its counselors have been able to help patients earlier, avoiding expensive stays in treatment facilities and emergency treatment. With the data he is getting from Triggr, Meister-Aldama says, he has a better understanding of what it will cost to treat each patient. He expects that in the future he will be able to agree to flat payments per patient instead of charging fees based on services.

The platform Meister-Aldama has found so useful wouldn’t work without ubiquitous smartphones and recent advances in machine learning. And it wouldn’t exist at all if it had not been for one college student’s pain—and her mother’s timely intervention.

Motherly intuition

John Haskell, Triggr’s cofounder and CEO, came up with the idea for the app and the broader system of care during a challenging period in his own life. While an undergraduate at Stanford, he battled manic depression, spending five years at school without earning a degree. And one of his friends at Stanford struggled with mental-health problems and substance abuse. She got to a point at which she did not want to continue with treatment and considered suicide. At a particularly critical moment, her mother called. The call set her daughter on a more positive path, and when Haskell asked the mother what had prompted the call just at that moment, she attributed it to “motherly intuition.”

Motherly intuition was something Haskell thought should be reproducible with the help of smart technology.

“She knew something was wrong. She could feel it. But what was particularly interesting about that experience was that it was all these data points. And all trackable on your phone,” he says. For example, his friend had always loved Words with Friends, an online multiplayer game similar to Scrabble, but she had stopped playing. She was sending texts in the middle of the night, an obvious sign she was not sleeping. “The concept of intuition is purely a data question,” says Haskell. “Why can’t you scale motherly intuition?”

Six years later, Haskell’s motherly-intuition machine occupies two long white tables in a second-floor walk-up in Chicago’s River North neighborhood. At one table sit a small group of programmers and data scientists, many with backgrounds at larger companies including Google, building the app and its platform. On the other side of a partial partition wall, at an identical white table, sit the recovery group, a team of four to five people who interact with participants on the platform. Everyone faces a computer screen.

The technologists work with the data that sensors are pulling off participants’ phones as well as from their interactions with the recovery team, identifying patterns that signal a move in the wrong direction. Twenty-four hours a day, seven days a week, Triggr actively watches over everyone on the platform, with a single member of the recovery team following 500 people at any time. Each participant has a rating on a scale of 1 to 10 based on the patterns Triggr’s algorithm is tracking. A 1 means things are going very well. A 10 is an alert that the person is exhibiting a pattern of behavior that may be on the edge of relapse and needs to be contacted.
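To make the 1-to-10 scale concrete, here is a minimal sketch of how a predicted relapse probability could be bucketed into such a rating. Triggr has not published how it computes its ratings, so the equal-width bands and the names `risk_rating` and `needs_contact` are assumptions made purely for illustration.

```python
def risk_rating(probability: float) -> int:
    """Map a relapse probability in [0, 1] to a 1-10 rating.

    Hypothetical mapping: ten equal-width bands, so [0, 0.1) -> 1
    and [0.9, 1.0] -> 10. The real scale may be built differently.
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    # int() truncates, so 0.5 * 10 -> 5 -> rating 6; cap the top band at 10.
    return min(10, int(probability * 10) + 1)


def needs_contact(rating: int) -> bool:
    """A 10 is the alert level at which the recovery team reaches out."""
    return rating >= 10


# Example with made-up probabilities for three clients.
for p in (0.05, 0.5, 0.95):
    rating = risk_rating(p)
    print(p, rating, needs_contact(rating))
```

In this sketch only the top band triggers outreach, mirroring the article's description of a 10 as the alert level.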

Most staff communication with clients takes place via text or app messaging. Without the clues they might get in person from eye contact and body language, or on the phone from someone’s tone of voice, the team relies heavily on alerts from its algorithms.

Are you a robot?

Triggr’s machine-learning systems have made the platform smarter over time by studying both those interactions with participants and the millions of data points collected from their smartphones. The systems search for anomalies, breaks from a client’s typical routine. As more people use the system and more data is gathered and studied, the ability to see signs of a potential relapse improves. Eighty-five percent accurate a year ago, Triggr can now predict with 92 percent accuracy when a client is likely to slip in the next three days. The early intervention such predictions make possible is significantly improving clients’ results, the company says.

The messiness of the data is what convinced Triggr’s data scientist, John Santerre, that machine learning could be effective against the problem. Some of the most important warning signs of an impending slip have nothing directly to do with drugs or alcohol. Instead, they’re life events, like the death of a family member or another user, an affair, an issue with housing. Just one deviation from a client’s normal routine—something as small as a text that comes in at an unusual time—increases the chance of relapse in the next few days. Triggr does not even need to know whom that text comes from or what it says. The interruption of routine is the critical clue.
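The idea that a text at an unusual time can be spotted without reading it can be sketched in a few lines. This example is not Triggr's method, which has not been published. It assumes the only input is the hour of day at which each message arrived, and the function name `unusual_hours` and the 2 percent threshold are hypothetical.

```python
from collections import Counter


def unusual_hours(history_hours, new_hours, min_share=0.02):
    """Return the hours in new_hours that are rare in a client's history.

    history_hours: hours of day (0-23) at which past texts arrived.
    new_hours: hours of day for recently received texts.
    min_share: assumed cutoff; an hour holding less than this share
        of past texts counts as a break from routine.
    Only timestamps are used, never sender or message content.
    """
    total = len(history_hours)
    if total == 0:
        return []  # no baseline yet, so nothing can be called unusual
    counts = Counter(history_hours)
    return [h for h in new_hours if counts[h] / total < min_share]


# Made-up history: texts usually arrive at 9 a.m., 1 p.m., and 8 p.m.
history = [9] * 40 + [13] * 30 + [20] * 30
print(unusual_hours(history, [13, 3]))  # the 3 a.m. text stands out
```

A real system would presumably weigh many such signals together. The sketch shows only that a routine can be modeled, and a deviation flagged, from metadata alone.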

Triggr is collecting every piece of data it can on how to help people resist an urge as it swells and then drops off, and has taken on the tricky task of building a system designed to work with minimal human input while producing a service customized to each participant. While algorithms may determine that a slip is coming, intervening to stop it isn’t necessarily suited to automation. “Our goal is to make it as human as possible,” says Haskell. Still, clients do sometimes ask the recovery coaches if they are robots. Tasha Hedstrom did; Triggr responded by asking if she was a robot. Humor is one of the techniques the algorithm has determined work well with some participants.

The coaches are always testing messages sent to clients in response to different types of issues. Those that resonate are shared with the engineering team; when a similar call comes in later, the system will know to suggest that effective response. Once Triggr determines that a person is in danger of relapsing, it’s time for the really hard part: intervention to stop the self-destructive behavior. Humans do oversee the interaction, but when someone’s risk is rising, a member of the recovery team is automatically alerted to the most effective way to reach out to that client and the type of message to which he or she is most likely to respond. This is as close to Haskell’s idea of digital intuition as Triggr has come so far.

No smartphone

A big focus for Haskell is developing connections to community service organizations, and on a wet morning in January, he was standing in a conference room in Framingham, Massachusetts, excitedly explaining the app to a group of counselors from the South Middlesex Opportunity Council (SMOC), a local nonprofit. SMOC had just launched Triggr as part of a program to connect with drug users in the emergency room after they have overdosed. Like many parts of the Northeast, the Midwest, and Appalachia, Framingham is suffering a rising number of opioid-related overdoses: they now average 10 a month.

Some counselors in the room worried that not all potential clients have smartphones. Others wanted a service Triggr does not offer: alerts when a client has contacted a drug dealer or used drugs again. Haskell had answers for every question, but a month and a half after the presentation, Krystin Fraser, who is running the grant, said that of the first eight people who signed up, only one agreed to download Triggr. Some do not have a smartphone, she explained, while others simply do not want someone watching them. Over the next month, 13 more people signed up for the app.

Most health apps are not regulated by the Food and Drug Administration, and the company has chosen not to publish any clinical trials of its platform, something it is not required to do. It is tracking the long-term outcomes for people who use Triggr, but its decision does put the burden on the company to show that it really has made something extraordinary. It is in a crowded field. “There’s been a glut of mental-health apps, most of questionable use and efficacy,” says John Torous, a director of the digital psychiatry program at Boston’s Beth Israel Deaconess Medical Center. Torous is part of a study using passive phone data to follow people suffering from schizophrenia, a mental disorder that is quite different from addiction but can feature similar underlying behavior, such as disrupted sleep. “People underestimate how complex it is to work with this data,” says Torous. “We’ve had mass-market smartphones for 10 years and we still haven’t revolutionized mental-health care. If this were as easy as building an app, in 10 years it would have been done. People are complex. We can collect all this data, but how do we analyze it in a valid way?”

Jukka-Pekka Onnela, a professor of biostatistics at Harvard’s T.H. Chan School of Public Health and Torous’s collaborator on the schizophrenia study, is more optimistic. As people use phones for more and more daily needs such as schedules, navigation, and communication, the data from these devices becomes “very, very powerful,” Onnela says. That’s especially so for conditions where behavior is strongly influenced by a person’s surroundings and recent history, as it is for people with psychological disorders or addiction.

When they’re awake, people’s phone screens may be on more than 20 times an hour. Onnela has found that frequency to be a reliable indicator of sleep patterns, something essential to understanding psychological illness and treating it.
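One simple way to turn screen activity into a sleep estimate is to look for the longest stretch in which the screen never came on. The sketch below assumes a list of screen-on timestamps is available. The function name `longest_screen_off_gap` and the sample data are hypothetical, and this is not a description of Onnela's actual analysis.

```python
from datetime import datetime


def longest_screen_off_gap(screen_on_times):
    """Return (start, end) of the longest gap between screen-on events.

    The gap is a rough proxy for a sleep window: someone who wakes
    their screen many times an hour while awake leaves a long silence
    while asleep. Returns None with fewer than two events.
    """
    times = sorted(screen_on_times)
    if len(times) < 2:
        return None
    # Compare consecutive events and keep the pair with the widest gap.
    _, start, end = max((b - a, a, b) for a, b in zip(times, times[1:]))
    return start, end


# Made-up events: last use at 23:10, first use the next day at 06:40.
events = [
    datetime(2017, 1, 9, 22, 50),
    datetime(2017, 1, 9, 23, 10),
    datetime(2017, 1, 10, 6, 40),
    datetime(2017, 1, 10, 7, 0),
]
start, end = longest_screen_off_gap(events)
print((end - start).total_seconds() / 3600, "hours")
```

A gap like this can also mean the phone was left in another room, which is one reason such estimates need validation before they are treated as clinical measurements.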

“In the past a lot of measurement has been confined to labs or doctor’s offices,” says Onnela. “What we are trying to do is to capture symptoms in the wild, the way people actually experience their lives.”
