Supercomputer Simulation Offers Peek at the Future of Quantum Computers

Computer scientists have a name for the point at which quantum computers become more powerful than ordinary computers. They call it “quantum supremacy,” and, by all accounts, that time is rapidly approaching.

The current thinking is that a quantum computer capable of handling 49 qubits will match the capability of the most powerful supercomputer on the planet. And anything bigger than that will be beyond the ken of ordinary computing machines.

That isn’t quite possible yet. But it raises important questions about how we can know whether these quantum computers will work as expected. To find out, computer scientists have begun using powerful classical computers to simulate the behavior of quantum computers.

The idea is to calibrate and benchmark their behavior as accurately as possible, while we still can. After that, we’ll just have to trust the quantum world.

Of course, nobody has yet simulated a 49-qubit quantum computer. But today, Thomas Haner and Damian Steiger from ETH Zurich in Switzerland announce the most ambitious attempt to date.

The pair used the fifth most powerful supercomputer in the world to simulate the behavior of a 45-qubit quantum computer. “To our knowledge, this constitutes a new record in the maximal number of simulated qubits,” say Haner and Steiger. And they show how more powerful simulations ought to be possible.

These simulations are difficult because of the sheer magnitude of the calculations that quantum computers make possible. This great power comes from the quantum phenomenon of superposition, which allows quantum particles, such as photons, to exist in more than one state at the same time.

For example, a horizontally polarized photon can represent a 0 and a vertically polarized photon can represent a 1. But when a photon exists as a superposition of both horizontal and vertical polarizations at the same time, it can represent both a 0 and 1 in a calculation.

In this way, two photons can represent four numbers, three photons can represent eight numbers, and so on. This is where quantum computers get their computational horsepower, and it is why classical computers pale in comparison.

For example, just 50 photons can represent about 1,000,000,000,000,000 numbers (2^50, or roughly 10^15). A classical computer would require petabyte-scale memory to store that many.
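The doubling described above can be made concrete with a short sketch. It assumes the usual state-vector picture, in which a simulator stores one complex amplitude for every combination of 0s and 1s, and it assumes each amplitude takes 16 bytes (a double-precision complex number). The article does not state the storage format, so treat that figure, and the function names, as illustrative.

```python
import math

def uniform_superposition(n_qubits):
    """Amplitudes for n qubits that are each in an equal mix of 0 and 1."""
    dim = 2 ** n_qubits                      # one amplitude per bit pattern
    amplitude = complex(1 / math.sqrt(dim))  # equal weights, total probability 1
    return [amplitude] * dim

def state_vector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory for the full state vector (16 bytes per amplitude is an assumption)."""
    return (2 ** n_qubits) * bytes_per_amplitude

# Small cases: the list doubles in length with every added qubit.
for n in (1, 2, 3):
    print(n, "qubits ->", len(uniform_superposition(n)), "amplitudes")

# Larger cases: compute the memory only, without allocating anything.
PIB = 2 ** 50  # bytes in one pebibyte
for n in (30, 36, 42, 45, 49, 50):
    print(f"{n} qubits: {state_vector_bytes(n) / PIB:.6g} PiB")
```

Under these assumptions, 45 qubits need 0.5 PiB and 50 qubits need 16 PiB, which is consistent with the 0.5 petabytes reported for the 45-qubit run.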

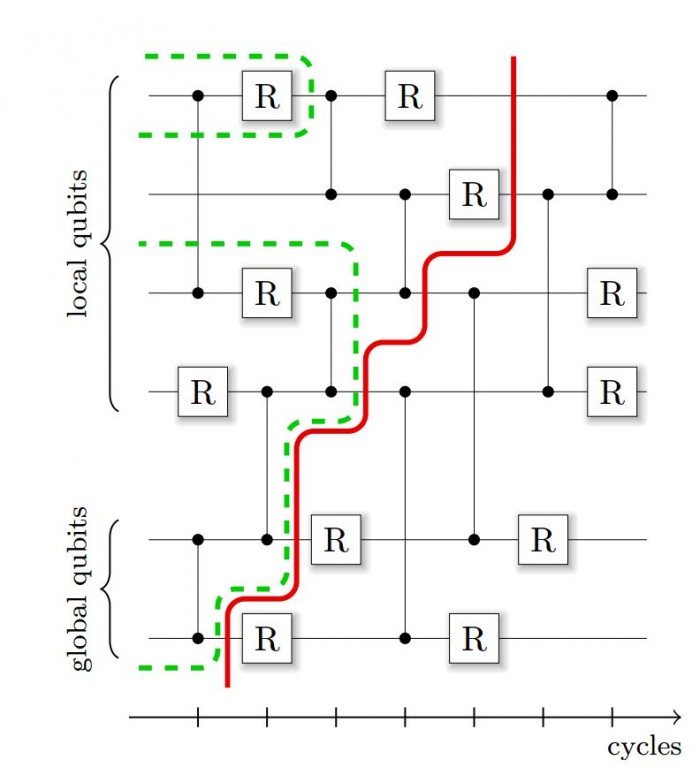

Processing these numbers on a classical computer is an even bigger task. That’s because most supercomputers are made up of many processing units connected in a giant computing network. As a result, managing the dataflow to and from these nodes is a significant communications overhead.
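A toy example shows where that overhead comes from. The sketch below assumes a generic textbook layout in which the list of numbers is cut into equal contiguous chunks, one per node, and the node count is a power of two. It is not necessarily the scheme Haner and Steiger use, and the function names and the 5-qubit, 4-node sizes are hypothetical.

```python
def owner_node(index, n_qubits, n_nodes):
    """Node that stores amplitude `index` under an equal contiguous split."""
    chunk = (2 ** n_qubits) // n_nodes  # amplitudes held by each node
    return index // chunk

def gate_needs_communication(qubit, n_qubits, n_nodes):
    """True if a one-qubit operation on `qubit` pairs amplitudes on different nodes.

    Such an operation combines amplitude i with amplitude i XOR 2**qubit.
    With a power-of-two node count it is enough to check the pair (0, 2**qubit).
    """
    partner = 1 << qubit
    return owner_node(0, n_qubits, n_nodes) != owner_node(partner, n_qubits, n_nodes)

n_qubits, n_nodes = 5, 4  # toy sizes: 32 amplitudes, 8 per node
for q in range(n_qubits):
    if gate_needs_communication(q, n_qubits, n_nodes):
        kind = "network exchange"
    else:
        kind = "node-local"
    print(f"operation on qubit {q}: {kind}")
```

In this layout, operations on the low-numbered qubits stay inside one node's memory, while operations on the highest qubits force nodes to swap data over the network.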

This challenge has limited the size of simulations to well below the quantum-supremacy limit. Until now, the record was a simulation of 42 qubits, run on the Jülich supercomputer in 2010. Little progress has been made since then because of the problems with computational overheads.

That has now changed thanks to the work of Haner and Steiger. Their breakthrough is to find ways of reducing the overhead so that the simulation can run more than an order of magnitude faster than before.

The researchers have applied these improvements to a set of simulations on the Cori II supercomputer at the Lawrence Berkeley National Laboratory in California. This device consists of 9,304 nodes, each containing a 68-core Intel Xeon Phi processor 7250 running at 1.4 gigahertz. This leads to a peak performance of 29.1 petaflops with one petabyte of memory.

Named after Gerty Cori, the first woman to win a Nobel Prize in Physiology or Medicine, the Cori II is the fifth most powerful supercomputer on the planet. So it is not short of computational horsepower.

Haner and Steiger used this device to simulate the way a quantum computer would perform calculations using 30, 36, 42, and 45 qubits. For the biggest simulation, they used 0.5 petabytes of memory and 8,192 nodes, achieving a performance of 0.428 petaflops.

That’s significantly less than the machine is capable of, even with the speedups the team has designed. The team attributes the shortfall to the communication overhead, which still accounts for 75 percent of the running time.
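A back-of-the-envelope calculation puts that gap in perspective. It uses only the figures quoted in this article and assumes, as a simplification, that peak performance scales linearly with the number of nodes in use; the variable names are illustrative.

```python
PEAK_PFLOPS = 29.1       # quoted peak for the full machine
TOTAL_NODES = 9304       # quoted node count
NODES_USED = 8192        # nodes used for the 45-qubit run
ACHIEVED_PFLOPS = 0.428  # quoted sustained performance
COMM_FRACTION = 0.75     # quoted share of time spent communicating

# Assumption: each node contributes an equal share of the peak figure.
peak_for_nodes_used = PEAK_PFLOPS * NODES_USED / TOTAL_NODES
fraction_of_peak = ACHIEVED_PFLOPS / peak_for_nodes_used

# Share of the running time left over for arithmetic.
compute_fraction = 1 - COMM_FRACTION

print(f"peak for {NODES_USED} nodes: {peak_for_nodes_used:.1f} petaflops")
print(f"achieved fraction of that peak: {fraction_of_peak:.1%}")
print(f"time spent computing: {compute_fraction:.0%}")
```

Under that linear-scaling assumption, the 8,192 nodes have a combined peak of about 25.6 petaflops, so the run sustained roughly 1.7 percent of it.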

Haner and Steiger compared the results with simulations of 30- and 36-qubit computers run on a less powerful supercomputer called Edison, also at the Lawrence Berkeley Lab. They found that their approach also sped up these calculations. “This indicates that the obtained speedups were not merely a consequence of a new generation of hardware [for Cori II],” say Haner and Steiger.

They say this improvement suggests that simulation of a 49-qubit computer ought to be possible in the near future.

That’s interesting work that paves the way for future quantum computers. The data from this work will play an important role in ensuring that physicists have confidence in quantum calculations when quantum supremacy is finally achieved. And that day is surely not too far in the future.

Ref: arxiv.org/abs/1704.01127 : 0.5 Petabyte Simulation of a 45-Qubit Quantum Circuit
