For Facebook and Google, the Best Way to Fight Fake News Is You

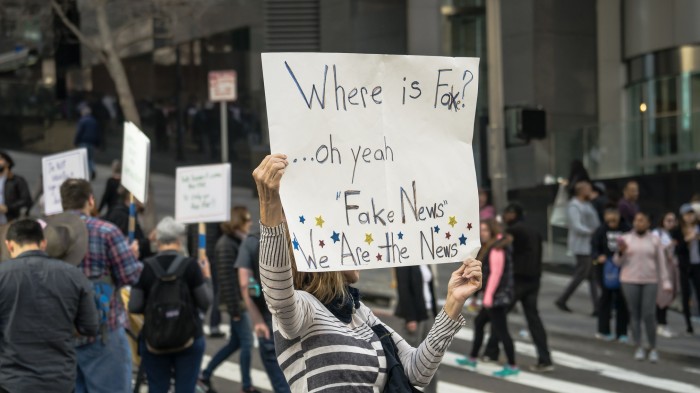

The battle against fake news continues—but new features from Google and Facebook designed to help fight misinformation still firmly distance the companies from the thorny issue of choosing between real and fake content.

In the wake of the 2016 U.S. presidential election, Facebook came under heavy criticism for the proliferation of fake news in its users’ feeds. Despite attempts to fix the problem by using third-party fact-checkers to flag potentially incorrect news, it remains an issue for the social network. More recently, Google also found itself criticized for allowing its search algorithms to happily serve up misinformation.

Today, both companies have rolled out tools that they hope will ease the problem. Google has taken a page out of Facebook’s, er, book, adding a “Fact Check” tag to snippets of articles that appear under the News tab of its search results. Like Facebook, it uses analysis by fact-checking organizations to alert users to content that appears to be inaccurate.

Ultimately, though, the system leaves the user to decide whether to believe the content or not. “These fact checks are not Google’s and are presented so people can make more informed judgments,” explain Google’s Justin Kosslyn and Cong Yu in a blog post describing the feature. “Even though differing conclusions may be presented, we think it’s still helpful for people to understand the degree of consensus around a particular claim and have clear information on which sources agree.”

Meanwhile, Facebook’s new initiative also puts the onus on the user. Today, millions of users’ news feeds in 14 countries, including the U.S. and the U.K., will be splashed with banners that encourage people to learn “how to spot fake news.” The tips, which were developed by the U.K. fact-checking organization Full Fact, include sensible advice, from checking URLs, date stamps, and formatting to questioning headlines and inspecting photographs. Facebook’s effort is only a temporary experiment, though, meant to last just “a few days.” Presumably, if it’s successful, the trial may be widened, though what counts as success here is up for debate.

Indeed, both Google and Facebook are painfully aware that this is an incredibly hard problem to solve. As we’ve pointed out in the past, deciding what’s true and what’s false is rarely straightforward: there are murky lines between disagreeable opinions, bad information, and outright lies. Picking them apart is understandably something that both Internet giants are afraid to do.

For his part, Mark Zuckerberg has stated that the issue is “complex, both technically and philosophically,” and that he wants the social network to “be extremely cautious about becoming arbiters of truth.” For now, then, that role falls to you.

(Read more: Facebook, Google, Full Fact, “Google’s Algorithms May Feed You Fake News and Opinion as Fact,” “Facebook Will Try to Outsource a Fix for Its Fake-News Problem,” “Facebook’s Content Blocking Sends Some Very Mixed Messages”)
