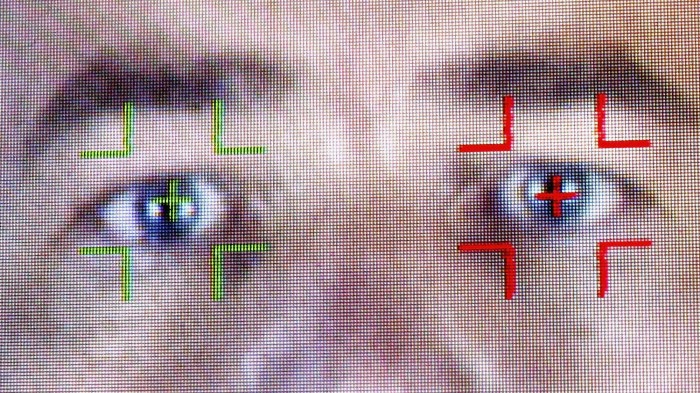

The FBI’s Facial Recognition Program Is Sprawling and Inaccurate

Smile: perhaps you’re being misidentified.

Last year, we learned about the remarkable scale of the FBI’s facial-recognition technology, with its access to nearly 412 million photos—many originating from sources unrelated to crime, such as ID documents. The intelligence agency has been trying to create a system that can accurately identify criminals in, say, CCTV footage—though it wasn't then known how well the bureau's software worked, nor whether it actually improved investigations.

Now, we have at least a little more insight into the program. The Guardian reports that a House oversight committee hearing last week revealed some interesting new details about the proliferation and abilities of the FBI’s facial-recognition systems.

First, there is a very good chance that you, as an American, appear within the database. Among those many millions of photos, it turns out, are the likenesses of around half the country's adult population. That’s possible because images that are derived from sources unrelated to crime account for 80 percent of the database. So when you send off forms and pictures for a passport, driver’s license, or some other official identification, you’re likely helping grow the data set.

Perhaps more worrying is the quality of the FBI’s recognition. The hearing revealed that its software currently misidentifies individuals almost 15 percent of the time. In contrast, market-leading facial recognition technology is now so accurate that it’s used for authorizing payments in China, and Baidu claims to correctly confirm someone's identity 99.77 percent of the time.

In the FBI’s defense, it’s probably working with images of far lower quality, both in its database and in the images that it tries to perform recognition on, than those available to the likes of Baidu. But an error rate of 15 percent is still high, and could produce false leads or unfounded intrusions on privacy.

The software also appears to incorrectly identify black people more frequently than it does white people. Sadly, that effect is predictable: we’ve explained in the past that facial-recognition systems are inherently biased because they’re trained on data sets that underrepresent some demographics.

But that problem can have troubling consequences. As the Guardian notes, black people are more likely to have facial-recognition software used to identify them, so it seems strange—unfair even—to use tools that are clearly ill-equipped to discern their likeness.

These nuggets all add to the privacy concerns that have already plagued the FBI’s initiative to build a system that could accurately identify criminals. The problems, at least, have been revealed—now they just need to be solved.

(Read more: The Guardian, “As It Searches for Suspects, the FBI May Be Looking at You,” “Paying with Your Face,” “Are Face Recognition Systems Accurate? Depends on Your Race.”)
