Machine-Learning Algorithm Predicts Laboratory Earthquakes

Earthquakes take a dreadful human toll. Some 10,000 people die each year in earthquakes and their aftermath, but the toll can be much higher. Over 230,000 people died in the tsunami that followed the magnitude 9 quake off the coast of Sumatra in 2004; more than 200,000 died in Haiti in 2010 after the country was hit by a magnitude 7 quake; and more than 800,000 are thought to have died in a quake in China in 1556.

So a better way—any way—to forecast quakes would be hugely valuable.

Enter Bertrand Rouet-Leduc at Los Alamos National Laboratory in New Mexico and a few pals who have made a remarkable discovery. They’ve trained a machine-learning algorithm to spot the tell-tale signs that a laboratory fault is about to give way, using only the sounds it emits under strain. The team is cautious about the new technique’s utility for real earthquakes, but the work opens up new avenues of research in this area.

First some background. Geologists have long been able to work out the approximate risk of an earthquake. Their approach is to work out when the fault moved in the past and use any periodicity to predict the future.

The most famous example involves the Parkfield segment of the San Andreas Fault in California, one of the most carefully studied faults on the planet. Earthquakes occurred here in 1857, 1881, 1901, 1922, 1934, and 1966, suggesting a pattern in which quakes occur every 22 years give or take a few years. Geologists therefore predicted that a quake would occur between 1988 and 1993, but they had to wait until 2004 for their temblor.
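The arithmetic behind that forecast is easy to check. The following is a minimal sketch that assumes a naive rule (last event plus the mean gap) purely for illustration; the variable names are made up here, and this is not a description of the method the geologists actually used.

```python
from statistics import mean, stdev

# Parkfield earthquake years as listed in the article.
quake_years = [1857, 1881, 1901, 1922, 1934, 1966]

# Gaps between consecutive events, in years.
intervals = [later - earlier for earlier, later in zip(quake_years, quake_years[1:])]

mean_interval = mean(intervals)   # average recurrence time
spread = stdev(intervals)         # sample standard deviation of the gaps

# Naive forecast (an illustrative assumption): last event + mean gap.
naive_next = quake_years[-1] + mean_interval

print("intervals:", intervals)
print("mean gap:", round(mean_interval, 1), "years; spread:", round(spread, 1), "years")
print("naive next quake:", round(naive_next))
```

The mean gap works out to 21.8 years, which is where the 22-year figure comes from, and the naive rule lands on 1988, the start of the predicted window. The spread of roughly 7 years hints at how irregular the sequence really is.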

And that’s about as good as earthquake forecasts have got—in most other places, the error bars are orders of magnitude larger.

Such predictions are useful for enforcing things like building standards in regions known to be earthquake-prone. But they are of little use in preventing deaths when the quakes occur. For that, forecasts over time spans measured in days are needed. There has been little evidence that this type of prediction will ever be possible, even though there is much anecdotal evidence to suggest that animals can somehow sense the imminent onset of a quake.

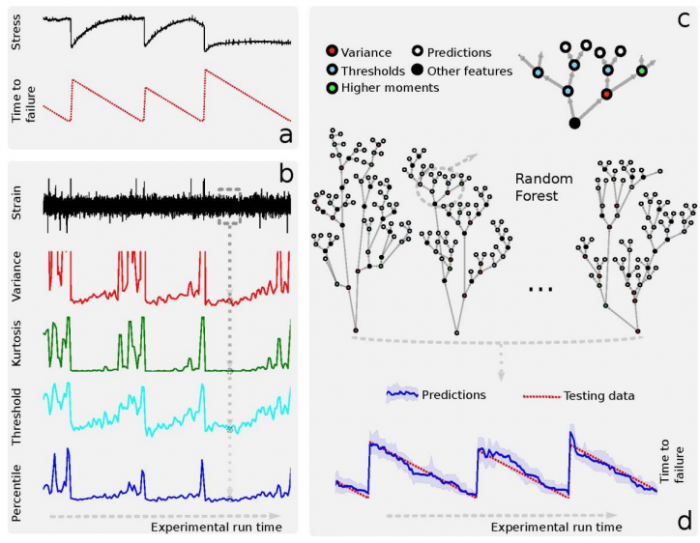

Rouet-Leduc and co’s work may change that. They created artificial earthquakes in their lab by pulling on one block sandwiched between two others. At the interface between the blocks, they packed a mixture of rocky material, called gouge material, to simulate the properties of real faults.

This kind of artificial quake system has been well studied. Geologists know that as a quake approaches, the gouge material begins to fail, emitting groans and cracks as it shears—a kind of seismic chatter. The block then slips quasi-periodically.

This system has some similarities with real earthquakes. For example, the size distribution of the slips is the same as the size distribution of real earthquakes. It generates lots of small slips and just a few large ones, a distribution that follows the well-known Gutenberg-Richter relation, just as real earthquakes do. So geologists are confident that this system mimics at least some of the behaviors seen in the real world.
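For readers unfamiliar with it, the Gutenberg-Richter relation says that log10 N = a - b·M, where N is the number of events of magnitude at least M. With b near 1, each step up in magnitude means roughly ten times fewer events. The sketch below is an illustration only: it draws synthetic magnitudes (not the team's data) from the exponential distribution the relation implies, then recovers b with Aki's maximum-likelihood estimator. The constants `B_TRUE` and `M_MIN` are assumptions chosen for the example.

```python
import math
import random

random.seed(0)

B_TRUE = 1.0   # assumed b-value for the synthetic catalogue
M_MIN = 1.0    # assumed smallest magnitude recorded

# Gutenberg-Richter implies magnitudes above M_MIN are exponentially
# distributed with rate b * ln(10).
rate = B_TRUE * math.log(10)
magnitudes = [M_MIN + random.expovariate(rate) for _ in range(50_000)]

def count_at_least(m):
    """Number of synthetic events with magnitude >= m."""
    return sum(1 for x in magnitudes if x >= m)

# Aki's maximum-likelihood estimate: b = log10(e) / (mean(M) - M_MIN).
mean_mag = sum(magnitudes) / len(magnitudes)
b_estimate = math.log10(math.e) / (mean_mag - M_MIN)

print("events with M >= 2:", count_at_least(2.0))
print("events with M >= 3:", count_at_least(3.0))
print("estimated b:", round(b_estimate, 3))
```

The counts at magnitude 2 and 3 should differ by about a factor of ten, which is the "lots of small slips and just a few large ones" pattern in numerical form.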

The question these guys ask is whether the sound emitted by the fault can be used to predict the time of the next slip. Until now, nobody has spotted a pattern in these sounds that can be used to make such a prediction. But Rouet-Leduc and co have taken a new approach.

They’ve recorded the acoustic emissions from the experiment and fed them into a machine-learning algorithm. The idea was to see if the machine could decipher some pattern that geologists had so far missed. And indeed it did.

The results are something of a surprise. The researchers fed the algorithm a sliding window of acoustic emissions, asking it to predict at each instant how much time remained before the next slip. To their astonishment, the machine gave accurate predictions even when an earthquake was not imminent. “We show that by listening to the acoustic signal emitted by a laboratory fault, machine learning can predict the time remaining before it fails with great accuracy,” they say.
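The sliding-window idea can be sketched in a few lines. Everything here is an assumption made for illustration: the signal is synthetic noise that simply gets louder as failure nears, the only feature is the log of the variance in each window, and a straight-line least-squares fit stands in for the machine-learning algorithm, which the article does not name. It shows the shape of the pipeline, not the team's actual method or data.

```python
import math
import random
from statistics import pvariance

random.seed(1)

CYCLE = 2000   # samples per stick-slip cycle (synthetic assumption)
WINDOW = 100   # samples in each sliding window
STEP = 50      # how far the window moves each time

def synthetic_cycle(n=CYCLE):
    """Noise whose amplitude grows as the slip at the end of the cycle nears."""
    return [random.gauss(0.0, 0.1 + 0.9 * i / n) for i in range(n)]

def windows_with_labels(signal, window=WINDOW, step=STEP):
    """Yield (log-variance feature, samples remaining until failure) pairs."""
    n = len(signal)
    for start in range(0, n - window + 1, step):
        chunk = signal[start:start + window]
        feature = math.log(pvariance(chunk))
        remaining = n - (start + window)  # samples left after the window ends
        yield feature, remaining

def fit_line(pairs):
    """Ordinary least squares for remaining ~ slope * feature + intercept."""
    xs, ys = zip(*pairs)
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

# Train on five synthetic cycles, then test on a fresh one.
train = [pair for _ in range(5) for pair in windows_with_labels(synthetic_cycle())]
slope, intercept = fit_line(train)

test = list(windows_with_labels(synthetic_cycle()))
errors = [abs(slope * f + intercept - r) for f, r in test]
mean_abs_error = sum(errors) / len(errors)

# Baseline: always guess the middle of the cycle, ignoring the sound entirely.
baseline = sum(abs(r - CYCLE / 2) for _, r in test) / len(test)

print("model error:", round(mean_abs_error), "samples; baseline:", round(baseline), "samples")
```

The point of the comparison is that a prediction is made for every window, including those far from failure, and that a feature of the sound alone can beat a guess that ignores the signal.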

The puzzle is how the machine can do this. Rouet-Leduc and co hypothesize that seismic precursors can be much smaller than had previously been thought and so are not usually recorded in the real world. The machine seems to have spotted an entirely new signal that geologists had previously dismissed as noise in the laboratory quakes. “Our machine-learning analysis provides new insight into the slip physics,” they say.

That’s fascinating work that has significant implications. The first and most obvious question it raises is whether the same technique could predict real earthquakes accurately.

Rouet-Leduc and co are cautious in this respect. They point out that the laboratory experiment differs from real quakes in various important ways. The shear stresses are orders of magnitude larger than in real quakes and the temperatures of the rocks involved are different too.

But there are other ways in which the lab quakes are similar to those on Earth. So the team’s next goal is to apply the same kind of analysis to real quakes that most resemble the laboratory ones. One such is Parkfield, which experiences many repeating earthquakes over relatively short periods. “Repeaters at these fault patches may be emitting chattering in analogy to the laboratory,” they suggest.

The big test, of course, will be to actually predict an earthquake accurately. That’s a difficult task that will require careful observation over many years.

In the meantime, the same technique could be applied to predicting earthquake-like failures in other materials, such as turbines in aircraft and power stations.

However the new technique is applied, Rouet-Leduc and co have set the cat among the pigeons in the world of geology. As they conclude: “The stage has been set for potentially marked advances in earthquake science.”

Ref: arxiv.org/abs/1702.05774: Machine Learning Predicts Laboratory Earthquakes
