Single Photon Carries 10 Bits of Information

Single photons are well suited to sending information in digital form, with each photon encoding a 0 or a 1. That makes it easy to imagine that one bit is all the data a single photon can hold. Not so! In theory, there is no limit to the amount of information a single photon can encode.

And that raises an interesting question. How much information can physicists pack into a single photon in practice? What does current technology allow?

Today we get an answer thanks to the work of Tristan Tentrup and pals at the University of Twente in the Netherlands. They have packed more than 10 bits into a single photon for the first time.

Their method is straightforward, in theory. The approach is to associate a single photon with a unique member of an alphabet. When the alphabet contains lots of members, the photon carries lots of information.

It’s not hard to see why. When an alphabet contains only two members, such as binary code, each member encodes one bit of information. This is the amount of information needed to describe each symbol in the alphabet.

But when the alphabet is bigger, it takes more information to uniquely describe each member. So each member can encode that amount of data.

The actual amount of information is given by the logarithm to base 2 of the number of members. For example, in an alphabet of 10 symbols, such as the decimal digits, each symbol encodes about 3.3 bits. In an alphabet of 26 symbols, such as the English alphabet, each symbol encodes about 4.7 bits. And so on.
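The arithmetic is easy to check. Here is a minimal Python sketch, assuming every symbol in the alphabet is equally likely; the function name `bits_per_symbol` is ours, chosen for illustration.

```python
import math


def bits_per_symbol(alphabet_size: int) -> float:
    """Bits carried by one symbol drawn uniformly from an alphabet of this size."""
    if alphabet_size < 1:
        raise ValueError("alphabet_size must be a positive integer")
    return math.log2(alphabet_size)


# The alphabets mentioned in the text.
for name, size in [("binary", 2), ("decimal digits", 10), ("English letters", 26)]:
    print(f"{name}: {size} symbols, {bits_per_symbol(size):.1f} bits each")
```

Doubling the size of the alphabet adds just one bit per symbol, which is why packing many bits into one photon calls for a very large alphabet.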

Tentrup and co achieve their goal by creating an alphabet with 9,072 symbols. In that case, each symbol can in principle encode more than 13 bits of information, since the log to base 2 of 9,072 is about 13.1.

Creating this alphabet is simple. Tentrup and co do it by defining a 112 x 81 grid of pixels—that’s 9,072 of them. Each pixel represents a different symbol of the alphabet. To encode a photon with one of these symbols, all they have to do is point the photon toward that part of the grid. So when a specific pixel registers the arrival of a photon, it registers that symbol.
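The bookkeeping that links symbols to pixels can be sketched in a few lines of Python. The row-major numbering, the choice of 112 as the grid's width and 81 as its height, and the names `symbol_to_pixel` and `pixel_to_symbol` are assumptions made for illustration; the source says only that each of the 9,072 pixels stands for a different symbol.

```python
from typing import Tuple

GRID_WIDTH = 112   # assumed to be the number of pixels per row
GRID_HEIGHT = 81   # assumed to be the number of rows
ALPHABET_SIZE = GRID_WIDTH * GRID_HEIGHT  # 9,072 symbols


def symbol_to_pixel(symbol: int) -> Tuple[int, int]:
    """Return the (x, y) pixel that the sender should steer the photon toward."""
    if not 0 <= symbol < ALPHABET_SIZE:
        raise ValueError("symbol is outside the alphabet")
    y, x = divmod(symbol, GRID_WIDTH)  # row-major numbering (an assumption)
    return x, y


def pixel_to_symbol(x: int, y: int) -> int:
    """Return the symbol the receiver reads when pixel (x, y) registers a photon."""
    if not (0 <= x < GRID_WIDTH and 0 <= y < GRID_HEIGHT):
        raise ValueError("pixel is outside the grid")
    return y * GRID_WIDTH + x


# Every symbol survives the round trip from sender to receiver.
assert all(pixel_to_symbol(*symbol_to_pixel(s)) == s for s in range(ALPHABET_SIZE))
```

Any numbering works equally well, provided the sender and the receiver agree on it in advance.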

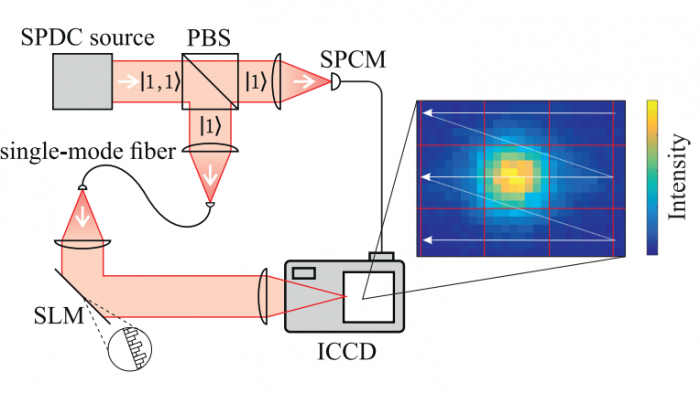

The tricky part is doing this accurately with single photons. One way to steer photons is with a tilting mirror that simply reflects them in a specific, controllable direction. But Tentrup and co use a more flexible device called a spatial light modulator, which modifies a photon’s wavefront as it reflects it. This uses diffraction effects to steer the photon toward its target.

Detecting single photons is also a potential banana skin, since any stray light can overwhelm the signal. Tentrup and co have a handy trick for preventing this. Instead of creating single photons, they create them in pairs and encode just one of them with information using this steering mechanism. They look out for the other as a warning that the first is about to arrive at the pixel.

This allows them to switch on the pixel at the very instant the first photon arrives. And this dramatically reduces the chances of a stray photon swamping the signal. Nevertheless, noise still has an impact and the photons end up carrying slightly less information than the theoretical maximum.
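To get a feel for how noise eats into the total, consider a toy model in Python. It is not the analysis used by the Twente team. It assumes that every symbol is sent equally often, that the photon registers at the intended pixel with probability `p_correct`, and that any error is spread evenly over all the other pixels. Both the model and the name `bits_per_photon` are illustrative assumptions.

```python
import math


def bits_per_photon(alphabet_size: int, p_correct: float) -> float:
    """Mutual information, in bits, of a symmetric channel with uniform input.

    Toy model (an assumption, not the experiment's noise model): the right
    pixel fires with probability p_correct; otherwise one of the remaining
    alphabet_size - 1 pixels fires, each with equal probability.
    """
    n = alphabet_size
    if n < 2:
        raise ValueError("alphabet_size must be at least 2")
    if not 0.0 <= p_correct <= 1.0:
        raise ValueError("p_correct must lie between 0 and 1")

    info = math.log2(n)  # noiseless maximum
    if p_correct > 0.0:
        info += p_correct * math.log2(p_correct)
    p_wrong = 1.0 - p_correct
    if p_wrong > 0.0:
        info += p_wrong * math.log2(p_wrong / (n - 1))
    return info


# With no errors, the full log2(9072) bits get through.
print(round(bits_per_photon(9072, 1.0), 2))
```

In this model the information falls steadily as `p_correct` drops, reaching zero when the detected pixel is no better than a random guess.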

The results are nonetheless impressive. “We demonstrate high-dimensional encoding of single photons reaching 10.5 bit per photon,” say Tentrup and co. That significantly improves on the previous record of just seven bits per photon and immediately suggests ways to encode even more by increasing the size of the grid.

The work has immediate applications. Physicists already use information encoded in single photons for applications such as the distribution of keys in quantum cryptography.

This information is currently encoded in single photons using the binary code of 0s and 1s. But the new technique allows each photon to carry an order of magnitude more. “A very promising direction for this work would then be the implementation of a large-spatial-alphabet encoding for quantum key distribution,” say Tentrup and co.

So we may not have to wait long to see this record-breaking technology in action.

Ref: http://arxiv.org/abs/1609.04200: Transmitting More Than 10 Bit with a Single Photon
