New Self-Driving Car Tells Pedestrians When It’s Safe to Cross the Street

Perhaps the biggest challenge for self-driving cars will not be tracking other vehicles or avoiding unfamiliar obstacles so much as dealing with that most erratic and confusing of phenomena: human behavior.

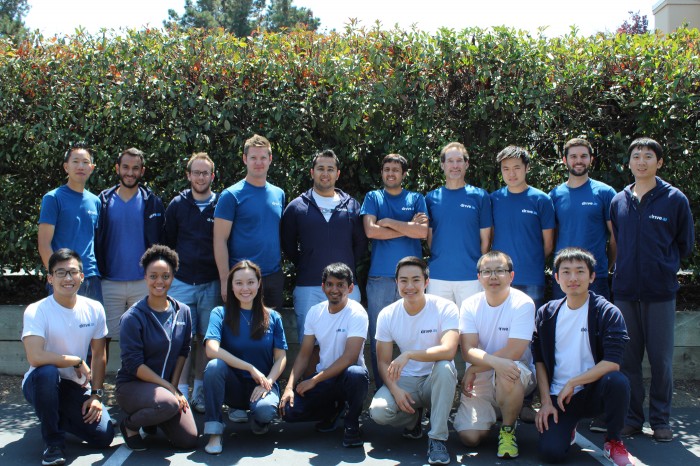

A new automated driving startup, Drive.ai, is making human interaction a key part of its strategy. The company, which includes numerous AI researchers from Stanford University, is developing systems that can be trained to interpret data from sensors and control a vehicle’s behavior. More unusually, it is also exploring ways that vehicles might learn the norms of driver and pedestrian behavior. Within the next few weeks, the company will begin testing automated vehicles in California that are fitted with displays and sound systems designed to communicate with pedestrians.

This effort may turn out to be very important, especially since the first self-driving vehicles may be far from fully autonomous (see "Prepare to be Underwhelmed by 2021's Autonomous Cars").

“Everybody talks about this magical world where all the cars on the road are self-driving,” says Carol Reiley, cofounder and president of Drive.ai. “It’s surprising that the human side has been engineered out of the equation.”

Reiley, a roboticist who studied at Johns Hopkins and previously worked on medical robots, says the company is unique in applying machine learning to both driving and human interaction. She notes that subtle everyday interactions, like apologizing after cutting off another driver or saying thank you after someone lets you in at an intersection, will be completely different with automated driving.

"We fully anticipate people’s behaviors to change around a self-driving car," Reiley says. Self-driving cars may need to behave differently in different locations, she adds, and it will take time to figure out what works best. “But ultimately, we believe that we need to factor in the human element from day one, find out what works, and be responsive,” Reiley says.

The company’s first product will be hardware required to retrofit a vehicle so that it can drive itself. It will be offered to companies that operate fleets of vehicles along specific routes, such as delivery or taxi services. Besides sensors and systems for controlling the car, this will include a roof-mounted communications system and a novel in-car interface.

The company will experiment with using text, sounds, lights, and even motion to communicate with drivers and pedestrians. The display on a vehicle might tell a pedestrian when it’s safe to cross the road, while edging forward could tell another driver to give way. The systems will make use of deep learning, a technique that has proven very powerful for teaching machines how to do tasks that would be difficult to program by hand.

Bryan Reimer, a research scientist at MIT who studies automation and driver behavior, says those developing automated driving systems have paid too little attention to human behavior.

"The sensing and processing difficulties that many of the key technology firms are currently focused on will be solved faster than our ability to devise cohesive human-centered designs," he says. "While we will see many deployments of higher order automated vehicles, I believe our roadways will be dominated with lower-level automated systems for decades to come."
