Facebook Releases Code to Let Computers See More Like Us

To make sense of the visual world, it’s not enough to know that you are looking at, say, a cat. You need to know where the cat stops and the background begins.

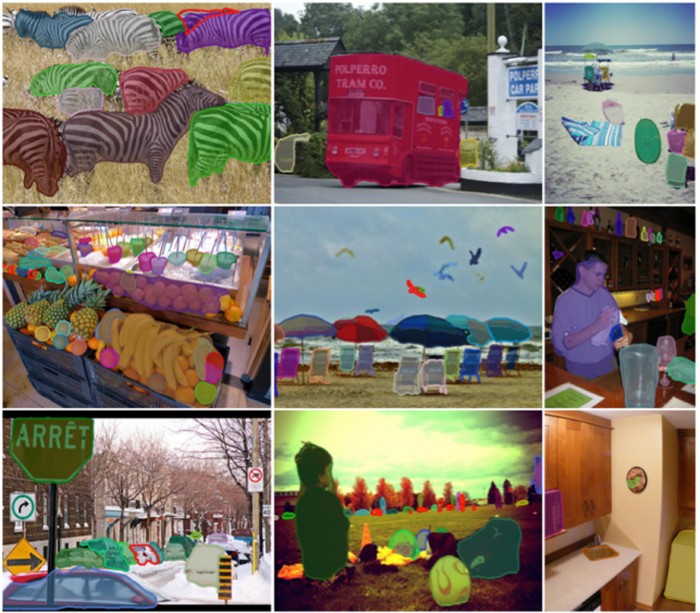

A computer vision algorithm developed by Facebook and made publicly available to other researchers today gives computers this ability. It can identify not only what’s in an image, but also the shapes that correspond to particular objects. That might seem like a simple trick, but it’s devilishly difficult to program a computer to do it correctly, and is beyond the capabilities of existing vision systems.

For now, Facebook’s algorithm is just a research tool. Ultimately, though, it could have a range of important applications: enabling an image-editing program to automatically change the background or brighten the people shown in a picture; providing ways of describing images in detail to blind computer users; even making augmented reality games like Pokémon Go far more realistic by recognizing objects for Pikachu to climb on.

There have been significant advances in computer vision in recent years, but the progress has mainly been in recognizing objects or types of scenes. Researchers have now begun to turn their attention toward deeper image understanding, which is important for making machines more intelligent overall (see “The Next Big Test for AI: Making Sense of the World”).

“One of the hardest things [for computers to do] is to understand reality—what’s actually out there,” says Larry Zitnick, a research manager at Facebook who was involved with the work. “Image segmentation is a critical part of scene reasoning.”

Zitnick says the algorithm might eventually be used to develop a system that automatically highlights the products in an image posted to Facebook, or to create more realistic augmented reality apps. “If you want to put a [virtual] puppy in a room,” he says, “you actually want to put it on a sofa, and on a particular part of that sofa.”

Much progress has been made in computer vision over the past few years using large simulated neural networks trained to categorize images using numerous examples. These “deep learning” systems typically recognize a range of features, such as color and texture, but do not necessarily recognize the outline of an object.

Facebook’s algorithm combines a series of neural networks to perform this sort of “image segmentation.” The first couple of networks are used to determine whether individual pixels are part of one object or another; a third network is then used to determine what those particular objects are.
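
The division of labor described here, first deciding which pixels belong to which object and then deciding what each object is, can be illustrated with a toy sketch. This is not Facebook's code and it uses no neural networks. As loudly labeled assumptions, a simple flood fill stands in for the mask-proposing networks and a lookup table stands in for the classification network. The names `propose_masks`, `classify_mask`, `segment`, and the tiny example image are all hypothetical.

```python
from collections import deque


def propose_masks(image, background=0):
    """Stage 1 stand-in: group non-background pixels into per-object masks.

    A real system would use trained networks here; this toy version uses a
    4-connected flood fill over pixels that share the same value.
    """
    height, width = len(image), len(image[0])
    seen = [[False] * width for _ in range(height)]
    masks = []
    for r in range(height):
        for c in range(width):
            if image[r][c] == background or seen[r][c]:
                continue
            mask = set()
            queue = deque([(r, c)])
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                mask.add((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    inside = 0 <= ny < height and 0 <= nx < width
                    if inside and not seen[ny][nx] and image[ny][nx] == image[y][x]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            masks.append(mask)
    return masks


def classify_mask(image, mask, label_names):
    """Stage 2 stand-in: name the object a mask covers.

    A real system would run a classification network on the masked region;
    this toy version just looks up the pixel value in a table.
    """
    y, x = next(iter(mask))
    return label_names.get(image[y][x], "unknown")


def segment(image, label_names):
    """Run both stages and return a list of (label, mask) pairs."""
    return [(classify_mask(image, m, label_names), m) for m in propose_masks(image)]


# Hypothetical 3x5 "image": 0 is background, 1 and 2 are two different objects.
TOY_IMAGE = [
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 2],
    [0, 0, 0, 0, 2],
]
TOY_LABELS = {1: "cat", 2: "sofa"}

if __name__ == "__main__":
    for label, mask in segment(TOY_IMAGE, TOY_LABELS):
        print(label, sorted(mask))
```

Each result pairs a label with the exact set of pixel coordinates it covers, which is the difference between knowing that a cat is present and knowing where the cat stops and the background begins.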

Stefano Soatto, a professor at UCLA who specializes in computer vision, says the work is “very significant” and could have many applications because image segmentation is deceptively difficult: “Every two-year-old can point to objects and trace their outline in a picture,” Soatto says. “This, however, is deceptive. There are millions of years of evolution and half of the real estate of the brain that goes into accomplishing this feat.”
