Single-Pixel Camera Reaches Milestone, Mimicking Human Vision

Computational imaging is undergoing a revolution. This is the discipline of making images using computational techniques rather than purely optical ones. Its best-known breakthrough is the ability to record high-resolution images and movies using a single pixel. But researchers have also used it to build lensless cameras, 3D imaging systems and more.

Today, they take the technique even further by using it to mimic the way humans see the world. David Phillips at the University of Glasgow and a few pals say they’ve found a way to use a single pixel to create images in which the central area is recorded at high resolution while the periphery is recorded at low resolution. That closely mimics animal vision systems, in which the retina has a central region of high visual acuity, called the fovea, surrounded by an area of lower resolution.

The team have even shown how to move the “foveated” region to follow objects within the field of view. The technique has the potential to change the way many imaging systems work in future.

First, some background. A single-pixel imaging system records the total light from a scene with a single detector. This light has to be randomised in some way, for example by passing it through frosted glass or by reflecting it off a micro-mirror array whose mirrors are set in a random pattern.

It’s easy to think that little can be gained by recording light randomised in this way. The trick, of course, is to take lots of single-pixel measurements, each with a different random pattern. Although each data point looks like a random sample of light, successive data points are correlated because they all come from the same scene.

So the heart of computational imaging is a data-mining algorithm that finds the correlation between the successive measurements and the random patterns that produced them. A bit of number crunching can then recreate the original scene.

It turns out that this is relatively straightforward, provided that the light from the scene is properly randomised each time the pixel records it. The resolution of the final image then depends on the number of data points used to create it.
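To make the correlation idea concrete, here is a minimal Python sketch of the simplest version of the approach, often called correlation or ghost imaging. It is an illustration under stated assumptions, not the Glasgow team's method: the toy 8 x 8 scene, the random on/off mirror patterns, the number of measurements and all function names are hypothetical. Each "measurement" is just the total light the mask lets through, and the image is recovered by correlating each reading's deviation from the average with the pattern that produced it.

```python
import random

SIZE = 8           # hypothetical 8 x 8 scene, stored as a flat list of 64 values
N_PATTERNS = 4000  # number of single-pixel measurements (assumed)

def make_scene():
    # Toy scene: a bright 4 x 4 square on a dark background
    return [1.0 if 2 <= r < 6 and 2 <= c < 6 else 0.0
            for r in range(SIZE) for c in range(SIZE)]

def random_pattern(rng, n):
    # Each micro-mirror is randomly "on" (1) or "off" (0)
    return [rng.getrandbits(1) for _ in range(n)]

def measure(scene, pattern):
    # The single-pixel detector records only the total light the mask lets through
    return sum(s * p for s, p in zip(scene, pattern))

def reconstruct(patterns, measurements):
    # Correlate each reading's deviation from the mean with the pattern that produced it
    n = len(patterns[0])
    mean_y = sum(measurements) / len(measurements)
    image = [0.0] * n
    for pattern, y in zip(patterns, measurements):
        dy = y - mean_y
        for i, p in enumerate(pattern):
            image[i] += dy * p
    return [v / len(measurements) for v in image]

rng = random.Random(0)  # fixed seed so the run is repeatable
scene = make_scene()
patterns = [random_pattern(rng, SIZE * SIZE) for _ in range(N_PATTERNS)]
measurements = [measure(scene, p) for p in patterns]
estimate = reconstruct(patterns, measurements)

# Print the recovered image as a coarse text map
for r in range(SIZE):
    print("".join("#" if estimate[r * SIZE + c] > 0.125 else "."
                  for c in range(SIZE)))
```

Because each mirror is on half the time, each pixel of the estimate tends towards about a quarter of the true brightness (the variance of a mirror's on/off value), so the bright square should stand out as a block of `#` characters. Fewer measurements give a noisier picture, which is one way to see why the number of data points limits the quality of the final image.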

In other words, each data point can be thought of as recording a pixel in the final image. It is this idea that allows Phillips and co to vary the resolution throughout an image.

These guys use a digital micro-mirror array to randomise the light from a scene before it reaches their single-pixel light detector. But they can also control the resolution of the randomisation across this array. So they can use high-resolution randomisation in some parts of the scene to increase the resolution of the final image there. This is the “foveated image”.

Their micro-mirror array can display some 10,000 randomised patterns per second, which allows them to generate 32 x 32 pixel images at a rate of about 10 per second.
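The link between pattern rate, resolution and frame rate is simple arithmetic if we assume, as the description above suggests, that each image needs roughly one pattern per pixel. The short Python sketch below makes that assumption explicit; the function name and the `patterns_per_pixel` knob are hypothetical, added only to show how a scheme using more patterns per pixel would slow things down.

```python
PATTERN_RATE = 10_000  # patterns per second, the figure quoted for the mirror array

def frame_rate(width, height, patterns_per_pixel=1):
    # Assumes one pattern per reconstructed pixel by default; schemes that
    # use more patterns per pixel lower the frame rate proportionally
    return PATTERN_RATE / (width * height * patterns_per_pixel)

for side in (16, 32, 64, 128):
    print(f"{side} x {side}: {frame_rate(side, side):.1f} frames per second")
```

For 32 x 32 images this comes to 10,000 / 1,024, or about 9.8 frames per second, in line with the roughly 10 per second quoted. Doubling the side length quadruples the number of pixels and so quarters the frame rate, which is the trade-off a foveated layout tries to soften.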

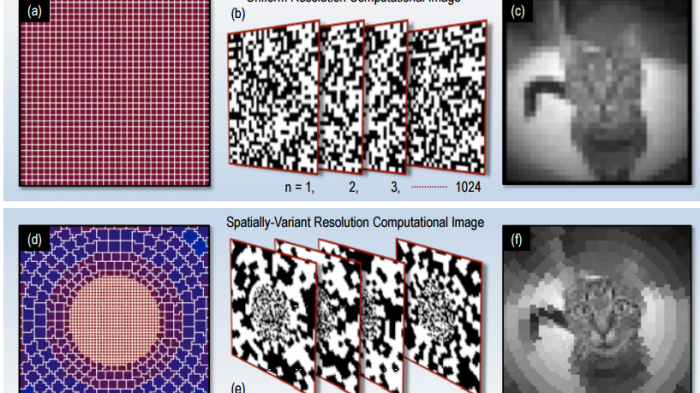

To start with, the pixels in each 32 x 32 image are square and equal in size. But a foveated image has smaller, more densely packed pixels in the centre and larger pixels in the periphery.

Phillips and co achieve this by randomising the light from the scene with higher resolution in the centre of the image.
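One way to picture a foveated layout is as a map from each micro-mirror to the image pixel, or cell, it belongs to. The Python sketch below is a simplified, hypothetical version: a square fovea of single-mirror cells surrounded by uniform 2 x 2 blocks. The grid size, the two-level layout and the function names are assumptions for illustration, not the team's actual design, which the article does not spell out. Random patterns are then drawn per cell, so the randomisation is fine in the fovea and coarse in the periphery.

```python
import random

def foveated_cells(grid, fovea_row, fovea_col, fovea_size, coarse=2):
    """Label each mirror on a grid x grid array with a cell id: 1 x 1 cells
    inside the square fovea, coarse x coarse blocks everywhere else."""
    if grid % coarse:
        raise ValueError("grid must be a multiple of the coarse block size")
    if not (0 <= fovea_row <= grid - fovea_size and 0 <= fovea_col <= grid - fovea_size):
        raise ValueError("fovea must lie inside the grid")
    labels = [[-1] * grid for _ in range(grid)]
    next_id = 0
    # Fine cells: every mirror in the fovea is its own pixel
    for r in range(fovea_row, fovea_row + fovea_size):
        for c in range(fovea_col, fovea_col + fovea_size):
            labels[r][c] = next_id
            next_id += 1
    # Coarse cells: group the remaining mirrors into blocks
    for r0 in range(0, grid, coarse):
        for c0 in range(0, grid, coarse):
            block = [(r, c) for r in range(r0, r0 + coarse)
                     for c in range(c0, c0 + coarse) if labels[r][c] == -1]
            if block:
                for r, c in block:
                    labels[r][c] = next_id
                next_id += 1
    return labels, next_id

def cell_pattern(labels, n_cells, rng):
    # One random on/off value per cell; all mirrors in a cell share it,
    # so the pattern is fine in the fovea and coarse in the periphery
    values = [rng.getrandbits(1) for _ in range(n_cells)]
    return [[values[cell] for cell in row] for row in labels]

labels, n_cells = foveated_cells(grid=64, fovea_row=24, fovea_col=24, fovea_size=16)
pattern = cell_pattern(labels, n_cells, random.Random(1))
print(n_cells, "cells versus", 64 * 64, "for a uniform 64 x 64 image")
```

With a 64 x 64 mirror grid, a 16 x 16 fovea in the centre and 2 x 2 blocks elsewhere, this gives 256 + 960 = 1,216 cells instead of 4,096. Each frame therefore needs far fewer patterns, while the centre keeps the full resolution of the mirror array.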

And the results are impressive. The team show how the resulting images clearly have a higher resolution at the centre. “We have demonstrated that the data gathering capacity of a single-pixel computational imaging system can be enhanced by mimicking the adaptive foveated vision that is widespread in the animal kingdom,” they say.

But they also show how to move the fovea to track objects of interest from one image to the next. They even show how to place two foveae in a single image to track two different objects, taking the technique beyond the capability of the animal world. And they demonstrate the technique with both visible and infrared light.
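The article does not say exactly how the team decides where to move the fovea. A simple rule one could use, sketched below in Python purely as an assumption, is to compare consecutive low-resolution frames, find where the brightness changed most, and recentre the fovea there. The function names, the 32 x 32 frame size and the 64 x 64 mirror grid are hypothetical; the fovea position is kept inside the grid and snapped to the 2 x 2 block boundaries so the coarse periphery still tiles neatly.

```python
def locate_motion(prev_frame, frame):
    # Return (row, col) of the pixel whose brightness changed most between frames
    best, best_rc = -1.0, (0, 0)
    for r, (row_a, row_b) in enumerate(zip(prev_frame, frame)):
        for c, (a, b) in enumerate(zip(row_a, row_b)):
            change = abs(b - a)
            if change > best:
                best, best_rc = change, (r, c)
    return best_rc

def place_fovea(center, grid, fovea_size, coarse=2):
    # Centre a square fovea on the target, keeping it inside the grid and
    # aligned to the coarse blocks so the periphery still tiles cleanly
    def start(v):
        s = max(0, min(v - fovea_size // 2, grid - fovea_size))
        return s - s % coarse
    row, col = center
    return start(row), start(col)

# Two hypothetical 32 x 32 low-resolution frames: something appears near the top right
prev = [[0.0] * 32 for _ in range(32)]
curr = [row[:] for row in prev]
curr[5][27] = 1.0
r, c = locate_motion(prev, curr)
# Each low-resolution pixel covers 2 x 2 mirrors on a 64 x 64 grid
print(place_fovea((r * 2, c * 2), grid=64, fovea_size=16))  # prints (2, 46)
```

Tracking two objects, as the team did, would presumably mean picking two well-separated peaks of change rather than one and giving each its own fovea; that extension is left out here to keep the sketch short.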

That’s interesting work with some important potential applications. The most obvious is in imaging systems for which pixel arrays are not practical. For example, single-pixel detectors are available at terahertz frequencies but pixel arrays are not.

But the technique is more generally applicable. In all imaging systems there is a trade-off between resolution and frame rate. This technique allows that trade-off to be optimised on the fly and lets attention focus on the parts of an image that are of greatest interest.

That could become much more powerful when combined with other machine vision techniques. Algorithms have already begun to outperform humans in tasks such as face and object recognition.

Humans and animals have long outperformed machines in vision tasks. But with techniques like this, that mastery will not last much longer.

Ref: arxiv.org/abs/1607.08236 : Adaptive Foveated Single-Pixel Imaging With Dynamic Super-Sampling
