Data Mining Reveals the Crucial Factors That Determine When People Make Blunders

The way people make decisions in the real world is a topic of increasing interest among psychologists, social scientists, economists, and others. It determines how economies perform, how elections are run, and how conflicts break out and get resolved.

One idea has provided a focal point for decision-making research. This is the notion of bounded rationality—that people are limited by various constraints in the real world, and these play a crucial role in the decision-making process. People are limited by the difficulty of the decision they have to make, their own decision-making skill, and the time they can spend on the problem. Nevertheless, whatever the circumstances, a decision has to be made and the consequences accepted.

That raises an important set of questions. How do these factors influence the quality of the decision being made? Does time pressure have a bigger impact than, say, decision-making skill on the quality of a decision?

These are hard questions to answer, given the difficulty of setting up a controlled experiment to test them. Indeed, nobody has found a satisfactory way of studying the problem.

Until now. Today, Ashton Anderson at Microsoft Research in New York City, Jon Kleinberg at Cornell University in Ithaca, and Sendhil Mullainathan at Harvard University in Cambridge unveil the first large-scale study of decision making under controlled conditions. For the first time, these guys have been able to study how the quality of decision making changes with the time available, the skill of the decision maker, and the difficulty of the decision at hand.

Their laboratory? The game of chess. “We have used chess as a model system to investigate the types of features that help in analyzing and predicting error in human decision-making,” they say.

Their research focuses on a database of 200 million chess games played online between amateurs and another database of around one million games played between grandmasters. What’s interesting about these databases is that the outcome of the game reveals whether a player has made a mistake. And the recorded moves reveal exactly when the losing player makes the blunder.

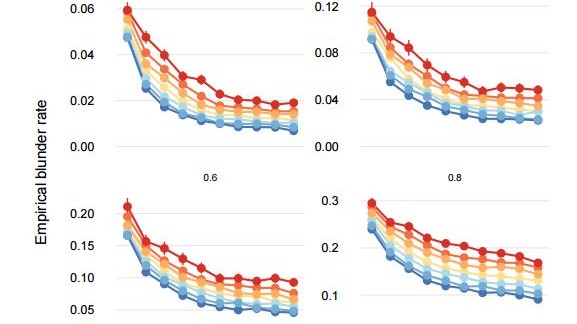

The team are then able to see what factors have played a role. They can see whether the player was under time pressure, for example. They can see the difficulty of the decision by examining the position on the board and its complexity. They do this by totting up all possible moves and then working out what fraction of them are blunders. So a position in which all moves except one are blunders is more difficult than a position in which only one move out of many is a blunder.
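A minimal sketch of that difficulty measure follows. It assumes each legal move in a position has already been labeled as a blunder or not; how that labeling is done is not covered here, and the function name `blunder_fraction` is hypothetical rather than taken from the paper.

```python
from fractions import Fraction


def blunder_fraction(move_is_blunder):
    """Return the share of legal moves in a position that are blunders.

    `move_is_blunder` is an iterable of booleans, one per legal move.
    The labels are assumed to be given; this sketch does not compute them.
    """
    moves = list(move_is_blunder)
    if not moves:
        raise ValueError("position has no legal moves")
    # True counts as 1 and False as 0, so sum() counts the blunders.
    return Fraction(sum(moves), len(moves))


# Ten legal moves, nine of them blunders: a difficult position.
hard_position = [True] * 9 + [False]
# Ten legal moves, only one of them a blunder: an easy position.
easy_position = [False] * 9 + [True]

print(blunder_fraction(hard_position))  # 9/10
print(blunder_fraction(easy_position))  # 1/10
```

The higher the fraction, the fewer safe moves the player has to choose from.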

The team also know the skill level of the players. The skill level of every chess player is given by a number called the Elo rating (after Arpad Elo, who came up with it). Most amateurs have a rating between 1000 and 2000, strong amateurs get up to 2400, and the world’s top players have ratings of around 2600. There are generally just a handful of players at any time with a rating over 2800. A difference of 400 points between players suggests that the higher-rated player is overwhelmingly likely to win.
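To put a number on "overwhelmingly likely," here is a short sketch using the standard logistic form of the Elo expected-score formula. The formula is not quoted in this article and is supplied as background; the expected score counts a win as 1 and a draw as 0.5, so it is not strictly a win probability.

```python
def expected_score(rating_a, rating_b):
    """Expected score of player A against player B under the Elo model.

    Standard logistic form: 1 / (1 + 10 ** ((rating_b - rating_a) / 400)).
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# A 400-point gap, for example a 2400-rated player facing a 2000-rated one.
print(round(expected_score(2400, 2000), 3))  # 0.909
# Evenly matched players each expect half a point.
print(expected_score(2000, 2000))  # 0.5
```

Under this model, a 400-point advantage corresponds to an expected score of about 0.91 per game.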

And the huge size of the database allows them to slice and dice the data in a way that holds two of these variables constant while allowing the other to vary. For example, the team can examine board positions of the same difficulty while players are under the same time pressure to see how any variation in their skill level influences the quality of the decisions they make. Equally, the researchers can hold skill and time pressure constant while allowing board position to vary; and so on.
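The idea of holding two variables fixed can be sketched as a simple filter-and-group step. This is an illustration under stated assumptions, not the team's actual pipeline: the record layout, the bin labels, and the function name `blunder_rate_by_skill` are hypothetical, and the sample records are invented purely to show the mechanics.

```python
from collections import defaultdict


def blunder_rate_by_skill(records, difficulty_bin, time_bin):
    """Blunder rate per skill bin, with difficulty and time held constant.

    Each record is a tuple (skill_bin, difficulty_bin, time_bin, blundered).
    Only records matching the requested difficulty and time bins are used.
    """
    counts = defaultdict(lambda: [0, 0])  # skill_bin -> [blunders, decisions]
    for skill, difficulty, time_left, blundered in records:
        if difficulty == difficulty_bin and time_left == time_bin:
            counts[skill][0] += int(blundered)
            counts[skill][1] += 1
    return {skill: b / n for skill, (b, n) in sorted(counts.items())}


# Invented toy records, for illustration only (not data from the study).
toy_records = [
    (1200, "hard", "low", True),
    (1200, "hard", "low", True),
    (1200, "hard", "low", False),
    (2000, "hard", "low", True),
    (2000, "hard", "low", False),
    (2000, "easy", "low", False),  # excluded: different difficulty bin
]

rates = blunder_rate_by_skill(toy_records, "hard", "low")
for skill, rate in rates.items():
    print(skill, rate)
```

Swapping which two fields are filtered and which one is grouped gives the other comparisons described above. With a very large database, each filtered slice still contains enough decisions to compare.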

The results make for interesting reading. They find, for example, that the amount of time spent on a decision is a factor in blundering, but only up to a point. Quick decisions are more likely to lead to a blunder, but after about 10 seconds or so the likelihood of a blunder flattens out. So when players spend more time than this on a move, it is probably because they don’t know what to do.

The difficulty of the decision is an important factor, too. More difficult positions are more likely to lead to a blunder. And skill levels have a big impact in reducing the likelihood of a blunder. In general, better players make better decisions.

But Anderson and co have also found evidence of an entirely counterintuitive phenomenon: in certain positions, skill plays the opposite role, so that skillful players are more likely to make an error than their lower-rated counterparts. The team call these “skill-anomalous positions.”

That’s an extraordinary discovery which will need some teasing apart in future work. “The existence of skill-anomalous positions is surprising, since there is no a priori reason to believe that chess as a domain should contain common situations in which stronger players make more errors than weaker players,” say Anderson and co. Just why this happens isn’t clear.

These results have an important application. They allow the team to predict when a player is most likely to make a mistake. And it turns out that one of the factors is a much more powerful predictor than the others.

The bottom line is that the difficulty of the decision is the most important factor in determining whether a player makes a mistake. In other words, examining the complexity of the board position is a much better predictor of whether a player is likely to blunder than his or her skill level or the amount of time left in the game.

That could have important implications for the way researchers examine other decisions. For example, how does the error rate of highly skilled drivers in difficult conditions compare with that of bad drivers in safe conditions? If the difficulty of the decision is the crucial factor, rather than driver skill, then much more emphasis needs to be placed on this. “We think of inexperienced and distracted drivers as a major source of risk, but how do these effects compare to the presence of dangerous road conditions?” ask Anderson and co.

And given the team’s discovery of skill-anomalous positions, are there road conditions that make skillful drivers more likely to make a mistake than less skillful ones?

This kind of work will have big implications beyond driving. Economists might well ask what all this means for buying decisions, election officials will ask about the complexity of information related to voting decisions, and negotiators will think about its impact on resolving conflict.

Fascinating work and plenty of food for thought.

Ref: arxiv.org/abs/1606.04956 : Assessing Human Error Against a Benchmark of Perfection
