How DARPA Took On the Twitter Bot Menace with One Hand Behind Its Back

One of the more disturbing phenomena on Twitter is the proliferation of bots that generate tweets automatically in an attempt to spread spam, to make money illicitly through click fraud, and, most worryingly, to influence the discussion on topics such as terrorism and politics.

The number of Twitter accounts involved in this kind of activity isn’t small. In 2014, Twitter admitted that more than 8 percent of its accounts were automated—that’s some 23 million active Twitter users.

The company pointed out that many of these were perfectly legitimate, openly reposting or displaying tweets from other users. Nevertheless, a significant number are clearly up to no good, and the “influence bots” are a particular concern.

For instance, the group calling itself Islamic State uses online social media to persuade young people to embrace its cause. Some observers believe Russia embarked on a major social media disinformation campaign around the annexation of Crimea. Others say bots played a significant role in influencing the outcome of elections in India in 2014.

So a way of reliably spotting influence bots on Twitter would be hugely useful. Last year, the Defense Advanced Research Projects Agency (DARPA) set out to find such a method by running a four-week competition in which teams were asked to spot bots in a stream of posts on the topic of vaccinations. One team emerged as a clear winner, and the results demonstrated some significant new strategies for identifying bots in the real world.

Today we get a unique insight into this competition and the strategies the teams employed thanks to a paper by V.S. Subrahmanian at the University of Maryland in College Park and Sentimetrix and a few pals.

The competition was about as realistic as DARPA could make it. The tweets were messages harvested from the Twitter stream during a 2014 debate on vaccinations. In this debate, a number of bots had been created as part of a competition to see how they could influence the discussions. So DARPA had ground truth knowledge of which accounts were artificial and which were real.

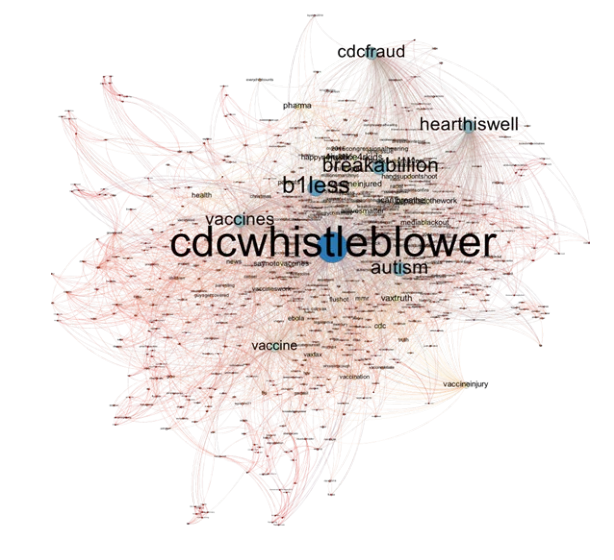

In total, the dataset contained over four million messages from more than 7,000 accounts, of which 39 were bots in either the pro- or anti-vaccination lobbies. Each message contained a unique ID, a user profile including an image, a URL, and a picture, where these were included. The data also included a time and date stamp as well as information about followers and when one account unfollowed another. All this was played to the competitors in a synthetic Twitter environment over four weeks in February and March.

The teams then had to analyze this Twitter stream and guess which users were bots. Each correct guess got them a single point but a team lost 0.25 points for each incorrect guess. A team that guessed all the bots d days before the end of the challenge also got d points, since DARPA is particularly interested in the early detection of influence bots.

The winning team was from the social media analytics company Sentimetrix, which guessed all the bots 12 days ahead of the deadline while making only one incorrect guess. That gave them a score of 50.75 points. (The second-place team, from the University of Southern California, scored 45 points, finding all the bots six days ahead of the deadline with no incorrect guesses.)
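The scoring rule can be written out as a short function to check the arithmetic. This is a minimal sketch: the function and parameter names are illustrative rather than taken from the paper, and it assumes the early bonus applies only once every bot has been found, as the article describes.

```python
def challenge_score(correct, incorrect, days_early=0, found_all=False):
    """Score a team under the rules as described in this article.

    +1 per correct guess, -0.25 per incorrect guess, and a bonus of
    `days_early` points if all bots were found before the deadline.
    """
    score = correct * 1.0 - incorrect * 0.25
    if found_all:
        score += days_early
    return score


# The two results reported above (39 bots in the dataset).
sentimetrix = challenge_score(correct=39, incorrect=1, days_early=12, found_all=True)
usc = challenge_score(correct=39, incorrect=0, days_early=6, found_all=True)
print(sentimetrix, usc)  # 50.75 45.0
```

Both reported totals follow from the rule: 39 - 0.25 + 12 = 50.75 and 39 + 6 = 45.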

The winning strategies are revealing. The teams began by attempting to identify an initial set of bots in the data. Interestingly, none of the teams were able to automate this step and most used significant human input.

Sentimetrix used a pretrained algorithm to search for bot-like behavior. The team had trained this algorithm on Twitter data from the 2014 Indian election, which featured many bots. It looked for unusual grammar, linguistic similarity to natural-language chatbots such as Eliza, and unusual behaviors such as extended periods of tweeting without a break that a human could not easily perform.
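One of these signals, tweeting for long stretches without a break, is easy to illustrate. The sketch below is not Sentimetrix's algorithm; the function name, the 30-minute gap, and the 24-hour threshold are all assumptions chosen for illustration.

```python
from datetime import datetime, timedelta


def longest_active_stretch(timestamps, max_gap=timedelta(minutes=30)):
    """Return the longest span in which consecutive tweets are
    never more than `max_gap` apart (hypothetical heuristic)."""
    times = sorted(timestamps)
    if not times:
        return timedelta(0)
    longest = timedelta(0)
    start = times[0]
    for prev, cur in zip(times, times[1:]):
        if cur - prev > max_gap:
            # A real break: close the current stretch and start a new one.
            longest = max(longest, prev - start)
            start = cur
    return max(longest, times[-1] - start)


t0 = datetime(2015, 2, 1, 9, 0)
# An account posting every 10 minutes for 30 hours straight.
bot_like = [t0 + timedelta(minutes=10 * i) for i in range(181)]
# An account posting twice in the morning and once in the evening.
human_like = [t0, t0 + timedelta(minutes=10), t0 + timedelta(hours=9)]

suspicious = longest_active_stretch(bot_like) > timedelta(hours=24)
ordinary = longest_active_stretch(human_like) > timedelta(hours=24)
```

Here `suspicious` is true and `ordinary` is false. A real detector would combine many such signals rather than rely on one threshold.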

This revealed four accounts that were clearly bots, and Sentimetrix then used these to find others. One assumption was that bot-makers tend to produce many similar bots and link them to each other to inflate their popularity. So the team was able to use network and cluster analysis to find other likely bots, which they then compared to known bots.
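The idea of expanding outward from a few known bots can be sketched with a simple link count. This is a deliberately crude stand-in for the network and cluster analysis the team used, whose details are not given here; the edge format, the function name, and the `min_links` threshold are assumptions.

```python
def candidates_near_seeds(edges, seeds, min_links=2):
    """Return accounts linked (in either direction) to at least
    `min_links` known bots, most-connected first.

    edges: iterable of (follower, followed) pairs (hypothetical format).
    seeds: accounts already identified as bots.
    """
    seeds = set(seeds)
    links = {}  # candidate account -> set of seed bots it is linked to
    for a, b in edges:
        if a in seeds and b not in seeds:
            links.setdefault(b, set()).add(a)
        elif b in seeds and a not in seeds:
            links.setdefault(a, set()).add(b)
    hits = [acct for acct, linked in links.items() if len(linked) >= min_links]
    return sorted(hits, key=lambda acct: (-len(links[acct]), acct))


edges = [
    ("bot1", "x"), ("x", "bot2"),   # x is tied to both known bots
    ("bot1", "y"),                  # y is tied to only one
    ("z", "w"),                     # unrelated accounts
    ("bot1", "bot2"),               # seed-to-seed links are ignored
]
shortlist = candidates_near_seeds(edges, seeds={"bot1", "bot2"})
print(shortlist)  # ['x']
```

Accounts on the shortlist are only candidates. In the approach described above, they were then compared with known bots before being reported.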

The team also used features such as the temporal activity of the accounts on the assumption that an automated account would show unusual regularities. Sentimetrix also looked for users who changed allegiance during the debate from pro- to anti-vaccination (or vice versa). This they assumed could be a bot strategy for infiltrating one side of the argument and then posting opposing arguments.
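Temporal regularity can be quantified in many ways. One simple option, shown here as an assumption rather than as the feature Sentimetrix used, is the coefficient of variation of the gaps between tweets: values near zero mean the account posts on an almost fixed schedule.

```python
from statistics import mean, pstdev


def gap_regularity(timestamps_s):
    """Coefficient of variation of inter-tweet gaps.

    timestamps_s: tweet times in seconds (hypothetical input format).
    Returns None if there are too few tweets to judge.
    """
    times = sorted(timestamps_s)
    gaps = [b - a for a, b in zip(times, times[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None
    return pstdev(gaps) / mean(gaps)


scheduled = gap_regularity([0, 600, 1200, 1800, 2400])  # every 10 minutes
bursty = gap_regularity([0, 60, 4000, 4100, 20000])     # irregular posting
```

With this measure, `scheduled` is 0.0 while `bursty` is well above 1. Any cutoff between the two would need tuning on labeled data.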

A key feature in Sentimetrix’s success was the way it visualized the results of its work on an online dashboard so that a human user could easily see the status of analysis for each user.

In this second stage, Sentimetrix identified another 25 bots. That gave them enough data to train a machine learning algorithm to hunt through the data for other bots. And this approach led them to the remaining 10 bots.

The teams did not know how many bots were at work so a major problem was to know when to stop searching. Sentimetrix, for example, stopped when it could no longer find accounts that looked like bots.

That’s impressive work that could have an important influence on efforts to find bots that are attempting to influence online discussions in inappropriate ways. Publishing the strategies like this should help other players develop anti-bot tactics, too.

But it could also have a negative impact. The battle between bots and bot-hunters is one that is constantly evolving. With papers like this, the bot-hunters are revealing their hand in a way that allows bot-makers to design strategies to specifically defeat these algorithms. In a way, it is like fighting with one hand tied behind your back.

Nevertheless, the temptation to keep bot-hunting strategies secret would be a dangerous one to promote. This kind of openness is part of our free society and surely one of the key reasons it is worth fighting to preserve.

Either way, this cat-and-mouse battle is set to continue.

Ref: The DARPA Twitter Bot Challenge: arxiv.org/abs/1601.05140
