How One Intelligent Machine Learned to Recognize Human Emotions

When it comes to communication, humans are hugely sensitive to each other’s emotional states. Indeed, most people expect their emotional state to be taken into account by their correspondents. And when this happens, communication tends to be more effective.

So if computers are ever to interact effectively with humans, they will need some way of repeating this trick and assessing the emotional state of their interlocutors. Understanding whether an individual has a positive or negative state of mind could make a huge difference to the quality of response that a computer might give.

But how to do this? One way of assessing a person’s state of mind is to analyze the electrical signals produced by the brain using an EEG machine. This can reliably reveal various aspects of brain state, such as the level of concentration or focus.

However, the emotional states of a brain are complex, and much previous work has noted that the brain waves associated with specific emotions seem to change over time. Consequently, nobody has found a way to identify them clearly and reliably using brain waves.

Today, that changes thanks to the work of Wei-Long Zheng and pals at Shanghai Jiao Tong University. These guys have found a way to identify emotional brain states and to do so reliably and repeatedly. They put the technique through its paces by identifying emotional states in the same subjects week after week by looking only at their brain waves.

Wei-Long and company began by creating a database to study. For this they asked 15 students to watch 15 film clips that were each associated with positive, negative, or neutral emotions.

During each viewing, the team recorded the subject’s face as well as the electrical signals from 62 electrodes attached to the subject’s head. The subjects were then asked whether the film triggered a positive, negative, or neutral response and to rate their emotional arousal levels on a scale of 1 to 5. Crucially, the team repeated the experiment on the same subjects over a period of weeks.

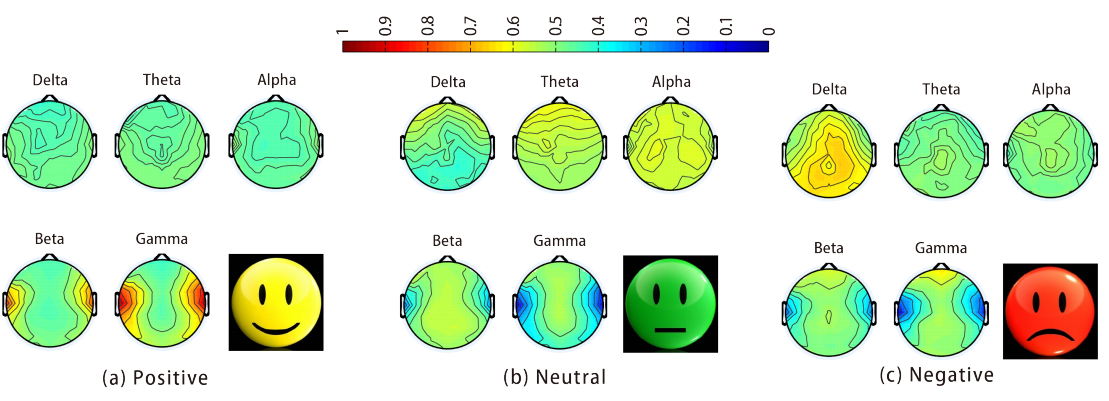

Wei-Long and company then used a machine learning algorithm to analyze the data set, looking for common features in the brain waves of people in the same emotional states.

Sure enough, the algorithm found a set of patterns that clearly distinguished positive, negative, and neutral emotions. These patterns held for different subjects and for the same subjects over time, with an accuracy of about 80 percent. “The performance of our emotion recognition system shows that the neural patterns are relatively stable within and between sessions,” they say.
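To make the idea of a cross-session test concrete, here is a minimal sketch in Python. It is not the team's method: the article does not name the features or the algorithm they used, so this swaps in a simple nearest-centroid classifier. The data is synthetic, the split of five clips per emotion is an assumption, and every function name is hypothetical. The classes are separable by construction, so the score it prints says nothing about real EEG.

```python
import random
from statistics import mean

LABELS = ("negative", "neutral", "positive")
N_CHANNELS = 62  # electrode count reported in the article


def make_session(rng, prototypes, trials_per_label=5, noise=0.5):
    """Generate synthetic (features, label) trials for one recording session.

    Assumption: one number per electrode per trial, and 5 clips per label.
    """
    trials = []
    for label in LABELS:
        for _ in range(trials_per_label):
            features = [p + rng.gauss(0.0, noise) for p in prototypes[label]]
            trials.append((features, label))
    return trials


def fit_centroids(trials):
    """Average the feature vectors of each label into one centroid per label."""
    centroids = {}
    for label in LABELS:
        rows = [features for features, y in trials if y == label]
        centroids[label] = [mean(column) for column in zip(*rows)]
    return centroids


def predict(centroids, features):
    """Return the label whose centroid is nearest (squared Euclidean distance)."""
    def distance(label):
        return sum((a - b) ** 2 for a, b in zip(features, centroids[label]))
    return min(LABELS, key=distance)


def accuracy(centroids, trials):
    """Fraction of trials whose predicted label matches the true label."""
    hits = sum(predict(centroids, features) == y for features, y in trials)
    return hits / len(trials)


rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Synthetic "stable pattern" for each emotion, shared by both sessions.
prototypes = {
    label: [rng.gauss(0.0, 1.0) for _ in range(N_CHANNELS)] for label in LABELS
}

session_1 = make_session(rng, prototypes)  # stands in for the first recording
session_2 = make_session(rng, prototypes)  # stands in for a later recording

centroids = fit_centroids(session_1)       # learn patterns from session 1 only
cross_session_accuracy = accuracy(centroids, session_2)
print(f"cross-session accuracy: {cross_session_accuracy:.2f}")
```

The point of the design is the split: the patterns are learned from one session and scored on another, which is what a claim of stability over time has to survive.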

There is more to be done, of course. The subjects in this study were all relatively young students at a Chinese university. Wei-Long and company want to look at how emotional brain states change with age, gender, and race. That’s for the future.

For the moment, this is interesting work that could improve the study of emotions and could one day help intelligent machines better understand the emotional states of the humans they interact with.

Ref: arxiv.org/abs/1601.02197 : Identifying Stable Patterns over Time for Emotion Recognition from EEG
